Seedream4 Models

Seedream v4, a cutting-edge image generation model by ByteDance, redefines creative workflows by combining lightning-fast inference speeds with breathtaking 4K high-definition output. Beyond its raw performance, the model leverages advanced knowledge and reasoning to interpret complex prompts with precision, enabling seamless prompt-based editing and a vast spectrum of versatile artistic styles that make it the ultimate solution for professional design, content creation, and digital marketing.

Explore Leading Models

Atlas Cloud gives you access to the latest industry-leading creative models.

Key Highlights of Seedream4 Models


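Image Synthesis

Generates images from text prompts using the Seedream v3 through v4 models.

Direct Editing

Refines images through the Seedream v4/edit endpoint.

Sequential Editing

Applies step-by-step changes with the edit-sequential model.

Sequential Output

Delivers multi-step results through sequential generation.

Version Options

Offers v3, v3.1, and v4 variants to suit different needs.

Image Input

The edit models accept existing images as input and refine them with text prompts.

Ultra-Fast

Lowest Cost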

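| Modality | Description |
|---|---|
| Seedream v4 API (Text To Image) | The Seedream v4 API lets developers turn text descriptions into striking, high-fidelity visuals. Built on an advanced diffusion architecture, it generates a single high-resolution image with intricate detail and artistic precision. This makes it well suited to rapid concept art and premium digital asset production. |
| Seedream v4 Edit API (Image To Image) | This API offers fine-grained control over visual transformations, letting developers modify or reimagine existing images with text guidance. It produces a single refined output that balances the structural integrity of the original with the new creative direction. It is optimized for professional photo retouching and iterative design workflows. |
| Seedream v4 Sequential API (Text To Image) | The Seedream v4 Sequential API lets creators generate a coherent series of 1 to 14 images from a single prompt or a narrative sequence. It enforces strict style and character consistency across frames, which makes it a go-to solution for rapidly producing storyboards, character design sheets, and themed visual collections. |
| Seedream v4 Edit Sequential API (Image To Image) | Designed for advanced iterative workflows, this API processes reference images to generate 1 to 14 distinct variants or an evolving sequence. It applies progressive edits and style transfers across a batch to deliver a versatile set of assets, optimized for frame-by-frame animation keyframes and complex visual storytelling. |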

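What's New in Seedream4 Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.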

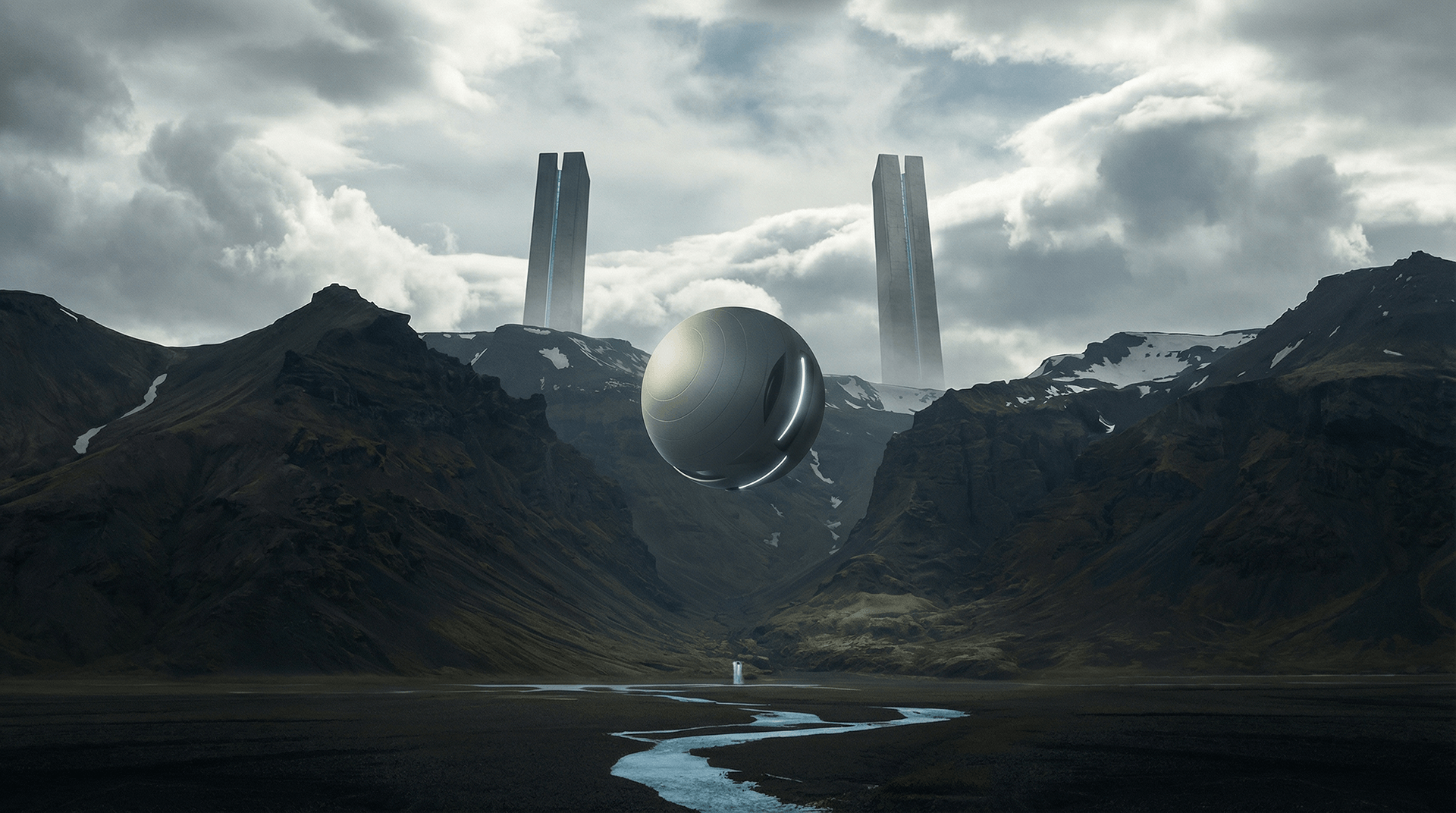

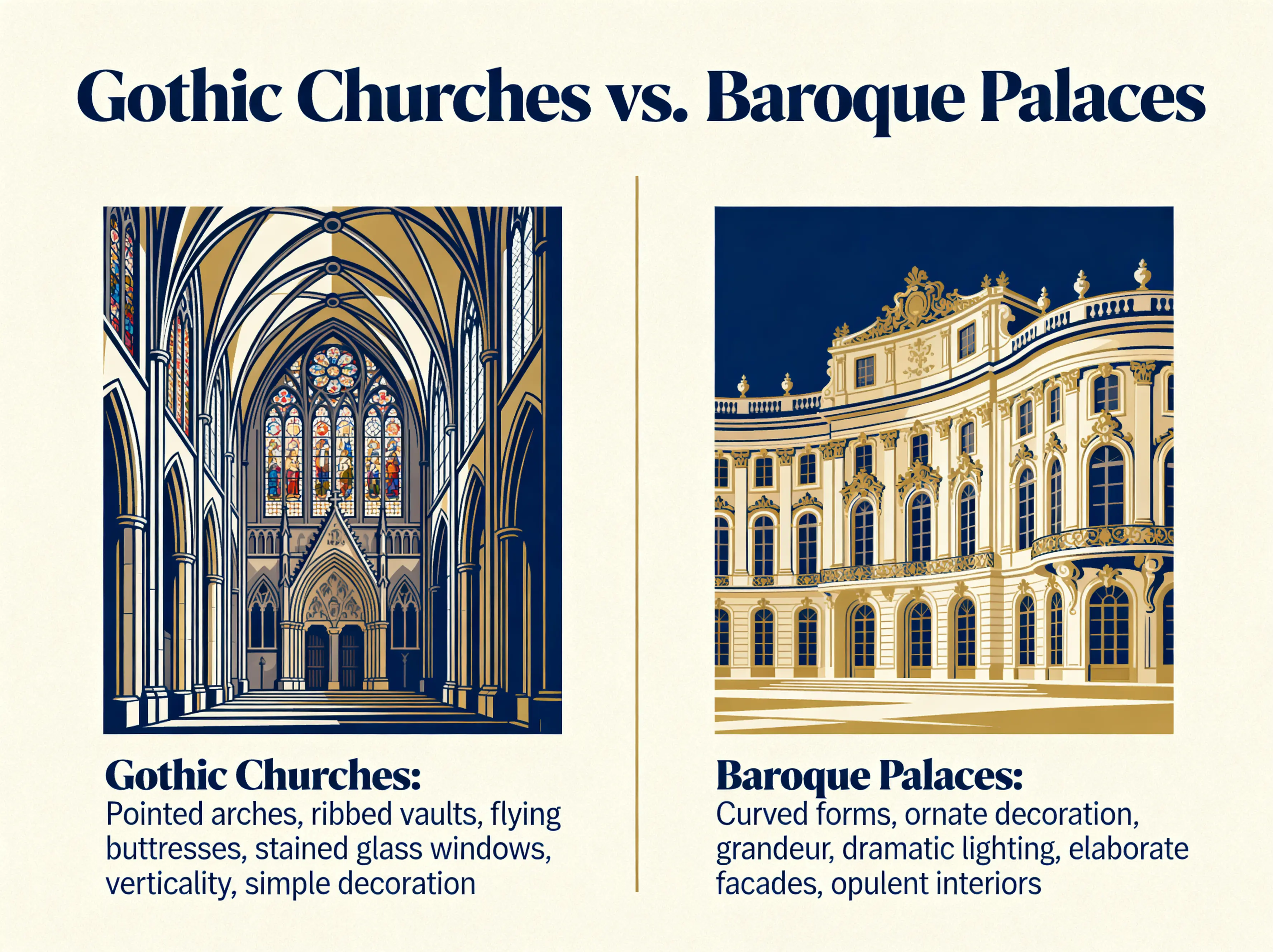

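Deep Knowledge and Logical Reasoning with the Seedream v4 API

Seedream v4 draws on a vast semantic dataset to interpret complex prompts with human-like reasoning and spatial awareness. It accounts for intricate cultural nuances and physical laws, so every generated element stays contextually accurate and logically consistent. It is the ultimate solution for visual storytelling, historical reconstruction, and conceptually complex creative briefs.

Precise Prompt-Based Editing with the Seedream v4 API

Seedream v4 gives fine-grained control over image attributes through intuitive text instructions, without disrupting the original composition. Users can precisely modify textures, lighting, or specific subjects while keeping pixel-perfect consistency across multiple iterations. It is the ultimate solution for rapid visual prototyping, professional commercial retouching, and dynamic design exploration.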

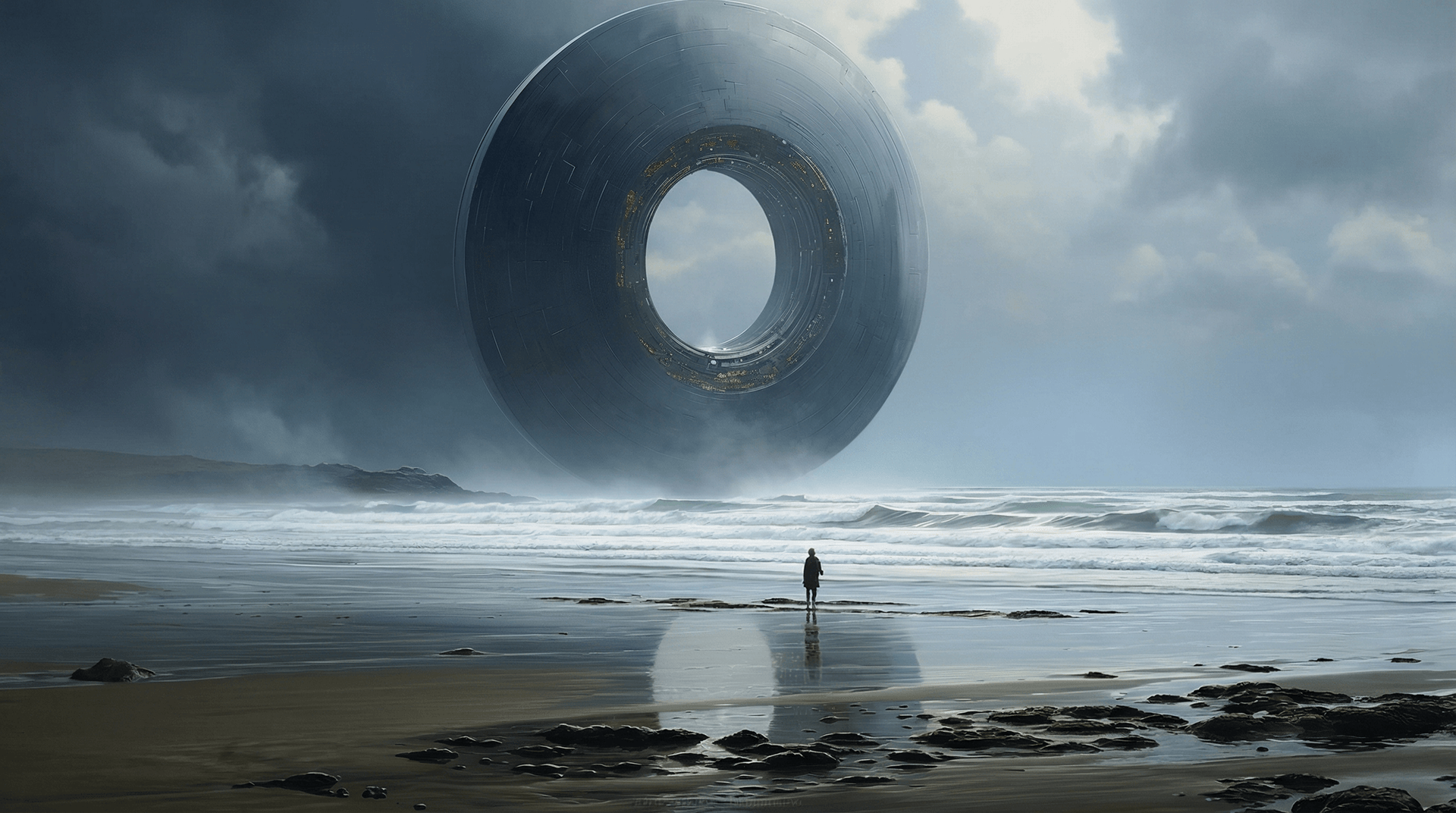

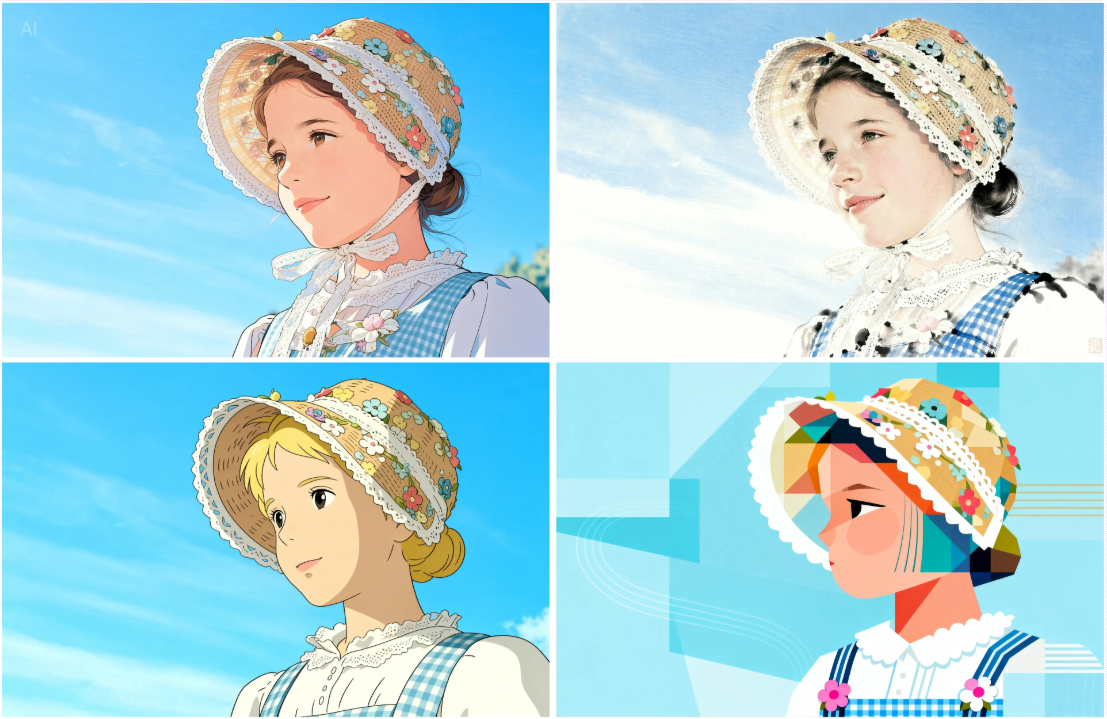

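Limitless Artistic Versatility with the Seedream v4 API

Seedream v4 offers a broad library of aesthetic styles, ranging from hyper-realistic cinematic photography to avant-garde digital illustration. Its adaptive architecture captures the essence of any artistic medium and delivers high-fidelity textures and authentic color grading for any visual concept. It is the ultimate solution for diverse brand campaigns, immersive game assets, and high-end cross-platform content production.

What You Can Do with Seedream4 Models

Explore real-world use cases and workflows you can build with this model family, from content creation and automation to production-grade applications.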

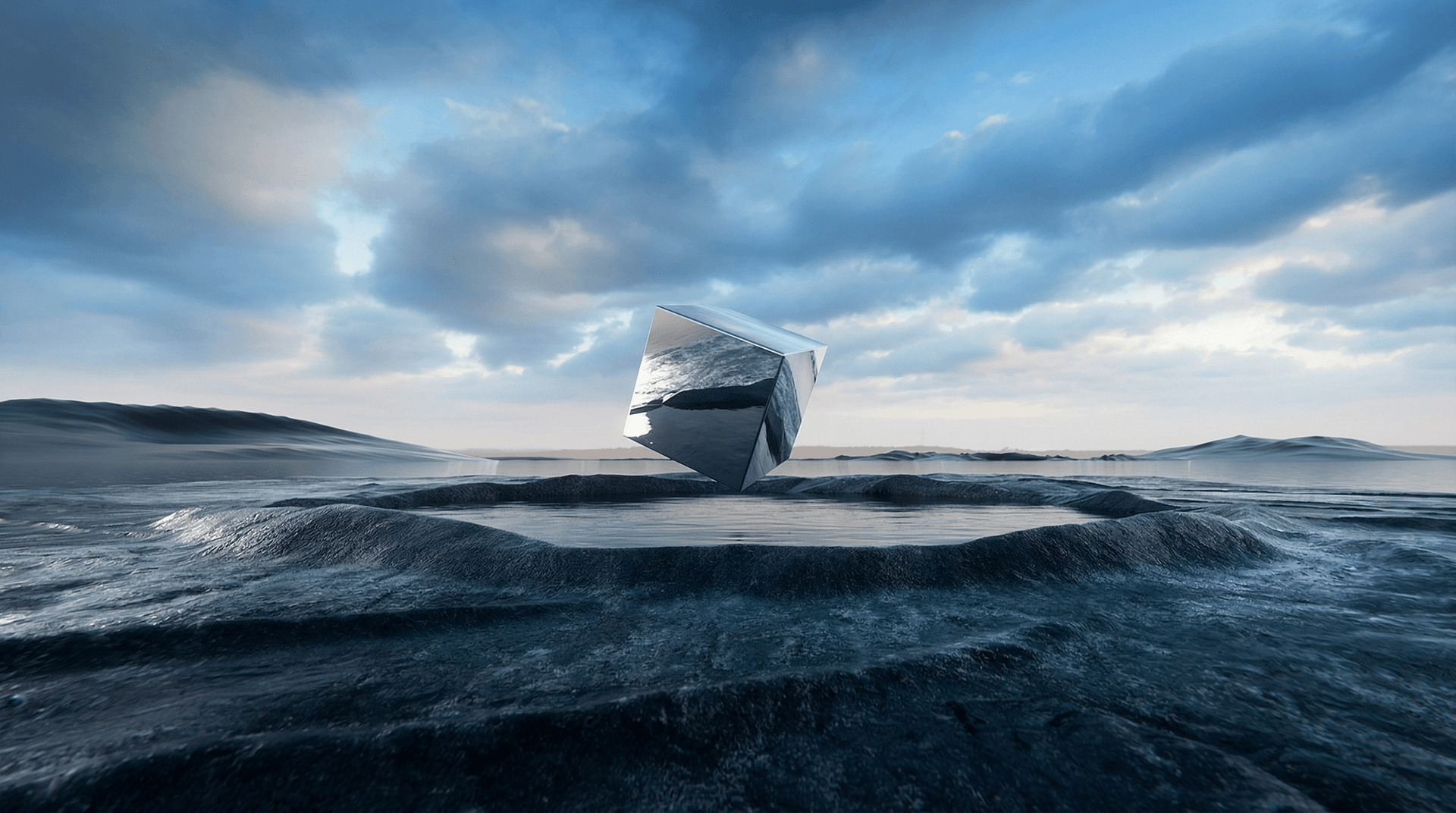

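Premium E-Commerce Imagery with the Seedream v4 API

Seedream v4 lets brands instantly generate high-quality product visuals, finely rendering complex materials such as brushed metal, grained leather, or dynamic liquid splashes. With native 4K ultra-high-definition output, the model preserves subtle lighting transitions and depth-of-field control. It is an ideal solution for luxury marketing and e-commerce product detail pages, achieving studio-grade results without a physical lighting setup.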

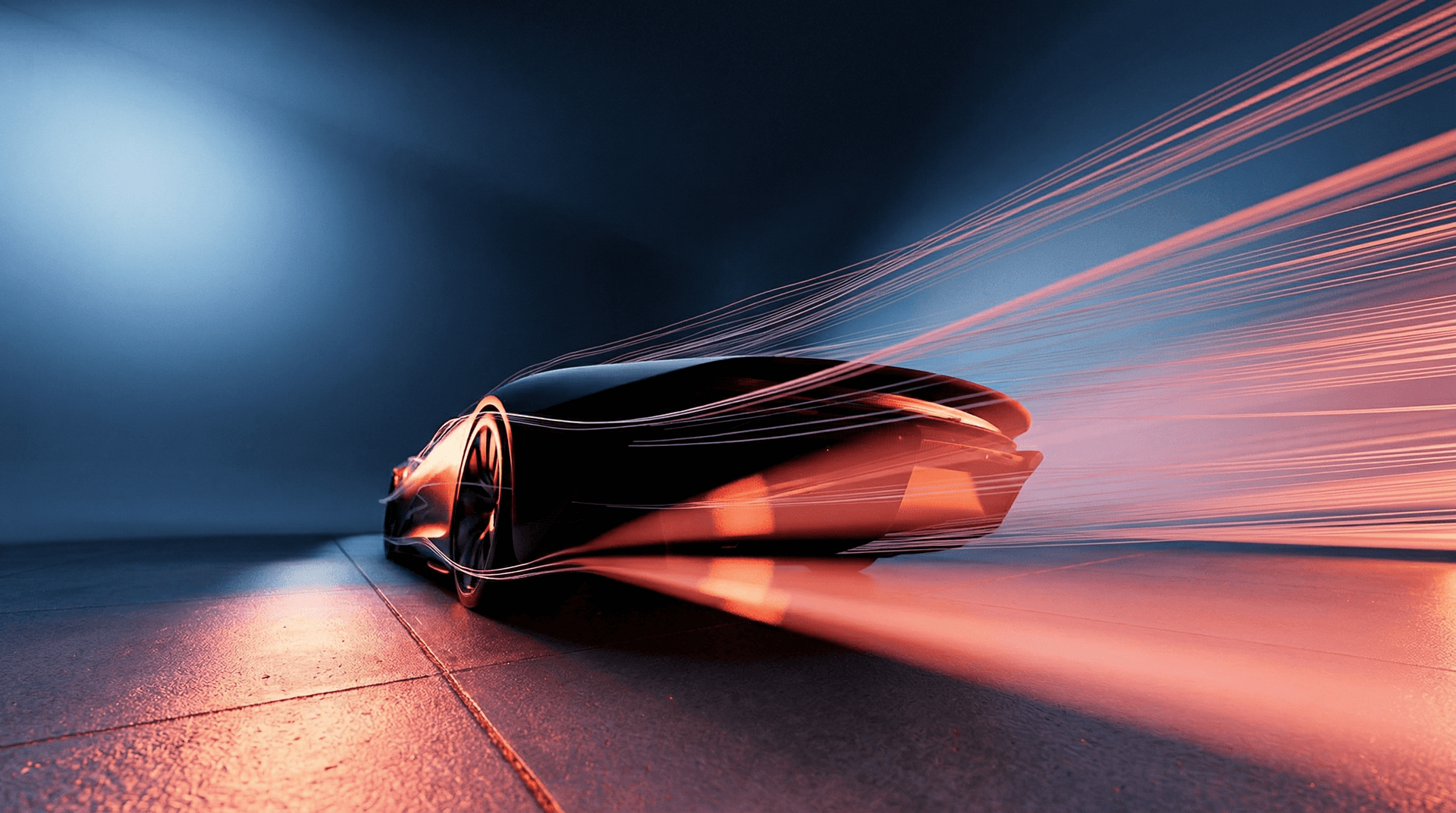

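Rapid Creative Ideation with the Seedream v4 API

For fast-paced creative agencies, Seedream v4 uses its industry-leading inference speed to turn brainstormed ideas into high-fidelity visual drafts within seconds. This accelerated pipeline significantly shortens the feedback loop from script to concept art. That makes it a strong fit for ad pitches, social media trends, and any time-sensitive campaign where turnaround speed matters as much as visual impact.

Ultra-High-Definition Large-Format Output with the Seedream v4 API

Seedream v4 produces visuals that keep striking pixel-level clarity even when enlarged for billboards, bus shelters, or physical gallery displays. From intricate typographic elements to sweeping panoramic detail, the model ensures every texture holds up to close inspection. This suits any scenario that demands uncompromising resolution, including high-end offline visual media, large posters, and interior décor.

Model Comparison

See how models from different vendors perform. Compare performance, pricing, and unique strengths to make an informed decision.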

| Model | Reference Image Limit | Output Count | Resolution | Aspect Ratio / Dimensions |
|---|---|---|---|---|
| Seedream v4 | 10 | 1~14 | 1024P~4K+ | Width[1024, 4096]px; Height[1024, 4096]px |
| Seedream 4.5 | 10 | 1~15 | 1080P~4K+ | Width[1440, 4096]px; Height[1440, 4096]px |
| Seedream 5.0 Lite | 14 | 1~15 | 2K~4K+ | 1:1 3:2 2:3 3:4 4:3 4:5 5:4 9:16 16:9 21:9 |
| Nano Banana 2 | 14 | 1 | 4K, 2K, 1K | 1:1 3:2 2:3 3:4 4:3 4:5 5:4 9:16 16:9 21:9 |
| Qwen-Image | 3 | 1~6 | 512P~2K | Width[512, 2048]px; Height[512, 2048]px |
| Wan 2.6 I2I (Image To Image) | 4 | 1 | 580P~1080P+ | 1:1 3:2 2:3 3:4 4:3 4:5 5:4 9:16 16:9 21:9 9:21 |
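You can check a request against the Seedream v4 limits in the comparison table on the client side before sending it. The limits used are at most 10 reference images, 1 to 14 outputs, and width and height each in [1024, 4096] px. The sketch below is a minimal illustration under stated assumptions. The request shape (`SeedreamV4Request` with `width`, `height`, `num_outputs` and `reference_images` fields) and the `validate` helper are hypothetical. They are not taken from Atlas Cloud's actual API or SDK.

```python
from dataclasses import dataclass

# Limits transcribed from the Seedream v4 row of the comparison table.
# Field names and this helper are hypothetical, not a published Atlas Cloud API.
MAX_REFERENCE_IMAGES = 10
MIN_OUTPUTS, MAX_OUTPUTS = 1, 14
MIN_SIDE_PX, MAX_SIDE_PX = 1024, 4096


@dataclass
class SeedreamV4Request:
    """Hypothetical parameters for a Seedream v4 generation request."""
    prompt: str
    width: int = 2048
    height: int = 2048
    num_outputs: int = 1
    reference_images: tuple[str, ...] = ()


def validate(req: SeedreamV4Request) -> list[str]:
    """Return human-readable problems; an empty list means the request fits the table's limits."""
    problems = []
    if not req.prompt.strip():
        problems.append("prompt must not be empty")
    # Width and height are each bounded independently, as in the table.
    for name, value in (("width", req.width), ("height", req.height)):
        if not MIN_SIDE_PX <= value <= MAX_SIDE_PX:
            problems.append(f"{name}={value} outside [{MIN_SIDE_PX}, {MAX_SIDE_PX}] px")
    if not MIN_OUTPUTS <= req.num_outputs <= MAX_OUTPUTS:
        problems.append(f"num_outputs={req.num_outputs} outside [{MIN_OUTPUTS}, {MAX_OUTPUTS}]")
    if len(req.reference_images) > MAX_REFERENCE_IMAGES:
        problems.append(
            f"{len(req.reference_images)} reference images exceeds limit of {MAX_REFERENCE_IMAGES}"
        )
    return problems


if __name__ == "__main__":
    ok = SeedreamV4Request(prompt="a brushed-metal watch on black velvet", width=4096, height=4096)
    print(validate(ok))  # → []
    bad = SeedreamV4Request(prompt="storyboard", width=800, num_outputs=15)
    print(validate(bad))  # reports the width and the output count
```

Checking locally avoids spending a round trip on a request the service would reject. The real service may enforce further constraints, such as total pixel count or aspect ratio, that this sketch does not model.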

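How to Use Seedream4 Models on Atlas Cloud

Get started in minutes. Follow these simple steps to integrate and deploy the model through the Atlas Cloud platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.

Why Use Seedream4 Models on Atlas Cloud

Combining the advanced Seedream4 Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low latency:

GPU-optimized inference for real-time responses.

Unified API:

Integrate once to access Seedream4 Models, GPT, Gemini, and DeepSeek.

Transparent pricing:

Per-token billing with serverless support.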

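Enterprise and Scale

Developer experience:

SDKs, analytics, fine-tuning tools, and templates, all included.

Reliability:

99.99% availability, RBAC access control, and compliance logging.

Security and compliance:

SOC 2 Type II certification, HIPAA compliance, and US data sovereignty.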
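To put the 99.99% availability figure in perspective, it allows roughly 52.6 minutes of downtime per year. This worked figure assumes a 365-day year and that availability is measured over the whole year. The page does not state the actual measurement window.

```latex
(1 - 0.9999) \times 365 \times 24 \times 60\ \text{min}
  = 0.0001 \times 525\,600\ \text{min}
  \approx 52.6\ \text{min per year}
```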

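Frequently Asked Questions About Seedream4 Models

Seedream v4 supports output up to 4K ultra-high definition (4096 × 4096), delivering striking detail for large-format printing and high-precision design work.

Seedream v4 offers significantly faster inference and stronger logical reasoning, interpreting the spatial relationships in complex prompts more precisely.

Yes. Seedream v4 offers powerful prompt-based editing, letting users adjust textures, lighting, or specific subjects with simple text instructions.

Explore More Model Families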

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text prompts or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu. It is built on a single-stream Diffusion Transformer (DiT) with 8B parameters and is paired with a lightweight Prompt Enhancer, which rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone. Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery, with particular strength in text rendering and structured layout generation. ERNIE-Image is designed for strong visual quality and also for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics. This makes it well suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation. Alibaba Group confirmed ownership of HappyHorse, which was developed under its Alibaba Token Hub (ATH) business unit. The model leads benchmarks, outperforming ByteDance's Seedance 2.0 and others. It was led by Zhang Di, the former VP of Kuaishou who architected Kling AI. The 15-billion-parameter model generates 1080p video with synchronized audio in a single pass, using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances lightning-fast rendering with exceptional visual quality. With an improved price-performance ratio, it delivers fine micro-detail, accurate native text rendering, and faithful reconstruction of complex physical structures. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models—Kling 3.0 (Upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, Upgraded from Kling O1)—both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.