Seedream Image Models

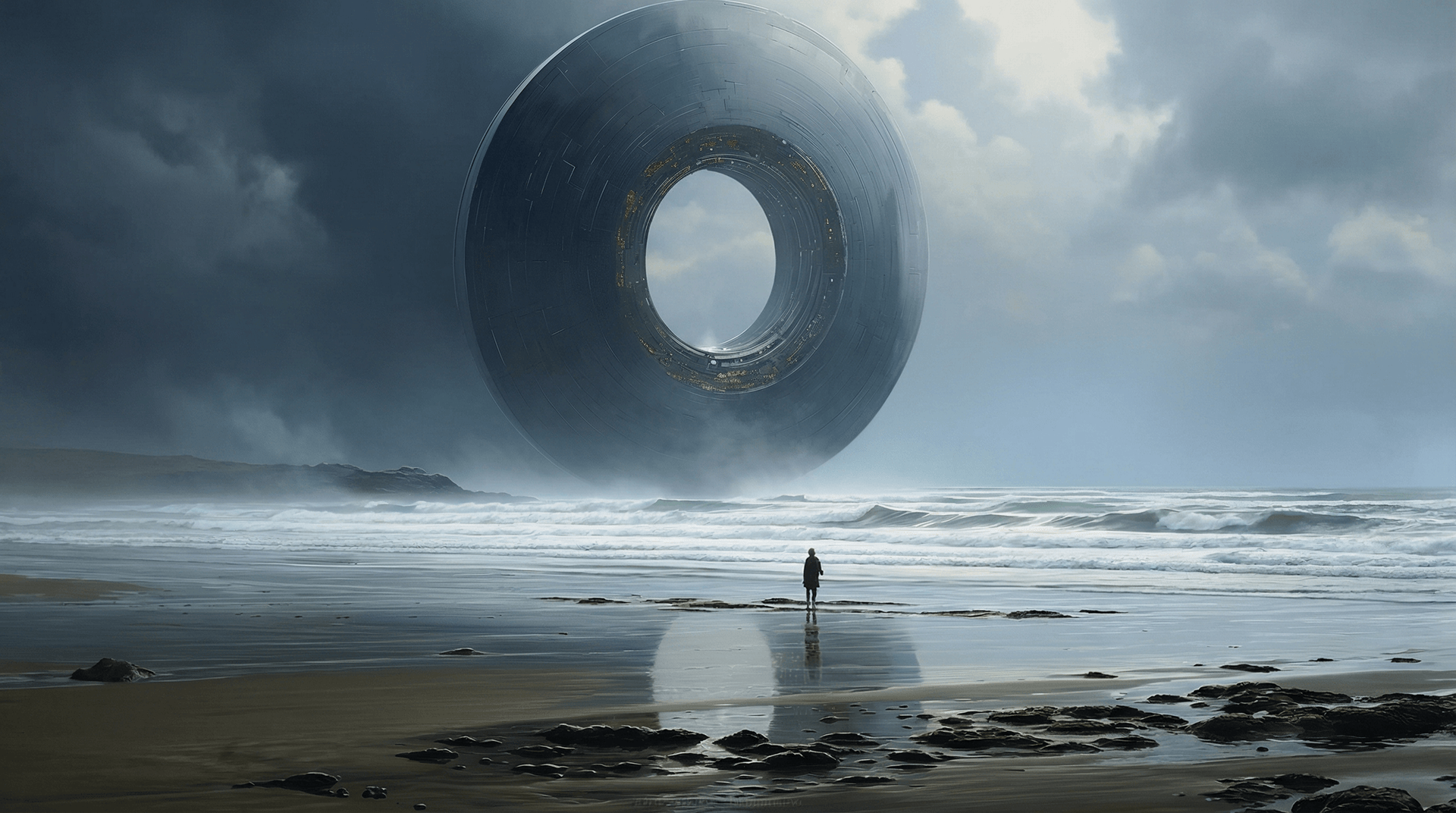

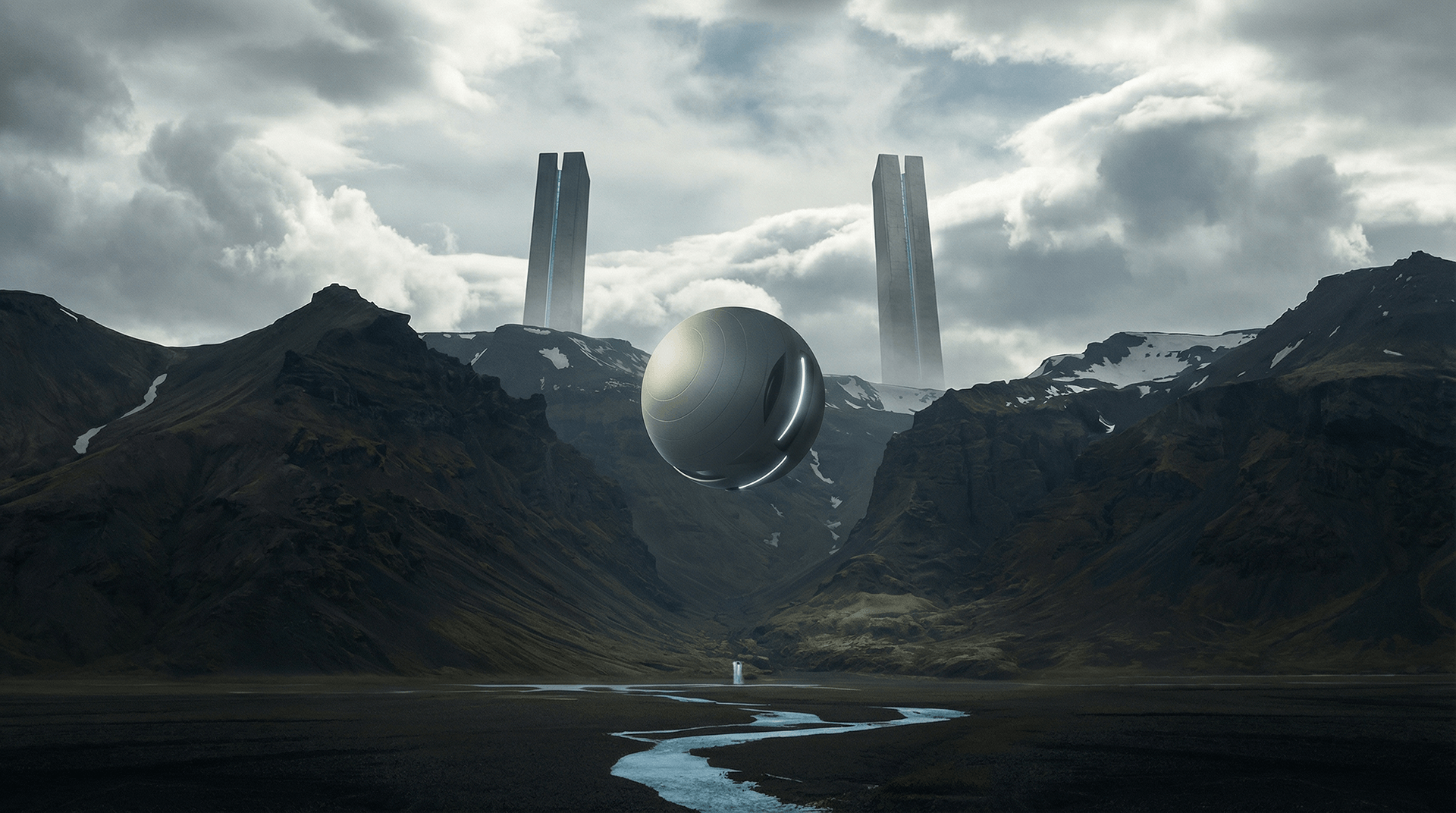

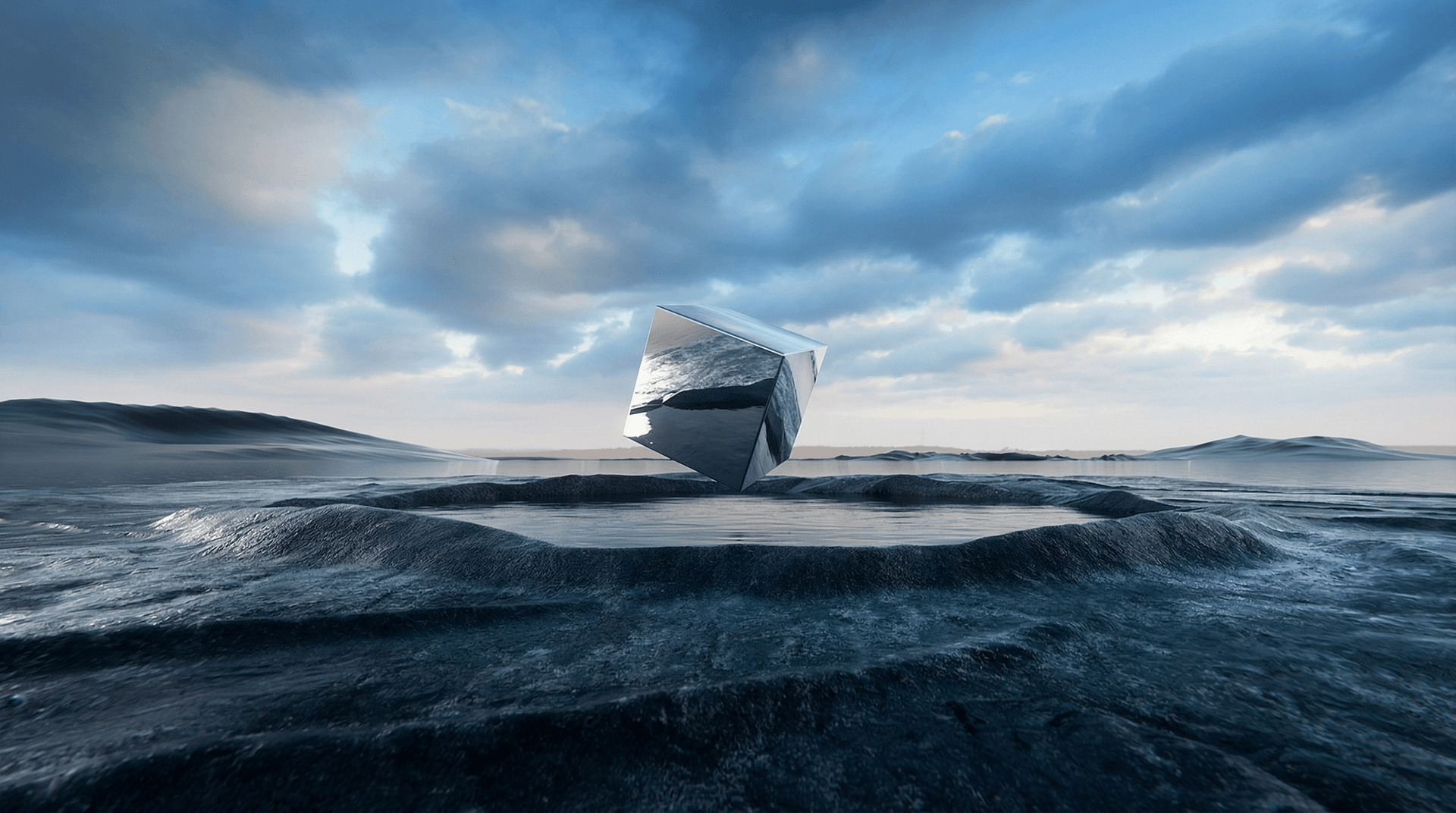

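Seedream is an advanced AI image generation model developed by ByteDance, designed to turn text and reference images into high-quality, professional visuals with fast performance and rich creative control. It unifies text-to-image generation and intuitive image editing in a single workflow, supporting multi-image references and stylistically consistent output. Seedream produces high-resolution images (up to 4K) at high speed and excels at fine-detail rendering, making it well suited to designers, marketers, and creators.

Explore Featured Models

Atlas Cloud offers the latest industry-leading creative models.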

Seedream v4.5

ByteDance's latest image generation model, with all-round improvements. It excels at typography, poster design, and brand visual creation, with superior prompt adherence.

Seedream v4.5 Edit

ByteDance's advanced image editing model, which preserves facial features, lighting, and color tones while enabling professional-quality modifications.

Seedream v4.5 Sequential

ByteDance's latest image generation model with batch generation support. Generates up to 15 images in a single request.

Seedream v4.5 Edit Sequential

ByteDance's advanced image editing model with batch generation support. Edits multiple images while preserving facial features and details.

Seedream v4

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Sequential

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Edit

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Edit Sequential

Open and Advanced Large-Scale Image Generative Models.

Seedream v3.1

Open and Advanced Large-Scale Image Generative Models.

Seedream V3

ByteDance Seedream V3 is a state-of-the-art text-to-image model that excels in generating high-quality, photorealistic images with exceptional detail and artistic flair.

Key Features of Seedream Image Models

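Image Synthesis

Generates images from text prompts using the Seedream v3–v4 models.

Direct Editing

Refines images through the Seedream v4/edit endpoint.

Sequential Editing

Applies step-by-step changes with the edit-sequential model.

Sequential Output

Produces multi-step results through sequential generation.

Version Options

Offers v3, v3.1, and v4 variants to meet different requirements.

Image Input

Editing models take an existing image as input and modify it according to a prompt.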
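
As a rough illustration of the image-input and sequential features above, the sketch below assembles a request body for an edit model. It is a minimal sketch under stated assumptions, not the Atlas Cloud API: the function name `build_edit_request`, the field names (`model`, `prompt`, `images`, `num_outputs`), and the model identifier strings are all hypothetical. The 15-image cap is taken from the Seedream v4.5 Sequential description; whether other sequential variants share it is not stated on this page.

```python
import json
from typing import Dict, List

# Cap quoted for Seedream v4.5 Sequential; assumed here for illustration only.
MAX_SEQUENTIAL_IMAGES = 15


def build_edit_request(model: str, prompt: str, image_urls: List[str],
                       num_outputs: int = 1) -> Dict[str, object]:
    """Build a hypothetical JSON-serializable payload for an edit request.

    All field names are placeholders chosen for this example.
    """
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if not image_urls:
        raise ValueError("editing models need at least one input image")
    if not 1 <= num_outputs <= MAX_SEQUENTIAL_IMAGES:
        raise ValueError(
            f"num_outputs must be between 1 and {MAX_SEQUENTIAL_IMAGES}"
        )
    # Assumption: only models whose name contains "sequential" return batches.
    if num_outputs > 1 and "sequential" not in model:
        raise ValueError("more than one output requires a sequential model")
    return {
        "model": model,
        "prompt": prompt,
        "images": list(image_urls),
        "num_outputs": num_outputs,
    }


if __name__ == "__main__":
    payload = build_edit_request(
        model="seedream-v4-edit-sequential",  # hypothetical identifier
        prompt="Replace the background with a sunset skyline",
        image_urls=["https://example.com/product.png"],
        num_outputs=4,
    )
    print(json.dumps(payload, indent=2))
```

Validating the input image list and the output count before sending keeps obviously invalid requests from reaching the service; the actual request format should be taken from the provider's API reference.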

What You Can Do with Seedream Image Models

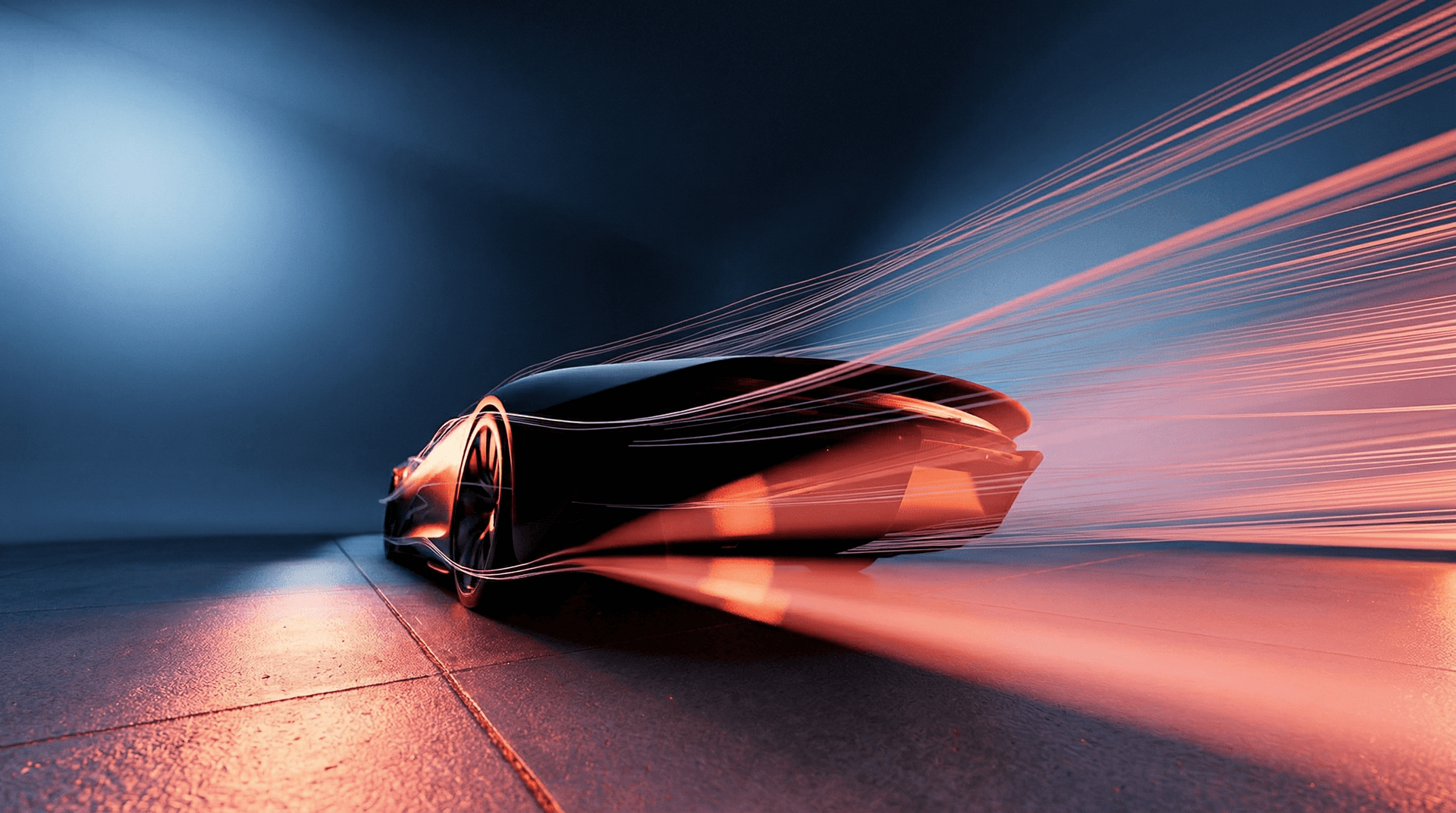

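Generate high-resolution marketing visuals, product images, and creative assets.

Edit and iterate on designs with precise, frame-level control.

Create artistic concept art or photorealistic renderings from text prompts.

Build scalable image workflows for advertising, fashion, and design.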

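Why Use Seedream Image Models on Atlas Cloud

Combining the advanced Seedream Image Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low latency:

GPU-optimized inference for real-time use.

Unified API:

Run Seedream Image Models, GPT, Gemini, and DeepSeek through a single integration.

Transparent pricing:

Predictable per-token billing, including serverless options.

Enterprise and Scale

Developer experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance logging.

Security and compliance:

SOC 2 Type II, HIPAA compliance, and US-based data sovereignty.

Explore More Families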

Seedance 2.0 Video Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

Vidu Video Models

Vidu (by ShengShu Technology) is a foundational video model built on the proprietary U-ViT architecture, combining the strengths of Diffusion and Transformer models. It features superior semantic understanding and generation capabilities, producing coherent, fluid visuals that adhere to physical laws without the need for interpolation. With exceptional spatiotemporal consistency and a deep understanding of diverse cultural elements, Vidu empowers professional filmmakers and creators with a stable, efficient, and imaginative tool for video production.

MiniMax LLM Models

MiniMax is a large language model developed by MiniMax AI, focused on efficient reasoning, long-context understanding, and scalable text generation. It is designed for complex tasks such as dialogue systems, document analysis, content creation, and AI agents. With an emphasis on high performance at lower computational cost, MiniMax is well suited for enterprise applications and developer use cases where stability, efficiency, and cost control are important.

GLM LLM Models

GLM (General Language Model) is a large language model developed by ZAI (Zhipu AI) for text understanding, generation, and reasoning. It supports both Chinese and English and performs well in dialogue, content creation, translation, and code assistance. GLM is widely used in chatbots, enterprise AI systems, and developer applications due to its stable performance and versatility.

Moonshot LLM Models

Kimi is a large language model developed by Moonshot AI, designed for reasoning, coding, and long-context understanding. It performs well in complex tasks such as code generation, analysis, and intelligent assistants. With strong performance and efficient architecture, Kimi is suitable for enterprise AI applications and developer use cases. Its balance of capability and cost makes it an increasingly popular choice in the LLM ecosystem.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.

Van Video Models

Van Model is a flagship video model family, perfectly retaining the cinematic visuals and complex dynamics of 3D VAE and Flow Matching. By leveraging proprietary compute distillation, it breaks the "quality equals cost" barrier to deliver extreme inference speeds and ultra-low costs. This makes Van the premier engine for enterprises and developers seeking high-frequency, scalable video production on a budget.

Kling 3.0 Video Models

Kling AI Video 3.0 (by Kuaishou) is a groundbreaking model designed to bridge the worlds of sound and visuals through its unique Single-pass architecture. By simultaneously generating visuals, natural voiceovers, sound effects, and ambient atmosphere, it eliminates the disjointed workflows of traditional tools. This true audio-visual integration simplifies complex post-production, providing creators with an immersive storytelling solution that significantly boosts both creative depth and output efficiency.

Veo3.1 Video Models

Veo 3.1 (by Google) is a flagship generative video model that sets a new standard for cinematic AI by deeply integrating semantic capabilities to deliver cinematic visuals, synchronized audio, and complex storytelling in a single workflow. Distinguishing itself through superior adherence to cinematic terminology and physics-based consistency, it offers professional filmmakers an unparalleled tool for transforming scripts into coherent, high-fidelity productions with precise directorial control.

Sora-2 Video Models

The Sora-2 family from OpenAI is a next-generation video and audio generation model family, enabling both text-to-video and image-to-video outputs with synchronized dialogue, sound effects, improved physical realism, and fine-grained control.

Nano Banana Image Models

Nano Banana is a fast, lightweight image generation model for playful, vibrant visuals. Optimized for speed and accessibility, it creates high-quality images with smooth shapes, bold colors, and clear compositions—perfect for mascots, stickers, icons, social posts, and fun branding.

Wan2.6 Video Models

Wan 2.6 is a next-generation AI video generation model from Alibaba’s Tongyi Lab, designed for professional-quality, multimodal video creation. It combines advanced narrative understanding, multi-shot storytelling, and native audio–visual synchronization to produce smooth 1080p videos up to 15 s long from text and reference inputs. Wan 2.6 also supports character consistency and role-guided generation, enabling creators to turn scripts into cohesive scenes with seamless motion and lip syncing. Its efficiency and rich creative control make it ideal for short films, advertising, social media content, and automated video workflows.