Kling 3.0 Video Models

Kling AI Video 3.0 (by Kuaishou) is a groundbreaking model designed to bridge the worlds of sound and visuals through its unique Single-pass architecture. By simultaneously generating visuals, natural voiceovers, sound effects, and ambient atmosphere, it eliminates the disjointed workflows of traditional tools. This true audio-visual integration simplifies complex post-production, providing creators with an immersive storytelling solution that significantly boosts both creative depth and production efficiency.

Explore Leading Models

Atlas Cloud brings you the industry's latest leading creative models.

Kling v2.6 Pro Avatar

Kling V2 AI Avatar Pro generates high-quality AI avatar videos with clean detail, stable motion, and strong identity consistency—ideal for profiles, intros, and social content.

Kling v2.6 Std Avatar

Kling AI Avatar generates high-quality AI avatar videos for profiles, intros, and social content, delivering clean detail and cinematic motion with reliable prompt adherence.

Kling v2.6 Pro Motion Control

Kling 2.6 Pro Motion Control turns reference motion clips (dance, action, gesture) into smooth, realistic animations. Upload a character image (or source video) and a motion video; the model transfers the movement while preserving identity and temporal consistency.

Kling v2.6 Std Motion Control

Kling 2.6 Standard Motion Control transfers motion from reference videos to animate still images. Upload a character image and a motion clip (dance, action, gesture), and the model extracts the movement to generate smooth, realistic video.

Kling v2.6 Pro Text-to-Video

Latest text-to-video model from Kuaishou with sound generation, flexible aspect ratios, and cinematic quality.

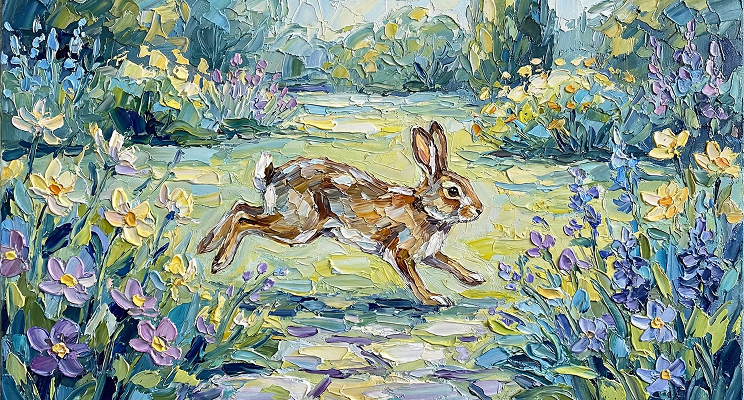

Kling v2.6 Pro Image-to-Video

Latest image-to-video model from Kuaishou with sound generation, enhanced dynamics, and cinematic quality.

Kling Video O1 Image-to-video

Kling Omni Video O1 Image-to-Video transforms static images into dynamic cinematic videos using MVL (Multi-modal Visual Language) technology. It maintains subject consistency while adding natural motion, physics simulation, and seamless scene dynamics. Ready-to-use REST API, best performance, no cold starts, affordable pricing.

Kling Video O1 Reference-to-video

Kling Omni Video O1 Reference-to-Video generates creative videos using character, prop, or scene references from multiple viewpoints. It extracts subject features and creates new video content while maintaining identity consistency across frames. Ready-to-use REST API, best performance, no cold starts, affordable pricing.

Kling Video O1 Text-to-video

Kling Omni Video O1 is Kuaishou's first unified multi-modal video model with MVL (Multi-modal Visual Language) technology. Text-to-Video mode generates cinematic videos from text prompts with subject consistency, natural physics simulation, and precise semantic understanding. Ready-to-use REST API, best performance, no cold starts, affordable pricing.

What Sets Kling 3.0 Video Models Apart

Advanced Prompt Understanding

Accurately interprets complex text, actions, and camera cues to produce coherent, story-driven content.

Smooth, Realistic Motion

Improved spatiotemporal modeling delivers natural character movement and cinematic smoothness.

4K-Level Visual Quality

Produces detailed clips at 1080p and early-stage 4K resolution with stable lighting, texture, and depth.

Dynamic Scene Editing

Add, replace, or remove objects and subjects using simple text or image inputs.

Precise Shot Control

Adjust camera angles, timing, and transitions with frame-level precision.

What You Can Do with Kling 3.0 Video Models

Create realistic videos from simple text prompts.

Turn photos into expressive video clips with continuous motion.

Achieve scene-level coherence that is ideal for storytelling, advertising, and visual effects.

Produce cinematic-quality content in 16:9, 9:16, or square formats for social media or professional production.

Switch quickly between Standard, Pro, and Master modes to balance speed and quality.

Why Use Kling 3.0 Video Models on Atlas Cloud

Combining the advanced Kling 3.0 Video Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low latency:

GPU-optimized inference for real-time reasoning.

Unified API:

Run Kling 3.0 Video Models, GPT, Gemini, and DeepSeek with a single integration.

Transparent pricing:

Predictable per-token billing with serverless options.

Enterprise and Scale

Developer experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance logging.

Security and compliance:

SOC 2 Type II, HIPAA compliance, and US data sovereignty.

Explore More Families

Van Video Models

Van Model is a flagship video model family, perfectly retaining the cinematic visuals and complex dynamics of 3D VAE and Flow Matching. By leveraging proprietary compute distillation, it breaks the "quality equals cost" barrier to deliver extreme inference speeds and ultra-low costs. This makes Van the premier engine for enterprises and developers seeking high-frequency, scalable video production on a budget.

Veo3.1 Video Models

Veo 3.1 (by Google) is a flagship generative video model that sets a new standard for cinematic AI by deeply integrating semantic capabilities to deliver cinematic visuals, synchronized audio, and complex storytelling in a single workflow. Distinguishing itself through superior adherence to cinematic terminology and physics-based consistency, it offers professional filmmakers an unparalleled tool for transforming scripts into coherent, high-fidelity productions with precise directorial control.

Kling 3.0 Video Models

Kling AI Video 3.0 (by Kuaishou) is a groundbreaking model designed to bridge the worlds of sound and visuals through its unique Single-pass architecture. By simultaneously generating visuals, natural voiceovers, sound effects, and ambient atmosphere, it eliminates the disjointed workflows of traditional tools. This true audio-visual integration simplifies complex post-production, providing creators with an immersive storytelling solution that significantly boosts both creative depth and output efficiency.

Kling O3 Video Models

Kling AI Video O3 (by Kuaishou) is a unified multimodal video model designed to unlock endless creative possibilities through its advanced MVL architecture. By integrating videos, images, and text descriptions, it offers a more intuitive and efficient workflow than traditional tools, enabling creators to transform complex intentions into high-quality cinematic content with ease.

MiniMax LLM Models

MiniMax is a large language model developed by MiniMax AI, focused on efficient reasoning, long-context understanding, and scalable text generation. It is designed for complex tasks such as dialogue systems, document analysis, content creation, and AI agents. With an emphasis on high performance at lower computational cost, MiniMax is well suited for enterprise applications and developer use cases where stability, efficiency, and cost control are important.

GLM LLM Models

GLM (General Language Model) is a large language model developed by ZAI (Zhipu AI) for text understanding, generation, and reasoning. It supports both Chinese and English and performs well in dialogue, content creation, translation, and code assistance. GLM is widely used in chatbots, enterprise AI systems, and developer applications due to its stable performance and versatility.

Seedance 1.5 Video Models

Seedance 1.5 (by ByteDance) is an advanced AI video generation model designed for high-quality, cinematic video creation with synchronized audio. It supports text-to-video and image-to-video generation with smooth motion, cohesive storytelling, and reliable visual consistency. Unlike traditional tools that add sound later, Seedance 1.5 can produce videos with natural audio-visual alignment, making it ideal for creators, marketers, and social media content workflows. Its balanced performance and ease of use help lower production cost and speed up content output.

Moonshot LLM Models

Kimi is a large language model developed by Moonshot AI, designed for reasoning, coding, and long-context understanding. It performs well in complex tasks such as code generation, analysis, and intelligent assistants. With strong performance and efficient architecture, Kimi is suitable for enterprise AI applications and developer use cases. Its balance of capability and cost makes it an increasingly popular choice in the LLM ecosystem.

Wan2.6 Video Models

Wan 2.6 is a next-generation AI video generation model from Alibaba’s Tongyi Lab, designed for professional-quality, multimodal video creation. It combines advanced narrative understanding, multi-shot storytelling, and native audio–visual synchronization to produce smooth 1080p videos up to 15 s long from text and reference inputs. Wan 2.6 also supports character consistency and role-guided generation, enabling creators to turn scripts into cohesive scenes with seamless motion and lip syncing. Its efficiency and rich creative control make it ideal for short films, advertising, social media content, and automated video workflows.

Flux.2 Image Models

The Flux.2 Series is a comprehensive family of AI image generation models. Across the lineup, Flux supports text-to-image, image-to-image, reconstruction, contextual reasoning, and high-speed creative workflows.

Nano Banana Image Models

Nano Banana is a fast, lightweight image generation model for playful, vibrant visuals. Optimized for speed and accessibility, it creates high-quality images with smooth shapes, bold colors, and clear compositions—perfect for mascots, stickers, icons, social posts, and fun branding.

Image and Video Tools

Open, advanced large-scale image generative models that power high-fidelity creation and editing with modular APIs, reproducible training, built-in safety guardrails, and elastic, production-grade inference at scale.