Seedream Image Models

Seedream is an advanced AI image generation model developed by ByteDance. It turns text and reference images into high-quality, professional visual content quickly, with extensive creative control. It combines text-to-image generation and intuitive image editing in a single workflow, and it supports multiple reference images and consistent styling. Seedream generates high-resolution images (up to 4K) very quickly and renders fine detail exceptionally well, which makes it an ideal tool for designers, marketers, and content creators.

Explore Leading Models

Atlas Cloud gives you the latest industry-leading creative models.

Seedream v4.5

ByteDance's latest image generation model, with all-round improvements. Excels at typography, poster design, and brand visual creation, with superior prompt adherence.

Seedream v4.5 Edit

ByteDance's advanced image editing model that preserves facial features, lighting, and color tones while enabling professional-quality modifications.

Seedream v4.5 Sequential

ByteDance's latest image generation model with batch generation support. Generates up to 15 images in a single request.

Seedream v4.5 Edit Sequential

ByteDance's advanced image editing model with batch generation support. Edits multiple images while preserving facial features and details.

Seedream v4

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Sequential

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Edit

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Edit Sequential

Open and Advanced Large-Scale Image Generative Models.

Seedream v3.1

Open and Advanced Large-Scale Image Generative Models.

Seedream V3

ByteDance Seedream V3 is a state-of-the-art text-to-image model that excels in generating high-quality, photorealistic images with exceptional detail and artistic flair.

What Sets Seedream Image Models Apart


Image Synthesis

Generates images from text prompts using Seedream v3–v4 models.

Direct Edits

Refines images through the Seedream v4/edit endpoint.

Sequential Edits

Applies step-by-step changes with the edit-sequential model.

Sequential Output

Produces multi-stage results through sequential generation.

Version Options

Offers v3, v3.1, and v4 variants to suit different needs.

Image Input

Edit models can take an existing image as input and refine it with prompts.

What You Can Do with Seedream Image Models


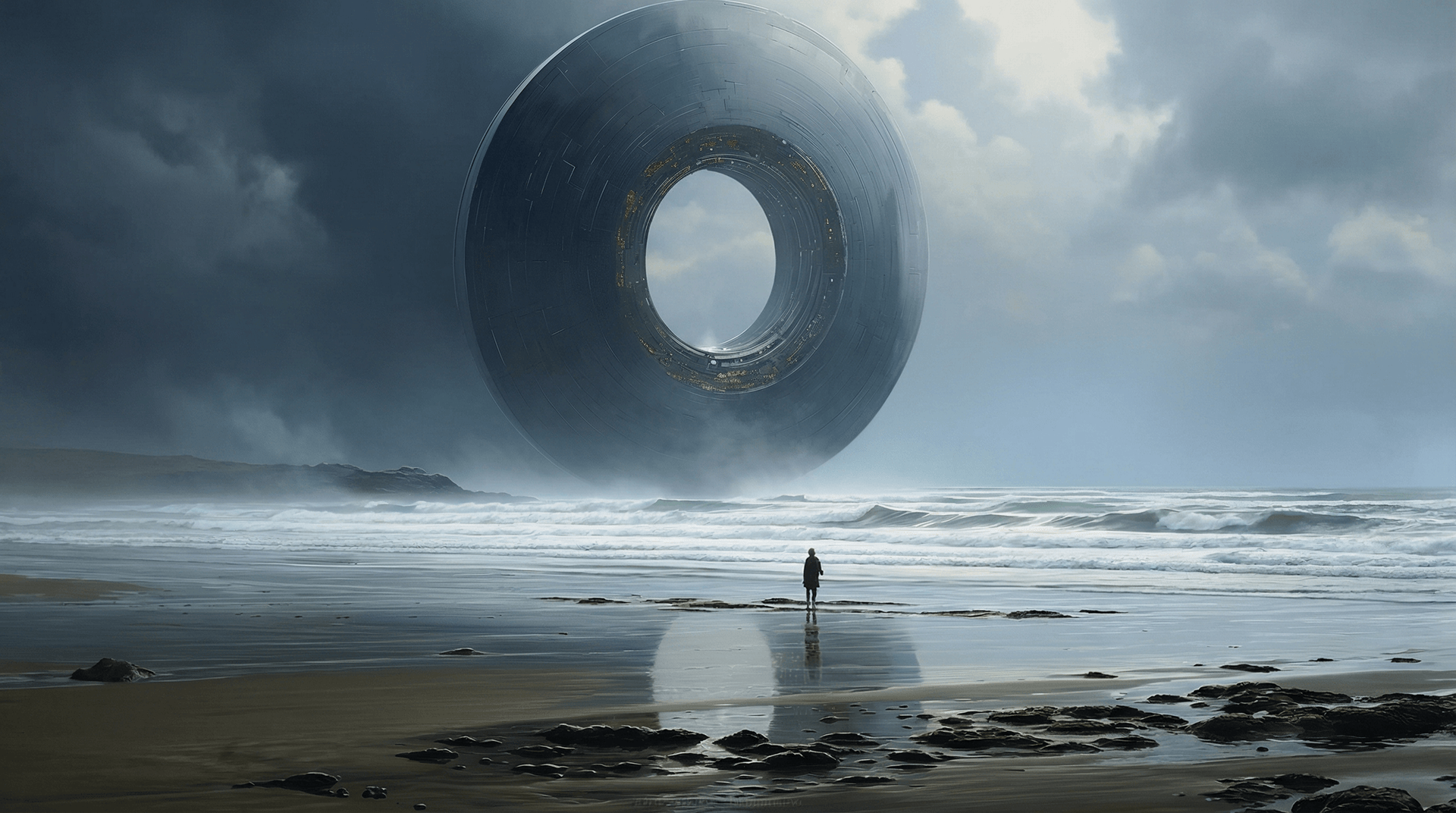

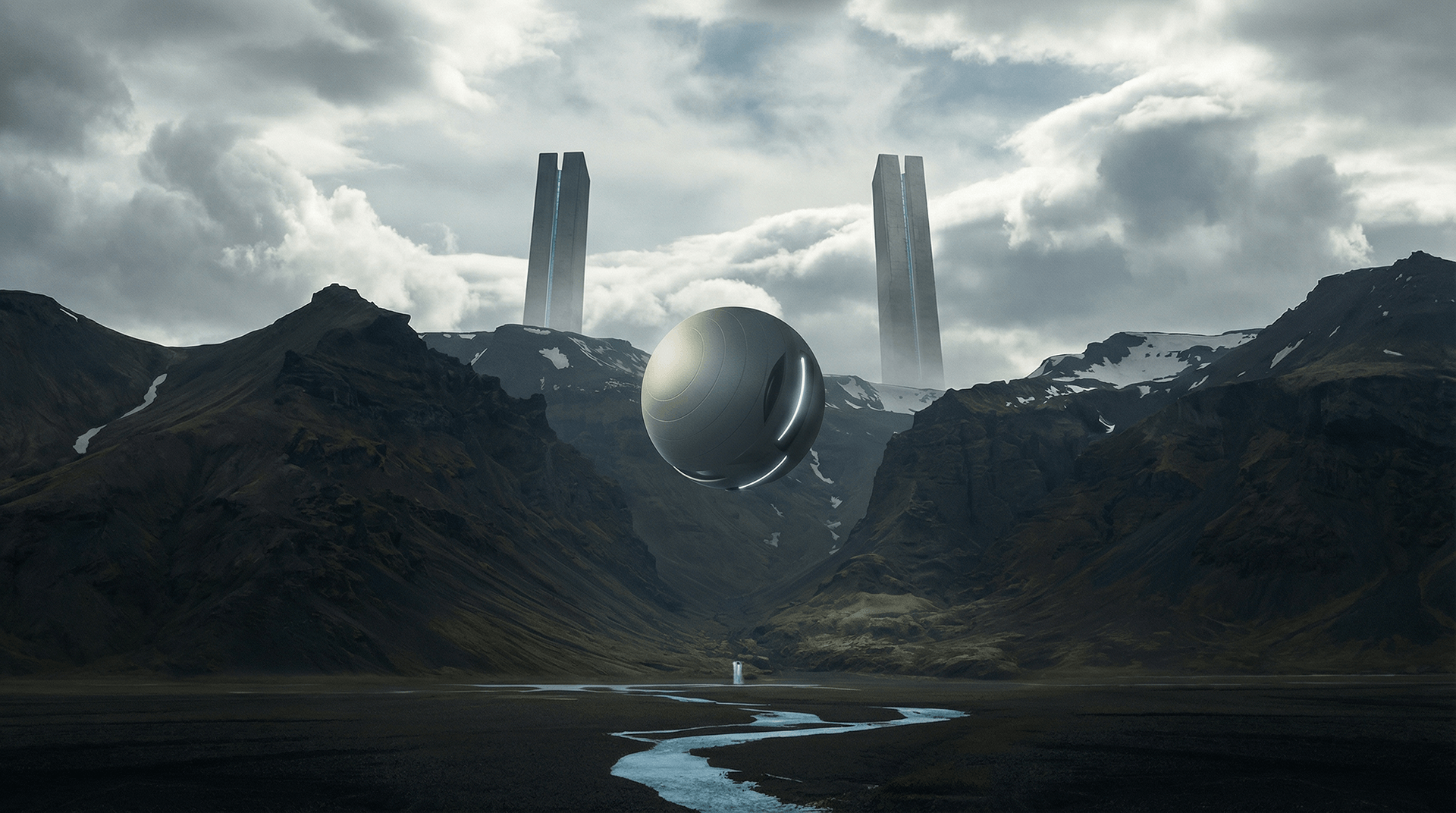

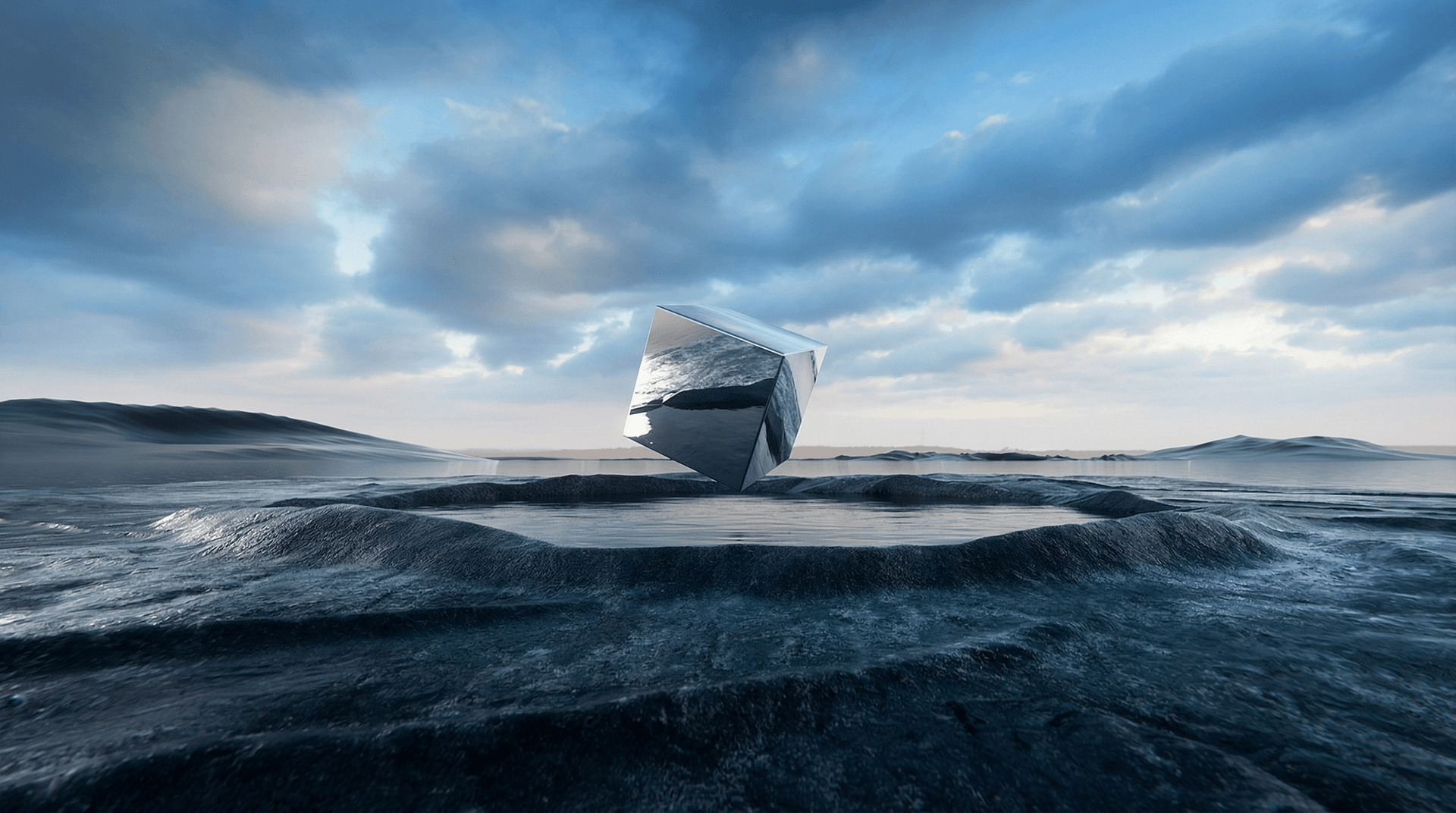

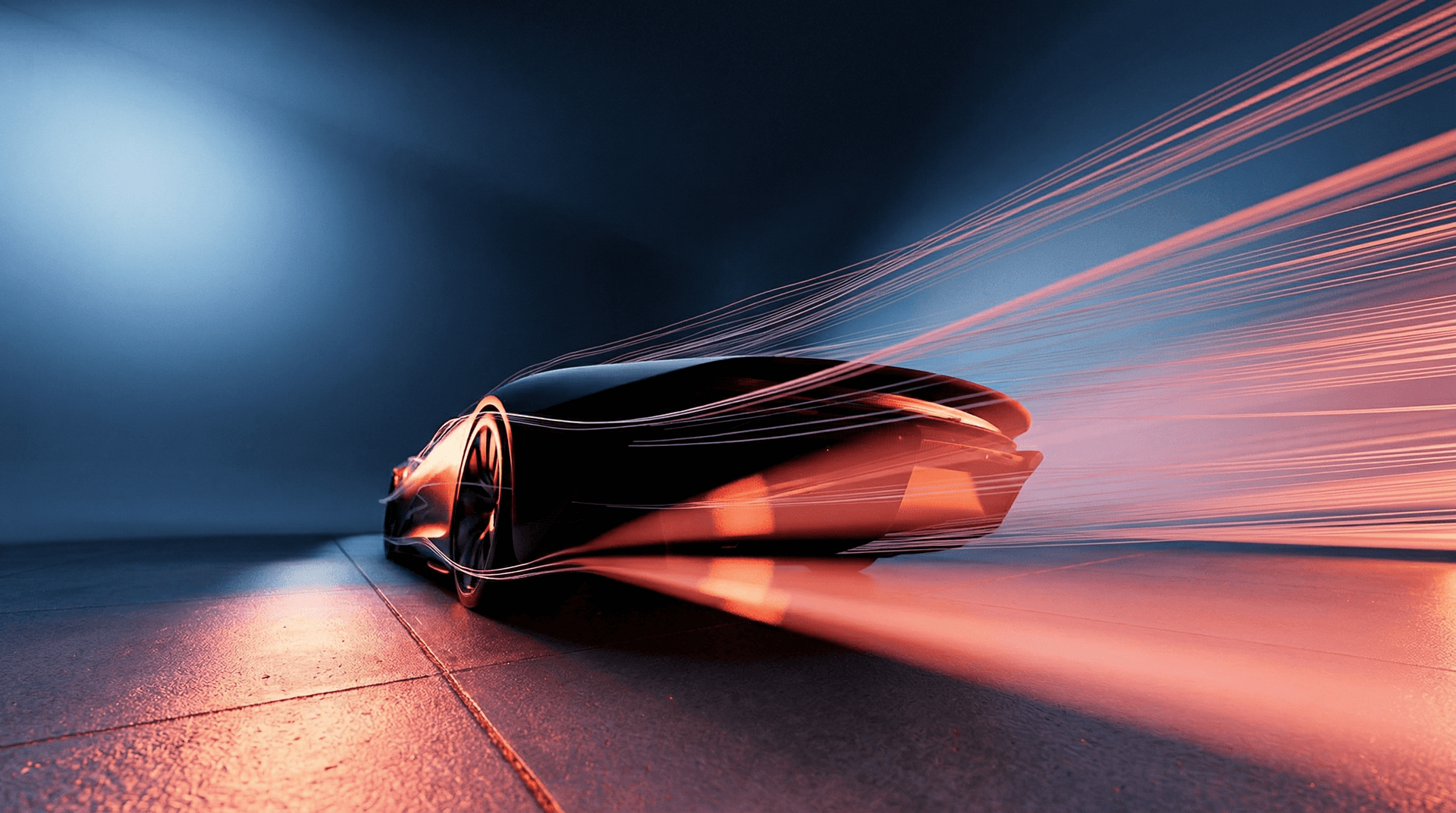

Create high-resolution marketing visuals, product images, or creative assets.

Edit and refine designs with step-by-step control.

Create artistic concepts or photorealistic renders from text prompts.

Build scalable image workflows for advertising, fashion, and design.

Why Use Seedream Image Models on Atlas Cloud

Combining the advanced Seedream Image Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low Latency:

GPU-optimized inference for real-time reasoning.

Unified API:

Run Seedream Image Models, GPT, Gemini, and DeepSeek with a single integration.

Transparent Pricing:

Predictable usage-based billing with serverless options.

Enterprise and Scale

Developer Experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance logging.

Security and Compliance:

SOC 2 Type II, HIPAA compliance, and US data sovereignty.

Explore More Families

Seedance 2.0 Video Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text prompts or start/end frames. It supports four input modalities (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions of reference assets, Seedance 2.0 addresses critical issues with character consistency and physical coherence, letting creators act as true "directors" with deep control over their output.

Vidu Video Models

Vidu (by ShengShu Technology) is a foundational video model built on the proprietary U-ViT architecture, combining the strengths of Diffusion and Transformer models. It features superior semantic understanding and generation capabilities, producing coherent, fluid visuals that adhere to physical laws without the need for interpolation. With exceptional spatiotemporal consistency and a deep understanding of diverse cultural elements, Vidu empowers professional filmmakers and creators with a stable, efficient, and imaginative tool for video production.

MiniMax LLM Models

MiniMax is a large language model developed by MiniMax AI, focused on efficient reasoning, long-context understanding, and scalable text generation. It is designed for complex tasks such as dialogue systems, document analysis, content creation, and AI agents. With an emphasis on high performance at lower computational cost, MiniMax is well suited for enterprise applications and developer use cases where stability, efficiency, and cost control are important.

GLM LLM Models

GLM (General Language Model) is a large language model developed by ZAI (Zhipu AI) for text understanding, generation, and reasoning. It supports both Chinese and English and performs well in dialogue, content creation, translation, and code assistance. GLM is widely used in chatbots, enterprise AI systems, and developer applications due to its stable performance and versatility.

Moonshot LLM Models

Kimi is a large language model developed by Moonshot AI, designed for reasoning, coding, and long-context understanding. It performs well in complex tasks such as code generation, analysis, and intelligent assistants. With strong performance and efficient architecture, Kimi is suitable for enterprise AI applications and developer use cases. Its balance of capability and cost makes it an increasingly popular choice in the LLM ecosystem.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.

Van Video Models

Van Model is a flagship video model family, perfectly retaining the cinematic visuals and complex dynamics of 3D VAE and Flow Matching. By leveraging proprietary compute distillation, it breaks the "quality equals cost" barrier to deliver extreme inference speeds and ultra-low costs. This makes Van the premier engine for enterprises and developers seeking high-frequency, scalable video production on a budget.

Kling 3.0 Video Models

Kling AI Video 3.0 (by Kuaishou) is a groundbreaking model designed to bridge the worlds of sound and visuals through its unique Single-pass architecture. By simultaneously generating visuals, natural voiceovers, sound effects, and ambient atmosphere, it eliminates the disjointed workflows of traditional tools. This true audio-visual integration simplifies complex post-production, providing creators with an immersive storytelling solution that significantly boosts both creative depth and output efficiency.

Veo 3.1 Video Models

Veo 3.1 (by Google) is a flagship generative video model that sets a new standard for cinematic AI by deeply integrating semantic capabilities to deliver cinematic visuals, synchronized audio, and complex storytelling in a single workflow. Distinguishing itself through superior adherence to cinematic terminology and physics-based consistency, it offers professional filmmakers an unparalleled tool for transforming scripts into coherent, high-fidelity productions with precise directorial control.

Sora-2 Video Models

The Sora-2 family from OpenAI is a next-generation video and audio generation model family, supporting both text-to-video and image-to-video generation with synchronized dialogue, sound effects, improved physical realism, and fine-grained control.

Nano Banana Image Models

Nano Banana is a fast, lightweight image generation model for playful, vibrant visuals. Optimized for speed and accessibility, it creates high-quality images with smooth shapes, bold colors, and clear compositions, making it a good fit for mascots, stickers, icons, social posts, and fun branding.

Wan2.6 Video Models

Wan 2.6 is a next-generation AI video generation model from Alibaba’s Tongyi Lab, designed for professional-quality, multimodal video creation. It combines advanced narrative understanding, multi-shot storytelling, and native audio–visual synchronization to produce smooth 1080p videos up to 15 s long from text and reference inputs. Wan 2.6 also supports character consistency and role-guided generation, enabling creators to turn scripts into cohesive scenes with seamless motion and lip syncing. Its efficiency and rich creative control make it ideal for short films, advertising, social media content, and automated video workflows.