Summary:

MiniMax M2.7 has officially launched on Atlas Cloud. As the first model to deeply participate in its own iteration, M2.7 builds upon the M2 series with a primary focus on enhancing Agent capabilities. In programming scenarios, M2.7’s logic construction and autonomous error-correction capabilities have been strengthened, enabling it to iterate autonomously through 100 rounds of code changes. In Agent applications, M2.7’s "hands-on" ability is improved, allowing it to build complex Agent Harnesses and complete highly complex productivity tasks based on capabilities such as Agent Teams, Complex Skills, and Tool Search.

We recommend using the model through the OpenAI-compatible API provided by Atlas Cloud. This not only allows for the concurrent use of multiple mainstream generative models but also offers pricing that is more transparent and lower than that of similar competitors.

MiniMax M2.7, developed by MiniMax, is now live on Atlas Cloud!

- What is MiniMax M2.7: This is the first model launched by the MiniMax team to deeply participate in its own iteration, extending their M2 series Large Language Model (LLM) product line.

- Core Advantages: MiniMax M2.7 achieves deep autonomous evolution capabilities, possesses end-to-end software engineering capabilities, and offers "MAX" professional office delivery performance.

- Price: $0.3 per 1M input tokens / $1.2 per 1M output tokens
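To make the pricing concrete, here is a minimal sketch of the arithmetic. It reads the listed price as $0.3 per million input tokens and $1.2 per million output tokens; that reading and the function name `estimate_cost_usd` are assumptions made for illustration, not an official billing formula.

```python
# Assumed reading of the listed price "0.3/1.2 M in/out":
# USD per one million input tokens / per one million output tokens.
INPUT_USD_PER_MILLION = 0.3
OUTPUT_USD_PER_MILLION = 1.2


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request from its token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 200k prompt tokens and 50k completion tokens.
    print(f"${estimate_cost_usd(200_000, 50_000):.4f}")
```

Under these assumptions, a request with 200,000 input tokens and 50,000 output tokens comes to about $0.12. Actual charges may differ, so check the amount shown on the platform.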

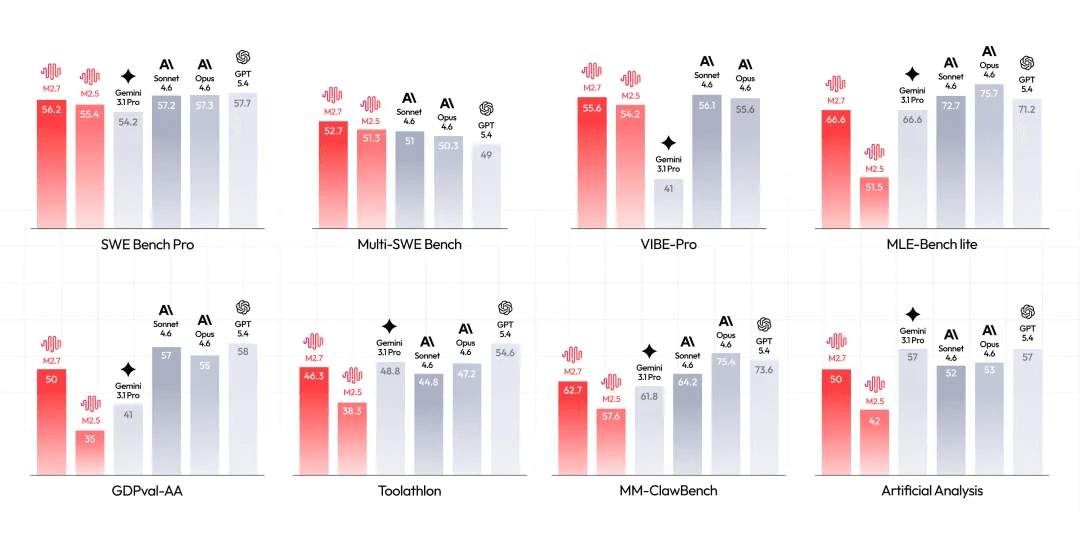

The earlier M2.5 captured market attention by matching the performance of Opus 4.6 at an ultra-low cost (up to a 20x reduction). Now, M2.7 goes a step further, with standalone programming capabilities equaling GPT-5.3-Codex, while matching the comprehensive performance of Opus 4.6 in full-project delivery. In the following sections, we will take a deep dive into the exceptional features of MiniMax M2.7.

MiniMax M2.7 Core Features

Deep Self-Evolution Capability

Based on the research-oriented Agent framework established within the M2 series, the MiniMax team designed and implemented a simple "scaffolding" to guide the Agent through autonomous optimization. This enables M2.7 to interact and collaborate with multiple research project groups and autonomously explore optimal solutions.

- Comprehensive System Coverage: Data pipelines, training environments, evaluation infrastructure, cross-team collaboration, and persistent memory.

- Full-Process Intelligence: Automatically monitors and analyzes experiment statuses, dynamically triggers log reading, troubleshooting, and metric analysis. It can even complete code fixes, merge requests, and smoke tests to identify and handle subtle yet critical changes.

- Core Modules: Short-term memory, self-feedback, and self-optimization.

Researchers only need to appear during key decisions and discussions; M2.7 can perfectly handle the rest of the work. Beyond significantly boosting delivery efficiency, M2.7 allows us to imagine a future where AI builds and optimizes AI.

Professional Office Delivery Capability

MiniMax M2.7 demonstrates superior adherence when executing user prompts. Specifically, the model works more proactively toward the user's goal, for example by actively seeking solutions, iterating on earlier outputs, and providing detailed explanations. Its strong world knowledge, ability to handle Word, Excel, and PPT files, and proficiency in general everyday scenarios lead to a substantial boost in office productivity.

- Information Retrieval & Translation: M2.7 can more accurately call skills to efficiently complete user requests.

- Reading Reports & Data Analysis: Exhibits research-grade understanding and output capabilities.

- Office Document Processing & Delivery: Supports multi-round editing and output based on designated templates.

These improvements reduce the manual correction work required from developers and content creators and streamline the entire workflow from input intent to final product. Official documentation states that in certain R&D scenarios, M2.7 can handle approximately 30% to 50% of the workload.

End-to-End Software Engineering Capability

M2.7 displays top-tier prowess in real-world software engineering scenarios, mastering the complete engineering cycle from debugging to collaboration.

- Production Environment Troubleshooting: Completes problem localization and repair within 3 minutes through causal reasoning and root-cause verification, vastly increasing efficiency.

- Code Generation & System Cognition: Maintains high accuracy in complex engineering tasks with a deep understanding of system logic and engineering workflows.

- Native Multi-Agent Collaboration: Supports team development with stable role division, balancing logic and efficiency when handling complex tasks.

M2.7 represents an all-around revolution in software engineering capabilities.

Agent Tasks: Enhanced Performance in Scenarios like OpenClaw

In complex scenarios involving Agent applications, MiniMax M2.7 performs like a true Pro. With its improved context understanding and memory, it stays perfectly on track with goals throughout complex dialogues and lengthy tasks.

- Tool Usage: Picks and uses tools with accuracy and efficiency.

- Task Planning: Breaks long-running tasks down into practical, actionable steps.

- Error Handling: Spots mistakes and fixes them all by itself.

This level of robustness greatly enhances the reliability of Agents in real-world operations. Whether it's automating your office work or crunching through data analysis, MiniMax M2.7 has got your back.
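The tool-usage and error-handling behaviour described above depends on the harness around the model as well as on the model itself. The sketch below shows one common way to wire this up, using the OpenAI-style function-calling message shapes. The `word_count` tool, the `TOOL_SPECS` list, and the `run_tool_call` helper are hypothetical names for illustration, and whether Atlas Cloud's endpoint accepts a `tools` field for this model is an assumption to verify in the API Docs.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical local tool; a real harness would register its own functions.
def word_count(text: str) -> int:
    return len(text.split())


TOOLS: Dict[str, Callable[..., Any]] = {"word_count": word_count}

# Tool description in the OpenAI-style "tools" format. It would be passed
# as the "tools" field of a chat request (assumed to be supported).
TOOL_SPECS = [
    {
        "type": "function",
        "function": {
            "name": "word_count",
            "description": "Count the words in a piece of text.",
            "parameters": {
                "type": "object",
                "properties": {"text": {"type": "string"}},
                "required": ["text"],
            },
        },
    }
]


def run_tool_call(tool_call: Dict[str, Any]) -> Dict[str, str]:
    """Execute one tool call and wrap the result as a "tool" message.

    Failures are returned as text rather than raised, so the model can
    read the error on its next turn and correct its own call.
    """
    try:
        name = tool_call["function"]["name"]
        arguments = json.loads(tool_call["function"]["arguments"])
        result = TOOLS[name](**arguments)
        content = json.dumps({"result": result})
    except Exception as exc:  # report any failure back to the model
        content = json.dumps({"error": f"{type(exc).__name__}: {exc}"})
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": content}
```

Returning errors as ordinary tool messages is a design choice: it keeps the conversation going and gives the model the information it needs to retry with corrected arguments.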

Application Scenario Examples

Knowledge-Intensive Research Reports

In professional fields such as finance, M2.7 can already understand, judge, and output like a junior analyst. It self-corrects through multi-round interactions, autonomously reads annual reports, integrates research information, builds revenue models, and outputs PPTs and research reports.

Frontend Page Design

Compared to M2.5, M2.7’s aesthetic capability has improved. M2.7 demonstrates better results in fonts, card layouts, and interactive effects.

M2.7 vs M2.5, Source: @Aibattle_ on X

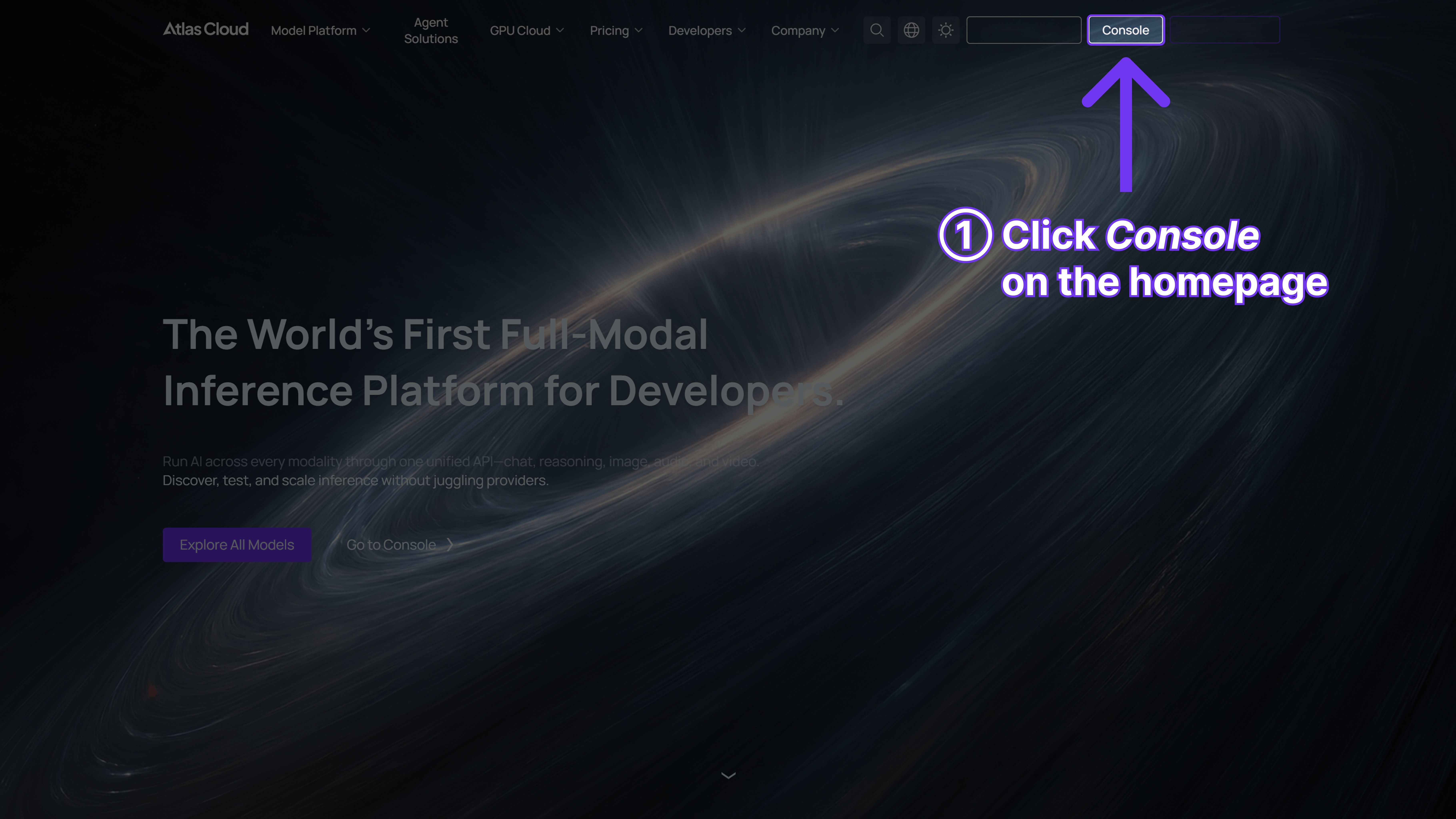

Why Use MiniMax M2.7 on Atlas Cloud?

As an all-modal AI infrastructure platform, Atlas Cloud provides users with a unified API interface. Once connected, users can access over 300 advanced AI models, including text, image, video, and multi-modal generation models.

Target Audience

- Independent Developers seeking low-cost, simplified solutions to call various AI models.

- Enterprises requiring stable, secure, and scalable infrastructure to support core business.

- Development Teams needing to efficiently integrate multiple cross-modal models into projects.

- Workflow Users who prioritize toolchain compatibility and use ComfyUI or n8n.

Product Features

- Greatly Simplified Integration: The platform provides an OpenAI-compatible API, instantly simplifying the developer's workload. No more juggling multiple vendor keys or stressing over maintenance costs across platforms.

- Cost Advantage: Compared to competitors, Atlas Cloud has lower deployment costs. Nano Banana 2 costs $0.056/image (competitor: $0.07/image); Veo 3.1 is priced at $0.09/second (competitor: $0.1/second). Additionally, the Playground interface offers full price transparency, with the "Run" button directly labeling the deduction amount per image or second of video.

- Enterprise-Grade Stability & Support: Atlas Cloud ensures that data protection meets strict privacy standards, so the platform can handle sensitive information.

- Plug-and-Play Friendly: Built to work effortlessly with tools like ComfyUI and n8n, helping businesses cut down on switching costs and hit the ground running.

Comparison with Similar Products

- Fal.ai: Offers some models, but Atlas Cloud provides a wider selection (300+), more competitive pricing, and a $1 trial credit for newly registered users.

- Wavespeed: Pricing is significantly higher. Atlas Cloud offers additional enterprise compliance support and expert technical guidance that Wavespeed does not emphasize.

- Kie.ai: Uses an opaque credit system. Atlas Cloud displays the exact cost for every run directly on the interface and also offers more models than Kie.ai.

- Replicate: Focuses on model hosting. Atlas Cloud’s advantages lie in API unification, the speed of model deployment, and more developer-friendly support policies.

- OpenAI or Google: These vendors only provide their own models. Users with cross-modal needs usually need to integrate multiple services. Atlas Cloud integrates proprietary and open-source models under one API, reducing system complexity.

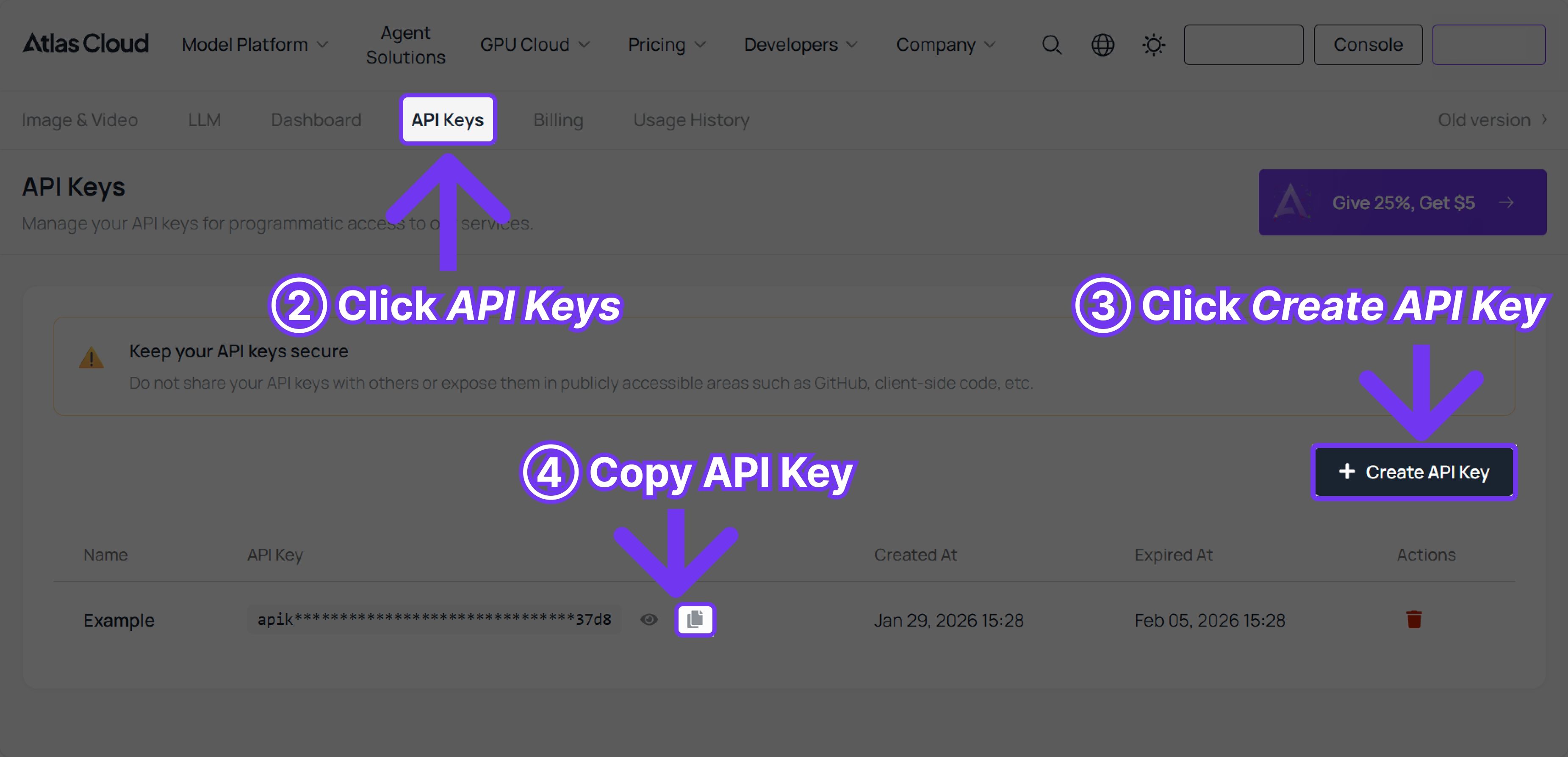

How to Use MiniMax M2.7 on Atlas Cloud?

Method 1: Use directly on the platform

Method 2: Use via API integration

Step 1: Get your API Key. Create an API key in the console and copy it for use in your requests.

Step 2: Consult the API Docs. Check request parameters, authentication methods, etc.

Step 3: Make your first request (example request body)

```json
{
  "model": "minimaxai/minimax-m2.7",
  "messages": [
    {
      "role": "user",
      "content": "Hello"
    }
  ],
  "max_tokens": 1024,
  "temperature": 0.7,
  "stream": false
}
```
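The request body above can be sent from Python along the following lines. This is a minimal sketch using only the standard library. The base URL is a placeholder, the `/chat/completions` path follows the usual OpenAI convention, and the environment variable names are hypothetical; take the real endpoint and authentication details from the API Docs in Step 2.

```python
import json
import os
import urllib.request

# Assumption: placeholder base URL. Replace with the value from the API Docs.
BASE_URL = os.environ.get("ATLASCLOUD_BASE_URL", "https://api.example.com/v1")


def build_chat_request(
    api_key: str, prompt: str, base_url: str = BASE_URL
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": "minimaxai/minimax-m2.7",
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 1024,
        "temperature": 0.7,
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",  # assumed path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat(api_key: str, prompt: str) -> str:
    """Send the request and return the text of the first choice."""
    request = build_chat_request(api_key, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("ATLASCLOUD_API_KEY")  # hypothetical variable name
    if key:
        print(send_chat(key, "Hello"))
```

Separating `build_chat_request` from `send_chat` makes it possible to inspect the URL, headers, and payload before any network call is made. Because the API is described as OpenAI-compatible, an OpenAI client library pointed at the same base URL should also work, though that is worth confirming against the documentation.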

FAQ

In the 2026 mainstream model competition, how does M2.7’s price-performance ratio stack up?

Compared to Claude Opus 4.6, M2.7 significantly reduces inference costs when called via Atlas Cloud while maintaining the same delivery capability.

Especially with the current popularity of the OpenClaw framework, M2.7 has been specifically optimized for long-sequence tasks and tool calling (Skill adherence rate reaches 97%), resulting in an extremely high "unit productivity to price ratio."

What is the core breakthrough of MiniMax M2.7 compared to previous versions?

MiniMax M2.7 is the first model to achieve deep participation in "self-iteration." It not only sees a massive boost in Agent capabilities but also possesses end-to-end software engineering capabilities.

What is the level of MiniMax M2.7’s programming and software engineering capabilities?

M2.7’s standalone programming capability has caught up with GPT-5.3-Codex, while its comprehensive performance in full-project delivery rivals Opus 4.6. It can localize and fix production environment faults within 3 minutes and has a deep understanding of complex system logic, handling approximately 30% to 50% of the workload in certain R&D scenarios.