OpenAI Sora 2 is a state-of-the-art model for AI video generation, specifically in physics simulation. Objects fall, bounce, break, and interact with their surroundings in ways that seem legitimately plausible -- a feat that no competing model has yet to match to its fullest. This Sora 2 tutorial covers all you need to know as a developer to integrate Sora 2 into your workflow through the Sora 2 Atlas Cloud API, including pricing, Python code examples, prompt engineering, and a direct comparison against Seedance 2.0, Kling 3.0, and Veo 3.1.

See Sora 2 in action:

The Sora 2 API is accessible via Atlas Cloud for 0.15persecondofgeneratedvideo.Atlasalsoprovides0.15 per second of generated video. Atlas also provides 0.15persecondofgeneratedvideo.Atlasalsoprovides1 in free credit upon sign-up. Atlas customers can access Sora 2 as well as 300+ other models with a single API key, without having to use separate accounts with OpenAI, Kuaishou, ByteDance, and Google.

Sora 2 at a Glance

- Developer: OpenAI

- Model ID: `openai/sora-2/text-to-video-pro-developer`

- Max Resolution: High Definition

- Max Duration: 12 seconds

- Frame Rate: 30fps

- Native Audio: Yes

- Reference Input: 1 image

- Core Strength: Physics simulation, realistic object interactions

- Atlas Cloud Price: $0.15/sec

Key Features of Sora 2

Physics Simulation

It's also in this area where Sora 2 really distances itself from the pack. Gravity, momentum, fluid dynamics and collision dynamics are simulated with a degree of fidelity other models can't reproduce in a consistent way. Throw a prompt in describing a bowling ball hitting some pins and the result shows proper force distribution, pin scatter patterns, and follow through. Kling 3.0 and Seedance 2.0 mostly estimate these interactions; Sora 2 simulates them.

Realistic Object Interactions

Sora 2 models also have an understanding of physics beyond the single-object case. Pouring a liquid into a glass, stacking blocks that tilt and fall, or a cat knocking things off a table all involve some causal reasoning about how objects interact with one another. Sora 2 models exhibit greater robustness to complex, multi-object interactions than any other model available through public APIs to date.

Material and Surface Rendering

Glass, metal, water, fabric and wood all behave with unique physical properties in Sora 2 outputs. Light bends as it passes through transparent media. Mirrors capture and warp their environment. Cloth hangs and creases with suitable heft. This level of material fidelity adds to the overall verisimilitude that characterizes Sora 2's output.

Native Audio Generation

Sora 2 can create audio alongside video. Steps have the correct cadence for walking, impacts have the appropriate sounds, and the background noise makes sense for the scene. The audio isn't at production quality, but it gives a usable baseline that removes an additional step from many pipelines.

Temporal Coherence

For the full 12 seconds of its maximum length, Sora 2 shows a high level of internal consistency in lighting, character presentation, and background detail. Objects don't change color or form from frame to frame. Shadows follow light sources appropriately throughout the whole clip. This level of continuity is especially crucial for shots with slow, methodical motion that could easily show jarring discontinuities.

Sora 2 Pricing

OpenAI Sora 2 Official Access

Access to OpenAI Sora 2 is provided by ChatGPT as a part of their Plus subscription (20/month)orProsubscription(20/month) or Pro subscription (20/month)orProsubscription(200/month) with strict limitations. While Plus subscription users get a capped number of generations per month, queue times may be long during peak hours. Access through OpenAI's direct API is also possible, however, it is only available to developer accounts which are approved by OpenAI, and Sora 2 is priced at a higher premium.

Atlas Cloud API Pricing (Recommended)

The easiest way for developers to access the Sora 2 API is through Atlas Cloud:

- Model: `openai/sora-2/text-to-video-pro-developer`

- Price: $0.15/sec

- 10-second clip: $1.50

- 12-second clip (max): $1.80

- Free signup credit: $1.00

- Queue: No wait times

The $1 free credit at sign up = approx 6 secs of Sora 2 video -- the ability to sample the quality and physics accuracy before deciding to use more for a fee. With the Sora 2 pricing, the expense scales linearly and very predictably for teams that produce at scale.

How to Access Sora 2 API

Option 1: OpenAI Direct

Sora 2 is accessible to users through OpenAI's official routes, namely via ChatGPT subscriptions or through the developer API. The developer API access is contingent on having an approved account and incurs higher costs per generation. Documentation is provided at platform.openai.com, with the onboarding process including waitlist approval for video generation endpoints.

Option 2: Atlas Cloud (Recommended)

For many developers, the quickest route to production will be through the Sora 2 Atlas Cloud integration. One API key to the power of OpenAI Sora 2 and over 300 other models, including Seedance 2.0, Kling 3.0, and Veo 3.1. No waitlist. No separate accounts. Single billing.

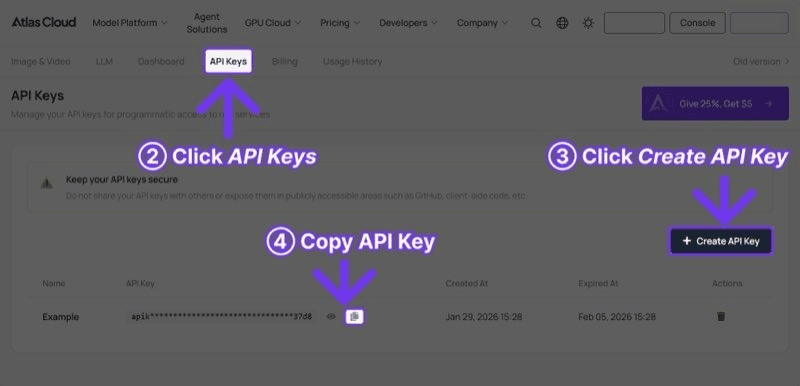

Step 1: Sign up on atlascloud.ai (https://www.atlascloud.ai?utm_medium=article&utm_source=blog&utm_campaign=sora-2-guide) and get your API key from the dashboard. $1 free credit is added automatically to your account.

Step 2: Generate video with Sora 2 in Python:

plaintext1```python 2import requests 3import time 4 5 6API_KEY = "your-atlas-cloud-api-key" 7BASE_URL = "https://api.atlascloud.ai/api/v1" 8 9 10# Generate video with Sora 2 11response = requests.post( 12 f"{BASE_URL}/model/generateVideo", 13 headers={ 14 "Authorization": f"Bearer {API_KEY}", 15 "Content-Type": "application/json" 16 }, 17 json={ 18 "model": "openai/sora-2/text-to-video-pro-developer", 19 "prompt": "A glass sphere rolling down a wooden staircase, each bounce creating ripples of light, realistic physics and reflections, cinematic slow motion", 20 "duration": 10, 21 "resolution": "1080p" 22 } 23) 24 25 26result = response.json() 27 28 29# Poll for results 30while True: 31 status = requests.get( 32 f"{BASE_URL}/model/prediction/{result['request_id']}/get", 33 headers={"Authorization": f"Bearer {API_KEY}"} 34 ).json() 35 if status["status"] == "completed": 36 print(f"Video: {status['output']['video_url']}") 37 break 38 time.sleep(5) 39```

Step 3: The API immediately returns a `request_id`. Poll the prediction endpoint until its status is `completed`, and then get the video URL from the response. The generation time is usually 30 seconds to 3 minutes for Sora 2 depending on duration and complexity.

Sora 2 Prompt Tips

After a significant amount of testing with Sora 2 API, this Sora 2 tutorial segment includes various prompting methods that have reliably yielded high-quality results. Sora 2 excels in responding to prompts focused on physical interactions and material behavior.

- Be explicit about physics. The most powerful engine under Sora 2 is physical simulation, so exploit it. Instead of just "a ball bouncing," say "a rubber ball dropped from shoulder height onto a hardwood floor, bouncing 3 times with decreasing height, realistic elasticity and shadow movement." The model encourages physics-specific detail.

- Describe materials and surfaces. Sora 2 simulates materials with different properties. Use material names: "frosted glass", "brushed aluminum", "wet cobblestone", "silk curtain". The model recognizes these textures, and maps light physics correctly to each.

- Use cinematic language for camera work. Sora 2 translates basic cinematography vocabulary to camera commands reasonably well. "Slow dolly forward," "rack focus from foreground to background," "low-angle tracking shot" -- all of these terms will make the camera do what you think they will. Don't use ambiguous instructions like “cool camera movement.”

- Design prompts to be 10-12 seconds. Given the 12 second maximum, prompts should describe one action or set of actions that flow together. One subject, one major movement, one payoff image. Don't try to force multiple scenes and cuts into one generation.

- Take advantage of cause-and-effect chains. Sora 2 seems strong at handling one thing happening after another in the physical world. "Dominoes toppling across a desk, knocking over a cup of pencils" or "striking a match, which lights and sets fire to a candle wick" -- these phrases with multiple steps involving physical events play to the model's strengths.

Example prompts that performed well in testing:

Physics showcase:

plaintext1``` 2A ceramic mug tipping off the edge of a kitchen counter in slow motion, 3shattering on impact with a tile floor, fragments and liquid scattering 4realistically, soft morning light from a nearby window, cinematic depth of field 5```

Product demonstration:

plaintext1``` 2A smartphone placed on a reflective dark surface, water droplets falling 3onto the screen and beading naturally, demonstrating water resistance, 4studio lighting with subtle blue accent lights, 4K commercial style 5```

Nature simulation:

plaintext1``` 2A single drop of water falling into a still pond, creating concentric 3ripples that expand outward, a fallen autumn leaf floating on the surface 4gently disturbed by the waves, golden hour lighting, macro lens perspective 5```

Sora 2 vs Competitors

- Sora 2: Max Resolution: High Definition, Max Duration: 12s, Reference Input: 1 image, Native Audio: Yes, API Cost (Atlas Cloud): $0.15/sec, Best Strength: Physics realism, Content Filter: Strict

- Seedance 2.0: Max Resolution: High Definition, Max Duration: 15s, Reference Input: 12 files, Native Audio: Yes, API Cost (Atlas Cloud): $0.022/sec, Best Strength: Multimodal control, Content Filter: Strict

- Kling 3.0: Max Resolution: Ultra HD, Max Duration: 10s, Reference Input: 1-2 images, Native Audio: Yes (5 languages), API Cost (Atlas Cloud): $0.126/sec, Best Strength: Resolution + value, Content Filter: Very strict

- Veo 3.1: Max Resolution: HD Cinematic, Max Duration: 8s, Reference Input: 1-2 images, Native Audio: Yes, API Cost (Atlas Cloud): $0.03/sec, Best Strength: Cinematic polish, Content Filter: Moderate

Where Sora 2 Wins

OpenAI Sora 2 is state of the art in physics simulation fidelity and believability in how objects behave. When it comes to physics-based cause and effect video content -- product demos, explainer visualizations, physics-based art and creativity -- no other model rivals its quality of output. And its 12-second maximum length is an improvement over Kling 3.0 (10s) and Veo 3.1 (8s), allowing more story content within a single clip.

Where Sora 2 Falls Short

Comparing Sora 2 pricing, 0.15/secforSora2isthehighestpricedinthisarticle.Seedance2.0at0.15/sec for Sora 2 is the highest priced in this article. Seedance 2.0 at 0.15/secforSora2isthehighestpricedinthisarticle.Seedance2.0at0.022/sec is about 85% cheaper per second. Resolution is maxed out at high definition, lower than Kling 3.0's ultra-high-definition. Reference input is one image, Seedance 2.0 can handle up to 12 files (nine images, three videos, three audio). For budget constrained teams or projects needing heavy reference based control, these differences are meaningful.

The Practical Approach

As covered in this Sora 2 tutorial, most teams will need more than one model and won't want to use Sora 2 exclusively. Sora 2 is for productionizing physics-heavy scenes. Seedance 2.0 is for complex, multi-reference projects. Kling 3.0 is for final renders at full resolution. Veo 3.1 is for cinematic polish. Atlas Cloud makes this multi-model approach feasible with a single API key and consolidated billing.

Who Should Use Sora 2?

Choose Sora 2 if:

- Physics accuracy is the primary requirement. Gravity, fluid dynamics, collisions, and material interactions are Sora 2's defining strength.

- The project involves product demonstrations where objects need to behave realistically -- drops, splashes, rotations, mechanical movements.

- Educational or scientific visualization work demands plausible physical behavior that withstands scrutiny.

- The 12-second duration ceiling provides sufficient time for the intended content. Sora 2 offers more time per clip than Kling 3.0 and Veo 3.1.

- Budget is less of a concern than output quality for physics-driven content.

Choose Seedance 2.0 instead if:

- The project requires extensive reference material -- multiple images, videos, and audio files as input.

- Budget efficiency is paramount. At $0.022/sec on Atlas Cloud, Seedance 2.0 is roughly 7x cheaper than Sora 2.

- Longer clips (up to 15 seconds) are needed.

- Multimodal input control is more important than physics accuracy.

Choose Kling 3.0 instead if:

- Ultra-high-definition output is required. Sora 2 outputs at high-definition resolution.

- Free-tier access matters. Kling 3.0 offers 66 daily credits; Sora 2 requires a paid subscription.

- E-commerce content needs crisp, readable text rendering in generated video.

Choose Veo 3.1 instead if:

- Cinematic visual quality and color grading are the top priorities.

- Cost-effective scaling is needed. At $0.03/sec, Veo 3.1 is 5x cheaper than Sora 2.

- Shorter clips (up to 8 seconds) are acceptable for the use case.

Frequently Asked Questions

How do I access the Sora 2 API?

The easiest way is via Atlas Cloud. Sign up, obtain an API key, and include the model ID `openai/sora-2/text-to-video-pro-developer` in your requests. The $1 free credit automatically credits to your account at sign up. Sora 2 is also available via OpenAI's official API, but requires separate approval to their developer program.

How much does Sora 2 cost per video?

Sora 2 is priced at 0.15/secondofgeneratedvideoonAtlasCloud.A10secondclipwillbe0.15/second of generated video on Atlas Cloud. A 10 second clip will be 0.15/secondofgeneratedvideoonAtlasCloud.A10secondclipwillbe1.50. A maximum length 12 second clip will be $1.80. Pricing through OpenAI directly for Sora 2 varies based on subscription tier, but is generally more expensive than the Sora 2 Atlas Cloud rate for comparable output.

What is the maximum video length Sora 2 can generate?

Sora 2 has a maximum length of 12 seconds at HD resolution. It sits in the middle of Seedance 2.0 (15 seconds max) and Kling 3.0 (10 seconds max). Most people create multiple clips and combine them in editing for longer works.

Can Sora 2 do image-to-video generation?

Yes. Sora 2 takes a single reference image as input, and the model takes the image as an initial frame or style reference for the created video, but it only allows one. Seedance 2.0 has up to 12 reference files for more complicated reference-based generation.

Is Sora 2 better than Seedance 2.0 or Kling 3.0?

The models are the strongest in different areas. Sora 2 has the best physics simulation and real-world object interaction. Seedance 2.0 has the best multimodal input control and lowest API pricing ($0.022/sec). Kling 3.0 has the highest resolution output and most lenient free tier. For most teams the best strategy will be to use all three through a unified platform such as Atlas Cloud and direct each task to the appropriate model.

Does Sora 2 generate audio with the video?

Yes. Sora 2's native audio generation is timed to the visuals. The audio is workable for draft and social media content, though for professional content it may be replaced or enhanced in post-production.

Verdict

Sora 2 has a very specific place in the AI video generation ecosystem. It is not the most cost-effective, it doesn't have the highest resolution, and it doesn't have the most open-ended input system. It does have the most physically-accurate video generation available in any public API. If your application or content relies on physics that are true to the real world -- product demos, education, scientific visualization, art grounded in reality -- Sora 2 is the most powerful option for you.

The one practical take away from this Sora 2 tutorial: Access Sora 2 + Seedance 2.0, Kling 3.0, Veo 3.1, 300+ models on Atlas Cloud. One API key. One bill. Freedom to select the best model for each job. $1 free credit to get started with Sora 2 physics simulation side-by-side and scale as you go.

Get $1 Free Credit on Atlas Cloud -- Try Sora 2 and 300+ Models

────────────────────────────────────────────────────────────