Seedream Image Models

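Seedream is an advanced AI image generation model developed by ByteDance. It turns text and reference images into high-quality, professional-grade visuals, with fast performance and extensive creative control. It unifies text-to-image generation and intuitive image editing in a single workflow, and supports multi-image references and consistent style output. Seedream produces high-resolution images (up to 4K) very quickly and renders fine detail well, which makes it well suited to designers, marketers, and creators.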

Explore Leading Models

Atlas Cloud gives you access to the latest industry-leading creative models.

Seedream v4.5

ByteDance's latest image generation model, with all-round improvements. It excels at typography, poster design, and brand visual creation, with superior prompt adherence.

Seedream v4.5 Edit

ByteDance's advanced image editing model, which preserves facial features, lighting, and color tones while enabling professional-quality modifications.

Seedream v4.5 Sequential

ByteDance's latest image generation model with batch generation support. Generate up to 15 images in a single request.

Seedream v4.5 Edit Sequential

ByteDance's advanced image editing model with batch generation support. Edit multiple images while preserving facial features and details.

Seedream v4

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Sequential

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Edit

Open and Advanced Large-Scale Image Generative Models.

Seedream v4 Edit Sequential

Open and Advanced Large-Scale Image Generative Models.

Seedream v3.1

Open and Advanced Large-Scale Image Generative Models.

Seedream V3

ByteDance Seedream V3 is a state-of-the-art text-to-image model that excels in generating high-quality, photorealistic images with exceptional detail and artistic flair.
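
The Sequential variants listed above are described as generating up to 15 images in a single request. As a rough sketch of what that limit could mean for a client, the snippet below splits a larger job into groups of at most 15 prompts. It assumes one image per prompt and does not call any API; `chunk_prompts` and `MAX_IMAGES_PER_REQUEST` are hypothetical names introduced only for illustration, and the actual request format should be taken from the platform documentation.

```python
from typing import Iterator, Sequence

# Limit quoted in the Seedream v4.5 Sequential description.
# Assumption: each prompt yields exactly one image.
MAX_IMAGES_PER_REQUEST = 15


def chunk_prompts(
    prompts: Sequence[str], limit: int = MAX_IMAGES_PER_REQUEST
) -> Iterator[list[str]]:
    """Yield consecutive groups of at most `limit` prompts (hypothetical helper)."""
    if limit < 1:
        raise ValueError("limit must be at least 1")
    for start in range(0, len(prompts), limit):
        yield list(prompts[start:start + limit])


# 40 prompts are split into three request-sized groups.
batches = list(chunk_prompts([f"poster variant {i}" for i in range(40)]))
print([len(batch) for batch in batches])  # [15, 15, 10]
```

Each group could then be submitted as one batch request, so no single request exceeds the stated limit.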

Key Highlights of Seedream Image Models

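Image Synthesis

Generate images from text prompts with the Seedream v3–v4 models.

Direct Editing

Refine images through the Seedream v4/edit endpoint.

Sequential Editing

Apply step-by-step changes with the edit-sequential model.

Sequential Output

Get multi-step results through sequential generation.

Version Options

Choose among the v3, v3.1, and v4 variants to suit different needs.

Image Input

The editing models take an existing image as input and refine it according to a prompt.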

What You Can Do with Seedream Image Models

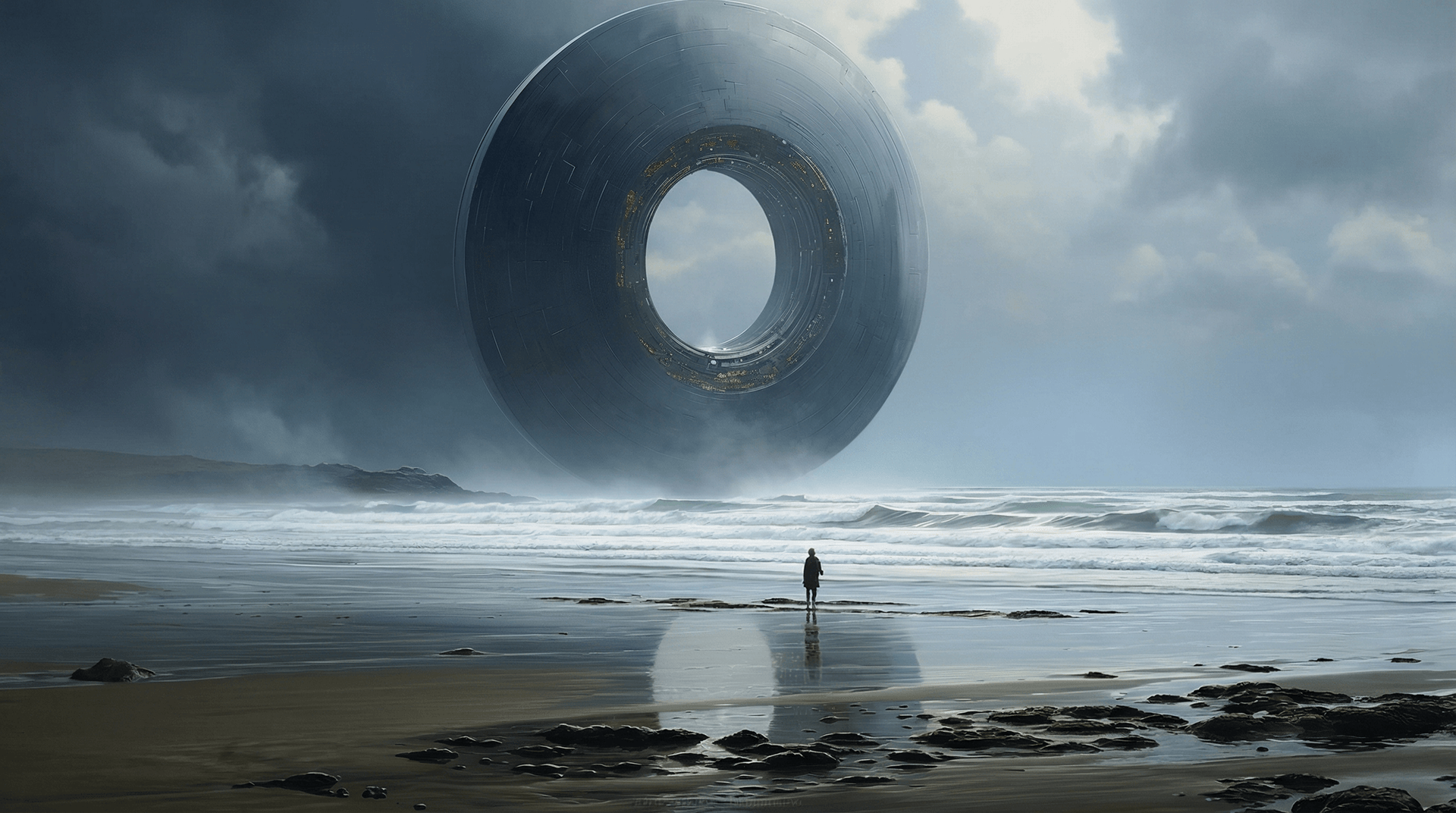

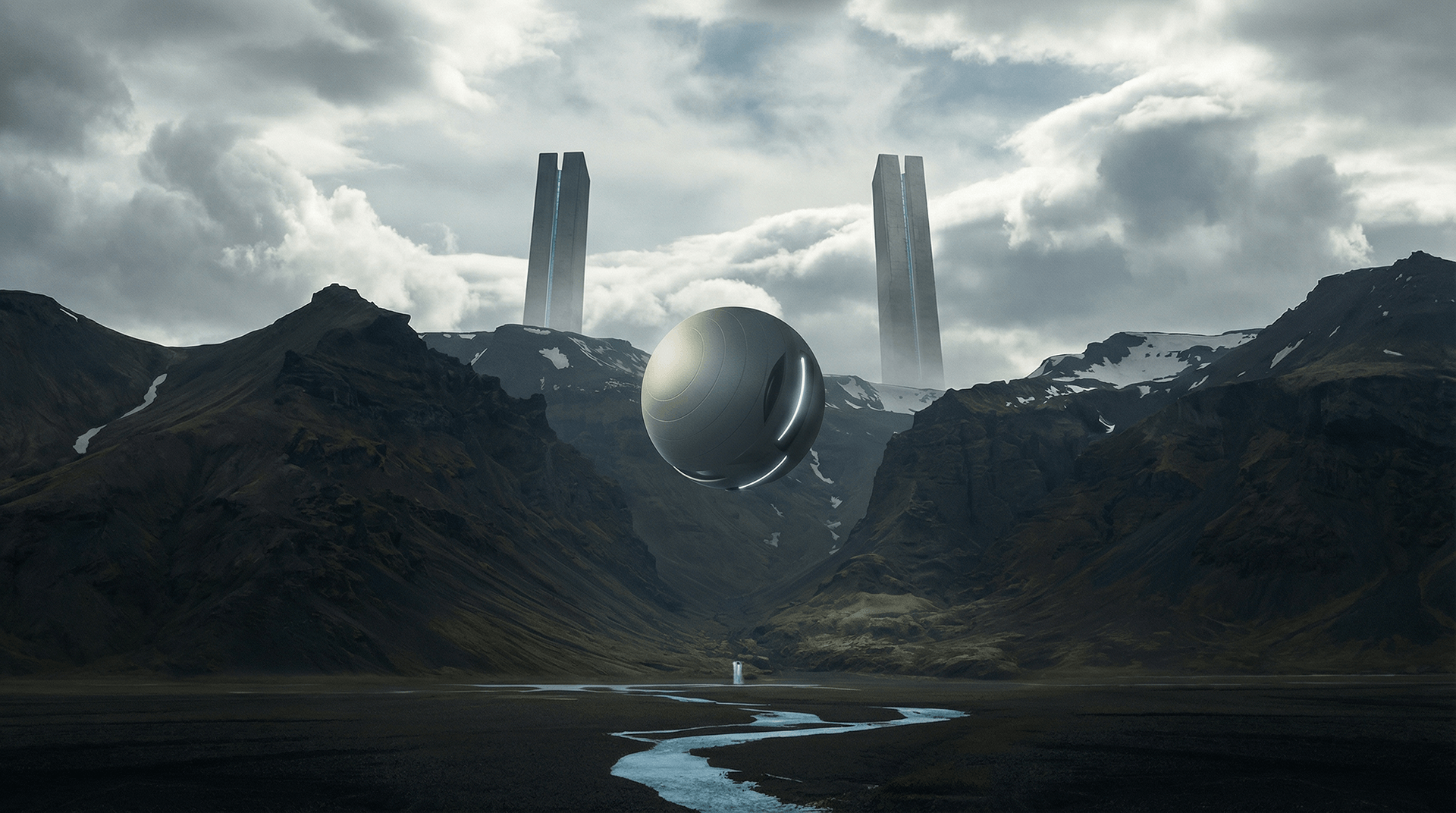

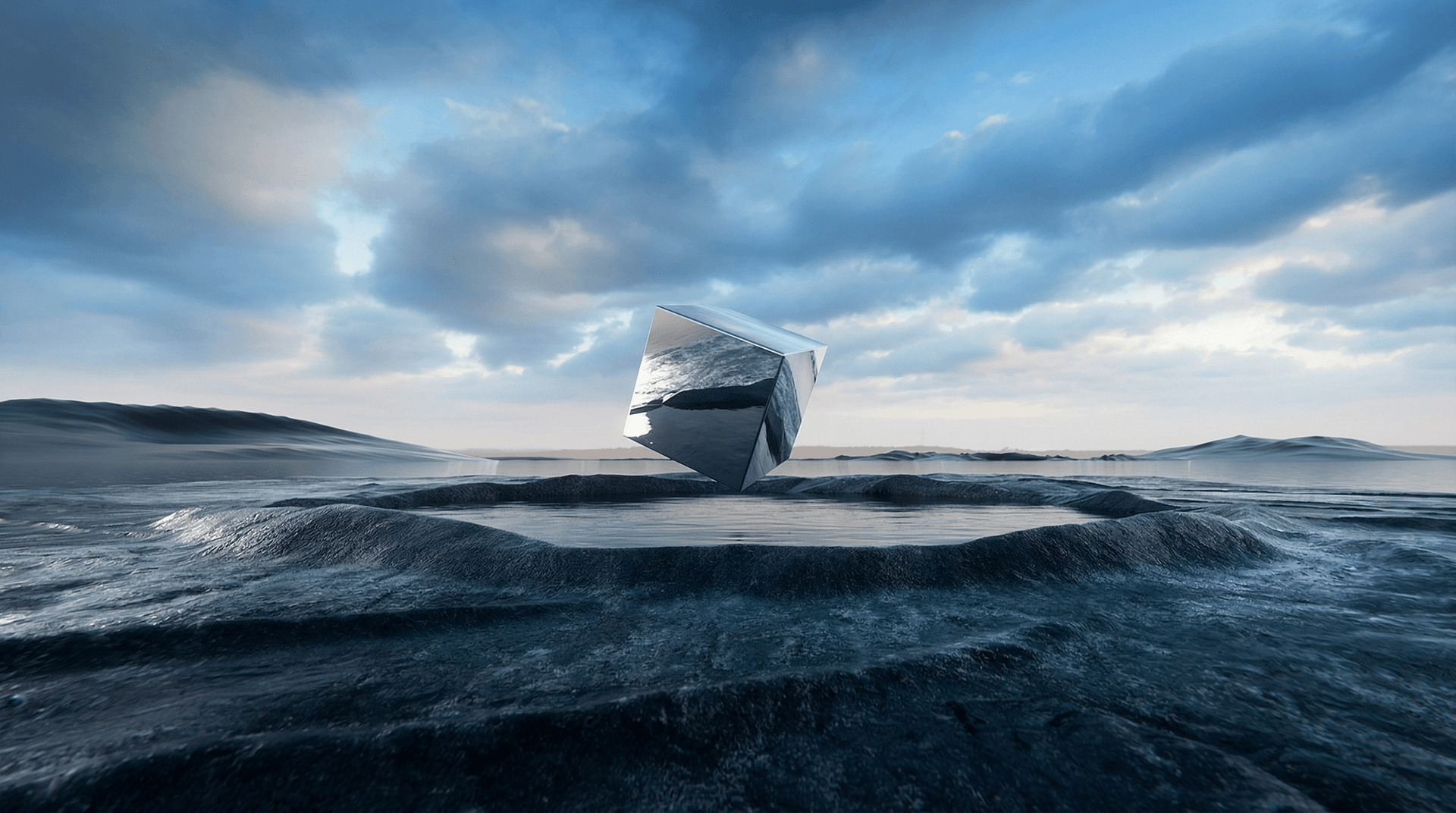

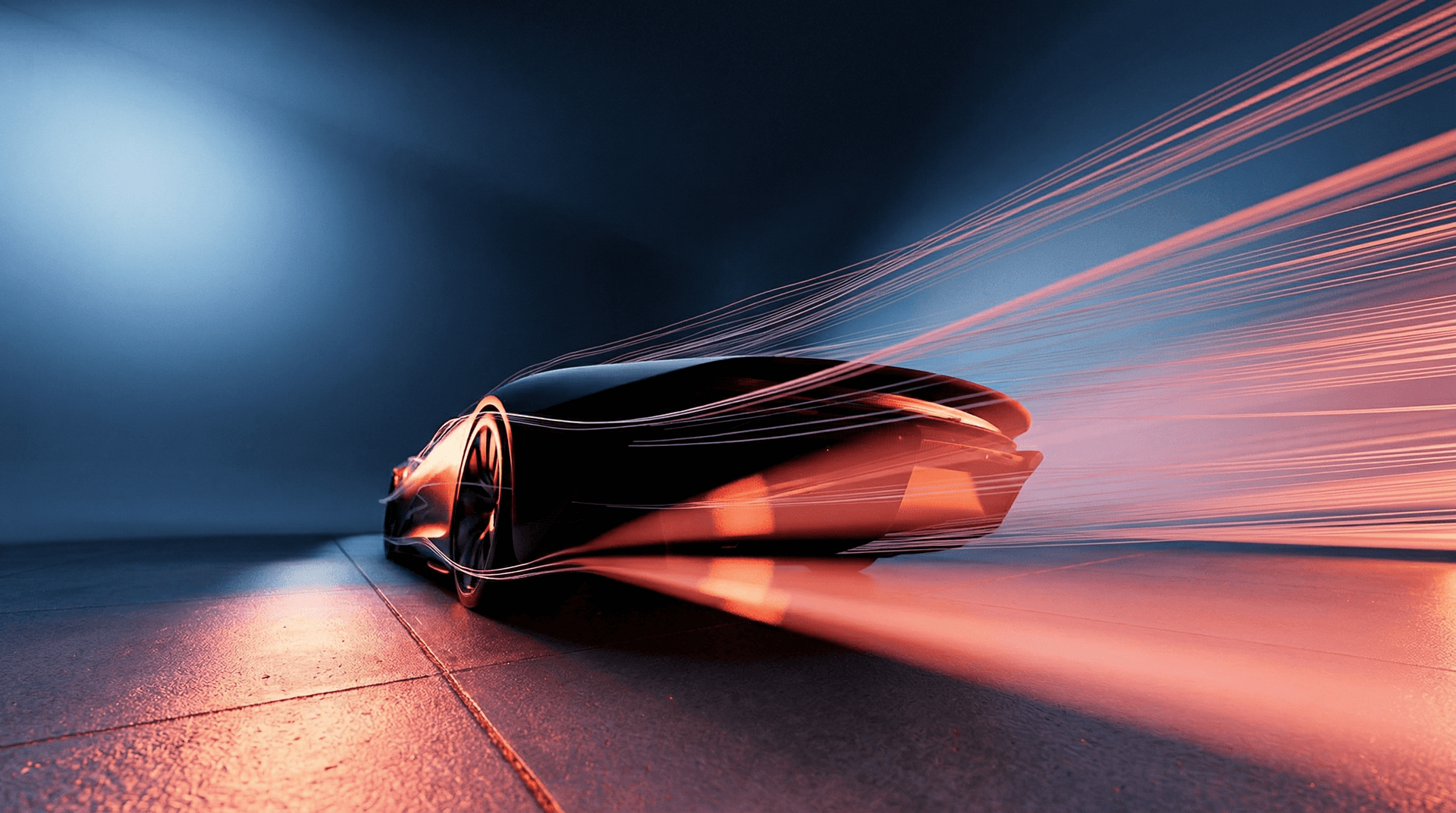

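Generate high-resolution marketing visuals, product images, or creative assets.

Edit and iterate on designs with frame-by-frame control.

Generate concept art or photorealistic renders from text prompts.

Build scalable image workflows for the advertising, fashion, and design industries.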

Why Use Seedream Image Models on Atlas Cloud

Combining the advanced Seedream Image Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

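Performance & Flexibility

Low latency:

GPU-optimized inference for real-time responses.

Unified API:

Integrate once to use Seedream Image Models, GPT, Gemini, and DeepSeek.

Transparent pricing:

Token-based billing, with support for serverless mode.

Enterprise & Scale

Developer experience:

SDKs, analytics, fine-tuning tools, and templates in one place.

Reliability:

99.99% availability, RBAC access control, and compliance logging.

Security & compliance:

SOC 2 Type II certification, HIPAA compliance, and US data sovereignty.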

Explore More Families

Seedance 2.0 Video Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 addresses critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

Vidu Video Models

Vidu (by ShengShu Technology) is a foundational video model built on the proprietary U-ViT architecture, combining the strengths of Diffusion and Transformer models. It features superior semantic understanding and generation capabilities, producing coherent, fluid visuals that adhere to physical laws without the need for interpolation. With exceptional spatiotemporal consistency and a deep understanding of diverse cultural elements, Vidu empowers professional filmmakers and creators with a stable, efficient, and imaginative tool for video production.

MiniMax LLM Models

MiniMax is a large language model developed by MiniMax AI, focused on efficient reasoning, long-context understanding, and scalable text generation. It is designed for complex tasks such as dialogue systems, document analysis, content creation, and AI agents. With an emphasis on high performance at lower computational cost, MiniMax is well suited for enterprise applications and developer use cases where stability, efficiency, and cost control are important.

GLM LLM Models

GLM (General Language Model) is a large language model developed by ZAI (Zhipu AI) for text understanding, generation, and reasoning. It supports both Chinese and English and performs well in dialogue, content creation, translation, and code assistance. GLM is widely used in chatbots, enterprise AI systems, and developer applications due to its stable performance and versatility.

Moonshot LLM Models

Kimi is a large language model developed by Moonshot AI, designed for reasoning, coding, and long-context understanding. It performs well in complex tasks such as code generation, analysis, and intelligent assistants. With strong performance and efficient architecture, Kimi is suitable for enterprise AI applications and developer use cases. Its balance of capability and cost makes it an increasingly popular choice in the LLM ecosystem.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.

Van Video Models

Van is a flagship video model family that retains the cinematic visuals and complex dynamics associated with 3D VAE and Flow Matching. By leveraging proprietary compute distillation, it breaks the "quality equals cost" barrier to deliver extreme inference speeds and ultra-low costs. This makes Van a strong engine for enterprises and developers seeking high-frequency, scalable video production on a budget.

Kling 3.0 Video Models

Kling AI Video 3.0 (by Kuaishou) is a groundbreaking model designed to bridge the worlds of sound and visuals through its unique Single-pass architecture. By simultaneously generating visuals, natural voiceovers, sound effects, and ambient atmosphere, it eliminates the disjointed workflows of traditional tools. This true audio-visual integration simplifies complex post-production, providing creators with an immersive storytelling solution that significantly boosts both creative depth and output efficiency.

Veo3.1 Video Models

Veo 3.1 (by Google) is a flagship generative video model that sets a new standard for cinematic AI by deeply integrating semantic capabilities to deliver cinematic visuals, synchronized audio, and complex storytelling in a single workflow. Distinguishing itself through superior adherence to cinematic terminology and physics-based consistency, it offers professional filmmakers an unparalleled tool for transforming scripts into coherent, high-fidelity productions with precise directorial control.

Sora-2 Video Models

The Sora-2 family from OpenAI is a next-generation line of video and audio generation models, enabling both text-to-video and image-to-video outputs with synchronized dialogue, sound effects, improved physical realism, and fine-grained control.

Nano Banana Image Models

Nano Banana is a fast, lightweight image generation model for playful, vibrant visuals. Optimized for speed and accessibility, it creates high-quality images with smooth shapes, bold colors, and clear compositions—perfect for mascots, stickers, icons, social posts, and fun branding.

Wan2.6 Video Models

Wan 2.6 is a next-generation AI video generation model from Alibaba’s Tongyi Lab, designed for professional-quality, multimodal video creation. It combines advanced narrative understanding, multi-shot storytelling, and native audio–visual synchronization to produce smooth 1080p videos up to 15 s long from text and reference inputs. Wan 2.6 also supports character consistency and role-guided generation, enabling creators to turn scripts into cohesive scenes with seamless motion and lip syncing. Its efficiency and rich creative control make it ideal for short films, advertising, social media content, and automated video workflows.