Happy Horse 1.0 vs Kling 3.0: We Tested Both With the Same 9 Prompts

Before you hit generate on an AI video prompt, there's always a half-second where you genuinely don't know what's coming back. We've been sitting with that feeling long enough, so we went straight to Kling's official prompt library, pulled nine prompts as-is, and ran them through Happy Horse 1.0 word for word.

The range is pretty unforgiving: on one end, a slow perfume close-up in a Paris apartment with a French voiceover; on the other, a 15-second moonlit garden scene where a woman in a dark green gown releases a white flower mid-sprint while period-costumed figures flood in from both sides and a man reaches for her hand. Most models would quietly struggle somewhere in that gap.

Both models ran on Atlas Cloud: same platform, same conditions, nothing tweaked on either side. The videos are below, in order of difficulty, and they speak for themselves.

Happy Horse 1.0 vs Kling 3.0: Full Technical Comparison

| Model | Happy Horse 1.0 | Kling 3.0 Pro |
|---|---|---|
| Provider | Alibaba | Kuaishou |
| Modality | T2V, I2V, R2V & video edit | T2V, I2V |
| Resolution | 1080P, 720P | 1080P |
| Aspect ratio | 16:9, 9:16, 1:1, 4:3, 3:4 | 16:9, 9:16, 1:1 |
| Audio generation | √ | √ |
| Duration | 3~15s | 3~15s |
| Price | From USD 0.14/s | From USD 0.095/s |

Kling 3.0 is built on the Diffusion Transformer (DiT) architecture, enabling the model to simultaneously understand the spatial and temporal relationships of pixels. This significantly reduces flickering and texture jitter compared to its predecessor.

It supports the 'AI Director' feature, which allows multiple different camera transitions within a single generation while maintaining spatial continuity of characters across shots. As seen in the videos, this 'AI Director' capability leads to natural camera transitions in Kling 3.0’s videos. However, it also weakens adherence to specific camera instructions from the prompts.

Additionally, Kling 3.0 can keep more than three characters consistent within a scene, so its characters look more realistic and less like generic AI-generated faces.

Happy Horse 1.0, on the other hand, uses a unified Transformer architecture with 15 billion parameters (15B) and 40 self-attention layers, which helps it produce detail-rich video. DMD-2 distillation compresses denoising to just 8 steps and, combined with MagiCompiler acceleration, lets it generate a 1080p video on an H100 in approximately 38 seconds.

Head-to-Head Results: Happy Horse 1.0 vs Kling 3.0

Test 1: Product Shots & Static Scenes

Perfume commercial

Let's first look at the performance of Kling 3.0:

On screen, the render captures stunning afternoon light and shadows, though the model chose its own cuts and didn't fully adhere to the shots described in the prompt.

The piano piece has interruptions, but they feel natural. The tone and pacing of the narration align well with the video content.

Overall, the result is already breathtaking.

Let's take a look at the performance of Happy Horse 1.0 next:

First, visually, the lighting and shadows are more lavish and detailed compared to Kling 3.0. It even features a close-up of the 'Kling' logo, with a left-to-right sliding reflection effect following the camera movement. The shot progression also fully adheres to the prompts.

As for the background music, the piano piece is harmonious and elegant, blending in subtly. The narration effect is similar to Kling 3.0's.

Overall, Happy Horse 1.0 outshines Kling 3.0 in this round.

Family watching TV

Let's start by looking at the performance of Kling 3.0:

The transitions between shots are smooth, but the interaction between the four characters is lacking, especially in the scenes where the first two are speaking—there's no reaction from the others, as if they didn't hear.

In terms of sound, while it doesn't include the air conditioner vent noise mentioned in the prompts, there is TV audio, which fits a realistic, everyday vibe.

Overall, the performance is fairly standard.

Now, let's take a look at the performance of Happy Horse 1.0:

Visually, the interactions between the characters feel more natural and dynamic compared to Kling 3.0. However, in the latter part of the video, the adult woman and the two children show identical smiles, revealing some AI-generated traits that detract from authenticity.

In terms of sound, Happy Horse 1.0 falls short of Kling 3.0 this time, with no ambient noise at all. The tone of the characters' line delivery also comes across as relatively flat.

Overall, both performances are fairly standard.

Test 2: Single-Character Narrative Sequences

Working woman — one continuous take

Similarly, let's first look at the performance of Kling 3.0:

The results are already stunning; now let's take a look at the performance of Happy Horse 1.0:

It is evident that Kling 3.0 delivers higher quality this time.

The prompts did not describe the setup of the office scene, so both models took creative liberties. However, the scene generated by Kling 3.0 is more logical. In contrast, Happy Horse 1.0 shows an illogical setup where the space between two elevators is separated by a glass door.

As for character actions, Kling 3.0 adheres more closely to the prompts, depicting actions such as 'take off her sunglasses and tucks them into her commuter bag' and 'hang the bag on a coat rack near the entrance'. In Happy Horse 1.0's video, by contrast, the main character's sunglasses disappear entirely after she takes them off, and both the bag and the coat vanish after she removes the coat, only for the coat to reappear on her later.

However, neither model successfully portrayed 'shrugs off her outer jacket and hangs that on the same rack' or 'signs the document before handing it back'. The coat-hanging action is missing entirely in both videos. In the signing scene, Kling 3.0 omitted the signing, while Happy Horse 1.0 had the character sign an upside-down document, which is illogical.

Overall, in this round, Kling 3.0 comes out on top.

Truck driver — 4-shot sequence

Let's start by looking at the performance of Kling 3.0:

The lighting and atmosphere are very strong, and the characters have distinctive features without any obvious AI-generated faces. There is a slight flaw in the second shot, however: inside the vehicle, light comes from behind and to the right of the male protagonist's head, where there should be no light source. In the fourth shot, the bottom-right corner of the photo is distorted.

Overall, the effect is quite impressive.

Now, let's take a look at the performance of Happy Horse 1.0:

The child's photo doesn't look very realistic, with strange messy lines appearing on their left arm.

Overall, the two are on par with each other. Aside from some flaws in the details, both fulfilled the requirements of the prompts.

Snowmobile — 6-angle sequence

Let's start by looking at the performance of Kling 3.0, then move on to Happy Horse 1.0's performance.

The camera movement in Kling 3.0 is more natural, and the vehicle's motion feels more realistic. In contrast, Happy Horse 1.0's equipment looks too new to be believable, and in the third shot the tracks in the snow disappear.

Kling 3.0 comes out ahead.

Test 3: Two-Person Dialogue & Interaction

Terrace couple — 4-line scene

Let's start by reviewing the performance of Kling 3.0, then move on to Happy Horse 1.0's performance:

Kling 3.0 features beautiful coloring, close-up shots that align with the prompts, richer facial expressions, more accurate lip-syncing, and more distinctive character appearances.

Happy Horse 1.0 falls short in camera performance compared to Kling 3.0. For the male character's first line, the lip-sync is quite blurry.

In this round, Kling 3.0 delivers a better performance.

Madrid street — asking for directions

Let's first review the performance of Kling 3.0, and then move on to Happy Horse 1.0:

Both models handle the Spanish dialogue well. In Kling 3.0's video, the white-haired store clerk's movements appear unnatural, as he keeps pointing at the tourist.

In this case, Happy Horse 1.0 delivers more natural actions—the female tourist reads Spanish from her phone, and the white-haired clerk's movements are also more natural.

Happy Horse 1.0 comes out ahead in this round.

Test 4: Complex Multi-Character Scenes

Garden run — epic ensemble scene

First, the video from Kling 3.0, followed by the video from Happy Horse 1.0:

Happy Horse 1.0 demonstrates stronger adherence to the prompts, successfully capturing scenes like 'At the 8 seconds mark...she reaches back to take his hand as the two of them sprint forward together,' as well as 'In the final three seconds...their figures gradually filling the center of the frame.'

In contrast, Kling 3.0 consistently maintains a side-tracking shot throughout.

Overall, neither model performs particularly well, which might partially be due to the prompt being insufficiently detailed. That said, Happy Horse 1.0 delivers a relatively better performance than Kling 3.0 in this round.

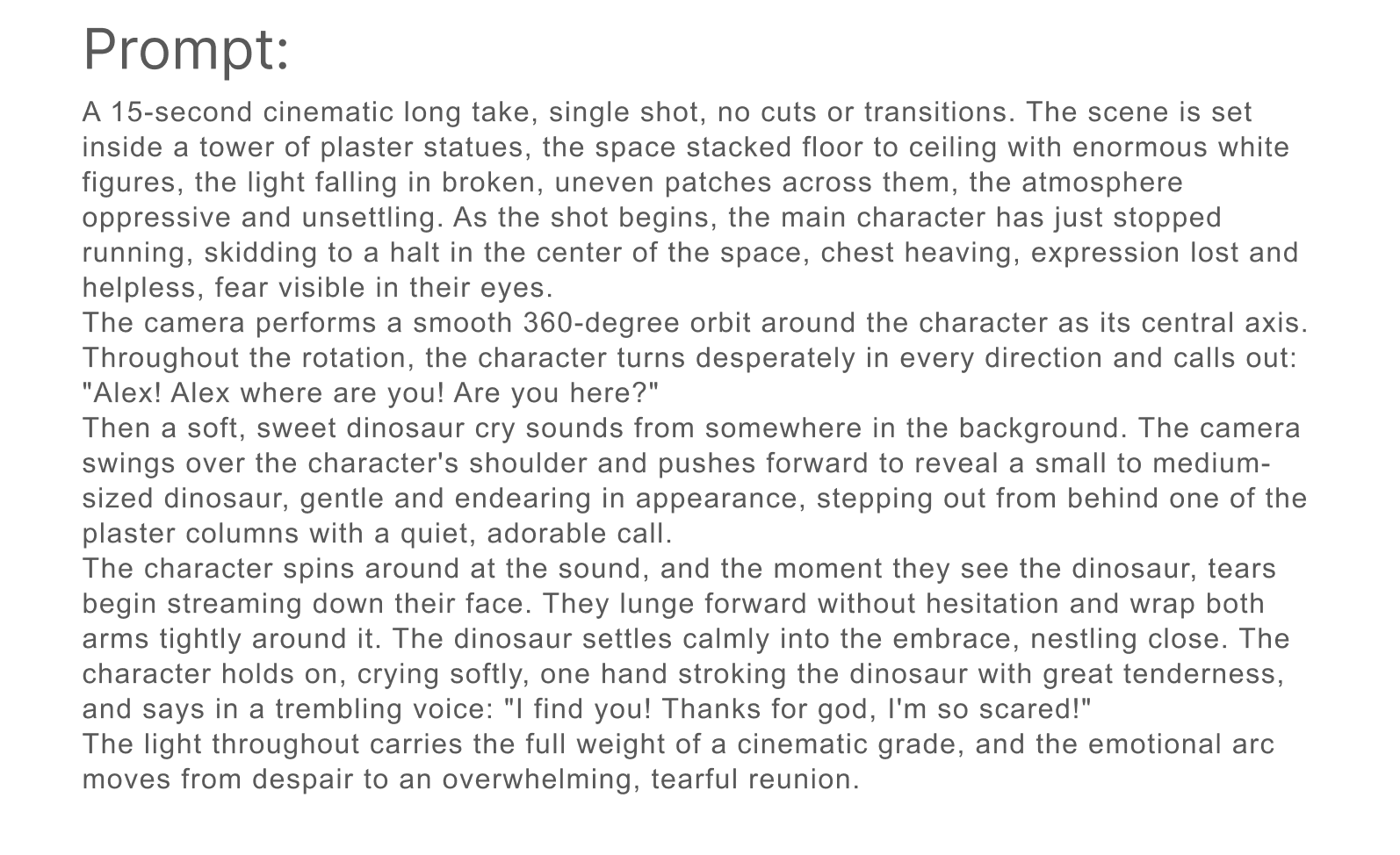

Plaster statue tower — reunion with a dinosaur

Similarly, let's first look at Kling 3.0, and then at Happy Horse 1.0:

Kling 3.0's overall visuals look more realistic and cinematic, and the content adheres to the prompts. In contrast, Happy Horse 1.0's little dinosaur fails to emerge from behind the sculpture, which not only deviates from the prompts but also makes the storyline inconsistent with common sense.

In this round, Kling 3.0 is the winner.

Happy Horse 1.0 or Kling 3.0: Which One Fits Your Workflow?

Happy Horse 1.0 excels in detail rendering, action interaction, adherence to prompts, and generation speed.

Kling 3.0 performs better in camera design, visual quality, and environmental sound effects.

When rapid generation, high iteration, or content focused on character actions and interactions is needed (e.g., short dramas, social content, product demonstrations), choose Happy Horse 1.0.

When intricate camera design or content demanding high visual quality and atmospheric immersion is required (e.g., commercials, brand promotions, film previews), choose Kling 3.0.

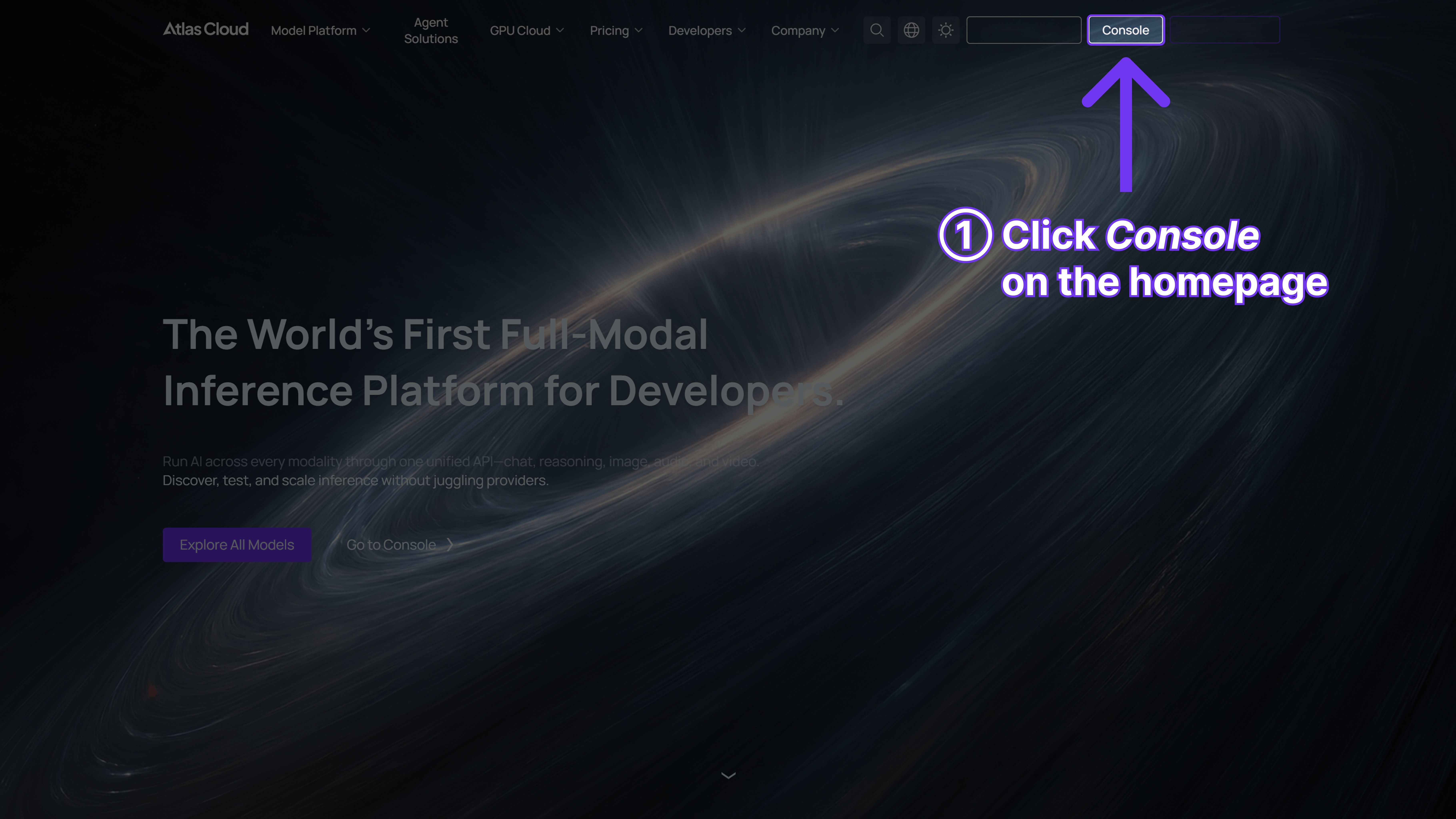

Running Both Models on Atlas Cloud

What is Atlas Cloud?

It’s a platform that simplifies AI by giving you access to 300+ top models in one place—text, images, video, and more.

Who’s it for?

- Developers who want easy, affordable AI access.
- Teams handling projects that need AI across multiple areas.
- Businesses needing reliable AI for important work.
- People using tools like ComfyUI and n8n.

Why choose it?

- One API lets you use everything with a single key.
- Clear pricing, no surprises, and low costs.
- Built for enterprise: stable, secure, and supported by experts.
- Works with the tools you already have.
- Your data stays safe and meets compliance needs.

How does it compare?

- Fal.ai: Atlas has more models and better prices.
- Wavespeed: Atlas costs less and includes enterprise support.
- Kie.ai: Atlas is clearer on pricing and offers a bigger selection.
- Replicate: Atlas has more models and better prices.
- Other providers (like OpenAI): Atlas combines everything in one simple platform.

How to Use Happy Horse 1.0 on Atlas Cloud

Atlas Cloud lets you use models side by side — first in a playground, then via a single API.

Method 1: Use directly in the Atlas Cloud playground

Click the link below to use it in the playground.

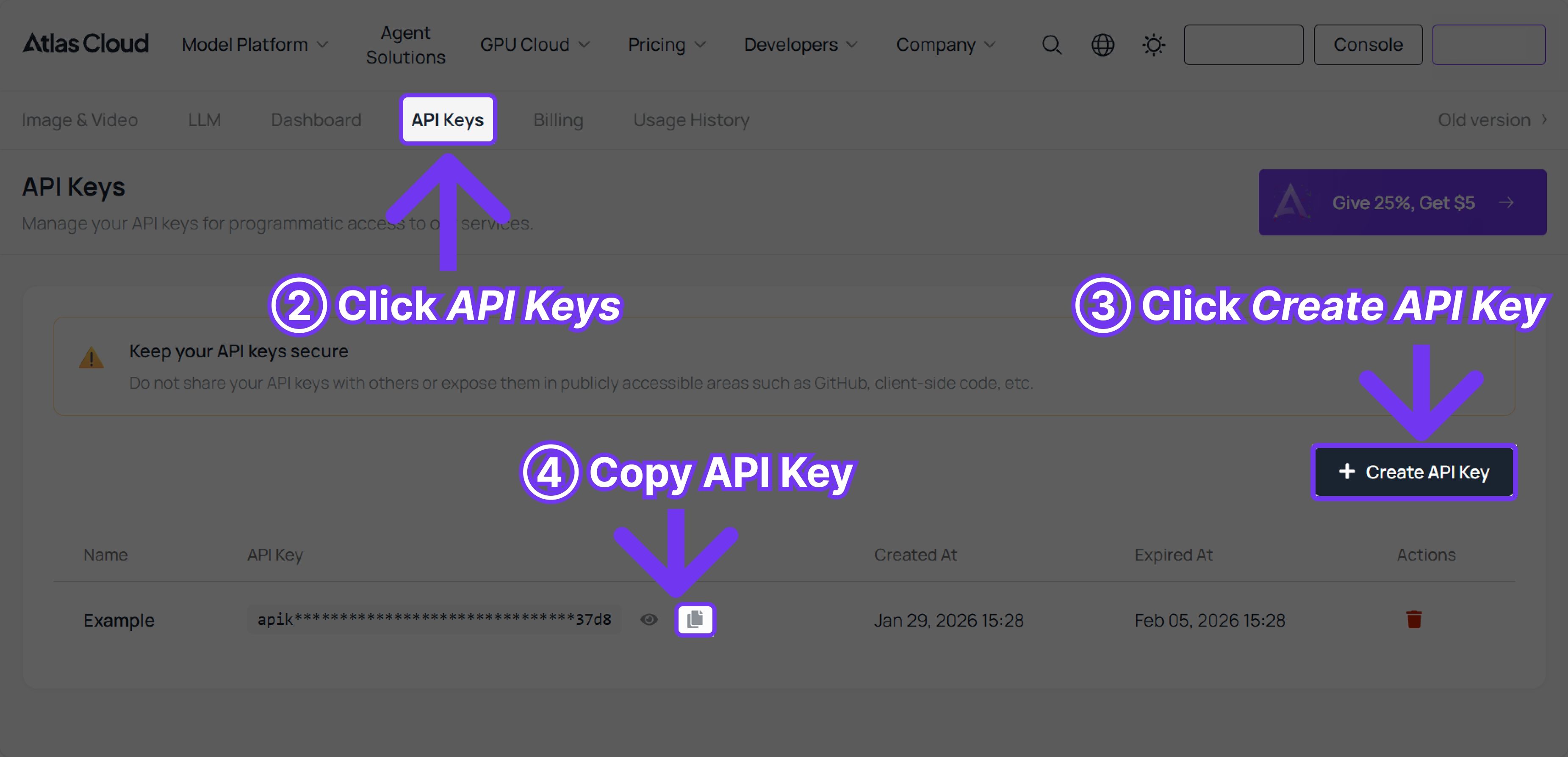

Method 2: Access via API

Step 1: Get your API key

Create an API key in your console and copy it for later use.

Step 2: Check the API documentation

Review the endpoint, request parameters, and authentication method in our API docs.

Step 3: Make your first request (Python example)

Example: generate a video with Happy Horse 1.0 (Text to Video)

```python
import os
import time

import requests

# Read the API key from the environment. A literal "$ATLASCLOUD_API_KEY"
# inside a Python string is never expanded by the shell.
API_KEY = os.environ["ATLASCLOUD_API_KEY"]

# Step 1: Start video generation
generate_url = "https://api.atlascloud.ai/api/v1/model/generateVideo"
headers = {
    "Content-Type": "application/json",
    "Authorization": f"Bearer {API_KEY}",
}
data = {
    "model": "alibaba/happyhorse-1.0/text-to-video",  # Required. Model name. options: alibaba/happyhorse-1.0/text-to-video
    "prompt": "A lone traveler walks slowly across a vast desert at sunset, golden light casting long shadows on the rippled sand dunes. The wind gently lifts fine grains of sand into the air, creating a soft, cinematic haze. The camera follows from behind at a low angle, gradually circling to reveal the traveler’s silhouette against the glowing horizon. Subtle lens flare, ultra-realistic lighting, shallow depth of field, 4K cinematic quality, slow motion, highly detailed textures, atmospheric, dramatic mood.",  # Required. Text prompt describing the video content
    "resolution": "1080P",  # Output video resolution. options: 720P | 1080P
    "ratio": "16:9",  # Aspect ratio of the generated video. options: 16:9 | 9:16 | 1:1 | 4:3 | 3:4
    "duration": 5,  # Video duration in seconds. (min: 3, max: 15)
    "seed": -1,  # Random seed for video generation. (min: -1, max: 2147483647)
}

generate_response = requests.post(generate_url, headers=headers, json=data)
generate_result = generate_response.json()
prediction_id = generate_result["data"]["id"]

# Step 2: Poll for result
poll_url = f"https://api.atlascloud.ai/api/v1/model/prediction/{prediction_id}"


def check_status():
    while True:
        response = requests.get(poll_url, headers={"Authorization": f"Bearer {API_KEY}"})
        result = response.json()

        if result["data"]["status"] in ["completed", "succeeded"]:
            print("Generated video:", result["data"]["outputs"][0])
            return result["data"]["outputs"][0]
        elif result["data"]["status"] == "failed":
            # .get avoids a KeyError when the response carries no error message
            raise Exception(result["data"].get("error") or "Generation failed")
        else:
            # Still processing, wait 2 seconds
            time.sleep(2)


video_url = check_status()
```
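The polling loop in the example runs until the job finishes or fails, so a stuck job would block forever. Below is a minimal sketch of a bounded version. The helper name `poll_until_done` and its parameters are our own illustration, not part of the Atlas Cloud API, and the response shape (`data.status`, `data.outputs`, `data.error`) is assumed to match the example above.

```python
import time
from typing import Callable


def poll_until_done(
    fetch_status: Callable[[], dict],
    timeout_s: float = 300.0,
    interval_s: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Call fetch_status() until the job completes, fails, or times out.

    fetch_status is a hypothetical callable that returns the decoded JSON
    body of one poll request; injecting it keeps this helper testable
    without network access.
    """
    deadline = clock() + timeout_s
    while True:
        data = fetch_status()["data"]
        status = data.get("status")
        if status in ("completed", "succeeded"):
            return data["outputs"][0]
        if status == "failed":
            raise RuntimeError(data.get("error") or "Generation failed")
        if clock() >= deadline:
            raise TimeoutError(f"Job still '{status}' after {timeout_s} seconds")
        sleep(interval_s)
```

With the variables from the example, one way to call it would be `poll_until_done(lambda: requests.get(poll_url, headers=headers).json())`. The 300-second default is an arbitrary choice; adjust it to the clip lengths you generate.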

Happy Horse 1.0 & Kling 3.0: Frequently Asked Questions

Q1: Which is better, Happy Horse 1.0 or Kling 3.0?

We ran both through nine identical prompts. Neither model swept the other. Happy Horse was quicker and stuck closer to what we actually typed. Kling's output had better visual instincts, with shots that felt composed rather than generated. Which one matters more comes down to the project.

Q2: Who made Happy Horse 1.0?

Alibaba, though they stayed quiet about it for a while. The model came from a team called Future Life Lab inside Alibaba's Token Hub division. Zhang Di, the engineer behind Kling 1.0 and 2.0 at Kuaishou, rejoined Alibaba in late 2025 and led its development.

Q3: How long does Happy Horse 1.0 take to generate a video?

About 38 seconds for 1080p on an H100. The short version: DMD-2 distillation cuts the denoising process to 8 steps. Most models need far more. That's where the speed comes from.

Q4: What is Kling 3.0's AI Director feature?

Rather than generating one continuous shot, it breaks your prompt into a sequence of cuts — different angles, different framing — and keeps the character looking consistent throughout. The catch is it makes its own decisions about which shots to use, so if your prompt specifies a particular camera move, the model may go a different direction anyway.

Q5: How much do Kling 3.0 and Happy Horse 1.0 cost?

On Atlas Cloud, Kling 3.0 is priced at $0.095 per second. Happy Horse is $0.14 per second at 720p. There's no monthly fee on either. The bill reflects exactly what you rendered.
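Since billing is per rendered second, a rough budget is just rate times duration times clip count. The sketch below uses the starting rates quoted in this article; the dictionary keys and the `estimate_cost` helper are our own labels, and actual rates may differ by resolution or mode.

```python
from decimal import Decimal

# Starting per-second rates quoted in this article for Atlas Cloud (USD).
# The keys are illustrative labels, not API model identifiers.
RATE_PER_SECOND = {
    "happy-horse-1.0": Decimal("0.14"),  # quoted at 720p
    "kling-3.0": Decimal("0.095"),
}


def estimate_cost(model: str, duration_s: int, clips: int = 1) -> Decimal:
    """Estimate the USD cost of rendering `clips` videos of `duration_s` seconds."""
    if not 3 <= duration_s <= 15:
        raise ValueError("duration must be between 3 and 15 seconds")
    if clips < 1:
        raise ValueError("clips must be at least 1")
    return RATE_PER_SECOND[model] * duration_s * clips


if __name__ == "__main__":
    for name in RATE_PER_SECOND:
        print(name, "15 s clip:", estimate_cost(name, 15), "USD")
```

At these rates, a single 15-second clip works out to 2.10 USD on Happy Horse 1.0 and 1.425 USD on Kling 3.0.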

Q6: What generation modes does Happy Horse 1.0 support?

The model handles four input types: text-to-video, image-to-video, reference-to-video, and video editing. Maximum output is 1080p. For aspect ratio, it covers 16:9, 9:16, 1:1, 4:3, and 3:4.
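To catch unsupported settings before a request is billed, the limits above can be checked client-side. This is a minimal sketch for the text-to-video mode only: `build_t2v_payload` is a hypothetical helper of ours, and the field names and ranges are taken from the Python example in this article rather than from a verified schema.

```python
ALLOWED_RESOLUTIONS = {"720P", "1080P"}
ALLOWED_RATIOS = {"16:9", "9:16", "1:1", "4:3", "3:4"}
MAX_SEED = 2147483647


def build_t2v_payload(
    prompt: str,
    resolution: str = "1080P",
    ratio: str = "16:9",
    duration: int = 5,
    seed: int = -1,
) -> dict:
    """Return a request body for Happy Horse 1.0 text-to-video, or raise ValueError."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if resolution not in ALLOWED_RESOLUTIONS:
        raise ValueError(f"resolution must be one of {sorted(ALLOWED_RESOLUTIONS)}")
    if ratio not in ALLOWED_RATIOS:
        raise ValueError(f"ratio must be one of {sorted(ALLOWED_RATIOS)}")
    if not 3 <= duration <= 15:
        raise ValueError("duration must be between 3 and 15 seconds")
    if not -1 <= seed <= MAX_SEED:
        raise ValueError(f"seed must be between -1 and {MAX_SEED}")
    return {
        "model": "alibaba/happyhorse-1.0/text-to-video",
        "prompt": prompt,
        "resolution": resolution,
        "ratio": ratio,
        "duration": duration,
        "seed": seed,
    }
```

The returned dictionary can be passed as the `json=` argument of the generation request shown earlier.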