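World-leading large language models.

For every conversation.

Enterprise-grade large language models that strengthen reasoning, coding, and natural language processing.

Explore our extensive library of LLMs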

Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Qwen3.5 122B A10B

Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Qwen3.5 35B A3B

Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Qwen3.5 27B

Qwen3 Coder represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Qwen3 Coder Next

Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

Qwen3.5 397B A17B

MiniMax-M2.5 is a lightweight, state-of-the-art large language model optimized for coding, agentic workflows, and modern application development. With only 10 billion activated parameters, it delivers a major jump in real-world capability while maintaining exceptional latency, scalability, and cost efficiency.

MiniMax M2.5

GLM-5 is Z.AI’s latest flagship model, featuring upgrades in two key areas: enhanced programming capabilities and more stable multi-step reasoning/execution. It demonstrates significant improvements in executing complex agent tasks while delivering more natural conversational experiences and superior front-end aesthetics.

GLM 5

Kimi K2.5 is an advanced large language model with strong reasoning and upgraded native multimodality. It natively understands and processes text and images, delivering more accurate analysis, better instruction following, and stable performance across complex tasks. Designed for production use, Kimi K2.5 is ideal for AI assistants, enterprise applications, and multimodal workflows that require reliable and high-quality outputs.

Kimi K2.5

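The Atlas Cloud advantage:

Speed, scale, and cost savings

Our model library is not just the largest. It is also the most cost-efficient, reliable, and production-ready, with proven performance that is backed by data.

300+ models, one unified API

Multimodal, open-source, and proprietary models: all available through one consistent endpoint.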

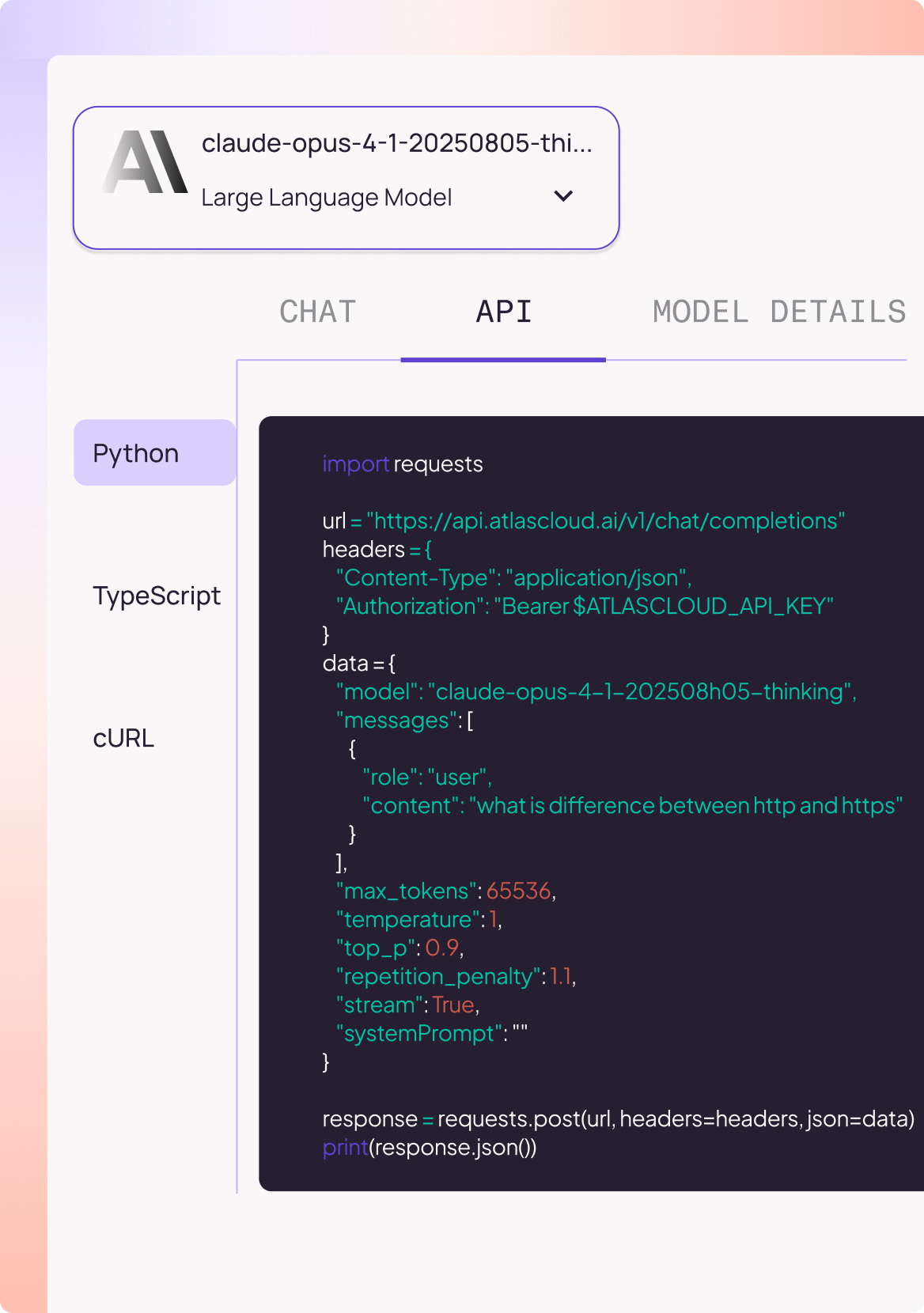

Serverless API access

Get started instantly with Python, TypeScript, or cURL. No infrastructure setup required.
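As a rough illustration of what calling many models through one endpoint could look like from Python, here is a minimal sketch using only the standard library. This page does not document the request format, so the URL (`api.example.com`), the chat-style JSON body, the bearer-token header, and the model identifier strings are all assumptions made for illustration. Check the official API reference for the real values.

```python
import json
import urllib.request

# Hypothetical placeholder: the real endpoint is not given on this page.
BASE_URL = "https://api.example.com/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build a POST request for an assumed chat-style JSON API.

    Only the `model` field changes between models; the endpoint and the
    rest of the payload stay the same, which is the point of a unified API.
    """
    payload = {
        "model": model,  # assumed identifier format
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        BASE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


if __name__ == "__main__":
    # Building requests needs no network access; only send() does.
    for model_id in ("model-a", "model-b"):  # hypothetical model IDs
        req = build_chat_request(model_id, "Hello!", api_key="YOUR_API_KEY")
        print(req.get_method(), req.full_url, json.loads(req.data)["model"])
```

Keeping request construction separate from sending makes it possible to inspect or log the payload before any network call is made.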

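Proven performance at scale

Over 10 million API calls per month, stable throughput of 70+ TPS, and deployment across 12 global regions.

Transparent, flexible pricing

Pay-as-you-go pricing. Up to 50% off for enterprise customers.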