We ran 6 scenarios, 12 videos, and one shared prompt set to find out.

On April 10, Alibaba's ATH team released Happy Horse 1.0. Within days it claimed the top spot on Artificial Analysis's video model leaderboard with a T2V Elo of 1389 and an I2V Elo of 1416, leading ByteDance's Seedance 2.0 by roughly 115 points on the text-to-video side.

If you work in AI video content, product selection, or industry research, the immediate question is obvious: does this ranking hold up under real workloads?

We spent a week finding out. Same prompts, same reference assets, same evaluation framework — Happy Horse 1.0 and Seedance 2.0 run side by side across 6 scenario types, 12 videos total. This article covers three things: what actually got Happy Horse to the top, the evaluation methodology we used (a full white paper is coming), and what the 6 scenarios revealed that the leaderboard doesn't show.

By the end you'll have a clear picture of when to reach for Happy Horse (HH), when to reach for Seedance (SD), and why running this kind of comparison through Atlas Cloud's One API (one key, one SDK, one model string swap) is the most practical way to do model selection right now.

Why Happy Horse 1.0 Leads the Elo Leaderboard

A few facts worth knowing before the test results.

| | Happy Horse 1.0 | Seedance 2.0 |
|---|---|---|
| Team | Alibaba ATH | ByteDance |
| Release | Unveiled April 10, 2026; live on Atlas Cloud April 27 | Generally available |
| Architecture | 15B unified Transformer (joint audio-video generation, no cross-attention) | Mixture-of-experts architecture |
| Native Audio | ✅ | ✅ |
| Multilingual | Lip sync in 7 languages (Mandarin / Cantonese / English / Japanese / Korean / German / French) | Prompt input in 6 languages (Chinese / English + Japanese / Indonesian / Spanish / Portuguese) |
| Generation Speed | ~38s per clip at 1080p on a single H100 | n/a |
| Artificial Analysis Elo | T2V 1389 (ranked #1) / I2V 1416 (ranked #1) | T2V ~1274 |

Three things genuinely earned it the top ranking.

Unified Transformer architecture. Audio and video are generated in the same sequence, not stitched together in post. Lip sync, audio timing, and edit points are modeled simultaneously. This matters because the "generate video first, add audio later" pipeline approach tends to produce visible misalignment — HH avoids that at the architecture level.

Native 7-language lip sync. Mandarin, Cantonese, Japanese, Korean, German, French, English. This is the broadest multilingual lip sync coverage in any publicly available video model right now, and it has real value for global content production.

Visual ceiling. Looking at individual frames from our test runs, HH's skin texture, single-frame aesthetics, and cinematic color grading are genuinely ahead of SD. Artificial Analysis uses human blind evaluation, and human evaluators are highly sensitive to "which one looks more like a film." That's the main explanation for the Elo gap.

But Elo is a single aggregate score. It tells you who won more head-to-head comparisons — not where they won, or where they didn't. A total score hides the real structure underneath. That's the whole reason we built a proper evaluation framework.
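To put the roughly 115-point T2V gap in perspective, here is a minimal sketch that converts a rating difference into an expected head-to-head win rate. It assumes the standard Elo logistic formula with a 400-point scale; Artificial Analysis's exact rating method isn't described in this article, so treat the result as an approximation.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for A under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# T2V ratings quoted above: Happy Horse 1.0 at 1389, Seedance 2.0 at roughly 1274.
hh_vs_sd = expected_win_rate(1389, 1274)
print(f"Expected HH win rate in blind T2V pairings: {hh_vs_sd:.0%}")
```

Under that assumption, the gap corresponds to HH winning about two out of three blind pairings. That still leaves roughly a third of comparisons going the other way, and the score says nothing about which prompts those were.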

AI Video Model Evaluation Framework

We're compiling a full AI Video Model Evaluation White Paper. Here's the core methodology.

What existing benchmarks do (and don't do)

| System | Strengths | Limitations |
|---|---|---|
| VBench / VBench-2.0 (academic benchmark) | Granular dimensions (16 + 18 sub-dimensions), covers physics and commonsense | Complex setup, requires GPU to run, not intuitive |
| Artificial Analysis Elo (blind ranking) | Reflects human subjective preference, cross-model comparable | Black box, can't pinpoint weaknesses, single aggregate score |
| FVD / CLIP Score (quantitative metrics) | Objective, scriptable | Limited correlation with human perception |
| Demo cherry-picking (industry norm) | High visual impact | Not reproducible, severe selection bias |

The VBench-2.0 paper, published in March 2025, was blunt: even the strongest models at the time scored around 50% on physical plausibility. The gold standard is still evolving, so a single leaderboard score is not a reliable basis for model selection.

Five evaluation dimensions

| Dimension | Evaluation Question | Key Sub-Items |
|---|---|---|
| Prompt-Video Alignment | Does the output accurately follow the instructions? | Subject / Action / Scene / Style / Quantity & Spatial Relations |
| Visual Quality | Is each individual frame excellent? | Resolution / Aesthetics / Rendering / Detail |
| Motion & Physics | Does the motion obey physical laws? | Naturalness / Physics / Dynamic Range / Camera Movement Accuracy |
| Temporal Consistency | Are frames and shots coherent over time? | Subject Identity / Scene / Flickering / Multi-shot Consistency |
| Multimodal Capabilities | What can the model do beyond visuals? | Audio / Audio-Visual Sync / Lip Sync / Multilingual / Style Control |

Dimension 5 — multimodal capabilities (audio/lip sync/multilingual/style control) — is where model differentiation is playing out in 2026. It's also the main card HH is holding.

Three-layer method

| Layer | Use Case | Tools |
|---|---|---|
| L1 Objective Metrics | Large-scale screening, CI/CD | FVD / CLIP-Score / LAION Aesthetic / DINO / Optical Flow / SyncNet / MLLM-as-Judge |
| L2 Standardized Task Set | Tutorial evaluation, product comparison, white paper publication | VBench prompt suite / Atlas Cloud Prompt Hub / custom dimension-targeted prompts |
| L3 Subjective Blind Review | Final decisions, public-facing release | Double-blind Elo + five-dimension scoring card |

Multiple papers from 2025–2026 report that MLLM-as-Judge (using a multimodal model such as Claude or GPT-4V as the evaluator) correlates significantly better with human scores than purely quantitative metrics do. That's the backbone of our L1 layer.
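As a rough illustration of what an MLLM-as-Judge step can look like, the sketch below builds a rubric prompt over the five dimensions and validates the judge's JSON reply. Everything here is hypothetical: the dimension keys, the 1 to 5 scale, and the `ask_model` callable (a stand-in for whatever multimodal client you use) are assumptions for illustration, not the toolchain used in this evaluation.

```python
import json
from typing import Callable

# Hypothetical keys mirroring the five evaluation dimensions above.
DIMENSIONS = [
    "prompt_video_alignment",
    "visual_quality",
    "motion_physics",
    "temporal_consistency",
    "multimodal_capabilities",
]


def build_judge_prompt(generation_prompt: str) -> str:
    """Ask the judge for one integer score per dimension, returned as JSON only."""
    keys = ", ".join(f'"{d}"' for d in DIMENSIONS)
    return (
        "You are grading an AI-generated video against its prompt.\n"
        f"Generation prompt: {generation_prompt}\n"
        f"Return only a JSON object with integer scores from 1 to 5 for the keys: {keys}."
    )


def parse_judge_reply(reply: str) -> dict[str, int]:
    """Validate the reply so malformed judge output fails loudly."""
    scores = json.loads(reply)
    if not isinstance(scores, dict):
        raise ValueError("judge reply must be a JSON object")
    for dim in DIMENSIONS:
        value = scores.get(dim)
        if type(value) is not int or not 1 <= value <= 5:
            raise ValueError(f"missing or out-of-range score for {dim!r}: {value!r}")
    return {dim: scores[dim] for dim in DIMENSIONS}


def judge_video(
    generation_prompt: str,
    video_path: str,
    ask_model: Callable[[str, str], str],
) -> dict[str, int]:
    """ask_model(prompt, video_path) is a placeholder for a real multimodal client."""
    return parse_judge_reply(ask_model(build_judge_prompt(generation_prompt), video_path))
```

Keeping the model call behind a plain callable makes it possible to swap judges, or to stub one out in CI, without touching the rubric or the validation.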

Prompt selection tiers

The biggest source of controversy in comparative benchmarks isn't the metrics — it's the prompts. Our minimum bar and tier structure:

| Tier | Definition | When to Use |
|---|---|---|
| A (default) | Model-neutral, dimension-targeted prompt: one prompt run on both models | Primary evaluation standard |
| B (avoid) | Same theme, but each model uses its own Hub prompt | Not used for scoring; showcase reels only |

Why a single score misleads

Video models in 2026 aren't just "text-to-video." A model might support T2V, I2V, Reference-to-Video, Video Editing, native audio, and multilingual lip sync simultaneously — and perform very differently across those modes. Elo collapses that into one number. Our framework tags every evaluation with its modality and outputs a capability matrix, not a ranking.
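A small sketch of the difference between a single score and a capability matrix. The model names and scores below are illustrative placeholders chosen to make the point, not results from this evaluation, and the record layout is an assumption.

```python
from collections import defaultdict
from statistics import mean

# Placeholder records: (model, modality, dimension, score on a 1-5 scale).
records = [
    ("model_a", "T2V", "visual_quality", 5),
    ("model_a", "T2V", "prompt_video_alignment", 4),
    ("model_a", "video_edit", "prompt_video_alignment", 2),
    ("model_b", "T2V", "visual_quality", 4),
    ("model_b", "T2V", "prompt_video_alignment", 3),
    ("model_b", "video_edit", "prompt_video_alignment", 4),
]


def capability_matrix(rows):
    """Average scores per (model, modality, dimension) cell instead of per model."""
    cells = defaultdict(list)
    for model, modality, dimension, score in rows:
        cells[(model, modality, dimension)].append(score)
    return {key: mean(values) for key, values in cells.items()}


def single_score(rows, model):
    """The lossy alternative: one average per model."""
    return mean(score for m, _, _, score in rows if m == model)


matrix = capability_matrix(records)
for (model, modality, dimension), score in sorted(matrix.items()):
    print(f"{model:8} {modality:11} {dimension:24} {score}")
```

With these placeholder numbers both models average out to the same single score, while the matrix shows that one is ahead on T2V visuals and the other on video editing. That is the kind of structure a ranking hides.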

The full white paper will include a scoring card template, execution SOP, toolchain recommendations, and full academic references (VBench, Artificial Analysis, AIGCBench, LOVE, and others). The test results below were produced under this framework.

6 Scenarios: Where the Leaderboard #1 Loses

We selected 6 scenario types from Atlas Cloud's Prompt Hub — covering all five evaluation dimensions with balanced modality coverage. Unified parameters across all runs: 1080p / 16:9 / seed 42 / duration scaled to scenario complexity (5–15 seconds).
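The run setup can be sketched as a small job matrix: every scenario is paired with both models under the same shared parameters. The model strings, scenario identifiers, and field names are hypothetical. Per-scenario durations aren't listed in this article apart from the 15-second car chase, so the default duration below is a placeholder within the stated 5–15 second range.

```python
from dataclasses import dataclass
from itertools import product

# Shared settings quoted in the text; the key names are illustrative.
SHARED = {"resolution": "1080p", "aspect_ratio": "16:9", "seed": 42}

MODELS = ["happy-horse-1.0", "seedance-2.0"]  # hypothetical model strings
SCENARIOS = [
    "cave_exploration",
    "car_chase",
    "character_consistency",
    "talk_show",
    "narrative_reversal",
    "reference_fusion",
]


@dataclass(frozen=True)
class Job:
    scenario: str
    model: str
    resolution: str
    aspect_ratio: str
    seed: int
    duration_s: int


def build_jobs(durations: dict[str, int], default_duration_s: int = 5) -> list[Job]:
    """Pair every scenario with every model; only the model string differs per pair."""
    jobs = []
    for scenario, model in product(SCENARIOS, MODELS):
        duration = durations.get(scenario, default_duration_s)
        if not 5 <= duration <= 15:
            raise ValueError(f"{scenario}: duration {duration}s is outside 5-15s")
        jobs.append(Job(scenario=scenario, model=model, duration_s=duration, **SHARED))
    return jobs


jobs = build_jobs({"car_chase": 15})
print(len(jobs))  # 6 scenarios x 2 models
```

Freezing the job definition up front makes it easy to check that the two runs for a scenario really do differ in the model string and nothing else.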

Scenario 1: Cave Exploration — Visual Quality + Ambient Audio

Prompt: flashlight exploration of a limestone cave, illuminating wet rock walls and crystal reflections, beam passing through shallow water creating caustic light patterns, stalactites casting long shadows that shift with the light source. Ambient audio: dripping water, wet rock footsteps, enclosed-space breathing.

| Dimension | SD | HH |
|---|---|---|
| Caustic light physics | ✅ | ✅ |
| Wet rock highlights / mineral texture | Tends toward over-polished | More realistic ✅ (wins on stalactite structural detail) |
| Ambient audio | Dripping / footsteps / breathing: three distinct layers ✅ | Noticeable "AI quality," layers blended together |

HH wins on visuals, SD wins on audio. This scenario maps directly onto HH's leaderboard advantage — its visual detail is genuinely SOTA-level here.

Scenario 2: Hollywood Car Chase — Instruction Density

The prompt packs 7 distinct shot types into 15 seconds: aerial wide shot → low-angle ground tracking → hood POV → Dutch angle medium shot → ECU rear window → wide-angle side tracking → aerial pull-back.

| Dimension | SD | HH |
|---|---|---|
| 7-shot execution | 5/7 shots accurate ✅ | Only 2–3 of 7 shots accurate |
| Smoke / debris physics | Dense and realistic ✅ | Tends toward sparse |
| Three-layer audio (engine / tires / road surface) | Distinct ✅ | Mixed together |
| Semantic misfire | None observed | Rendered "aerial drone shot" as an actual drone flying into frame |

SD wins clearly. HH's "drone mistake" is a clean example of semantic alignment failure — it knows the word "drone" but can't tell whether it refers to a camera move or a physical object in the scene.

Scenario 3: Cross-Scene Character Consistency

Reference: a woman with long red hair, blunt bangs, white shirt, black tie. Task: walk from office to home, maintaining consistent appearance and natural emotional transition throughout.

One thing worth flagging here: we used R2V (Reference-to-Video), not I2V. I2V defaults to locking the reference image as the first frame, which forces the video to start from that image — you can't test cross-scene consistency that way. The distinction matters more than it might seem.

| Dimension | SD | HH |
|---|---|---|
| Facial features / hairstyle consistency | ✅ | ✅ |
| Wardrobe continuity | Single continuous take from office to home (artistic but abrupt) | Clean outfit change, jacket removed while tie retained ✅ |
| Emotional transition frames | Two-beat jump cut | Eyes closing + faint smile as "leaving work mode" transition ✅ |
| Visual texture | Leans clean and polished | Fine freckle detail, but noticeable "AI plastic" sheen |
| Narrative completeness | 3 scenes + father character included ✅ | Mother-daughter focus only |

Call it a draw with two different tradeoffs: SD delivers a single continuous take with clean execution; HH uses conventional cutting with finer detail but noticeable AI smoothing artifacts.

Scenario 4: Talk Show Dual-Character Dialogue — Multimodal Performance ⚡

This is the most instruction-dense scenario of the six. Three explicit rhythm markers in the prompt (lean forward / fake-thinking pause / shared-laugh punchline) each function as a discrete pass/fail checkpoint.

The prompt specifies a Tonight Show-style three-round exchange between a dog and a cat, closing with both characters in a full laugh.

| Dimension | SD | HH |
|---|---|---|
| Rhythm cue: "dog leans forward" | ✅ Executed | ❌ Completely static throughout |
| Rhythm cue: "cat fake-thinking pause" | ✅ ECU thinking expression delivered | ❌ Not captured |
| Shared-laugh closing shot | ✅ Cut to cat's laugh (punchline beat) | ⚠️ Cut to dog instead (wrong character) |
| Text faithfulness | ✅ | ✅ (the one dimension HH held) |
| Voice matching | ✅ Accurate | ⚠️ Accurate but mechanical |
| Bonus creativity | ✅ Proactively added talk show audience laughter: genre-appropriate fill beyond the prompt | None |
| Voice consistency | ✅ | ❌ Cat's final laugh shifted to a male voice |

SD wins comprehensively. The most interesting detail: SD added audience laughter that wasn't in the prompt. Talk show content has an expected format — a laugh track at reaction beats — and the model filled it in. That's not just instruction-following. It's understanding what this type of content is supposed to be.

HH stayed text-faithful but hit a hard failure on audio: the cat's laugh shifted into a male voice mid-clip. Long-range audio consistency is a real weakness.
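Treating the three rhythm markers as discrete checkpoints makes the result easy to tally. The sketch below encodes the three rhythm rows from the table above; the pass / partial / fail labels are our shorthand for ✅ / ⚠️ / ❌, and the data layout is an assumption for illustration.

```python
from collections import Counter

# Outcomes taken from the three rhythm-cue rows in the Scenario 4 table.
checkpoints = {
    "SD": {
        "dog leans forward": "pass",
        "cat fake-thinking pause": "pass",
        "shared-laugh closing shot": "pass",
    },
    "HH": {
        "dog leans forward": "fail",
        "cat fake-thinking pause": "fail",
        "shared-laugh closing shot": "partial",  # cut to the wrong character
    },
}


def tally(results: dict[str, str]) -> Counter:
    """Count how many checkpoints landed in each outcome bucket."""
    return Counter(results.values())


for model, results in checkpoints.items():
    counts = tally(results)
    print(f"{model}: {counts['pass']}/{len(results)} passed, {counts['partial']} partial")
```

Reporting partials separately, rather than folding them into a weighted score, keeps the tally from implying more precision than three checkpoints can support.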

Scenario 5: Romantic Scene → Premeditated Reversal — Video Editing ⚡⚡

Source video: a foreign man says in English, "The moon is beautiful tonight, a shame I can't share it with you." A Chinese woman responds in Mandarin, "Anywhere feels like a beautiful view when I'm with you." Rooftop at night, soft atmosphere.

Editing prompt: full narrative reversal. The man's expression snaps from warm to cold. He pushes the woman off the roof without hesitation. Mid-fall, she screams in Mandarin: "You were lying to me from the start!" — not fear, disbelief. He stands at the edge with a cold smile and says quietly in English: "This is what you owed my family."

Four-layer test: expression reversal + key physical action + bilingual dialogue replacement + visual tone shift.

| 4-Layer Test | SD | HH |
|---|---|---|
| Man's expression reversal | ✅ Eye shift + cold smile | ❌ Expression reads as grief instead |
| Woman's reaction: disbelief not fear | ✅ Mid-fall rage and screaming | ❌ Textbook fear expression (opposite of the prompt) |
| Push-off-the-roof action | ✅ Actually happened (aerial fall shot + cityscape tilt) | ❌ Never pushed; woman still standing |
| Visual tone shift | ✅ | ⚠️ Held baseline |
| Bilingual dialogue generation | ✅ | ✅ (the one dimension HH held) |
| Voice realism | ✅ | ❌ Heavy AI quality |

SD executes the full scenario. HH fails on every layer except the dialogue.

HH parsed the entire prompt as "add some dialogue and some emotional conflict." The narrative structure didn't move. It handles surface instructions (what to say) but not narrative-level instructions (how the story goes).

Scenario 6: Multi-Modal Reference Fusion — Elevator Thriller ⚡⚡⚡

Input: 3 reference images (man's appearance / elevator interior / hallway) + 1 reference video (camera movement + facial expression). Task: fuse all 4 inputs and produce a sequence of fear → Hitchcock zoom → exiting elevator → mechanical arm tracking pan.

The two models use different endpoints — HH uses video-edit, SD uses reference-to-video — but both accept composite image-plus-video input. The endpoint names are asymmetric; the capability is equivalent. That's actually a useful proof point for what One API's abstraction layer does.

| Evaluation Item | SD | HH |
|---|---|---|
| Camera movement execution | ✅ Solid | ✅ Solid |
| Scene swap (elevator / hallway) | ✅ | ✅ |
| Man's identity matches img1 | ✅ Executed perfectly | ❌ No match; completely different face |
| Character consistency throughout | ✅ Stable | ⚠️ Appears to drift in the second half |

SD wins cleanly.

HH replicated the pose from the reference image (the hand-on-throat motion) but generated a completely different face. It copied the gesture, not the identity. This is structurally the same failure as Scenario 5: surface-level imitation works, but semantic depth doesn't.

Happy Horse vs Seedance: Instruction Comprehension Gap

One consistent structure emerged:

| Instruction Level | HH | SD |
|---|---|---|
| Surface-level instructions (dialogue, pose, parameters, scene elements) | ✅ Executes | ✅ Executes |
| Semantic-level instructions (narrative reversal, character identity, timing) | ❌ Fails | ✅ Executes |
| Genre convention fill-in (auto-adding talk show audience laughter, etc.) | ❌ | ✅ Proactively adds |

This isn't really a question of which model is "better." They operate at different instruction comprehension levels.

Give HH a line of dialogue to deliver, a pose to copy, a scene element to reproduce — it handles the detail well, and the visual texture is often superior. Ask it to reverse a narrative arc, maintain a specific person's identity across shots, or follow a sequence of rhythm cues — it stops at "add the surface elements" without getting to "execute what you actually meant."

SD works the other way. Less precise on surface texture, but more reliable on overall narrative, identity fidelity, and timing — and it will proactively fill in genre-appropriate elements the prompt didn't specify.

This explains the Elo result too. Artificial Analysis's blind evaluation is highly sensitive to "which looks more cinematic." HH's visual ceiling (skin texture, color grading, single-frame aesthetics) is real, and it shows in head-to-head comparisons. But Elo doesn't expose semantic comprehension gaps. Both things — the #1 ranking and the failure modes — are true simultaneously.

Happy Horse vs Seedance: Which Model Fits Your Use Case

| Scenario Type | Pick | Reason |
|---|---|---|
| Single best-looking shot (visual quality ceiling) | HH | Skin texture / cinematic color / single-frame aesthetics |
| Localized dialogue generation / translation / replacement | HH | Reliable text faithfulness |
| 7-language lip sync content | HH | Only openly available model covering this many languages |
| Mood pieces / emotional shorts / single-shot clips | HH | Finer visual detail + smooth emotional transitions |
| Multi-shot scripted video (car chase / talk show / action) | SD | Reliable shot-cut execution |
| Narrative reversal / video editing | SD | Semantic-level instruction comprehension |
| Cross-scene character consistency + identity fidelity | SD | Reference inputs carry over the person's identity, not just the pose |
| High instruction density / prompt-literal execution | SD | Instruction-aligned by default |

One API: Switch Models by Changing One String

The first engineering problem we hit running this evaluation: HH and SD use different SDKs, different endpoints, and different auth methods. Adapting the client code alone would mean maintaining a separate implementation per model.

That's why Atlas Cloud put both Seedance 2.0 and Happy Horse 1.0 behind the same model pool and the same One API. One key, one SDK, one model string.

The Scenario 6 detail is worth noting again — HH's endpoint is called video-edit, SD's is reference-to-video. Different names, equivalent capability (both accept composite image+video input). One API abstracts that difference away. Developers write one implementation.

All 12 videos in this evaluation were generated through Atlas Cloud One API — same key, same SDK, same prompt strings, one field changed. Running a cross-model comparison has never been this low-friction.
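The idea of swapping one string can be sketched without any network calls. This is not Atlas Cloud's actual API: the model strings, payload field names, and the routing table are hypothetical, and the only details taken from this article are the two endpoint names and the fact that both accept composite image-plus-video input.

```python
# Hypothetical routing table; only the endpoint names come from the article.
ENDPOINT_BY_MODEL = {
    "happy-horse-1.0": "video-edit",
    "seedance-2.0": "reference-to-video",
}


def build_request(model: str, prompt: str, image_refs: list[str], video_ref: str) -> dict:
    """Assemble one request shape; the caller changes only the model string."""
    if model not in ENDPOINT_BY_MODEL:
        raise KeyError(f"unknown model string: {model!r}")
    return {
        "endpoint": ENDPOINT_BY_MODEL[model],  # resolved by the abstraction layer
        "payload": {
            "model": model,
            "prompt": prompt,
            "images": list(image_refs),
            "video": video_ref,
        },
    }


shared_inputs = {
    "prompt": "elevator thriller sequence",
    "image_refs": ["man.png", "elevator.png", "hallway.png"],
    "video_ref": "camera_reference.mp4",
}
hh_request = build_request("happy-horse-1.0", **shared_inputs)
sd_request = build_request("seedance-2.0", **shared_inputs)
```

The two requests carry identical inputs and differ only in the model field and the endpoint it resolves to, which is what keeps a side-by-side comparison honest.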

Using the API

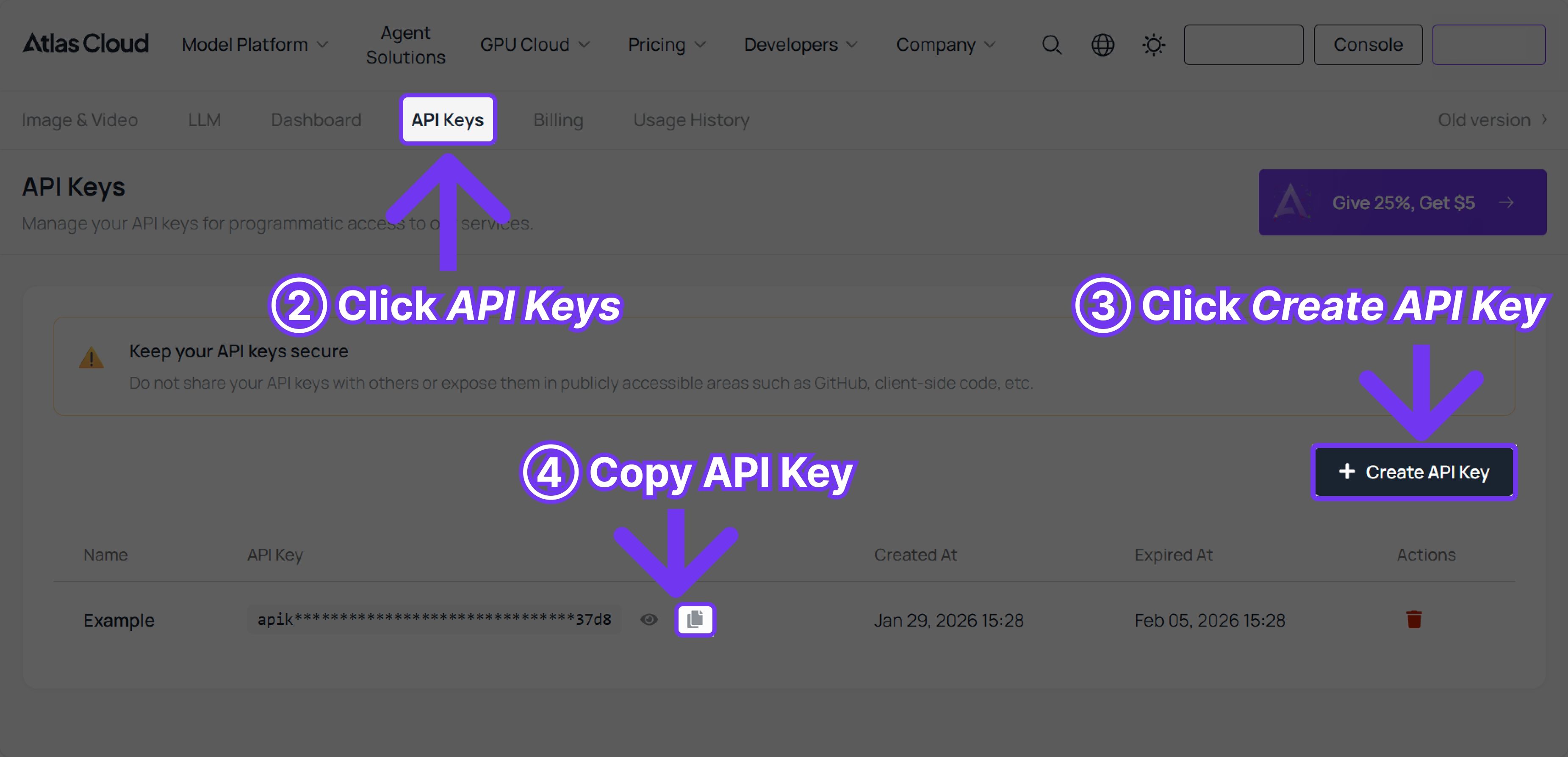

Step 1: Get your API key from the console.

Step 2: Check the API docs for endpoint details, request parameters, and authentication.

A Note on Honesty in Benchmarking

Before writing this, we had a real hesitation: publishing findings like "HH rendered the push-off-a-building scene as a conversation" or "HH generated the wrong person's face" — would that be unfair?

The value of an evaluation white paper is exactly that it's honest. Happy Horse is genuinely strong, and its double #1 on the Elo leaderboard isn't noise. Its failure scenarios tell you precisely when to pick the other option, which is the whole point of a comparative benchmark.

Coming next:

- Full White Paper v1.0: the complete five-dimension × three-layer methodology with scoring card templates, execution SOP, and full academic references (VBench 2.0, Artificial Analysis, AIGCBench, LOVE)
- Complete scoring matrix: 5 dimensions × 6 scenarios × 2 models, 60 cells scored individually
- Evaluation toolchain: L1 automation scripts including an MLLM-as-Judge implementation
- Additional models: Veo, Wan, Kling, and others added to the comparison matrix

If you're doing video model selection, drop your use case in the comments. The white paper v1.0 will include the comparison dimensions readers most asked about.

All evaluation samples, original prompts, extracted frames, and scoring details will be published alongside the white paper. The full evaluation was completed through Atlas Cloud One API on a single interface.