OpenAI LLM Models

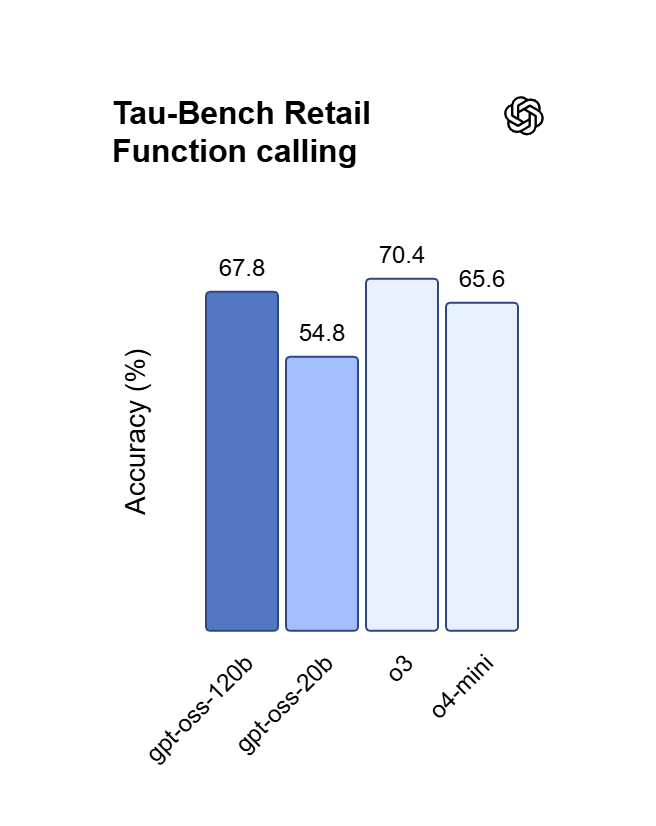

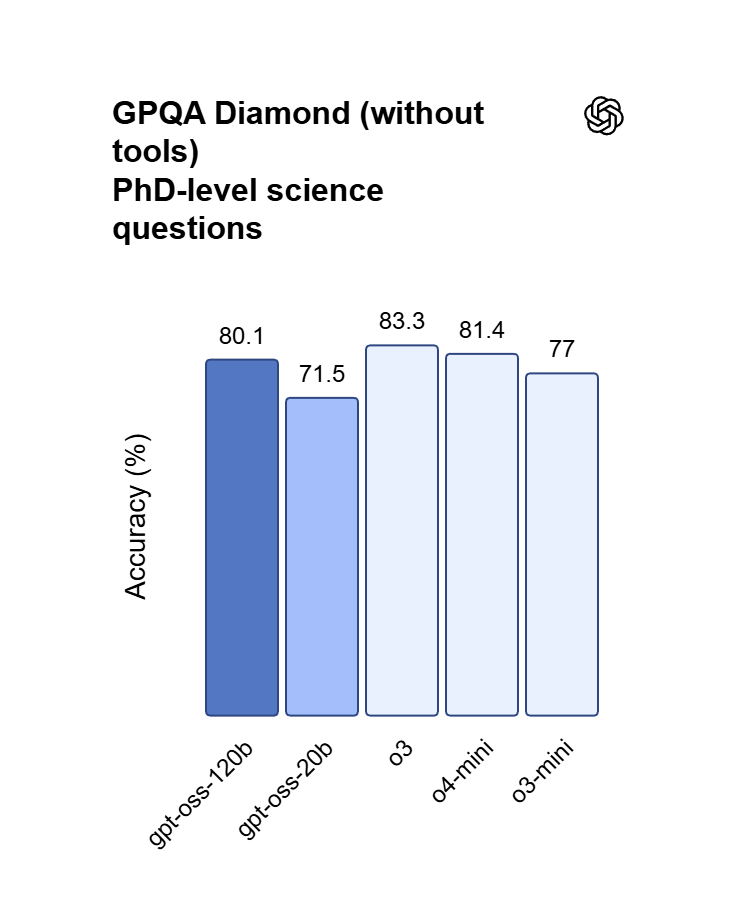

OpenAI's premier GPT model family is highlighted by GPT OSS 120B, which achieves near-parity with OpenAI o4-mini on core reasoning benchmarks while running efficiently on a single 80 GB GPU. Optimized for vibe coding and complex logic operations, this model balances top-tier intelligence with hardware accessibility for modern developers and AI-driven web development.

Models Coming Soon

We're putting the finishing touches on this collection. In the meantime, explore similar collections below.

Explore More Families

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation (CNBC). Alibaba Group confirmed ownership of HappyHorse, developed under its Alibaba Token Hub (ATH) business unit, where it leads benchmarks, outperforming ByteDance's Seedance 2.0 and others (Caixin Global). Led by Zhang Di, the former VP of Kuaishou who architected Kling AI, the 15-billion-parameter model generates 1080p video with synchronized audio in a single pass using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu, built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone (NYU Shanghai RITS). Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality, but also for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics. This makes it well-suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances fast rendering with exceptional visual quality. With an improved price-performance ratio, it achieves breakthrough micro-detail depiction, accurate native text rendering, and complex physical structure reconstruction. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou's flagship video generation suite, Kling 3.0, features two models, Kling 3.0 (upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, upgraded from Kling O1), both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

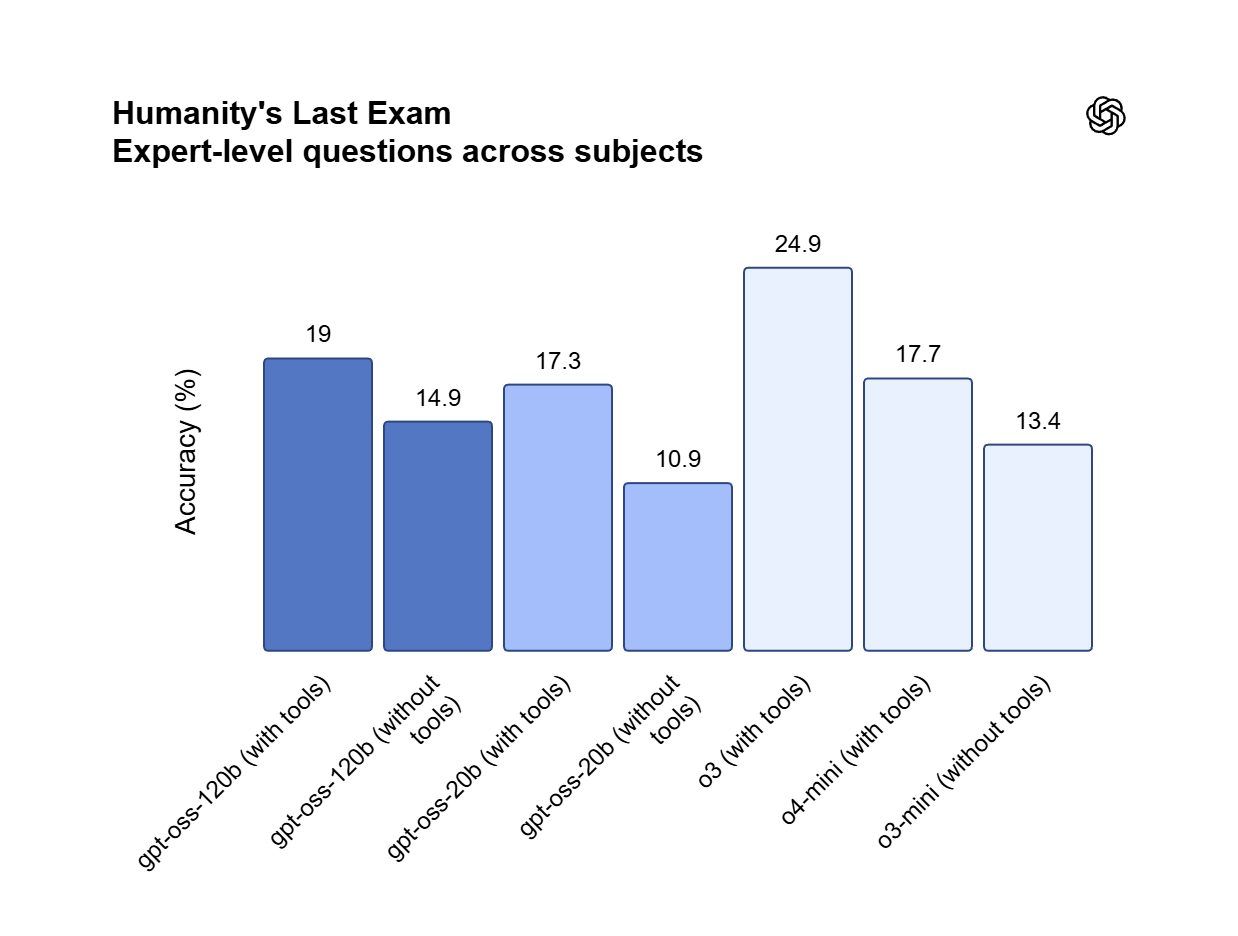

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.

What Sets OpenAI LLM Models Apart

Atlas Cloud gives you access to the industry's latest leading creative models.

Frontier Research

Cutting-edge models that set global benchmarks in reasoning, multimodality, and AI safety.

Cost-Efficient Performance

Optimized families like GPT-4.1 mini and GPT-5 nano balance accuracy, speed, and cost.

Developer Ecosystem

APIs powering millions of daily requests across diverse platforms and industries.

Flexible Model Sizes

Choice of flagship, mini, and nano models for every workload and budget.

Enterprise Reliability

SLAs, monitoring, and compliance-ready logging trusted by Fortune 500 companies.

Open Model Options

Access to open-source models (gpt-oss-20b, gpt-oss-120b) for transparency and customization.

| Model | Description |
|---|---|
| GPT OSS 120B | GPT OSS 120B is a powerful, reasoning-focused LLM that combines an optimized architecture with a robust 131.07K context window; it achieves near-parity with OpenAI o4-mini on a single 80 GB GPU and serves as an engine for rapid iterative development, including vibe coding and complex logic-driven workflows. |

New Features of OpenAI LLM Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.

Precise Instruction Following with GPT OSS 120B

GPT OSS 120B shows exceptional steerability and adheres strictly to complex system prompts to keep output reliable. Using its fine-tuned alignment architecture, users can enforce specific formats, constraints, and stylistic nuances without character drift. It is the definitive choice for autonomous agents, structured data extraction, and mission-critical production environments.

Commercial Sovereignty Under the Apache 2.0 License

GPT OSS 120B is distributed under the Apache 2.0 license, which permits unrestricted commercial use and private fine-tuning with no per-token fees. Unlike closed-source APIs, it can be hosted locally on a single 80 GB GPU, keeping sensitive proprietary data fully on-premises. This framework provides the legal and technical freedom to build, adapt, and scale AI-driven software stacks.

High-Efficiency Logic and Vibe Coding with GPT OSS 120B

This 120-billion-parameter model achieves near-parity with OpenAI o4-mini and excels at complex code synthesis and mathematical proofs. Developers can use its reasoning engine for "vibe coding": translating natural-language ideas directly into functional web applications through iterative prompting. It is a fast option for debugging nested logic and orchestrating advanced task-planning workflows.

What You Can Do with OpenAI LLM Models

Discover practical use cases and workflows you can build with this model family, from content creation and automation to production-grade applications.

Deep Logic Debugging and Prototyping with GPT OSS 120B

GPT OSS 120B lets engineers tackle vibe-coding challenges by translating high-level architectural ideas into production-ready Python or React components. Its reasoning engine handles the nested dependencies and edge cases that mini models often stumble over, helping multi-step code synthesis stay functional. With support for algorithmic proofs and complex task planning, it is well suited to building technical MVPs, automated QA scripts, and data-intensive web applications.

Offline Proprietary Tooling with GPT OSS 120B

Under the Apache 2.0 license, teams can host GPT OSS 120B on a single 80 GB GPU to process sensitive internal data without the risk of cloud leaks. This setup allows ongoing local fine-tuning on specific internal codebases or medical logs with no recurring per-token API costs. The model is ideal for high-security internal tools and offline AI assistance, and it offers full sovereignty over the weights, supporting private RAG systems and custom proprietary software stacks.

Schema-Perfect Data Extraction with GPT OSS 120B

GPT OSS 120B lets developers convert messy, unstructured documents into strictly formatted JSON or Markdown without "instruction drift". By anchoring the 131.07K context window with rigid system rules, the model helps keep fields from being hallucinated or skipped during long-running processing. Ideal for CRM automation and automated content tagging, it maintains logical guardrails across very large datasets, supporting reliable API integrations and database population.
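As a rough illustration of the extraction workflow described above, the sketch below checks a model's JSON reply against a fixed set of required fields before the record would be written to a CRM or database. The field names, the `validate_record` helper, and the sample reply are all hypothetical; the source does not specify a schema or a validation step.

```python
import json

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"name": str, "email": str, "order_total": float}


def validate_record(raw_reply: str) -> dict:
    """Parse a model reply and reject it unless it matches REQUIRED_FIELDS exactly."""
    # json.loads raises json.JSONDecodeError (a ValueError) on malformed JSON.
    record = json.loads(raw_reply)
    if not isinstance(record, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS.keys() - record.keys()
    extra = record.keys() - REQUIRED_FIELDS.keys()
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for field, expected in REQUIRED_FIELDS.items():
        if not isinstance(record[field], expected):
            raise ValueError(f"{field!r} should be {expected.__name__}")
    return record


# Hypothetical model output for a document describing one customer.
sample_reply = '{"name": "Ada", "email": "ada@example.com", "order_total": 42.5}'
record = validate_record(sample_reply)
```

Rejecting both missing and unexpected fields is a deliberate choice here: a reply that fails the check can be retried or flagged instead of silently populating a database with partial data.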

Model Comparison

See how models from different providers compare: weigh performance, pricing, and unique strengths to make an informed decision.

| Model | Context | Max output | Input | Positioning |
|---|---|---|---|---|
| GPT OSS 120B | 131.07K | 131.07K | Text | High-efficiency reasoning LLM |
| GLM-5 | 202.75K | 202.75K | Text | Flagship base model |
| DeepSeek V3.2 | 163.84K | 163.84K | Text | General-purpose flagship |
| MiniMax-M2.5 | 204.8K | 196.6K | Text | SOTA agentic coding |
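The context figures in the table appear to be token counts expressed in thousands; 131.07K, for example, is consistent with 131,072 (2^17) tokens, though the source does not state exact values. The sketch below shows one way to check a request against these limits. The `fits` helper and the token counts in the example are illustrative, and the code assumes that prompt and output tokens share the context window, which is common but not confirmed here.

```python
# Limits copied from the comparison table, in thousands of tokens as printed:
# model -> (context, max output).
LIMITS_K = {
    "GPT OSS 120B": (131.07, 131.07),
    "GLM-5": (202.75, 202.75),
    "DeepSeek V3.2": (163.84, 163.84),
    "MiniMax-M2.5": (204.8, 196.6),
}


def fits(model: str, prompt_tokens: int, output_tokens: int) -> bool:
    """Return True if the request stays within the model's listed limits.

    Assumes prompt and output tokens share the context window.
    """
    context_k, max_output_k = LIMITS_K[model]
    context = round(context_k * 1000)
    max_output = round(max_output_k * 1000)
    return output_tokens <= max_output and prompt_tokens + output_tokens <= context


# Hypothetical request: a 120,000-token prompt with a 20,000-token reply budget.
candidates = [m for m in LIMITS_K if fits(m, 120_000, 20_000)]
```

Under these assumptions, the hypothetical 140,000-token request exceeds the GPT OSS 120B window, so only the other three models remain in `candidates`.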

How to Use OpenAI LLM Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud’s platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.

Why Use OpenAI LLM Models on Atlas Cloud

Combining the advanced OpenAI LLM Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance & Flexibility

Low Latency: GPU-optimized inference for real-time reasoning.

Unified API: Run OpenAI LLM Models, GPT, Gemini, and DeepSeek through a single integration.

Transparent Pricing: Predictable token-based billing with serverless options.
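To make the single-integration idea concrete, the sketch below builds the same request body for several models and changes only the model identifier. It assumes an OpenAI-style chat-completions payload, which the source does not confirm for Atlas Cloud; the model identifiers and the `build_request` helper are hypothetical, and no network call is made.

```python
import json


def build_request(model: str, user_prompt: str, max_tokens: int = 512) -> str:
    """Serialize a chat request; only `model` changes between model families."""
    payload = {
        "model": model,  # hypothetical identifier; check the platform's model list
        "messages": [
            {"role": "system", "content": "Answer concisely."},
            {"role": "user", "content": user_prompt},
        ],
        "max_tokens": max_tokens,
    }
    return json.dumps(payload)


# Hypothetical model identifiers; the real names may differ.
MODELS = ["gpt-oss-120b", "glm-5", "deepseek-v3.2"]
requests_by_model = {m: build_request(m, "Summarize this changelog.") for m in MODELS}
```

Keeping the payload shape fixed means application code can switch models through configuration alone, provided the platform really does accept one request format for all of them.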

Enterprise & Scaling

Developer Experience: SDKs, analytics, fine-tuning tools, and templates.

Reliability: 99.99% uptime, RBAC, and compliance-ready logging.

Security & Compliance: SOC 2 Type II, HIPAA alignment, and US data sovereignty.

Frequently Asked Questions About OpenAI LLM Models

GPT OSS 120B achieves near-parity with OpenAI o4-mini on core reasoning and math benchmarks. While o4-mini is a closed API, OSS 120B offers comparable logical depth with the added benefit of full access to the model weights.

The model is optimized for a single 80 GB GPU, which avoids multi-node complexity. For instant scalability and zero maintenance, however, we recommend accessing it through the API on Atlas Cloud.

Yes. It is released under the Apache 2.0 license, which permits unrestricted commercial use, modification, and distribution with no per-token license fees or vendor lock-in.

The 131.07K context window is designed for "needle in a haystack" retrieval accuracy. It can process entire project folders or technical manuals of more than 100 pages while maintaining logical consistency across the whole input.

Very much so. The reasoning engine is fine-tuned for iterative code synthesis. It handles nested React components and complex Python backends more reliably than standard 70B-class models, making it ideal for natural-language-to-app workflows.