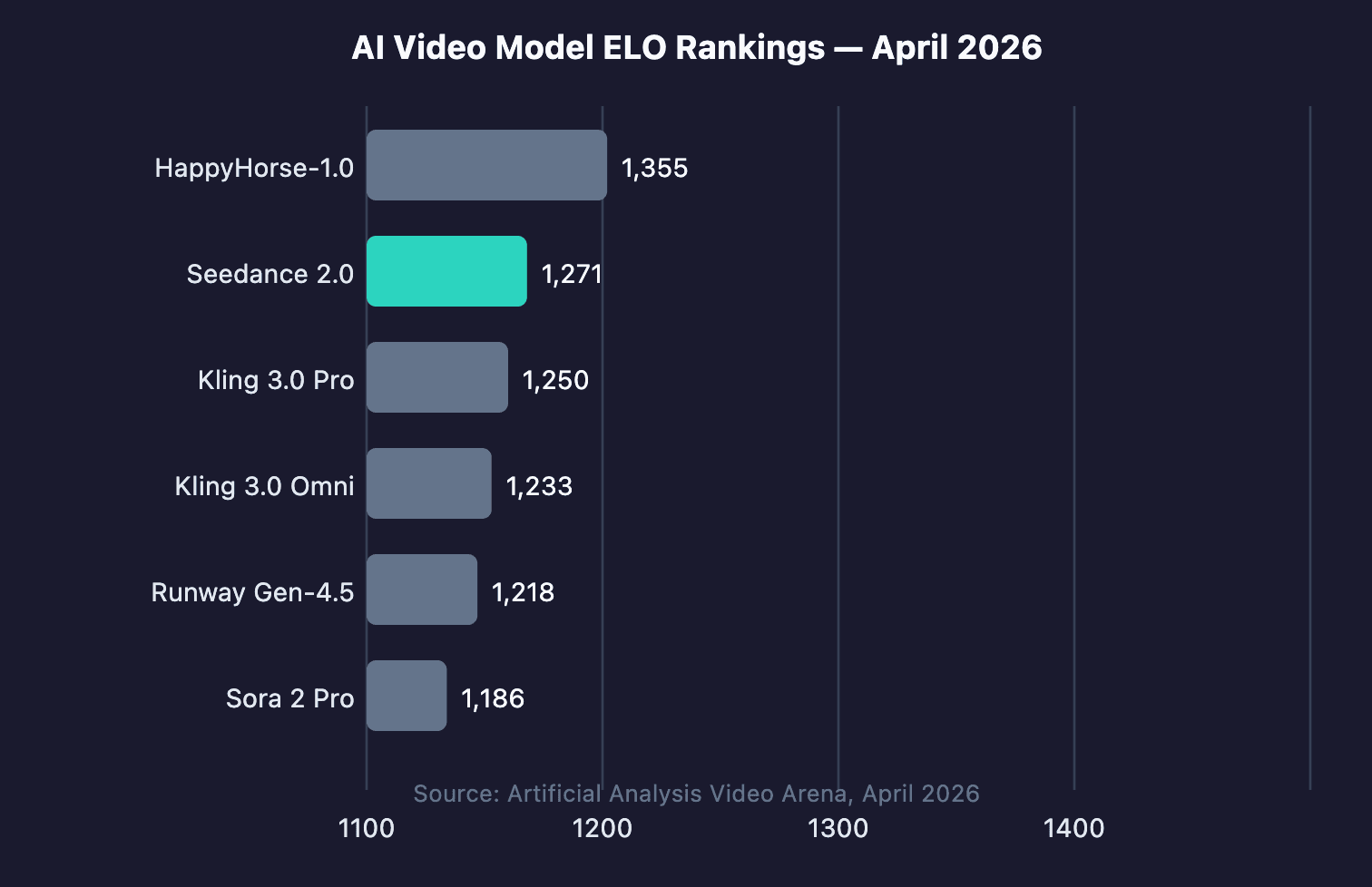

If you've been searching for a clear, practical guide on how to use Seedance 2.0, you've landed in the right place. A 60-second marketing video used to take 13 days to produce. With AI video tools like Seedance 2.0, the same job now takes 27 minutes, a 99.7% reduction in production time (Zebracat via AutoFaceless, 2026). ByteDance launched Seedance 2.0 on February 12, 2026, and it currently ranks 2nd globally on the Artificial Analysis Video Arena with an ELO of 1,271.

This guide covers every major way to use Seedance 2.0 in the US, India, and other countries outside China.

Key Takeaways

- Seedance 2.0 is globally available via Dreamina and CapCut. No VPN or Chinese phone number required.

- The free tier on Dreamina gives 225 daily tokens, with watermarked outputs. Paid plans start at $18/month.

- For developers in the USA and internationally, Atlas Cloud provides the most affordable API access at $0.081/sec.

- The model accepts text, images, audio, and video in a single multimodal pass using @AssetName syntax.

- 91% of businesses use video marketing in 2026 (Wyzowl via Ngram, 2026). AI production cuts cost by up to 91% vs. traditional video.

How to Use Seedance 2.0 for Free - Step-by-step Tutorials

How to use Seedance 2.0 for free is the first question most users ask. Dreamina offers 225 shared daily tokens at no cost, which is enough to generate roughly 10–15 short clips per day.

Free Option 1: Dreamina (Recommended)

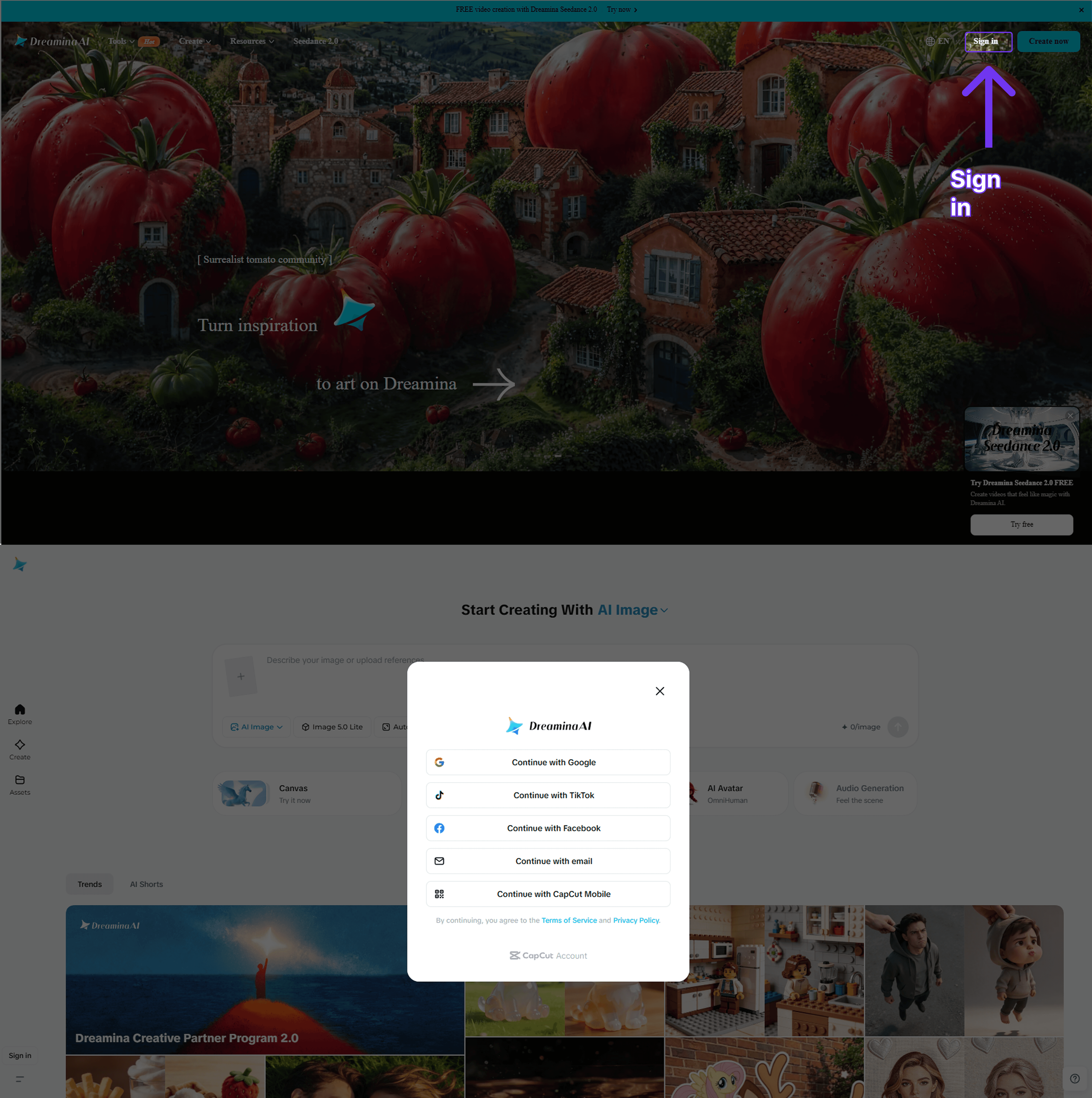

Step 1: Go to dreamina.capcut.com in any browser. No download required. The platform is available globally. Sign up for free using your Google account, Apple ID, or email address. No credit card needed.

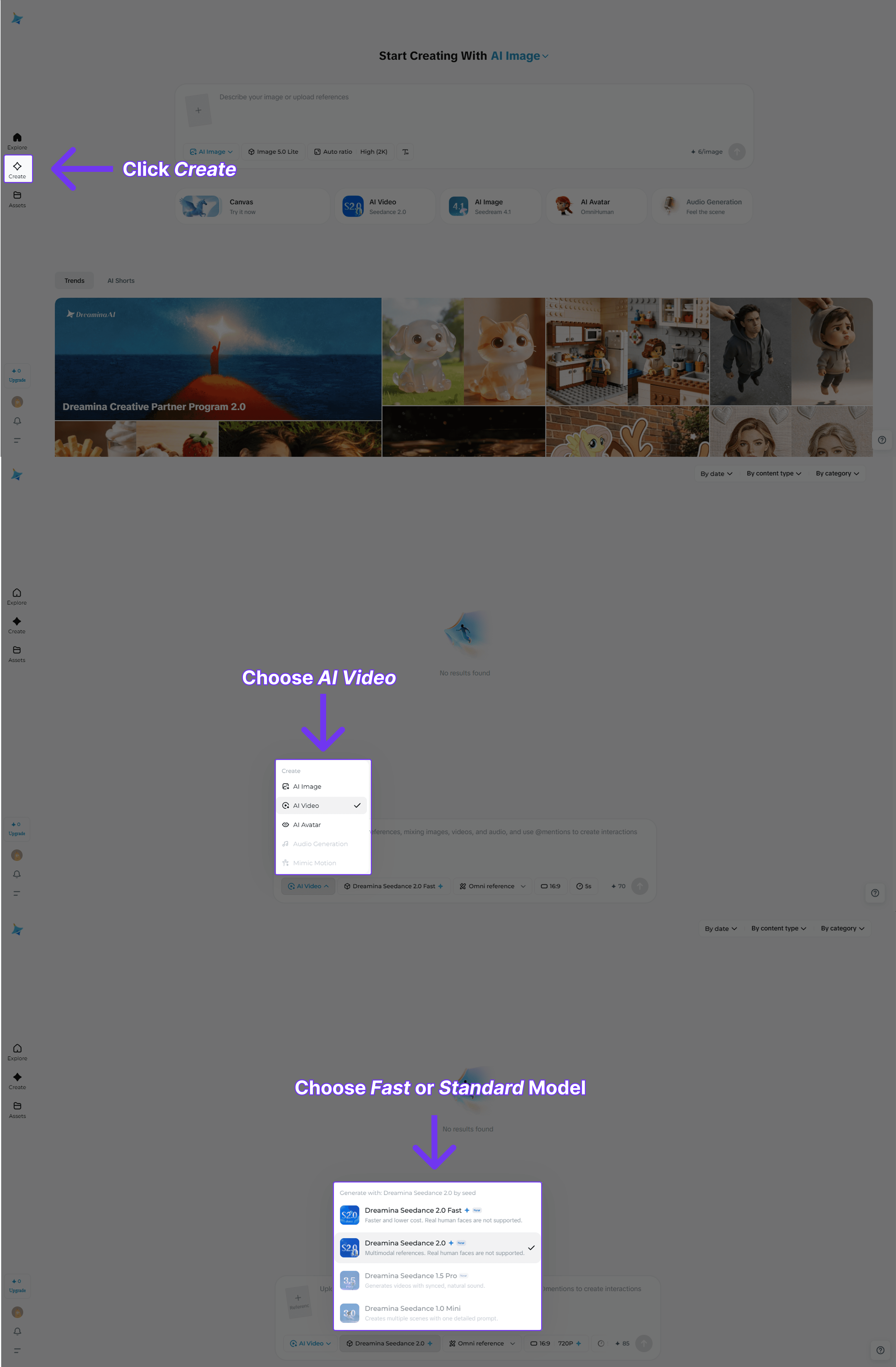

Step 2: Click "Create" on the left sidebar to open the video generation panel. Select Seedance 2.0 from the model dropdown at the top of the panel. You'll see Standard and Fast variants.

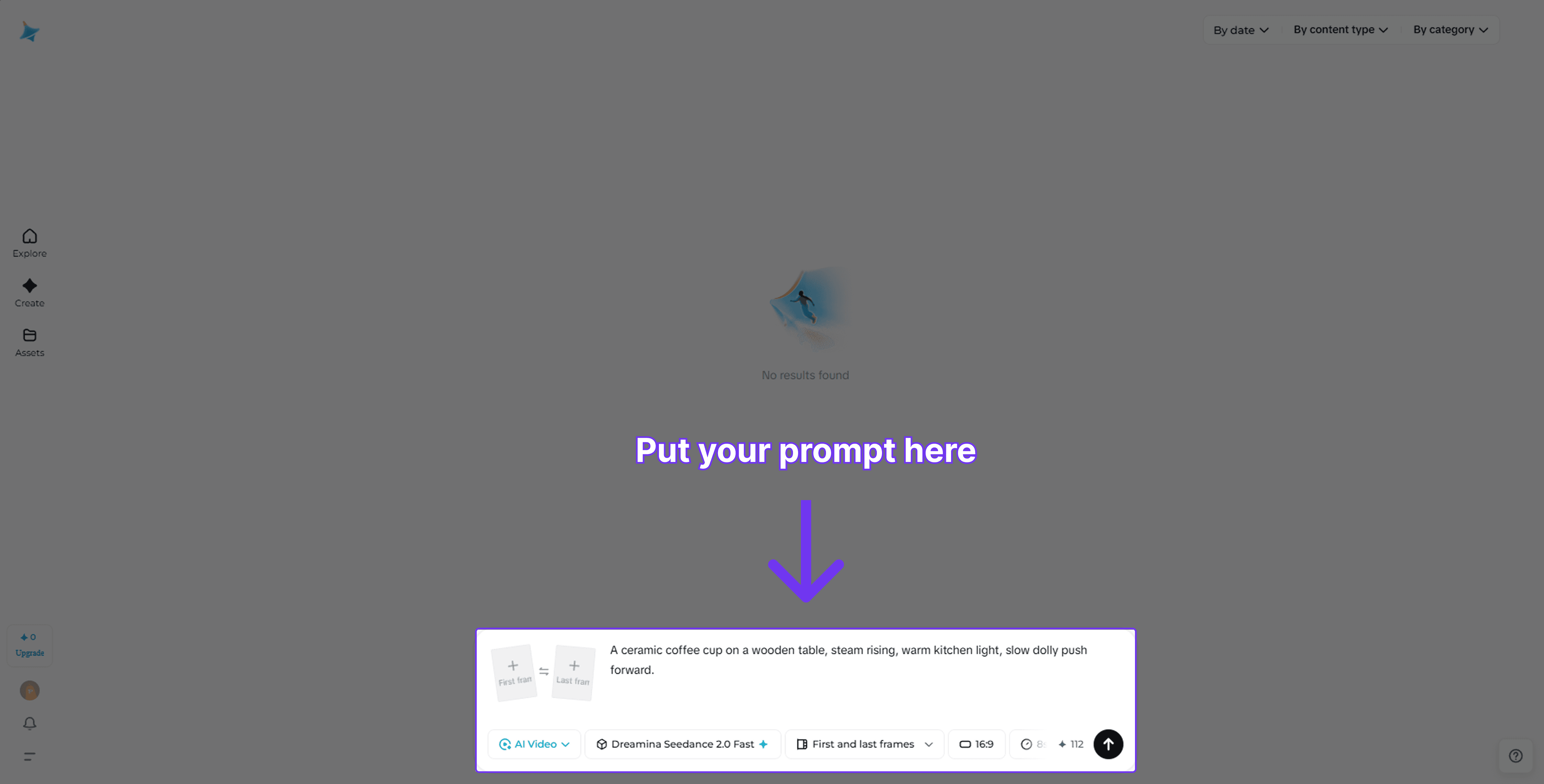

Step 3: Write your prompt using the five-part formula: Subject + Action + Setting + Mood + Camera Direction. Example: "A ceramic coffee cup on a wooden table, steam rising, warm kitchen light, slow dolly push forward." Set duration (4–8 seconds recommended for the free tier) and aspect ratio (16:9 for widescreen, 9:16 for vertical social).

Step 4: Click "Generate." Each clip uses approximately 15–20 tokens. Your 225 daily tokens refresh every 24 hours. Download your video. Free tier outputs include a watermark. Upgrade to the $18/month Standard plan to remove it.
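To see where the clips-per-day estimate comes from, here is a minimal sketch of the token arithmetic. It uses only the figures quoted in this guide (225 daily tokens, roughly 15–20 tokens per clip); the function and constant names are illustrative, and actual token costs on Dreamina may vary with duration and model variant.

```python
DAILY_TOKENS = 225          # free-tier allowance quoted in this guide
TOKENS_PER_CLIP = (15, 20)  # approximate per-clip cost quoted in this guide


def clips_per_day(daily_tokens: int, tokens_per_clip: int) -> int:
    """Return how many whole clips fit into the daily token allowance."""
    if tokens_per_clip <= 0:
        raise ValueError("tokens_per_clip must be positive")
    return daily_tokens // tokens_per_clip


low_cost, high_cost = TOKENS_PER_CLIP
best_case = clips_per_day(DAILY_TOKENS, low_cost)    # every clip at the low end
worst_case = clips_per_day(DAILY_TOKENS, high_cost)  # every clip at the high end
print(f"Roughly {worst_case} to {best_case} clips per day")
```

With these inputs the budget works out to between 11 and 15 clips per day, which lines up with the rough estimate given earlier.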

Free Option 2: CapCut App

CapCut shares a token pool with Dreamina on the same ByteDance account. Download CapCut from the App Store or Play Store, sign in with the same account, and tap "AI Video" to access Seedance 2.0. Because the pool is shared, spending tokens in CapCut reduces your Dreamina balance, and vice versa.

Seedance 2.0 API for Developers

For developers and businesses, the API route via Atlas Cloud is the most cost-effective and fastest integration path.

Atlas Cloud is an AI API aggregation platform that gives developers and businesses unified access to 300+ AI models, including all six Seedance 2.0 variants. It works through a single API key, a single billing dashboard, and a single base URL. It's the fastest way to integrate Seedance 2.0 into any application, pipeline, or product without dealing with ByteDance's direct enterprise contracts.

Key Features of Atlas Cloud

- 300+ AI models (video, image, LLM, audio) via one API key

- All 6 Seedance 2.0 variants: text-to-video, image-to-video, reference-to-video (Standard & Fast)

- Seedance 2.0 Fast at $0.081/sec, the lowest cost among major platforms

- OpenAI SDK-compatible endpoints (drop-in replacement)

- Pay-as-you-go, no monthly subscriptions

- 99.9% uptime SLA with international data compliance

- MCP Server integration for 100+ IDE clients

- Accepts credit card, PayPal, crypto, WeChat Pay, Alipay

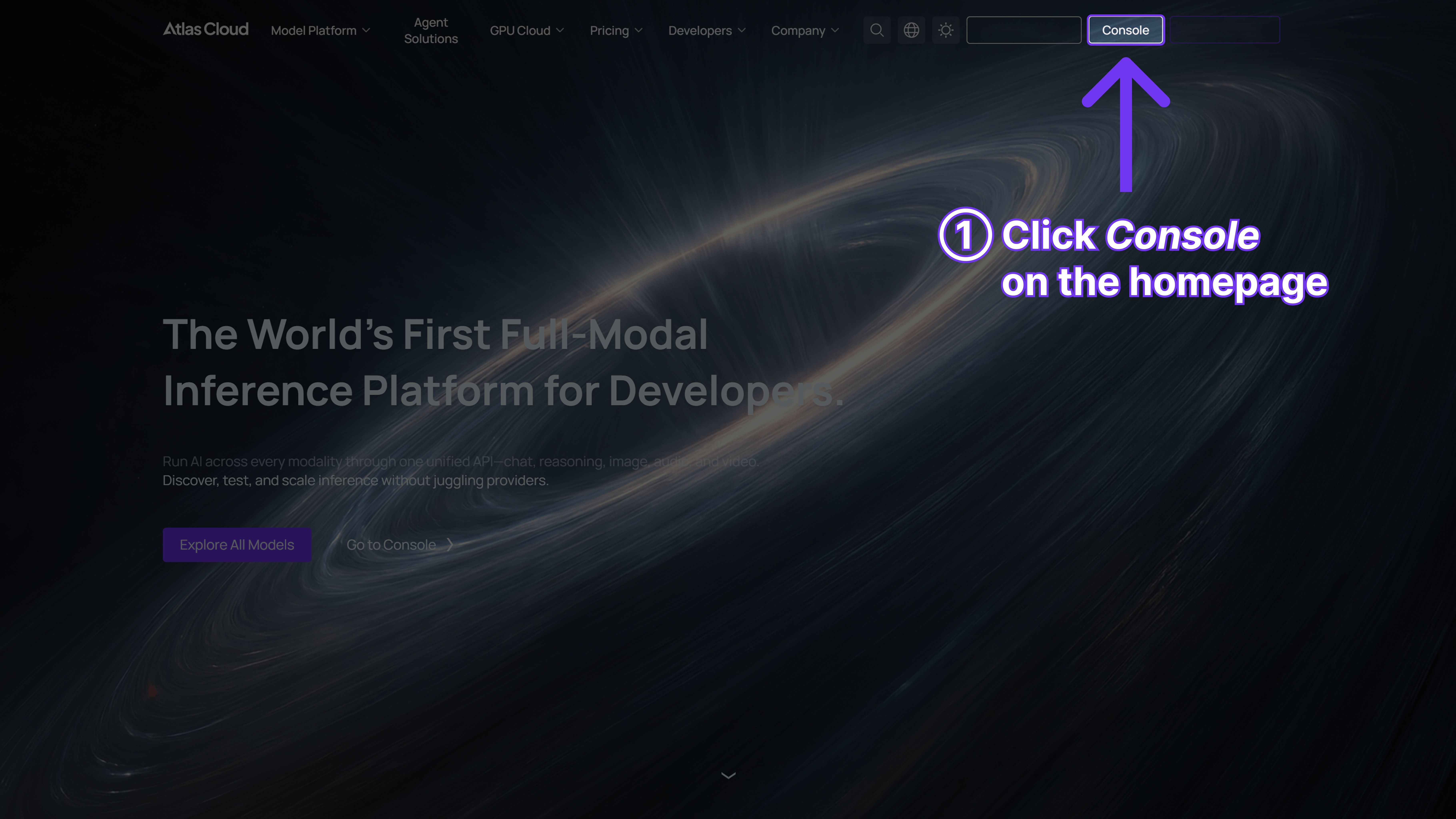

Method 1: Use Directly in the Atlas Cloud Playground

No code needed. Go to atlascloud.ai, sign up, navigate to the Models page, find Seedance 2.0, and hit "Try in Playground." Type your prompt, pick your settings, and generate right in the browser. It's the fastest way to validate an idea before you wire up the API.

Method 2: Access via API

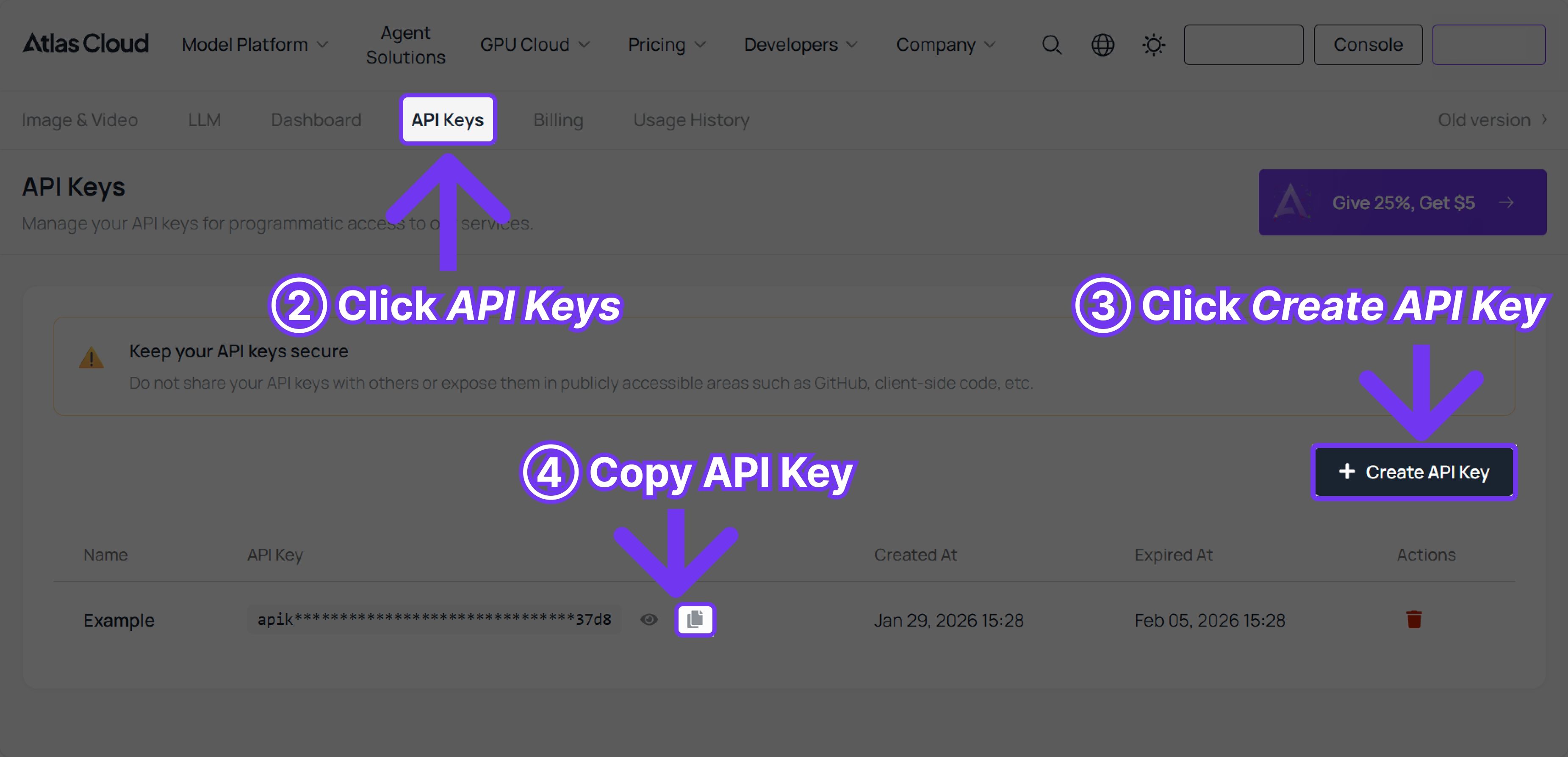

Step 1: Get your API key

Head to atlascloud.ai/console/api-keys. Click Create API Key, name it, and copy it immediately — it's only shown once. Drop it in your environment as ATLASCLOUD_API_KEY.

Step 2: Check the API documentation

The full reference lives at atlascloud.ai/docs. You'll find every parameter, all model IDs, the upload endpoint for reference files, and the polling lifecycle. Worth a 5-minute read before you build.

Step 3: Make your first request

The flow is always the same: submit a generation job → get a prediction ID → poll until complete → grab the video URL. Here's a working Python example to get you started:

```python
import os
import time

import requests

# Read the key from the environment. Python does not expand "$VAR" inside strings.
API_KEY = os.environ["ATLASCLOUD_API_KEY"]

# Step 1: Start video generation
generate_url = "https://api.atlascloud.ai/api/v1/model/generateVideo"
headers = {
    "Content-Type": "application/json",
    "Authorization": f"Bearer {API_KEY}",
}
data = {
    "model": "bytedance/seedance-2.0/text-to-video",  # Required. Model name
    "prompt": (
        "A cinematic view of the endless ocean at sunrise, golden sunlight spreading "
        "across the deep blue sea, powerful waves rising and crashing with white foam. "
        "The camera flies low above the water surface, water droplets and mist glowing "
        "in the light. Epic cinematic atmosphere, 4K."
    ),
    "duration": 5,  # Video duration in seconds (4–15), or -1 for auto
    "resolution": "720p",  # options: 480p | 720p
    "ratio": "adaptive",  # 9:16 | 16:9 | 1:1 | 4:3 | adaptive
    "generate_audio": True,  # Generate synced voice, SFX, and background music
    "watermark": False,
}

generate_response = requests.post(generate_url, headers=headers, json=data)
generate_response.raise_for_status()  # Stop early on HTTP errors (bad key, bad request)
prediction_id = generate_response.json()["data"]["id"]

# Step 2: Poll for result
poll_url = f"https://api.atlascloud.ai/api/v1/model/prediction/{prediction_id}"


def check_status():
    while True:
        response = requests.get(
            poll_url,
            headers={"Authorization": f"Bearer {API_KEY}"},
        )
        result = response.json()
        status = result["data"]["status"]

        if status in ["completed", "succeeded"]:
            video_url = result["data"]["outputs"][0]
            print("Generated video:", video_url)
            return video_url
        elif status == "failed":
            raise Exception(result["data"].get("error", "Generation failed"))
        else:
            time.sleep(2)  # Still processing, check again in 2 seconds


video_url = check_status()
```
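The polling loop in the example keeps running until the job completes or fails, so a stuck job would block your script forever. Below is a hedged sketch of a time-bounded variant. The `poll_until_done` helper and its `fetch_status` callback are hypothetical names, not part of the Atlas Cloud API; `fetch_status` is assumed to return the `data` object of a prediction response, with the same `status`, `outputs`, and `error` fields used in the example.

```python
import time
from typing import Callable


def poll_until_done(
    fetch_status: Callable[[], dict],
    interval: float = 2.0,
    timeout: float = 300.0,
) -> str:
    """Poll until the job finishes, fails, or the timeout elapses.

    fetch_status is a caller-supplied (hypothetical) function returning the
    "data" object of a prediction response as a dict.
    """
    deadline = time.monotonic() + timeout
    while True:
        data = fetch_status()
        status = data.get("status")
        if status in ("completed", "succeeded"):
            return data["outputs"][0]  # first output is the video URL
        if status == "failed":
            raise RuntimeError(data.get("error", "Generation failed"))
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Job still '{status}' after {timeout} seconds")
        time.sleep(interval)
```

In practice you would pass a small function that calls `requests.get` on the prediction URL and returns `response.json()["data"]`. Keeping the HTTP call outside the loop also makes the logic easy to test with a fake.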

Atlas Cloud's Seedance 2.0 Fast tier costs USD 0.081 per second, versus Kling 3.0 at USD 0.153/sec. Reference file uploads don't count toward billing — you're only charged for the generated video duration. New accounts receive $1 free credit with no credit card required (Atlas Cloud documentation, 2026).
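Since billing is per second of generated video, estimating a clip's cost is a single multiplication. The sketch below uses the two per-second rates quoted in the paragraph above; the function name and the `RATES` table are illustrative, and you should confirm current pricing before budgeting.

```python
def clip_cost(duration_seconds: float, rate_per_second: float) -> float:
    """Estimate the cost in USD of one clip billed per generated second."""
    if duration_seconds < 0 or rate_per_second < 0:
        raise ValueError("duration and rate must be non-negative")
    return round(duration_seconds * rate_per_second, 4)


# Per-second rates as quoted in this guide (USD)
RATES = {
    "Seedance 2.0 Fast (Atlas Cloud)": 0.081,
    "Kling 3.0": 0.153,
}

for name, rate in RATES.items():
    print(f"{name}: ${clip_cost(10, rate):.2f} per 10-second clip")
```

At those rates a 10-second clip comes to about $0.81 on Seedance 2.0 Fast and $1.53 on Kling 3.0.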

What Is Seedance 2.0 and Why Does It Matter?

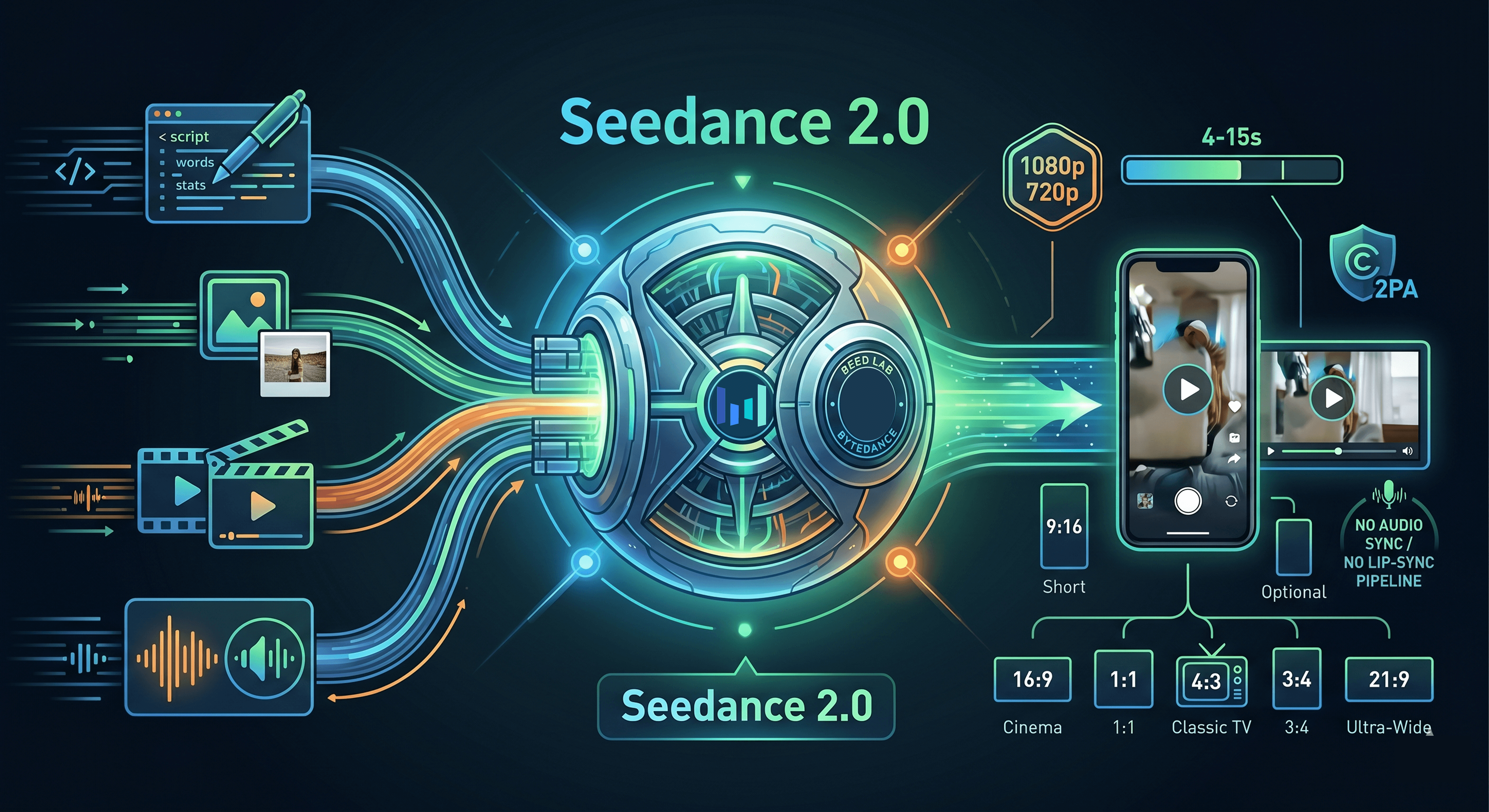

In April 2026, Seedance 2.0 earned an ELO score of 1,271 on the Artificial Analysis Video Arena, placing it 2nd globally. It also ranked 1st among audio-capable models, with a score of 1,221 on the arena's audio sub-leaderboard. That ranking reflects something real: it's one of the few models that handles text, images, video clips, and audio in a single unified pass, rather than chaining separate tools together.

ByteDance built Seedance 2.0 inside its SEED lab and launched it publicly on February 12, 2026. The architecture is called unified multimodal audio-video joint generation. What that means in practice: you provide a prompt, optional reference images, a video clip, and an audio file, and the model processes all of them together. No separate audio sync step. No manual lip-sync pipeline.

Output resolution goes up to 1080p through the Dreamina UI and 720p through the API. You can generate clips between 4 and 15 seconds across six aspect ratios: 21:9, 16:9, 4:3, 1:1, 3:4, and 9:16. That last one matters for social content. Every output carries C2PA provenance watermarking baked into the file metadata.
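If you script your generations, it can help to check settings against these limits before spending tokens or API credit. Here is a minimal sketch that encodes the 4–15 second range and the six aspect ratios listed above. The function and constant names are hypothetical, and a given platform or endpoint may accept a different set of values (the API example in this guide, for instance, also lists an "adaptive" ratio).

```python
ALLOWED_RATIOS = {"21:9", "16:9", "4:3", "1:1", "3:4", "9:16"}  # as listed in this guide
MIN_SECONDS, MAX_SECONDS = 4, 15                                # clip length range


def validate_settings(duration: int, ratio: str) -> list[str]:
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    if not MIN_SECONDS <= duration <= MAX_SECONDS:
        problems.append(
            f"duration {duration}s is outside {MIN_SECONDS}-{MAX_SECONDS}s"
        )
    if ratio not in ALLOWED_RATIOS:
        problems.append(f"aspect ratio {ratio!r} is not one of {sorted(ALLOWED_RATIOS)}")
    return problems


print(validate_settings(8, "9:16"))   # valid vertical social clip
print(validate_settings(20, "2:1"))   # two problems reported
```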

Why does the ELO gap matter? Kling 3.0 Pro scores 1,250 and Runway Gen-4.5 scores 1,218. Those 21-to-53 point gaps translate into noticeably cleaner motion and better prompt adherence on complex scenes. It's worth running your own A/B test on your specific use case, but Seedance 2.0 tends to win on cinematic camera moves and multilingual audio.
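One way to put those point gaps in perspective is the standard Elo expected-score formula, which converts a rating difference into an expected head-to-head preference rate. The sketch below assumes the arena uses the conventional logistic Elo model with a 400-point scale; this guide's sources don't confirm the exact rating method, so treat the result as a rough guide only.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score for A against B (400-point logistic scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


SEEDANCE = 1271  # scores as quoted in this guide
RIVALS = {"Kling 3.0 Pro": 1250, "Runway Gen-4.5": 1218}

for name, rating in RIVALS.items():
    rate = expected_win_rate(SEEDANCE, rating)
    print(f"Seedance 2.0 vs {name}: preferred in about {rate:.0%} of matchups")
```

Under that assumption, the 21-point and 53-point gaps map to head-to-head preference rates of roughly 53% and 58%, which is one more reason to run your own A/B test on your specific use case.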

Seedance 2.0 scored an ELO of 1,271 on the Artificial Analysis Video Arena in April 2026, ranking second globally among all text-to-video models. It also ranked first among audio-capable models, with a score of 1,221 on the parallel audio sub-leaderboard (Artificial Analysis Video Arena, April 2026).

The AI video market itself is accelerating fast. Fortune Business Insights put the global AI video generator market at $716.8 million in 2025, growing to $847 million in 2026, an 18.8% year-over-year increase. Grand View Research, also in 2026, values the market at $946.4 million with a 20.3% compound annual growth rate through 2033. Both figures confirm this isn't a niche experiment. It's a market where tooling choices made now will compound for years.

Advanced Seedance 2.0 Prompting and Multimodal Inputs

In 2026, 63% of video marketers used AI tools to create or edit marketing videos, according to Wyzowl via Ngram. The gap between average outputs and professional-grade results comes down to prompt precision. Seedance 2.0's multimodal inputs make strong prompting even more impactful, but they also introduce failure modes that most guides don't cover.

Using @AssetName Reference Tags

The @AssetName syntax is how you link uploaded files to specific roles in the scene. You can upload a product shot, a background video, and a background music file, then write: "@product_front on a minimalist desk, slow orbit, jazz @ambient_track playing softly." The model uses the image as a visual anchor, the video clip for motion reference, and the audio for the sonic layer. This is the core advantage over text-only generation.
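A common failure is referencing a tag in the prompt that doesn't match any uploaded file. The sketch below shows one way to catch that before you submit. It assumes a tag is an `@` followed by letters, digits, or underscores, which matches the examples in this guide but is not a confirmed specification; both helper names are hypothetical.

```python
import re

# Assumed tag shape: "@" followed by an identifier-like name
TAG_PATTERN = re.compile(r"@([A-Za-z_][A-Za-z0-9_]*)")


def find_asset_tags(prompt: str) -> list[str]:
    """Return every @AssetName tag in the prompt, in order of appearance."""
    return TAG_PATTERN.findall(prompt)


def missing_assets(prompt: str, uploaded: set[str]) -> list[str]:
    """Return tags used in the prompt that have no matching uploaded asset."""
    return [tag for tag in find_asset_tags(prompt) if tag not in uploaded]


prompt = "@product_front on a minimalist desk, slow orbit, jazz @ambient_track playing softly."
print(find_asset_tags(prompt))
print(missing_assets(prompt, uploaded={"product_front"}))
```

Here the second call reports `ambient_track` as missing, because only `product_front` was uploaded.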

Camera Control Options

Seedance 2.0 supports three named camera moves: dolly (forward/backward movement), pan (left/right rotation), and push (zoom with motion). These are director-level terms, so treat them as literals in your prompt. "Slow dolly push forward" will produce a different result than "zoom in." Use one camera move per clip. Stacking two moves in a single 8-second clip usually produces visual artifacts.

Prompt Failures and Fixes

Here's a pattern that shows up consistently. Vague prompts produce vague results. Consider the difference between these two prompts for the same product shot:

Before (weak): "Show a water bottle in a nice setting with good lighting." After (strong): "@bottle Translucent blue water bottle on a wet stone surface, water droplets catching morning light, forest background blurred, slow dolly push, cool cinematic tone."

The weak version produces a generic studio mockup. The strong version generates something you could actually use in an ad. What changed? Specificity on surface texture, lighting source, background depth, and camera movement. The model responds to concrete nouns and precise descriptors, not adjectives like "nice" or "good."
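If you generate prompts programmatically, the five-part formula from the Dreamina tutorial (Subject + Action + Setting + Mood + Camera Direction) can be enforced in code so no part is left vague or empty. This is a minimal sketch; the `ShotPrompt` class is a hypothetical helper, and the comma-joined output format is just one reasonable choice, not something the model requires.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """One clip described with the five-part formula used in this guide."""
    subject: str
    action: str
    setting: str
    mood: str
    camera: str

    def render(self) -> str:
        parts = [self.subject, self.action, self.setting, self.mood, self.camera]
        if any(not part.strip() for part in parts):
            raise ValueError("all five parts of the prompt must be filled in")
        return ", ".join(part.strip() for part in parts) + "."


bottle = ShotPrompt(
    subject="@bottle Translucent blue water bottle on a wet stone surface",
    action="water droplets catching morning light",
    setting="forest background blurred",
    mood="cool cinematic tone",
    camera="slow dolly push",
)
print(bottle.render())
```

Forcing every field to be filled makes it harder to slip back into prompts like "nice setting with good lighting."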


Seedance 2.0 accepts up to 12 reference files per request, drawn from up to 9 images, 3 video clips, and 3 audio files, all processed in a single multimodal pass using the @AssetName syntax for targeted referencing. No competitor in the top-6 ELO rankings offers this level of combined input control (ByteDance SEED lab documentation, 2026).
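Those limits are easy to trip when you assemble references in code. The sketch below checks a set of file counts against them, assuming the 12-file figure is an overall cap and the 9/3/3 figures are per-type caps. That reading is an interpretation of the numbers above, and the names used here are hypothetical.

```python
MAX_TOTAL = 12                                       # assumed overall cap per request
MAX_PER_TYPE = {"image": 9, "video": 3, "audio": 3}  # assumed per-type caps


def check_reference_counts(counts: dict[str, int]) -> list[str]:
    """Return a list of limit violations; an empty list means the mix is OK."""
    problems = []
    for kind, count in counts.items():
        limit = MAX_PER_TYPE.get(kind)
        if limit is None:
            problems.append(f"unknown reference type: {kind}")
        elif count > limit:
            problems.append(f"{count} {kind} files exceeds the limit of {limit}")
    total = sum(counts.values())
    if total > MAX_TOTAL:
        problems.append(f"{total} files in total exceeds the limit of {MAX_TOTAL}")
    return problems


print(check_reference_counts({"image": 6, "video": 3, "audio": 3}))  # within limits
print(check_reference_counts({"image": 9, "video": 3, "audio": 3}))  # over the total
```

Note that maxing out every type at once gives 15 files, so under this reading the overall cap is the one you hit first.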

Frequently Asked Questions About Seedance 2.0

1. Is Seedance 2.0 free to use?

Yes, up to a point. The Dreamina free tier includes 225 shared daily tokens and adds a watermark to all outputs. Paid plans start at $18/month for Standard, which removes watermarks and increases token limits. AI video platform monthly active users hit 124 million in January 2026 (Ngram, 2026), so free tiers can get congested during peak hours.

2. What's the maximum video length Seedance 2.0 can generate?

The maximum single-clip duration is 15 seconds via both the Dreamina UI and the API. The minimum is 4 seconds. For longer videos, chain multiple clips using consistent reference assets via the @AssetName tag. This matches how professional AI video workflows are structured across most top-tier models in 2026.
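Planning a longer video then becomes a matter of splitting the target runtime into segments that each fit the 4–15 second window. Here is one hedged way to do it, using as few clips as possible and spreading the time evenly across them. The `plan_clips` helper is hypothetical and works in whole seconds only.

```python
import math

MIN_CLIP, MAX_CLIP = 4, 15  # per-clip duration limits quoted in this guide


def plan_clips(total_seconds: int) -> list[int]:
    """Split a target runtime into the fewest clips of near-equal length."""
    if total_seconds < MIN_CLIP:
        raise ValueError(f"total must be at least {MIN_CLIP} seconds")
    count = math.ceil(total_seconds / MAX_CLIP)  # fewest clips that can cover it
    base, extra = divmod(total_seconds, count)   # spread the remainder evenly
    return [base + 1] * extra + [base] * (count - extra)


print(plan_clips(60))  # a one-minute video
print(plan_clips(40))
```

A 60-second target becomes four 15-second clips, and a 40-second target becomes clips of 14, 13, and 13 seconds. Even splits keep pacing consistent, though you may prefer to cut on scene boundaries instead.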

3. How does Seedance 2.0 compare to Sora 2 on cost?

At the API level, Seedance 2.0 via Atlas Cloud Fast costs $0.022 per second, putting a 10-second clip at $0.22. Sora 2 costs roughly $0.15 per second, making the same clip $1.50. That's approximately a 7x cost advantage for Seedance 2.0. Given its ELO score of 1,271 vs. Sora 2 Pro's 1,186, the quality-to-cost ratio clearly favors Seedance (Atlas Cloud, March 2026).

4. Can I use Seedance 2.0 outputs commercially?

Commercial use is permitted on paid Dreamina tiers and via the API, subject to ByteDance's terms of service. Every output carries embedded C2PA provenance metadata, which some ad platforms and brand safety tools can detect. Review each platform's AI content disclosure policy before running paid campaigns. Traditional production costs $4,500/min vs. AI at $400/min (vidBoard.ai, 2026), so getting compliance right matters.

5. How long does API generation take?

Generation time via the Atlas Cloud API ranges from 30 to 120 seconds per clip. Use the async submit-poll-download pattern rather than a blocking request. For batch workflows, submit all jobs first, then poll for results.
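The submit-first, poll-later batch pattern can be sketched as follows. The `run_batch` function and its `submit` and `fetch_status` callbacks are hypothetical: `submit` is assumed to send one prompt and return a job ID, and `fetch_status` is assumed to return the `data` object of a prediction response with `status`, `outputs`, and `error` fields. Wire them to your own HTTP calls.

```python
import time
from typing import Callable


def run_batch(
    prompts: list[str],
    submit: Callable[[str], str],
    fetch_status: Callable[[str], dict],
    interval: float = 5.0,
    max_rounds: int = 60,
) -> tuple[dict[str, str], dict[str, str]]:
    """Submit all jobs up front, then poll them together.

    Returns (videos, errors), both keyed by job ID.
    """
    pending = [submit(prompt) for prompt in prompts]  # phase 1: submit everything
    videos: dict[str, str] = {}
    errors: dict[str, str] = {}

    for _ in range(max_rounds):                       # phase 2: poll in rounds
        still_pending = []
        for job_id in pending:
            data = fetch_status(job_id)
            status = data.get("status")
            if status in ("completed", "succeeded"):
                videos[job_id] = data["outputs"][0]
            elif status == "failed":
                errors[job_id] = data.get("error", "Generation failed")
            else:
                still_pending.append(job_id)
        pending = still_pending
        if not pending:
            break
        time.sleep(interval)

    for job_id in pending:                            # jobs that never finished
        errors[job_id] = "timed out"
    return videos, errors
```

Because every job is already running before the first poll, total wall-clock time is closer to the slowest single clip than to the sum of all clips.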

6. How do I use Seedance 2.0 outside China without a VPN?

No VPN is needed. To use Seedance 2.0 outside China, visit dreamina.capcut.com directly. Dreamina is the international platform, fully separate from Jimeng (China-only). It's available in 130+ countries including the US, UK, India, Japan, and most of Europe. For API access, Atlas Cloud and fal.ai are globally accessible with no geo-restrictions.

7. How do I use Seedance 2.0 in CapCut?

Download CapCut from your app store, sign in, tap "AI Video," select Seedance 2.0 from the model picker, write your prompt, set aspect ratio and duration, and tap Generate. CapCut shares the same token pool as Dreamina on the same ByteDance account. ByteDance expanded global CapCut access in April 2026 to 150+ countries.

8. How do I use Seedance 2.0 API as a developer?

Sign up at atlascloud.ai, create an API key, and fund your account ($10 minimum). Submit a generation job, then poll for status every 5 seconds until the status is "completed". Seedance 2.0 Fast costs $0.022/sec, about 7x cheaper than Sora 2 at ~$0.15/sec (Atlas Cloud, March 2026).