What is GLM-5.1? Zhipu AI's Autonomous Coding Model

The GLM-5.1 model is coming to Atlas Cloud soon!

- What is GLM-5.1: GLM-5.1 is Zhipu AI's most advanced open-source model. It has the strongest programming capabilities among open models and rivals Claude Opus 4.6.

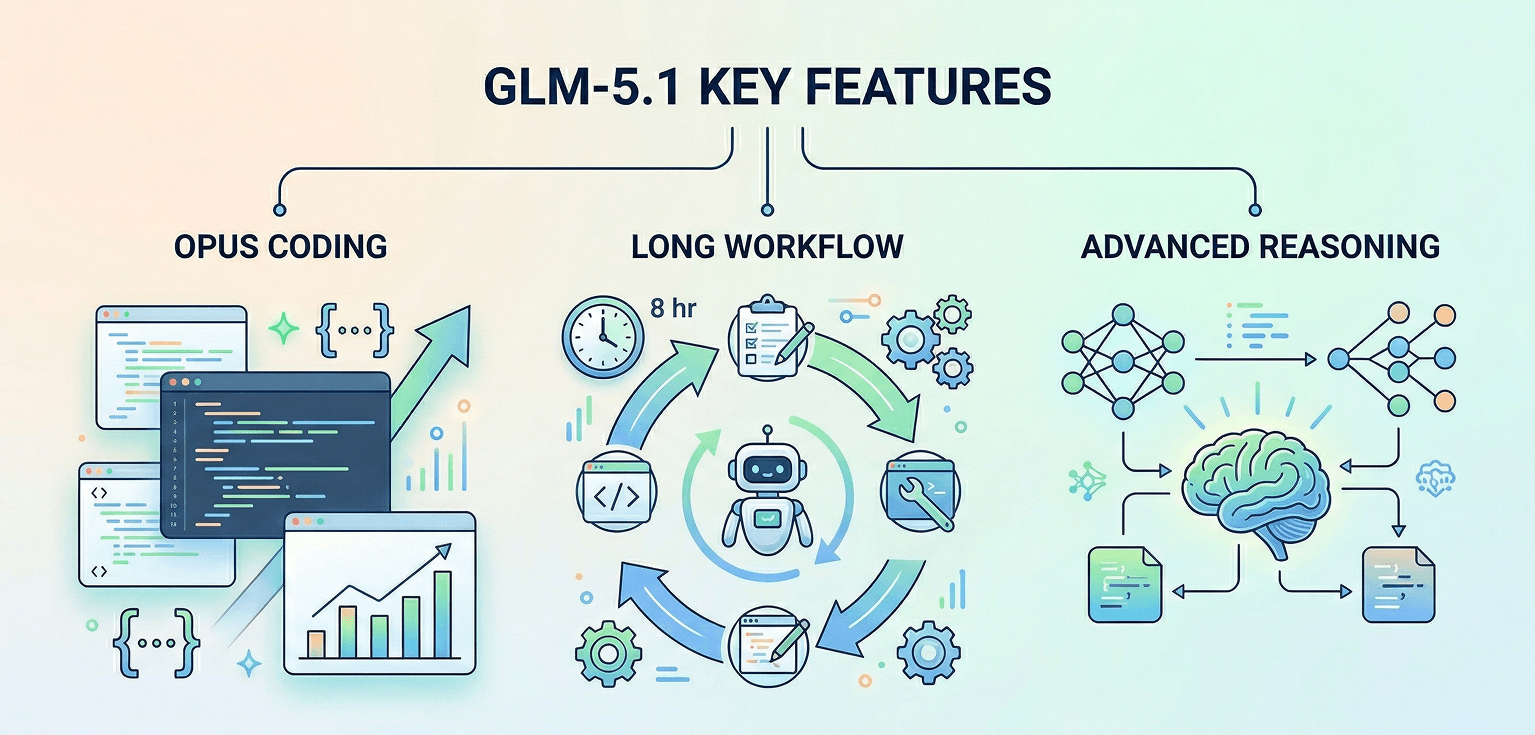

- Key Features: GLM-5.1 offers top-tier coding abilities. It can work autonomously on a single task for up to eight hours, adapting its approach when it runs into problems so that the workflow keeps moving and the result is fully functional.

- Launch Time: This month.

GLM-5.1 Core Features

Coding Performance: Opus 4.6 Level

GLM-5.1 ranks at the top among open-source models on major public coding benchmarks, matching the performance of Claude Opus 4.6.

Comparison with Claude 3.5 Sonnet:

- Claude 3.5 Sonnet: Strong coding capabilities, widely adopted for development

- GLM-5.1: Matches Opus 4.6-level performance, competitive pricing

Comparison with DeepSeek V3.2:

- DeepSeek V3.2: Excellent open-source coding model with broad capabilities

- GLM-5.1: Superior on specialized coding benchmarks, unique long-horizon execution

Long-Horizon Task Execution: 8-Hour Autonomy

GLM-5.1 goes far beyond normal dialogue. Hand it a real engineering project, and it will plan the workflow, write code, run tests, switch strategies if it gets stuck, fix issues when things break — and keep working for up to 8 hours to deliver a complete result. Few models have this capability.

Comparison with GPT-4o:

- GPT-4o: Excellent for multi-turn conversations and short tasks

- GLM-5.1: Designed for extended autonomous execution with state management

Comparison with Qwen2.5:

- Qwen2.5: Strong performance on diverse tasks

- GLM-5.1: Specialized for long-duration, complex engineering workflows

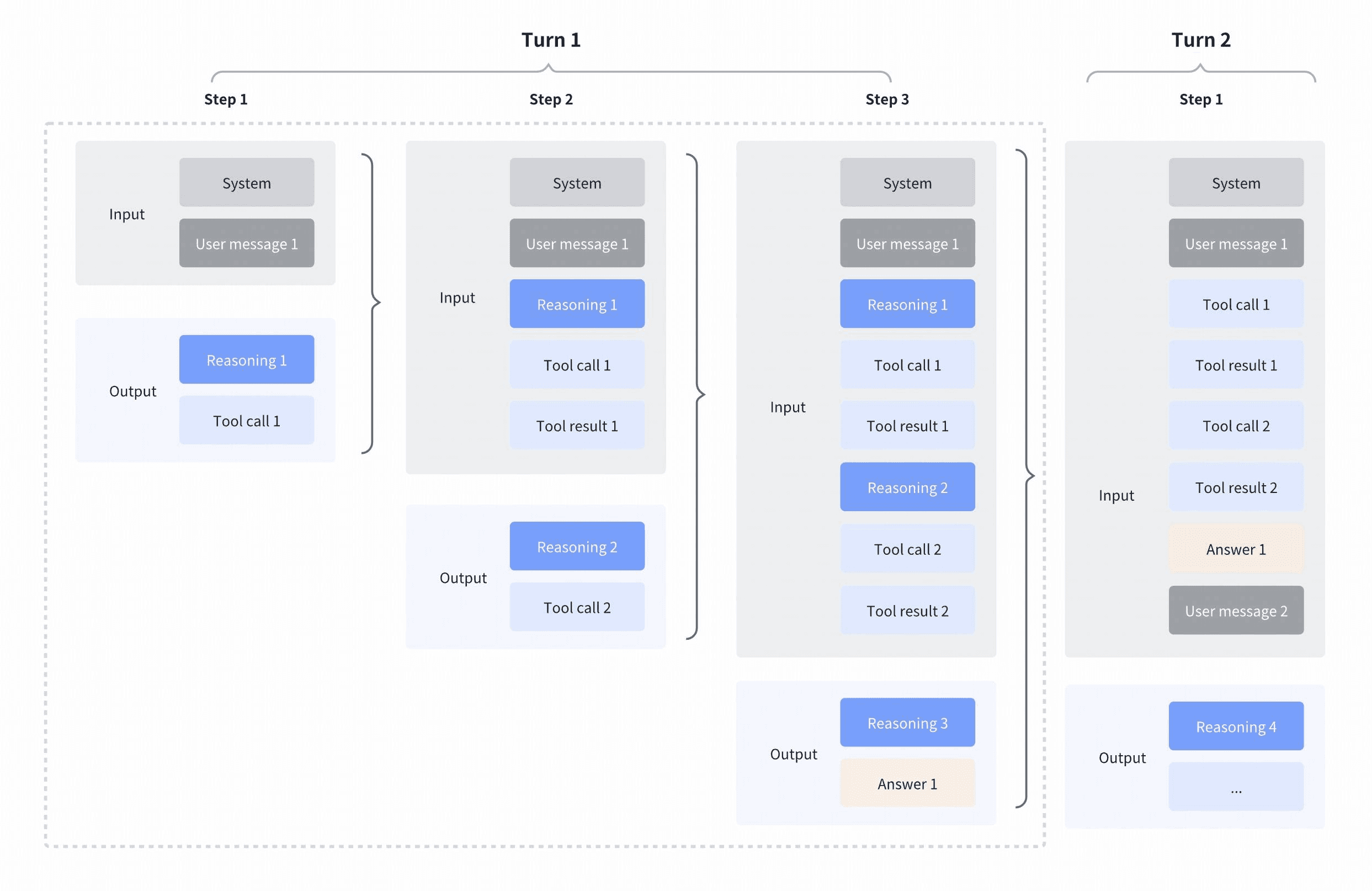

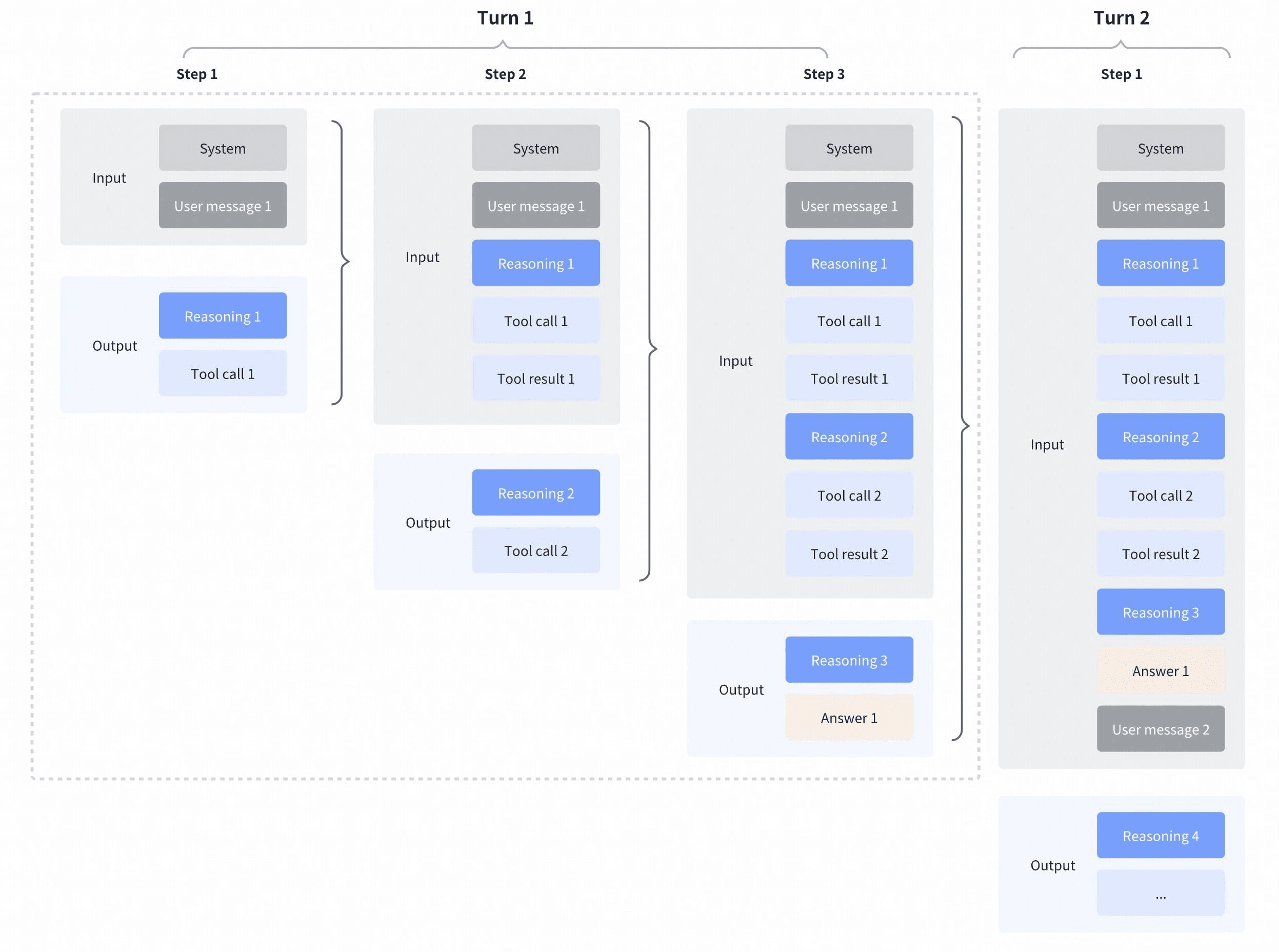

Interleaved & Preserved Thinking

- Interleaved Thinking

Allowing GLM to reason between and after tool calls enables step-by-step thinking: interpreting outputs, chaining calls, and making precise decisions based on intermediate results.

- Preserved Thinking

GLM-5.1 retains reasoning from previous turns, ensuring continuity, improving performance, boosting cache hits, and saving tokens in real tasks.
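The two ideas above can be sketched as a small agent loop. This is a minimal illustration, not the GLM-5.1 or Atlas Cloud API: the message fields (`reasoning`, `tool_call`), the `run_agent_loop` helper, and the `fake_model_step` stand-in are all hypothetical names chosen for this example. The point is only the shape of the loop: each tool result goes back to the model before the next decision (interleaved), and the reasoning from earlier steps stays in the history (preserved).

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]


def run_agent_loop(
    model_step: Callable[[List[Message]], Message],
    tools: Dict[str, Callable[[str], str]],
    user_prompt: str,
    max_steps: int = 8,
) -> List[Message]:
    """Run a tool-calling loop and return the full message history."""
    messages: List[Message] = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        reply = model_step(messages)
        # Preserved thinking: keep the reasoning text in the history so the
        # next step can build on it instead of starting over.
        messages.append({
            "role": "assistant",
            "reasoning": reply.get("reasoning", ""),
            "content": reply.get("content", ""),
            "tool_call": reply.get("tool_call"),
        })
        call = reply.get("tool_call")
        if call is None:
            break  # no tool requested, so this reply is the final answer
        # Interleaved thinking: the tool result is fed back to the model,
        # which reasons about it before choosing the next action.
        result = tools[call["name"]](call["argument"])
        messages.append({"role": "tool", "name": call["name"], "content": result})
    return messages


def fake_model_step(messages: List[Message]) -> Message:
    """Hypothetical stand-in for a real model call (assumption, not an API)."""
    last = messages[-1]
    if last["role"] == "user":
        return {"reasoning": "I need the test results first.",
                "tool_call": {"name": "run_tests", "argument": "tests/"}}
    return {"reasoning": f"Tool said: {last['content']}",
            "content": "All tests pass; nothing to fix."}


history = run_agent_loop(
    fake_model_step,
    {"run_tests": lambda path: "3 passed"},
    "Check the test suite",
)
for message in history:
    print(message["role"], "->", message.get("content") or message.get("tool_call"))
```

In a real integration, `model_step` would wrap an actual API call, and the exact field that carries the reasoning text would come from the provider's documentation.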

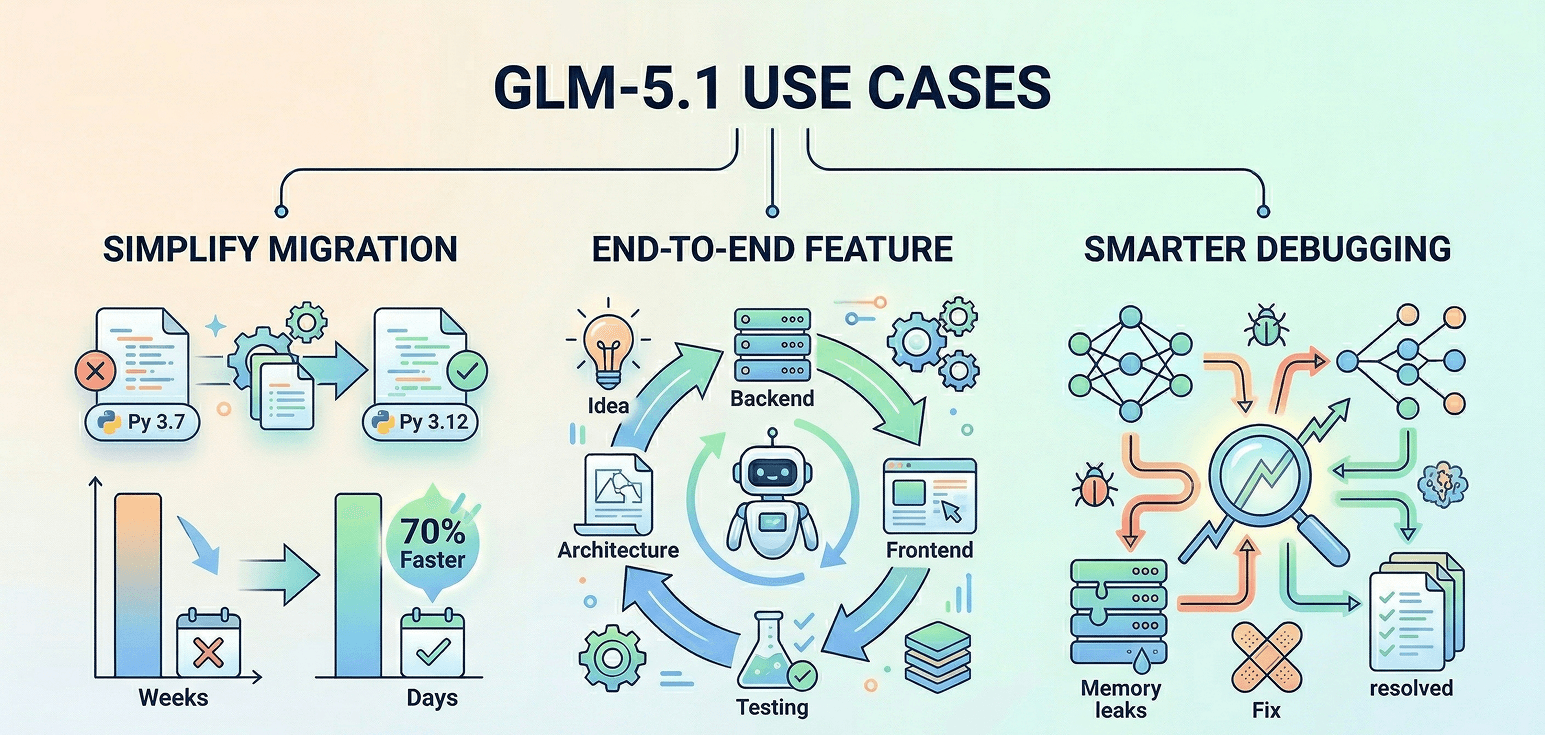

GLM-5.1 Use Cases: Code Migration, Feature Development, Debugging

Simplifying Code Migration

GLM-5.1 takes on time-consuming code migration tasks, turning weeks of effort into a matter of days. It reviews the codebase, creates a strategy, edits files at scale, and performs thorough testing. This has made common projects, like upgrading Python versions or modernizing frameworks, up to 70% faster and easier.

End-to-End Feature Development

From initial ideas to fully built applications, GLM-5.1 runs the entire process. It plans the architecture, builds backend and frontend systems, writes tests, and even handles documentation. Without needing constant oversight, it delivers complete solutions ready for deployment, including security, databases, and production configurations.

Smarter Debugging

When complex bugs appear in large systems, GLM-5.1 handles the heavy lifting. It persistently traces problems, whether they’re race conditions or memory leaks, and applies fixes based on careful testing. Every step is documented, leaving developers with solutions they can build on.

Why Use GLM-5.1 on Atlas Cloud?

What is Atlas Cloud?

It’s a platform that simplifies AI by giving you access to 300+ top models in one place—text, images, video, and more.

Who’s it for?

- Developers who want easy, affordable AI access.
- Teams handling projects that need AI across multiple areas.
- Businesses needing reliable AI for important work.
- People using tools like ComfyUI and n8n.

Why choose it?

- One API lets you use everything, with just one key.
- Clear pricing, no surprises, and low costs.
- Built for enterprise: stable, secure, and supported by experts.
- Works with the tools you already have.
- Your data stays safe and meets compliance needs.

How does it compare?

- Fal.ai: Atlas has more models and better prices.
- Wavespeed: Atlas costs less and includes enterprise support.
- Kie.ai: Atlas is clearer on pricing and offers a bigger selection.
- Replicate: Replicate has a smaller library and higher costs.
- Other providers (like OpenAI): Atlas combines everything in one simple platform.

How to Use GLM-5.1 on Atlas Cloud

Atlas Cloud lets you use models side by side — first in a playground, then via a single API.

Method 1: Use directly in the Atlas Cloud playground

Method 2: Access via API

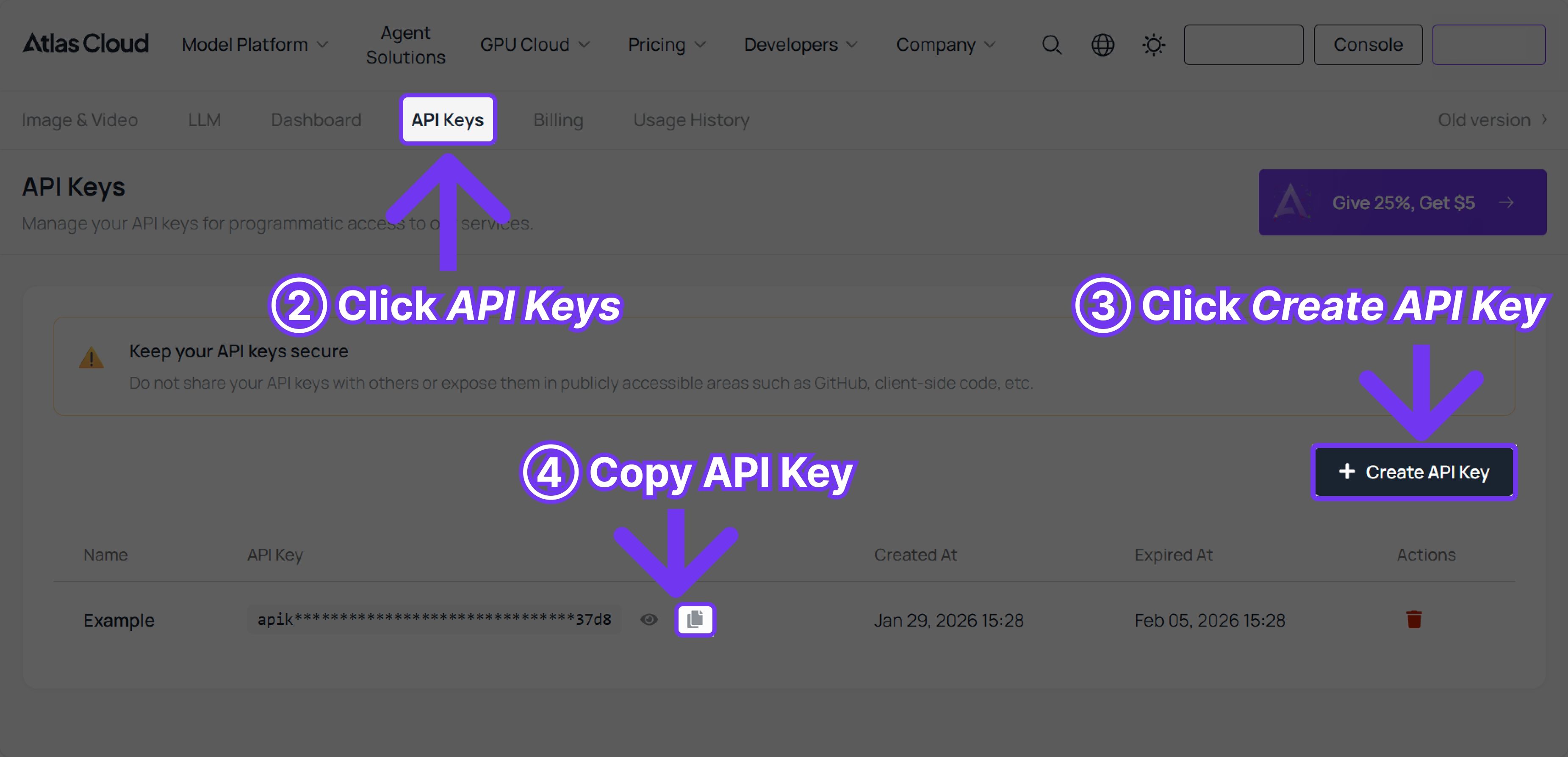

Step 1: Get your API key

Create an API key in your console and copy it for later use.

Step 2: Check the API documentation

Review the endpoint, request parameters, and authentication method in our API docs.

Step 3: Make your first request (Python example)

Example: send a chat completion request to GLM-5-Turbo

```python
import os
from openai import OpenAI

# Point the OpenAI-compatible client at Atlas Cloud.
client = OpenAI(
    api_key=os.getenv("ATLASCLOUD_API_KEY"),
    base_url="https://api.atlascloud.ai/v1"
)

# Send a single-turn chat request.
response = client.chat.completions.create(
    model="zai-org/glm-5-turbo",
    messages=[
        {
            "role": "user",
            "content": "hello"
        }
    ],
    max_tokens=1024,
    temperature=0.7
)

# Print the text of the first returned choice.
print(response.choices[0].message.content)
```
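If you prefer not to install an SDK, the same request can be sketched with the Python standard library. This assumes the endpoint follows the usual OpenAI-compatible layout, where the client appends `/chat/completions` to the base URL and authenticates with a Bearer token; confirm both against the API docs. The `build_chat_request` helper and the `"test-key"` fallback are names made up for this example, and the block only builds the request without sending it.

```python
import json
import os
import urllib.request


def build_chat_request(
    prompt: str,
    api_key: str,
    base_url: str = "https://api.atlascloud.ai/v1",
    model: str = "zai-org/glm-5-turbo",
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 1024,
        "temperature": 0.7,
    }
    return urllib.request.Request(
        # Assumed path: the OpenAI-compatible convention, not confirmed here.
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# "test-key" is a placeholder so the sketch runs without a real key.
request = build_chat_request("hello", os.getenv("ATLASCLOUD_API_KEY", "test-key"))
print(request.get_method(), request.full_url)
```

To actually send it, pass `request` to `urllib.request.urlopen` and decode the JSON body of the response.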

FAQ: GLM-5.1 Frequently Asked Questions

Q: How does GLM-5.1 stack up against Claude 3.5 Sonnet for coding?

A: GLM-5.1 matches Claude Opus 4.6 on coding benchmarks, outpacing Claude 3.5 Sonnet. Its ability to handle extended tasks makes it stand out.

Q: What’s different about “long-horizon task execution”?

A: Regular chat models respond to individual prompts. GLM-5.1, however, can stay on task for up to 8 hours, adapting and self-correcting throughout.

Q: Is GLM-5.1 open source?

A: Yes, it’s fully open source. GLM-5.1 is developed by Zhipu AI, and you can find licensing details in the official documentation.

Q: Is it ready for production use?

A: Definitely! GLM-5.1 offers a 128K context window and runs on Atlas Cloud’s reliable infrastructure, making it production-ready.

Q: How do I integrate its 8-hour execution capability?

A: For long tasks, use a webhook or polling setup. Atlas Cloud handles the backend, and your app receives the final output on completion.
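A polling setup might look like the sketch below. It is written against a generic `fetch_status` callable because the actual job-status endpoint and its response fields are not described here; the `state` values (`running`, `succeeded`, `failed`) and the `poll_until_done` helper are assumptions for illustration only.

```python
import time
from typing import Callable, Dict


def poll_until_done(
    fetch_status: Callable[[], Dict[str, str]],
    interval_s: float = 30.0,
    timeout_s: float = 8 * 60 * 60,  # allow for a task that runs up to 8 hours
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, str]:
    """Call fetch_status repeatedly until the task reaches a final state."""
    deadline = time.monotonic() + timeout_s
    while True:
        status = fetch_status()
        # Hypothetical state names; use whatever the real API returns.
        if status["state"] in ("succeeded", "failed"):
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError("task did not finish before the timeout")
        sleep(interval_s)


# Usage with a fake status source that finishes on the third check.
_fake_responses = iter([
    {"state": "running"},
    {"state": "running"},
    {"state": "succeeded", "output": "done"},
])
final = poll_until_done(lambda: next(_fake_responses), interval_s=0.0)
print(final["state"], final["output"])
```

A webhook avoids the repeated requests entirely, so polling is mainly useful when your app cannot expose a public callback URL.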

Q: What tasks is GLM-5.1 best suited for?

A: It’s ideal for challenging projects like code migration, debugging complex systems, and full-stack software development.