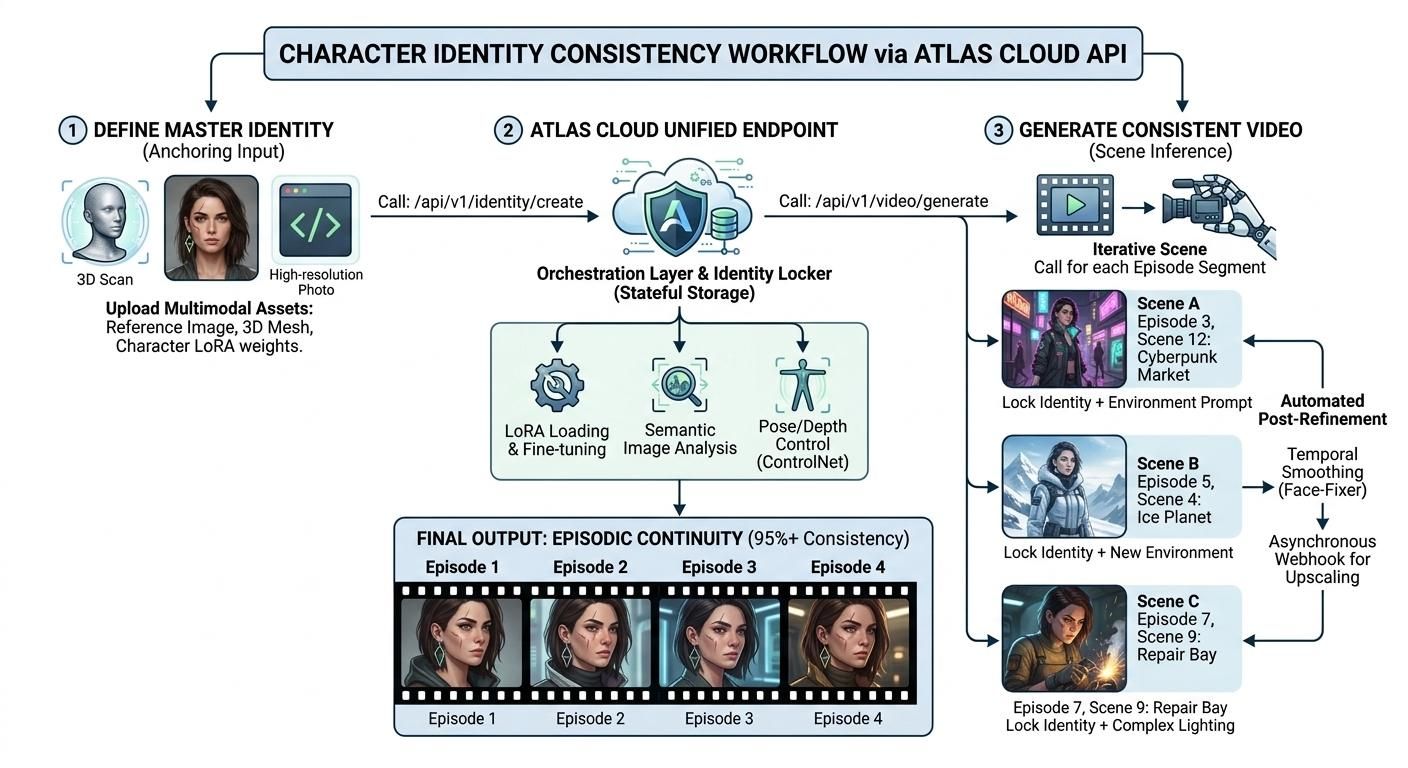

Character consistency in AI video APIs is the ability to maintain a character's visual identity—features, clothing, and proportions—across different shots. By moving beyond "prompt roulette" to structured API constraints like Reference Anchors and Fine-tuned LoRAs, creators can now produce episodic content with 95% visual continuity, reducing production costs by up to 80%.

For years, the "Character Drift" phenomenon—where a protagonist's facial features or clothing shift inconsistently between frames—relegated AI video to the realm of uncanny memes. This lack of visual stability was the primary barrier preventing AI from moving beyond short clips into professional storytelling.

In 2026, AI video is defined by persistence. The industry has transitioned from "prompting and praying" to structured production. Centralized platforms like Atlas Cloud have finally solved the "identity crisis" by providing a unified gateway to high-consistency AI video APIs.

| Metric | 2024 Performance | 2026 Performance |
| --- | --- | --- |
| Character Drift | High (50% facial shift) | Minimal (<5% visual variance) |
| Identity Setup | Manual Prompting | Automated Reference Anchoring |
| Rendering Mode | Frame-by-Frame | Stateful Temporal Coherence |
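The table does not say how "facial shift" or "visual variance" is measured. One plausible way to put a number on drift is to compare a face-embedding vector from a reference frame against the embeddings of later frames. The sketch below assumes such embeddings already exist (from any face-recognition model); the tiny 4-dimensional vectors and the function names are made up for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length vector")
    return dot / (norm_a * norm_b)


def drift_per_frame(reference, frames):
    """Drift = 1 - similarity to the reference embedding, one value per frame."""
    return [1.0 - cosine_similarity(reference, frame) for frame in frames]


# Hypothetical embeddings; real ones usually have hundreds of dimensions.
reference = [0.9, 0.1, 0.3, 0.2]
frames = [
    [0.9, 0.1, 0.3, 0.2],       # identical to the reference
    [0.88, 0.12, 0.31, 0.18],   # slight variation, same character
    [0.2, 0.9, 0.1, 0.4],       # a noticeably different face
]
drift = drift_per_frame(reference, frames)
```

Under this definition, a "<5% variance" target would mean every value in `drift` stays below 0.05.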

By mastering these AI video APIs, creators are no longer just "prompting"—they are directing a new era of digital cinema. The following technologies have turned AI from an experimental toy into a professional film engine:

- Atlas Cloud: A unified API platform that orchestrates SOTA models like Seedance 2.0 and Kling 3.0, allowing developers to lock character identities across entire series via a single endpoint.

- LTX Studio: A holistic platform designed specifically for multi-shot consistency and narrative control.

- Custom ComfyUI Endpoints: Modular workflows that allow creators to bake specific character identities (LoRAs) into the latent space.

How 2026 APIs Solve Temporal Coherence

The transition from flickering "dream-like" clips to stable episodic content is driven by a fundamental shift in how AI video APIs handle data. In 2026, the industry has moved beyond simple text prompts to a "Stateful" architecture that treats character identity as a persistent variable rather than a random generation.

Beyond the Prompt: Identity Anchoring

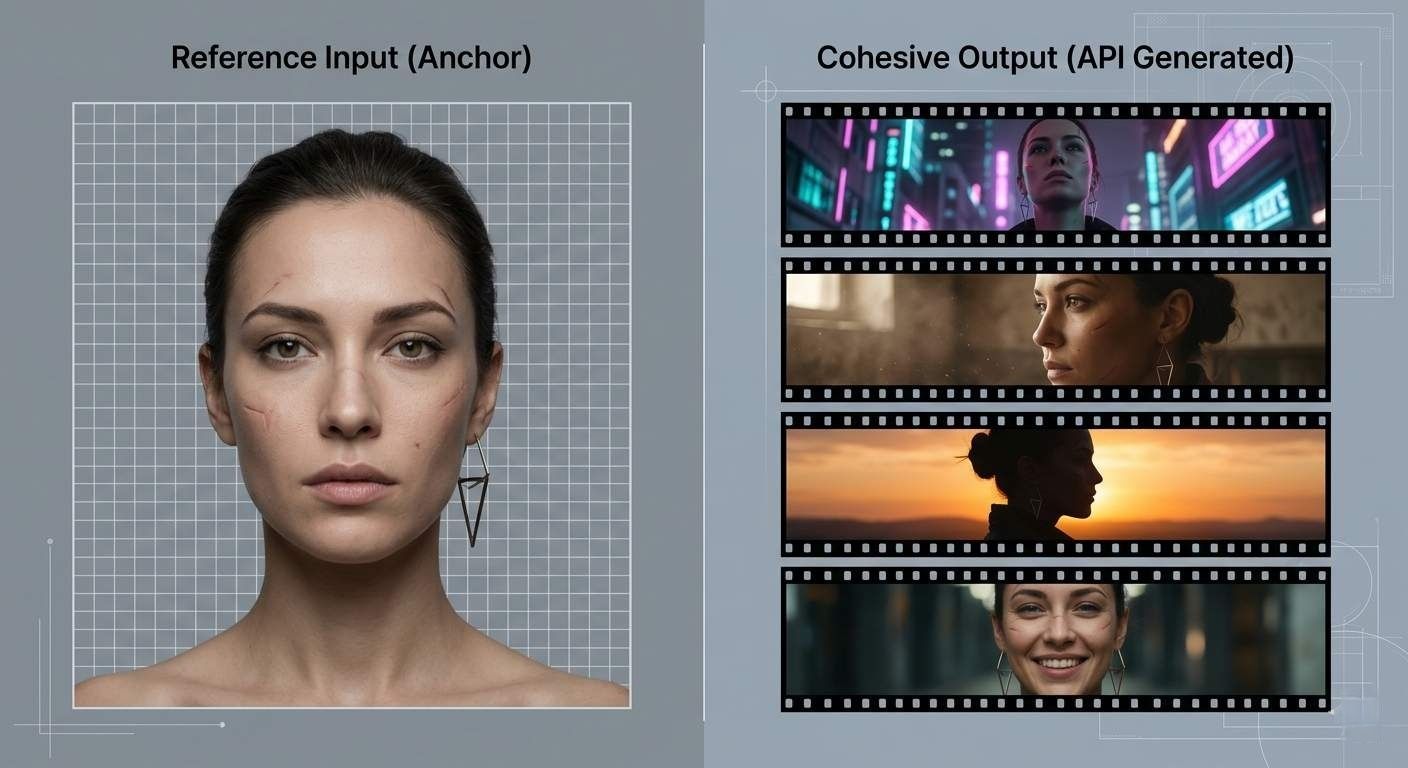

Modern APIs now use Identity Anchoring to reduce character drift. Instead of relying on a basic text prompt like "bearded man," developers supply a "Base Identity": usually a sharp photo or a 3D head model that acts as a strict constraint. Because every frame is generated against the same anchor, the face and bone structure stay the same regardless of lighting or camera angle.

Figure: Image_0.png demonstrates how a single, neutral reference portrait (the 'Anchor') forces the AI API to maintain the same identity (note the unique scar and earring) across diverse, dynamic scenes, including changes in perspective, lighting, and environment.
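In practice, anchoring comes down to sending the same reference with every shot request. The sketch below reuses the `model`, `prompt`, and `image` field names from the Seedance request in this article; the helper name `build_shot_payloads` and the anchor URL are hypothetical, and whether a given model treats `image` as a first frame or as an identity reference depends on that model.

```python
ANCHOR_IMAGE = "https://example.com/anchors/hero-neutral.png"  # hypothetical URL


def build_shot_payloads(anchor_image, shot_prompts,
                        model="bytedance/seedance-2.0/image-to-video"):
    """Return one request payload per shot, all sharing the same anchor image."""
    if not anchor_image:
        raise ValueError("an anchor image is required")
    return [
        {"model": model, "prompt": prompt, "image": anchor_image}
        for prompt in shot_prompts
    ]


payloads = build_shot_payloads(ANCHOR_IMAGE, [
    "The character walks through a rainy market at night.",
    "Close-up: the character laughs in warm morning light.",
])
```

Only the prompt changes between shots; the anchor is defined once and never retyped.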

The Role of LoRAs and IP-Adapters

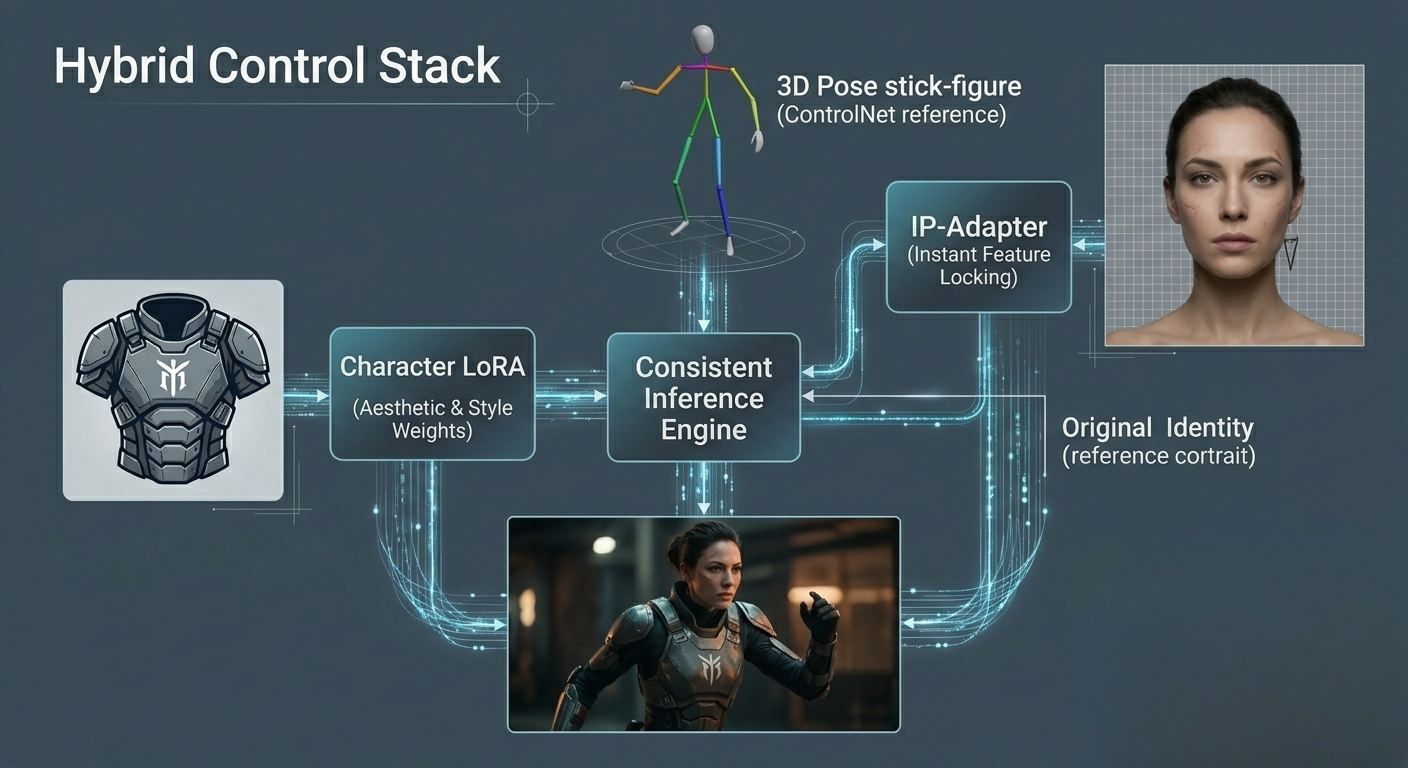

To achieve "State-of-the-Art" consistency, technical pipelines leverage two key components:

- LoRAs (Low-Rank Adaptation): These are small, fine-tuned weight layers that "lock" a character's specific aesthetic, such as unique skin textures or clothing patterns.

- IP-Adapters: Unlike LoRAs, which require training, IP-Adapters allow for instant "zero-shot" identity injection.
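For readers unfamiliar with the term, "low-rank" means a LoRA leaves the base weight matrix W frozen and learns two much smaller matrices B and A, so the adapted weight is W + scale · (B · A). The pure-Python sketch below illustrates that arithmetic on toy matrices; the function names are illustrative and real pipelines would use a tensor library.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def apply_lora(W, B, A, scale=1.0):
    """Return W + scale * (B @ A); W is d x k, B is d x r, A is r x k."""
    delta = matmul(B, A)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(W, delta)]


def lora_param_count(d, k, r):
    """Trainable parameters: full fine-tune vs. a rank-r LoRA update."""
    return {"full": d * k, "lora": r * (d + k)}


W = [[1.0, 0.0], [0.0, 1.0]]   # frozen base weights (2 x 2)
B = [[0.5], [1.0]]             # learned, 2 x 1
A = [[2.0, 4.0]]               # learned, 1 x 2
W_adapted = apply_lora(W, B, A, scale=0.5)
counts = lora_param_count(d=4096, k=4096, r=16)
```

For a 4096 x 4096 layer at rank 16, the LoRA trains 131,072 values instead of roughly 16.8 million, which is why a character LoRA is small enough to swap in per project.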

The most stable professional workflows now use a "Hybrid Stack":

| Component | Technical Function | Target Consistency |
| --- | --- | --- |
| Identity LoRA | General body shape & vibe | 70% |
| PuLID / IP-Adapter | Precise facial feature locking | 90% |
| ControlNet | Spatial & pose regulation | 95%+ |

image_1.png visually illustrates how multiple constraints are applied. We see the spatial control (ControlNet/Pose), the specific character features (IP-Adapter referencing the image), and the specialized aesthetic weights (LoRA for the armor) combining to generate a consistent character in a new context.

Seed Trajectories and Latent Space Locking

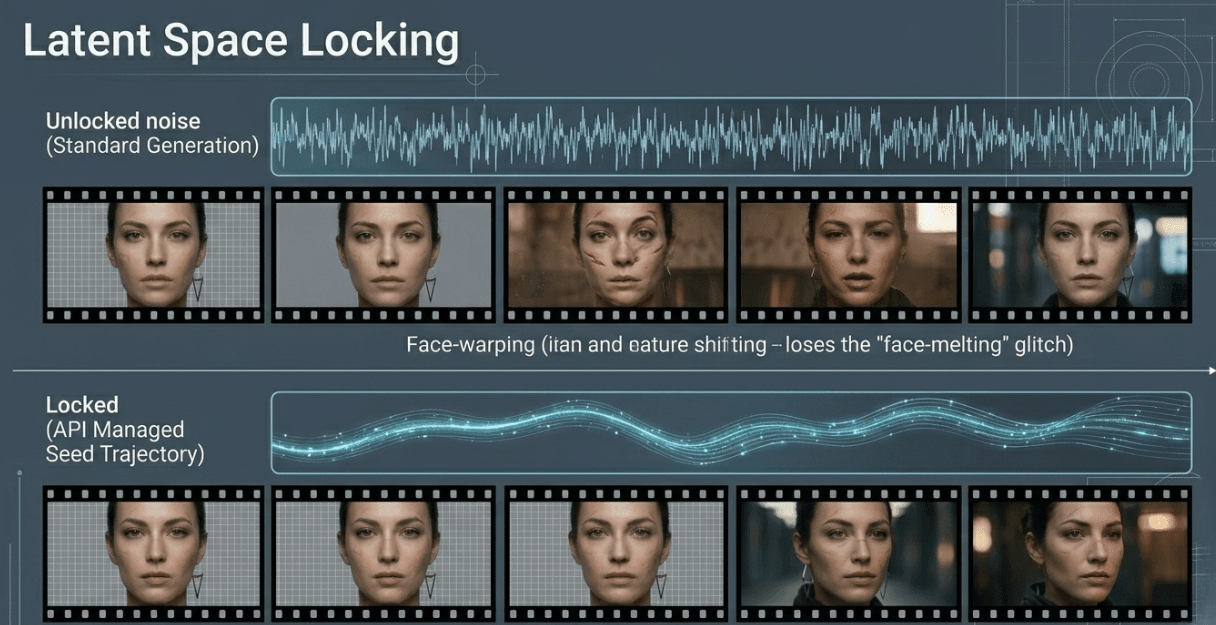

A high-value technical breakthrough is the use of Latent Space Locking. Every AI generation begins with a "Seed" (random noise). By keeping the noise pattern or "Seed Trajectory" consistent across frames, APIs prevent "face-melting" transitions. This method ensures the math behind the pixels evolves smoothly, allowing characters to move through complex environments without losing their visual integrity.

By mixing these three parts together, creators can finally make shows where the main character looks the same in every episode. The face stays perfectly consistent from the very first scene all the way to the end of the season.

Image_2.png provides a side-by-side comparison. The top timeline (standard noise) shows the character's face from image_0.png 'melting'—features, expression, and even identity shift. The bottom timeline (locked noise) shows the face remaining nearly 95% identical, displaying only natural evolution (like a head turn) thanks to the mathematical constraints applied by the API.
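The idea of a seed trajectory can be illustrated without any video model. In the sketch below, a fixed seed makes the starting noise reproducible, and each frame's noise is a small perturbation of the previous frame's rather than a fresh draw. This is one simple way to get correlated noise, assumed here for illustration; the article does not specify how any particular API implements it.

```python
import math
import random


def noise_trajectory(seed, size, num_frames, step=0.1):
    """Per-frame Gaussian noise that evolves gradually from a seeded start.

    Each frame mixes the previous frame's noise with a little fresh noise;
    the sqrt(1 - step^2) factor keeps the variance roughly constant.
    """
    rng = random.Random(seed)
    keep = math.sqrt(1.0 - step * step)
    frames = [[rng.gauss(0.0, 1.0) for _ in range(size)]]
    for _ in range(num_frames - 1):
        frames.append([keep * p + step * rng.gauss(0.0, 1.0) for p in frames[-1]])
    return frames


def correlation(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


locked = noise_trajectory(seed=7, size=512, num_frames=6)
unrelated = noise_trajectory(seed=8, size=512, num_frames=6)
adjacent_corr = correlation(locked[0], locked[1])      # close to 1
unrelated_corr = correlation(locked[0], unrelated[0])  # close to 0
```

Adjacent frames in the locked trajectory stay highly correlated, while noise drawn from a different seed is essentially unrelated, which is the difference between the two timelines in the comparison above.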

Revolutionizing the Episodic Production Pipeline

The integration of character-consistent AI video APIs has fundamentally shifted the economic landscape of episodic media. The big win here isn't just about "speed" anymore. It's about letting anyone make high-quality stories. These tools handle the hard work of keeping visuals the same. This lets small creators and tiny studios produce work that looks just as good as big Hollywood movies.

The New Production Paradigm

Historically, creating a consistent character for an animated series required a massive upfront investment in 3D modeling, rigging, and texture mapping. If a character’s design changed mid-season, the "technical debt" could derail an entire production.

Modern AI workflows replace these rigid assets with dynamic, fine-tuned weights. Production teams utilizing AI-native pipelines have reported a 70–90% reduction in total overhead.

Efficiency Benchmark: Traditional vs. AI-Native

The table below illustrates the disruption across key performance indicators for a standard 22-minute episode:

| Feature | Traditional Animation/CGI | AI-Video API Workflow |
| --- | --- | --- |
| Character Setup | Months of modeling/rigging | 2–4 Hours of LoRA training |
| Cost per Episode | $100,000 – $1M+ | $500 – $5,000 |
| Iteration Speed | Weeks (Rendering time) | Minutes (Inference time) |
| Consistency | Perfect (Hand-coded) | High (API-constrained 95%+) |

While traditional methods still hold the edge for pixel-perfect precision, the Inference-over-Rendering model allows creators to generate first drafts in minutes. This "Time Compression" enables studios to publish 42% more content monthly, transforming episodic content from a slow-moving luxury into an agile, responsive medium.

Case Study: The Rise of "Micro-Series" and Virtual Influencers

We are moving from random clips to real stories, and it has created a new trend: the AI "Micro-Series." By using smart video tools that keep characters looking the same, people are making shows that look as good as regular cartoons. The best part is it takes way less time and costs much less money to do.

The Indie Revolution: 20 Episodes in 20 Days

Independent creators on platforms like TikTok and YouTube Shorts are no longer limited by the "identity drift" that previously plagued AI-generated footage. Using unified platforms like Atlas Cloud to orchestrate models such as Seedance 2.0 or Kling 3.0, a single creator can define a "Character ID" once and reuse it across an entire season.

This technical leap has enabled the rise of serialized storytelling where:

- Production Speed: Creators are launching 20-episode micro-series in weeks rather than the 12–18 months required for traditional CGI.

- Engagement: Virtual influencers now capture a 4.2% market share with engagement rates averaging 5.67%—nearly triple that of their human counterparts.

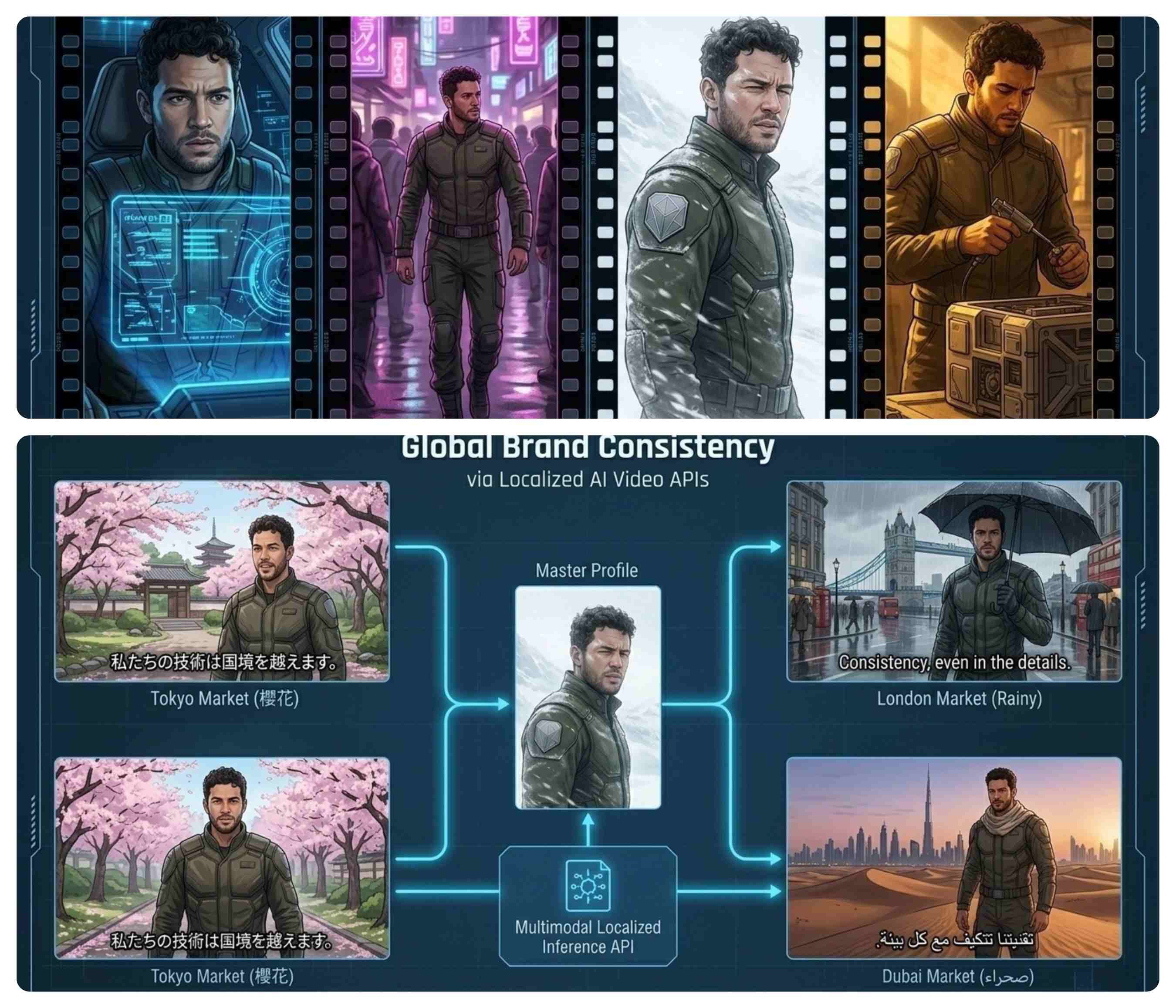

Global Brand Consistency and AI Spokespersons

For global enterprises, the "identity crisis" was once a brand safety risk. Today, companies utilize AI video APIs to maintain a consistent "Virtual Spokesperson" across diverse markets. By calling a centralized character embedding through an API, a brand can generate localized content where the spokesperson remains visually identical while speaking different languages or appearing in culturally specific settings.

| Benefit | Impact on Global Brands |
| --- | --- |
| Visual Fidelity | Identity remains 95%+ identical across all regions. |
| Localization | Real-time lip-syncing and language translation via localized API calls. |
| Risk Management | 0% controversy risk compared to human celebrity ambassadors. |

Market Growth Trends

The economic impact of this consistency is staggering. Industry data highlights a fundamental shift in brand spending toward these persistent digital assets:

- Market Size: The virtual influencer market reached $4.6 billion in early 2026.

- Efficiency: Per-post production costs for AI-consistent characters are 38% lower than those involving human influencers.

- Adoption: 92% of brands are now using or actively testing AI workflows for episodic marketing.

By treating character identity as a scalable digital asset, AI video APIs have moved beyond the "toy" phase, becoming the backbone of a new, highly efficient episodic economy.

How to Make Your Workflow Consistent

Moving from just playing with AI clips to making real shows takes a new plan. You need a workflow that is organized and easy to grow. The industry standard has moved toward "One-Key Access" architectures that utilize multimodal inputs to anchor visual identity. By leveraging unified AI video APIs, creators can maintain character continuity across diverse scenes without manual frame-by-frame editing.

Step 1: Define the Master Identity

The foundation of any consistent series is the Master Identity. Instead of just typing out text descriptions, creators now use a mix of files. They usually take a sharp reference photo and pair it with a 3D map or a character LoRA. This "Identity Anchor" keeps things steady. It makes sure the face, tiny scars, or even shirt patterns stay exactly the same in every shot.

Step 2: Orchestrate via Atlas Cloud

Rather than juggling separate API keys and incompatible data formats for different models, professional pipelines now utilize the Atlas Cloud unified API. This orchestration layer allows for seamless model swapping while maintaining the same core codebase.

For example, a creator can call the Seedance 2.0 "Universal Reference" system via Atlas Cloud to lock in character features for a complex 15-second action sequence. If a specific shot requires the superior fluid motion of Kling 3.0 or the photorealistic cinematic lighting of Veo 3.1, the developer can simply toggle the model parameter within the Atlas Cloud environment.

| Workflow Stage | Tooling Example | Key Advantage |
| --- | --- | --- |
| Model Swapping | Kling 3.0 ↔ Veo 3.1 | Optimized performance per shot type |
| Identity Locking | Seedance 2.0 Ref | Permanent facial & clothing persistence |
| Integration | Atlas Cloud SDK | Unified endpoint; no fragmented keys |
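Toggling the model parameter might look like the sketch below, where a shot's type selects the model and everything else in the request stays the same. Only the Seedance model ID appears in this article; the Kling and Veo IDs are placeholders and must be replaced with the real identifiers from the platform's model list. The `payload_for_shot` helper and the shot-type names are likewise hypothetical.

```python
SEEDANCE = "bytedance/seedance-2.0/image-to-video"  # ID used in this article

MODEL_BY_SHOT_TYPE = {
    "identity": SEEDANCE,
    "action": "PLACEHOLDER/kling-3.0",    # hypothetical ID, not a real model name
    "cinematic": "PLACEHOLDER/veo-3.1",   # hypothetical ID, not a real model name
}


def payload_for_shot(shot, anchor_image):
    """Build a request body; only the `model` field changes between shot types."""
    model = MODEL_BY_SHOT_TYPE.get(shot["type"], SEEDANCE)
    return {"model": model, "prompt": shot["prompt"], "image": anchor_image}


shots = [
    {"type": "identity", "prompt": "The hero introduces herself to camera."},
    {"type": "action", "prompt": "The hero sprints across a collapsing bridge."},
    {"type": "unknown", "prompt": "The hero rests by a campfire."},
]
requests_to_send = [payload_for_shot(s, "asset://<ASSET_ID>") for s in shots]
```

Unknown shot types fall back to the Seedance model, so a typo in the shot plan never produces a request without a model.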

Seedance 2.0 image-to-video code example:

```python
import os
import time

import requests

# Read the API key from the environment; "$ATLASCLOUD_API_KEY" inside a
# Python string would be sent literally rather than expanded.
API_KEY = os.environ["ATLASCLOUD_API_KEY"]

# Step 1: Start video generation
generate_url = "https://api.atlascloud.ai/api/v1/model/generateVideo"
headers = {
    "Content-Type": "application/json",
    "Authorization": f"Bearer {API_KEY}",
}
data = {
    "model": "bytedance/seedance-2.0/image-to-video",  # Required. Model name
    # Text prompt describing the desired video motion.
    # default: "The scene comes alive with gentle motion and cinematic lighting"
    "prompt": (
        "A smooth, futuristic ship is floating slowly around a massive planet. "
        "You can see the planet’s bright clouds and glowing air from out in space. "
        "The background is full of stars and colorful gas clouds. "
        "The ship moves steadily along its path, looking like a big sci-fi movie scene. "
        "The lighting feels deep and real as the camera follows the ship."
    ),
    # Required. First-frame image URL, Base64, or asset reference (asset://<ASSET_ID>)
    "image": "https://static.atlascloud.ai/media/images/454eee7f1a05a0bf276afe2e056200ba.png",
    # Last-frame image URL, Base64, or asset reference.
    # "example_value" is a placeholder: replace it or remove this key.
    "last_image": "example_value",
    "duration": 5,  # Video duration in seconds (4-15), or -1 for model to choose automatically
    "resolution": "720p",  # Video resolution. options: 480p | 720p | 1080p
    "ratio": "adaptive",  # Aspect ratio
    "generate_audio": True,  # Whether to generate synchronized audio
    "watermark": False,  # Whether to add a watermark
    "return_last_frame": False,  # Whether to return the last frame as a separate image
}

generate_response = requests.post(generate_url, headers=headers, json=data)
generate_response.raise_for_status()  # Surface HTTP errors before parsing the body
generate_result = generate_response.json()
prediction_id = generate_result["data"]["id"]

# Step 2: Poll for result
poll_url = f"https://api.atlascloud.ai/api/v1/model/prediction/{prediction_id}"


def check_status():
    while True:
        response = requests.get(poll_url, headers={"Authorization": f"Bearer {API_KEY}"})
        result = response.json()
        status = result["data"]["status"]

        if status in ["completed", "succeeded"]:
            print("Generated video:", result["data"]["outputs"][0])
            return result["data"]["outputs"][0]
        elif status == "failed":
            raise Exception(result["data"].get("error") or "Generation failed")
        else:
            # Still processing, wait 2 seconds
            time.sleep(2)


video_url = check_status()
```
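The polling loop above waits a fixed 2 seconds and never gives up. For longer renders, a bounded backoff is a common alternative. The sketch below is one way to do it: `fetch_status` is a caller-supplied function (hypothetical) that returns the `data` dictionary from the prediction endpoint, and the delay values are arbitrary defaults rather than platform recommendations.

```python
import time


def backoff_delays(initial=2.0, factor=1.5, max_delay=30.0, timeout=600.0):
    """Yield sleep intervals that grow geometrically until `timeout` seconds are used."""
    delay, total = initial, 0.0
    while total < timeout:
        wait = min(delay, max_delay, timeout - total)
        yield wait
        total += wait
        delay *= factor


def poll_until_done(fetch_status, sleep=time.sleep, **backoff):
    """Call `fetch_status()` until it reports a terminal status or time runs out."""
    for wait in backoff_delays(**backoff):
        data = fetch_status()
        if data["status"] in ("completed", "succeeded"):
            return data["outputs"][0]
        if data["status"] == "failed":
            raise RuntimeError(data.get("error") or "Generation failed")
        sleep(wait)  # still processing
    raise TimeoutError("generation did not finish in time")
```

Passing `sleep` as a parameter keeps the helper easy to test, since a test can record the waits instead of actually sleeping.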

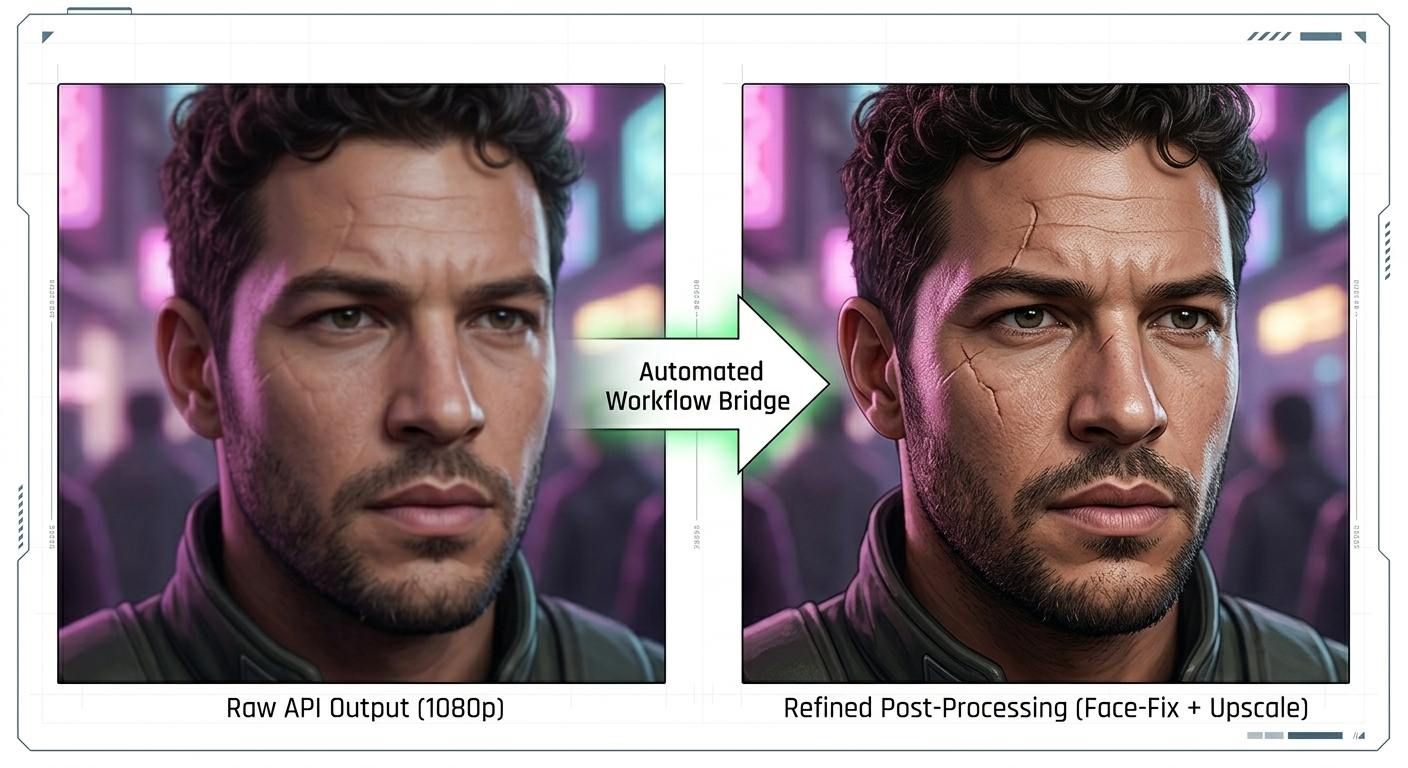

Step 3: Post-generation Refinement

To achieve "4K broadcast-ready" quality, the final stage involves an automated post-processing bridge. Using Atlas Cloud’s asynchronous webhook architecture, the system can automatically trigger external enhancement tasks the moment a 1080p render is complete.
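The webhook payload format is not described in this article, so the sketch below assumes it mirrors the polling response (a `data` object with `status` and `outputs`). Under that assumption, a receiver could turn each completion event into a list of follow-up jobs; the task names and the `plan_post_processing` helper are hypothetical.

```python
def plan_post_processing(event):
    """Turn an assumed completion-webhook body into a list of follow-up jobs."""
    data = event.get("data", {})
    if data.get("status") not in ("completed", "succeeded"):
        return []  # ignore in-progress or failed renders
    jobs = []
    for video_url in data.get("outputs", []):
        jobs.append({"task": "temporal_smoothing", "input": video_url})
        jobs.append({"task": "upscale_4k", "input": video_url})
    return jobs


# Example event, shaped like the polling response used earlier in this article.
event = {"data": {"id": "abc123", "status": "completed",
                  "outputs": ["https://example.com/render-1080p.mp4"]}}
jobs = plan_post_processing(event)
```

Keeping the planning step as a pure function means it can sit behind whichever HTTP framework receives the webhook.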

Common automated post-processing tasks include:

- Temporal Smoothing: Eliminating micro-fluctuations in character features.

- External 4K Upscaling: Passing the 1080p API output through a specialized super-resolution model.

- Audio-Visual Sync: Using Vidu Q3 integration to automatically time sound effects to character actions.
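To make the first item concrete: temporal smoothing typically means filtering some per-frame measurement so that small frame-to-frame jitter is damped. The sketch below applies an exponential moving average to a hypothetical track (for example, the x-coordinate of a facial landmark); production tools operate on pixels or latents, so treat this only as an illustration of the principle.

```python
def smooth_track(values, alpha=0.3):
    """Exponential moving average over a per-frame measurement.

    Lower `alpha` smooths more strongly but reacts more slowly to real motion.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    for value in values:
        if not smoothed:
            smoothed.append(float(value))
        else:
            smoothed.append(alpha * value + (1.0 - alpha) * smoothed[-1])
    return smoothed


# Hypothetical landmark position that jitters around 100 px.
raw = [100, 104, 97, 103, 98, 102]
smoothed = smooth_track(raw)
raw_spread = max(raw) - min(raw)
smoothed_spread = max(smoothed) - min(smoothed)
```

The smoothed track keeps the same overall position while its frame-to-frame spread is a fraction of the raw one.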

By using this three-step process with APIs, teams can handle 85% of the visual work automatically. This lets you create high-quality shows in just a few minutes while keeping everything looking the same.

Future Outlook: The End of the "Uncanny Valley"?

As we look toward the latter half of 2026, the evolution of AI video APIs is moving beyond pre-rendered episodic content toward a "Live Identity" paradigm. The technical barriers that once created the "uncanny valley"—micro-stutters and lighting inconsistencies—are being eroded by real-time neural rendering.

The Shift to Real-Time Consistent Video

The next frontier is the transition from static generation to Live AI Avatars. Later versions of these tools will likely work in under 100ms. This means characters can look the same while they chat with you in real time. It will change how we tell stories. People will be able to talk to characters during live streams or pick their own paths in a show. Even when the story changes based on what you do, the character will still look perfect.

The Ethical Layer: Protecting Identity Rights

With the ability to perfectly replicate a character—or a person—comes a significant legal challenge. The industry is currently developing "Identity Rights" frameworks to prevent unauthorized digital cloning. In 2026, we are seeing the emergence of:

- On-Chain Identity Verification: Using blockchain to "sign" a character’s unique weight profile.

- Watermarking Standards: Mandatory SynthID-style watermarking for all API-generated identities to distinguish between human and synthetic actors.
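As a rough illustration of what "signing" a weight profile could involve, the sketch below fingerprints a serialized weight file with SHA-256 and attaches a keyed tag. It is an assumption-laden stand-in: a real on-chain scheme would use public-key signatures and a ledger, neither of which is shown here, and HMAC is used only because it ships with Python's standard library.

```python
import hashlib
import hmac


def weight_fingerprint(weight_bytes):
    """SHA-256 digest of the serialized weights, as a hex string."""
    return hashlib.sha256(weight_bytes).hexdigest()


def sign_fingerprint(fingerprint, secret_key):
    """HMAC-SHA256 tag binding the fingerprint to the holder of `secret_key`."""
    return hmac.new(secret_key, fingerprint.encode("ascii"), hashlib.sha256).hexdigest()


def verify_weights(weight_bytes, tag, secret_key):
    """True if `weight_bytes` still match the tag issued for the original weights."""
    expected = sign_fingerprint(weight_fingerprint(weight_bytes), secret_key)
    return hmac.compare_digest(expected, tag)


original = b"abc"                # stand-in for the bytes of a LoRA file
key = b"studio-secret"           # hypothetical key held by the rights owner
tag = sign_fingerprint(weight_fingerprint(original), key)
```

Any change to the weights, even a single byte, produces a different fingerprint and fails verification.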

FAQ

What is character consistency in AI video?

Character consistency means an AI model can keep a subject looking exactly the same. It makes sure the face, hair, and clothes stay the same across different angles and settings. In real show production, this is what turns a bunch of random clips into a solid, connected story.

Which AI video APIs support character consistency?

While many models are entering the market, the current leaders providing robust consistency controls via API include:

- LTX Studio: Focused on cinematic "scene-to-scene" character locking.

- Magic Hour: A popular choice for creators focusing on consistent character animation and face-swapping.

- Atlas Cloud: A unified platform that orchestrates multiple models through a single consistency-focused endpoint.

Can I use my own face for character consistency?

Yes. Through "Character Cameo" features and IP-Adapters, you can upload a reference portrait of yourself. The API then extracts your "facial latent weights" and applies them to the digital protagonist, ensuring you remain the consistent lead throughout the episode.
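The Seedance example in this article notes that the `image` field accepts a URL, Base64, or an asset reference. A minimal sketch for preparing a local portrait as Base64 follows; whether the API expects the raw Base64 string or a `data:` URI prefix is not stated in the article, so check the platform documentation before relying on this. The helper name is hypothetical.

```python
import base64
from pathlib import Path


def encode_reference_image(path):
    """Read a local image file and return its contents as a Base64 string."""
    return base64.b64encode(Path(path).read_bytes()).decode("ascii")
```

The returned string could then be placed in the request's `image` field in place of a URL.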