DeepSeek LLM Models

DeepSeek, developed by the deepseek-ai team, is a cutting-edge series of open-source generative AI models engineered to democratize access to high-performance computing through a cost-effective and efficiency-first strategy. Its flagship reasoning model, DeepSeek-R1, made waves by rivaling top-tier proprietary models in mathematics, programming, and complex logical deduction, while DeepSeek-V3.2 is designed for seamless daily interaction and autonomous Agent workflows. By significantly lowering the barrier to entry for advanced AI, DeepSeek has become a cornerstone for the "vibe coding" movement and a transformative tool in specialized fields like academic research and high-level technical problem-solving.

Explore Leading Models

Atlas Cloud provides you with the latest industry-leading creative models.

What Makes DeepSeek LLM Models Stand Out

Open Power

Top-tier, fully open-source models that ensure transparency and control.

Architectural efficiency

Uses advanced Mixture-of-Experts (MoE) technology to deliver leading performance at a fraction of the cost.

Purpose-built versatility

From the versatile V3.1 to the specialized reasoning of R1, DeepSeek offers models for every task.

Developer-centric freedom

Permissively licensed for unrestricted commercial use, encouraging innovation without barriers.

Proven performance

Consistently achieves state-of-the-art results on industry benchmarks for programming and reasoning.

The practical alternative

Offers the power of leading proprietary models with the affordability and flexibility of open source.

| Model | Description |
|---|---|
| DeepSeek V3.2 | DeepSeek V3.2 is a flagship general-purpose LLM that combines sparse attention mechanisms with a robust 163.8K context window; with highly competitive base pricing, it serves as the cornerstone for daily workflows, including complex general reasoning and building multi-step task-scheduling Agents. |
| DeepSeek V3.2 Speciale | DeepSeek V3.2 Speciale is positioned as a high-performance custom LLM with a 163.8K context window and a premium tiered pricing structure ($0.4 input / $1.2 output), designed for latency-sensitive core business nodes that require definitive output quality, such as intelligent customer service for high-net-worth clients or millisecond-level quantitative analysis. |
| DeepSeek V3.2 Exp | DeepSeek V3.2 Exp is a cutting-edge experimental build based on the V3.2 architecture. It integrates the latest algorithmic features while keeping a 163.8K context and comparable costs, which suits R&D teams doing technical pre-research and canary testing to validate next-generation AI capabilities ahead of future products. |
| DeepSeek-V3.1 | DeepSeek-V3.1 is the latest generation of high-performance open-source ecosystem models, striking a new balance between performance and cost within a 131.1K context; as the top choice for commercial deployment projects, it acts as the backbone for scenarios that need both high-quality generation and controllable costs. |
| DeepSeek V3.1 Terminus | DeepSeek V3.1 Terminus is the definitive, long-term stable form of the V3.1 series. It keeps parameters and pricing identical to the standard version and aims to provide a consistently stable output style and logic for endpoint services in smooth, consumer-facing production environments. |
| DeepSeek-V3-0324 | DeepSeek-V3-0324 is a specific historical snapshot version with a 131.1K context and the lowest text input cost available. It is used mainly for maintaining legacy systems that require absolute behavioral consistency, or for batch-processing tasks with massive input throughput but moderate output-logic requirements. |
| DeepSeek-R1-0528 | DeepSeek-R1-0528 is positioned as a top-tier deep reasoning model with a 131.1K context and the highest compute cost ($0.55/$2.15). It represents the peak of logical reasoning capability and is used exclusively for critical "brainstorming" tasks such as complex mathematical modeling and advanced code architecture generation. |
| DeepSeek OCR | DeepSeek OCR is a dedicated visual multimodal LLM that supports dual image-text input with a short 8.2K context and ultra-low usage costs, well suited to automated data-entry workflows such as digitizing large volumes of scanned documents and structured extraction from financial receipts. |
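To make the quoted prices concrete, here is a minimal cost-estimation sketch. It uses only the two price pairs that appear in the table; the billing unit (USD per 1M tokens) is an assumption, since the table does not state one, and `estimate_cost` is a hypothetical helper, not part of any Atlas Cloud SDK.

```python
# Input/output prices as quoted in the table above.
# ASSUMPTION: prices are USD per 1,000,000 tokens (the table gives no unit).
PRICES_USD = {
    "DeepSeek V3.2 Speciale": (0.40, 1.20),
    "DeepSeek-R1-0528": (0.55, 2.15),
}
TOKENS_PER_UNIT = 1_000_000  # assumed billing unit


def estimate_cost(model, input_tokens, output_tokens):
    """Estimate the cost in USD of one request for a model in PRICES_USD."""
    input_price, output_price = PRICES_USD[model]
    total = input_tokens * input_price + output_tokens * output_price
    return total / TOKENS_PER_UNIT


# Example: a 20,000-token prompt that produces a 4,000-token answer.
for name in PRICES_USD:
    print(f"{name}: ${estimate_cost(name, 20_000, 4_000):.4f}")
```

Under that unit assumption, the same request costs roughly one and a half times as much on R1-0528 as on V3.2 Speciale, which is the trade-off the table describes.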

New Features of DeepSeek LLM Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.

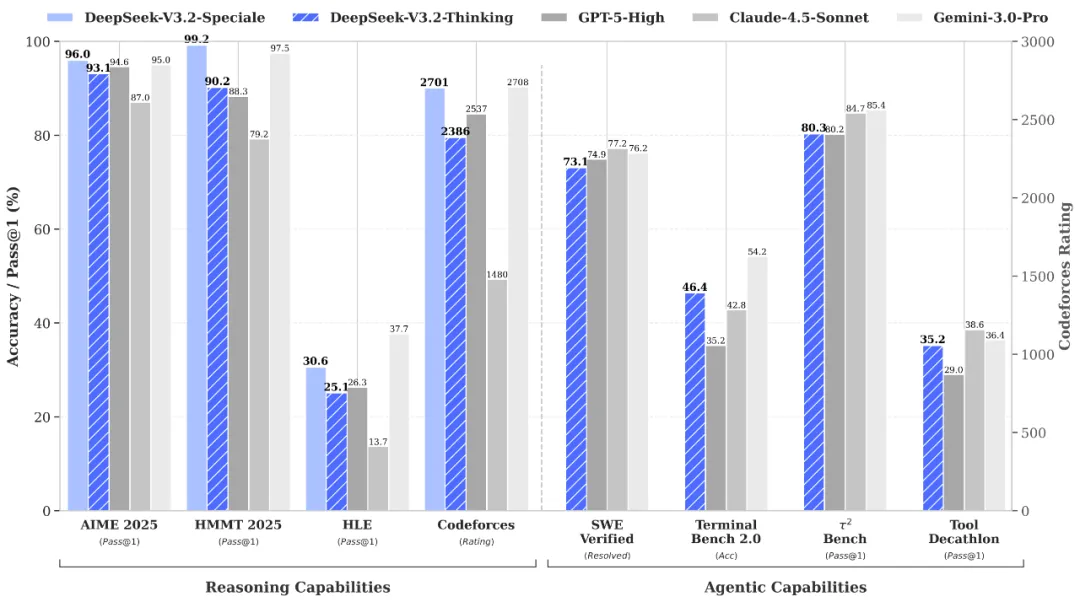

World-class reasoning and verification through the DeepSeek-V3.2-Speciale API

DeepSeek-V3.2-Speciale is the "long-thought" enhanced variant of the V3.2 architecture, integrating advanced theorem-proving capabilities from DeepSeek-Math-V2. Engineered for extreme precision, this model excels in rigorous mathematical proofing, complex logical verification, and superior instruction following, rivaling the performance of Gemini-3.0-Pro in mainstream reasoning benchmarks. It is the premier choice for academic research, automated formal verification, and high-stakes technical problem-solving where logical integrity is non-negotiable.

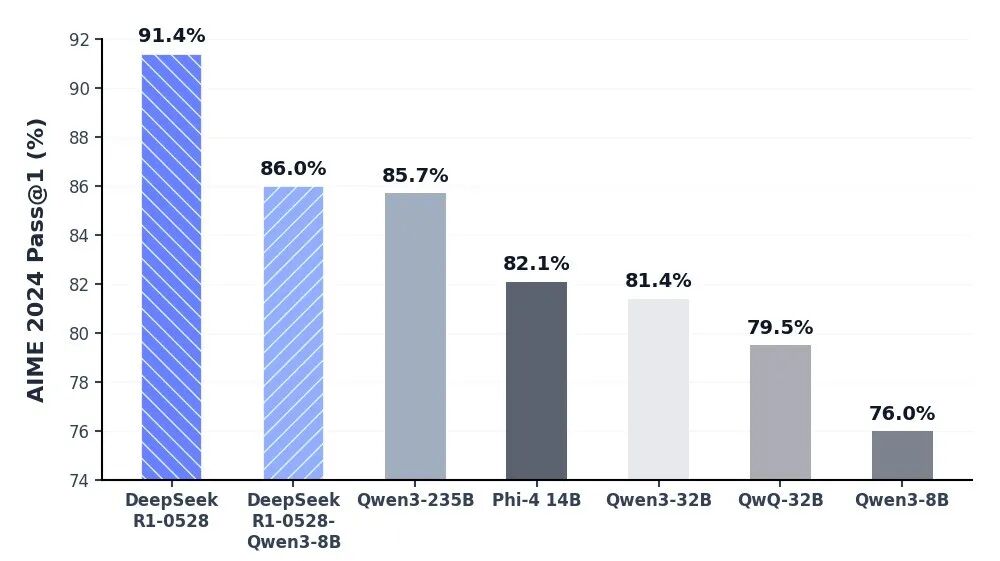

Unmatched cognitive depth via the DeepSeek-R1 API

The DeepSeek-R1 model sits at the forefront of reasoning AI, delivering industry-leading performance in mathematics, programming, and general logic. By reaching parity with elite global models such as OpenAI's o3 and Gemini-2.5-Pro, R1 has redefined what open-source intelligence can do. It is specifically optimized for deep-thinking tasks, including complex algorithm development, sophisticated data synthesis, and advanced cognitive workflows that require multi-stage deductive reasoning.

Smooth daily interaction with autonomous agent workflows through the DeepSeek V3.2 API

DeepSeek-V3.2 balances reasoning depth with execution speed and is designed to power smooth daily interactions and autonomous agent ecosystems. With significantly reduced latency and optimized output control, it serves as a robust engine for multi-step task orchestration and general-purpose AI assistants. Whether deploying enterprise-scale automation or high-frequency interactive tools, V3.2 delivers a smooth, efficient, and cost-effective user experience.

Rigorous scientific discovery and formal verification with the DeepSeek-V3.2-Speciale API

The DeepSeek-V3.2-Speciale API is engineered for tasks that demand absolute logical precision and multi-step reasoning. By integrating advanced theorem-proving capabilities, it enables researchers and engineers to execute complex mathematical inductions, verify formal logic, and solve high-tier competitive programming challenges. Perfect for academic R&D, automated code auditing, and cryptographic analysis, this API transforms abstract complexity into verifiable results with the performance of top-tier global models.

Advanced Algorithmic Synthesis & Strategic Reasoning using the DeepSeek-R1 API

DeepSeek-R1 empowers developers to build applications centered on deep cognitive workflows and strategic decision-making. Ranking at the forefront of global reasoning benchmarks, the R1 API excels in synthesizing sophisticated code architectures, processing dense technical documentation, and generating innovative solutions for open-ended logical puzzles. It is the ideal engine for AI-driven software engineering, long-form data synthesis, and any scenario where "thinking fast and slow" requires a powerful, reasoning-first foundation.

Seamless orchestration of autonomous agents with the DeepSeek-V3.2 API

For high-velocity, sensory-driven AI applications, the DeepSeek-V3.2 API provides the perfect equilibrium between reasoning depth and ultra-low latency. It is optimized for building autonomous Agents that can navigate multi-step workflows, manage real-time user interactions, and execute general-purpose tasks with GPT-5 level intelligence. This use case is tailor-made for enterprise-scale automation, intelligent customer ecosystems, and developers looking to deploy responsive, cost-effective AI assistants at scale.

Model Comparison

See how models from different providers compare: weigh performance, pricing, and unique strengths to make an informed decision.

| Model | Context | Max output | Input | Positioning |
|---|---|---|---|---|
| DeepSeek V3.2 | 163.84K | 163.84K | Text | General-purpose flagship |
| DeepSeek V3.2 Speciale | 163.84K | 163.84K | Text | High-performance custom |
| DeepSeek V3.2 Exp | 163.84K | 163.84K | Text | Experimental build |
| DeepSeek-V3.1 | 131.07K | 65.54K | Text | Open-source backbone |
| DeepSeek V3.1 Terminus | 131.07K | 65.54K | Text | Long-term stable (LTS) |
| DeepSeek-V3-0324 | 131.07K | 32.77K | Text | Historical snapshot |
| DeepSeek-R1-0528 | 131.07K | 131.07K | Text | Top-tier reasoning |
| DeepSeek OCR | 8.19K | 8.19K | Text | Dedicated multimodal |
| GLM-5 | 200K | 128K | Text | Flagship foundation model |
| MiniMax-M2.5 | 204.8K | 196.6K | Text | SOTA agentic coding |
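One practical way to read the comparison table is to filter models by whether a given request fits. The sketch below hard-codes the table's context and max-output figures (converted from "K" to tokens) and assumes the context window must hold the prompt and the generated output together. That assumption is common but is not stated in the table, and `models_that_fit` is an illustrative helper only.

```python
# (context, max_output) in tokens, transcribed from the comparison table.
MODELS = {
    "DeepSeek V3.2": (163_840, 163_840),
    "DeepSeek V3.2 Speciale": (163_840, 163_840),
    "DeepSeek V3.2 Exp": (163_840, 163_840),
    "DeepSeek-V3.1": (131_070, 65_540),
    "DeepSeek V3.1 Terminus": (131_070, 65_540),
    "DeepSeek-V3-0324": (131_070, 32_770),
    "DeepSeek-R1-0528": (131_070, 131_070),
    "DeepSeek OCR": (8_190, 8_190),
    "GLM-5": (200_000, 128_000),
    "MiniMax-M2.5": (204_800, 196_600),
}


def models_that_fit(prompt_tokens, output_tokens):
    """List models that can hold the prompt and still emit the requested output.

    ASSUMPTION: prompt and output share the context window.
    """
    return [
        name
        for name, (context, max_output) in MODELS.items()
        if output_tokens <= max_output
        and prompt_tokens + output_tokens <= context
    ]


# A 100K-token prompt with a 50K-token answer rules out the 131.07K models.
print(models_that_fit(100_000, 50_000))
```

The filter only checks capacity. Price and positioning still have to be weighed separately.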

How to Use DeepSeek LLM Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud’s platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.

Why Use DeepSeek LLM Models on Atlas Cloud

Combines advanced DeepSeek LLM Models with Atlas Cloud's GPU-accelerated platform, delivering unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low Latency:

GPU-optimized inference for real-time responses.

Unified API:

A single integration to access DeepSeek LLM Models, GPT, and Gemini.

Transparent Pricing:

Per-token billing, with Serverless mode supported.

Enterprise and Scale

Developer Experience:

SDK, data analytics, fine-tuning tools, and templates all in one.

Reliability:

99.99% uptime, RBAC permission control, compliance logs.

Security and Compliance:

SOC 2 Type II certification, HIPAA compliance, US data sovereignty.

Frequently Asked Questions about DeepSeek LLM Models

DeepSeek offers open-source transparency and superior cost-effectiveness. With reasoning capabilities (R1 and V3.2) that rival GPT-5, it provides a high-performance, lower-cost alternative with the flexibility of private deployment.

The total parameter count reflects the model's overall "brain capacity." DeepSeek's MoE design pairs a massive total parameter count (for example, 671B) for deep intelligence with an optimized "active" count for maximum operational efficiency.
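The difference between total and active parameters can be illustrated with a toy top-k router. This is a simplified sketch of the general Mixture-of-Experts idea, not DeepSeek's actual routing code: the expert count, the value of `k`, the router scores, and the "experts" themselves (plain scaling functions) are all made up for illustration.

```python
import math


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:k]
    exps = [math.exp(router_logits[i]) for i in ranked]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(ranked, exps)]


def moe_forward(x, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    routed = top_k_route(router_logits, k)
    return sum(weight * experts[i](x) for i, weight in routed)


# Eight hypothetical experts; expert i simply multiplies its input by (i + 1).
experts = [lambda x, scale=i + 1: scale * x for i in range(8)]
# Hypothetical router scores for a single token.
logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.5, 0.3]

selected = [i for i, _ in top_k_route(logits, k=2)]
print("experts used:", selected)
print("active fraction:", len(selected) / len(experts))
print("output:", moe_forward(1.0, experts, logits, k=2))
```

All eight experts count toward the model's total size, but each token pays the compute cost of only two. That is the sense in which a large total parameter count can coexist with a much smaller active count.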

Explore More Series

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation. Alibaba Group confirmed ownership of HappyHorse, developed under its Alibaba Token Hub (ATH) business unit, where it leads benchmarks outperforming ByteDance's Seedance 2.0 and others. Led by Zhang Di, the former VP of Kuaishou who architected Kling AI, the 15-billion parameter model generates 1080p video with synchronized audio in a single pass using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu, built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone. Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality, but for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics, making it well-suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances lightning-fast rendering with exceptional visual quality. With an improved price-performance ratio, it achieves breakthrough micro-detail depiction, accurate native text rendering, and complex physical structure reconstruction. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models—Kling 3.0 (Upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, Upgraded from Kling O1)—both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.