Flux.2 Image Models

Developed by Black Forest Labs, FLUX.2 is a powerhouse 32-billion parameter rectified flow Transformer model that redefines creative workflows by unifying AI image generation, editing, and composition. It transforms complex text prompts into high-fidelity visuals while offering integrated tools for professional-grade editing at resolutions up to 2K, providing a streamlined, all-in-one solution for digital artists and designers seeking unmatched precision and scalability in their visual content creation.

Explore Leading Models

Atlas Cloud provides you with the latest industry-leading creative models.

What Makes Flux.2 Image Models Stand Out


Photorealistic Quality

Generates crisp, high-resolution images with accurate lighting, textures, and detail for production use.

Fast, Lightweight Inference

Optimized architecture delivers rapid image generation on modest GPUs and edge hardware.

Fine-Grained Control

Supports styles, presets, and prompt controls so designers can quickly dial in the exact look they want.

Seamless Workflow Integration

Simple APIs and plugins connect Flux.2 to design tools, apps, and pipelines with minimal setup.

Cost-Efficient Creativity

Efficient diffusion kernels and smart caching keep generation costs low, so teams can experiment freely at scale.

Flexible Deployment Options

Run in the cloud, on-prem, or in VPC environments.

Peak Speed

Lowest Cost

| Modality | Description |
|---|---|
| Flux.2 Dev API (Text To Image, Image To Image) | The Flux.2 Dev API provides access to the world's most powerful 32-billion-parameter open-weights model, designed for sophisticated text-to-image generation and multi-input image editing. By using a unified checkpoint for both creation and modification, it streamlines professional creative workflows and offers an unmatched foundation for building advanced, customizable visual AI applications under commercial licenses. |
| Flux.2 Pro API (Text To Image, Image To Image) | The Flux.2 Pro API delivers industry-leading image quality and prompt adherence that rival the best closed-source models, while significantly reducing latency and operating costs. It provides a high-performance solution for enterprise-grade applications that require premium visual fidelity without a high price. |
| Flux.2 Flex API (Text To Image, Image To Image) | The Flux.2 Flex API gives developers granular control over generation parameters, including guidance scale and inference steps, to calibrate the balance between speed and prompt fidelity. Optimized specifically for intricate detail and precise typographic rendering, it serves as a versatile toolkit for creators who demand high-precision control over complex visual compositions and textual elements. |
| Flux.2 Klein API (Text To Image, Image To Image) | The Flux.2 Klein API offers a lightweight yet robust solution through advanced size-distillation techniques, released under the developer-friendly Apache 2.0 license. It outperforms similarly sized models trained from scratch, providing an efficient, accessible path to high-quality image generation in resource-constrained environments. |
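The speed-versus-fidelity trade-off described for the Flex variant can be sketched as a small request builder. This is only an illustration, not Atlas Cloud's documented schema: the field names (`guidance_scale`, `num_inference_steps`, `model`), the model identifier, the defaults, and the accepted ranges are all assumptions made for the example.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class FlexRequest:
    """Hypothetical request body for a Flux.2 Flex text-to-image call."""
    prompt: str
    guidance_scale: float = 4.0      # assumed default; higher values follow the prompt more closely
    num_inference_steps: int = 28    # assumed default; more steps trade speed for detail
    model: str = "flux-2-flex"       # placeholder identifier, not a confirmed model name

    def __post_init__(self):
        # Assumed sanity ranges for illustration, not documented limits.
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if not 1.0 <= self.guidance_scale <= 10.0:
            raise ValueError("guidance_scale outside assumed range [1.0, 10.0]")
        if not 1 <= self.num_inference_steps <= 50:
            raise ValueError("num_inference_steps outside assumed range [1, 50]")

    def to_payload(self) -> dict:
        """Return a plain dict that could be serialized as a JSON request body."""
        return asdict(self)


# A fast draft pass and a slower, higher-fidelity pass for the same prompt.
draft = FlexRequest("poster with the headline 'OPEN LATE'", num_inference_steps=12)
final = FlexRequest("poster with the headline 'OPEN LATE'", guidance_scale=6.0, num_inference_steps=40)
print(draft.to_payload())
print(final.to_payload())
```

Validating the parameters before sending keeps obviously bad values from costing a billed request; the real limits should come from the provider's API reference.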

New Features of Flux.2 Image Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.

Improved texture fidelity and realistic lighting with the FLUX.2 API

The FLUX.2 model draws on its 32-billion-parameter architecture to deliver sharper textures and stable lighting across all visual outputs. By optimizing light-matter interaction in latent space, users can achieve photorealistic results for high-end product visualization and professional photography. It is the definitive solution for hyperrealistic rendering, material consistency, and studio-quality digital assets.

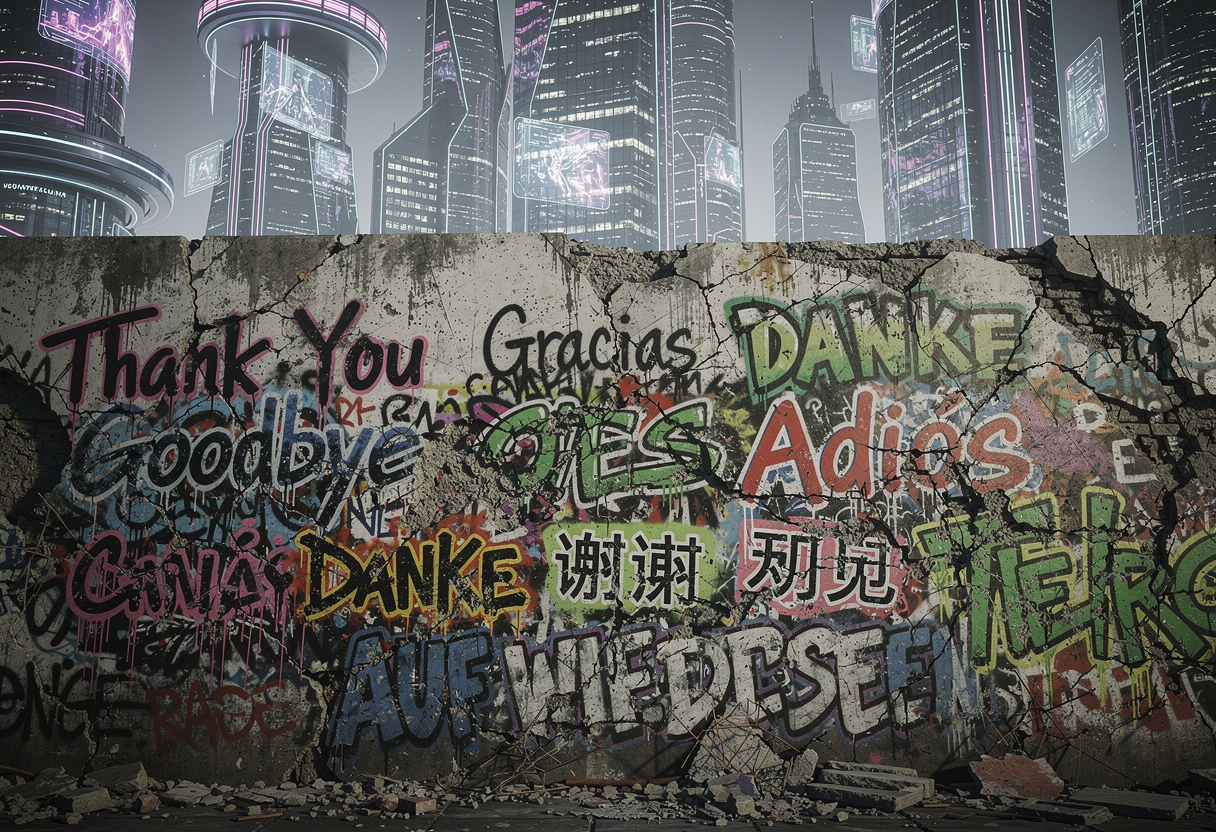

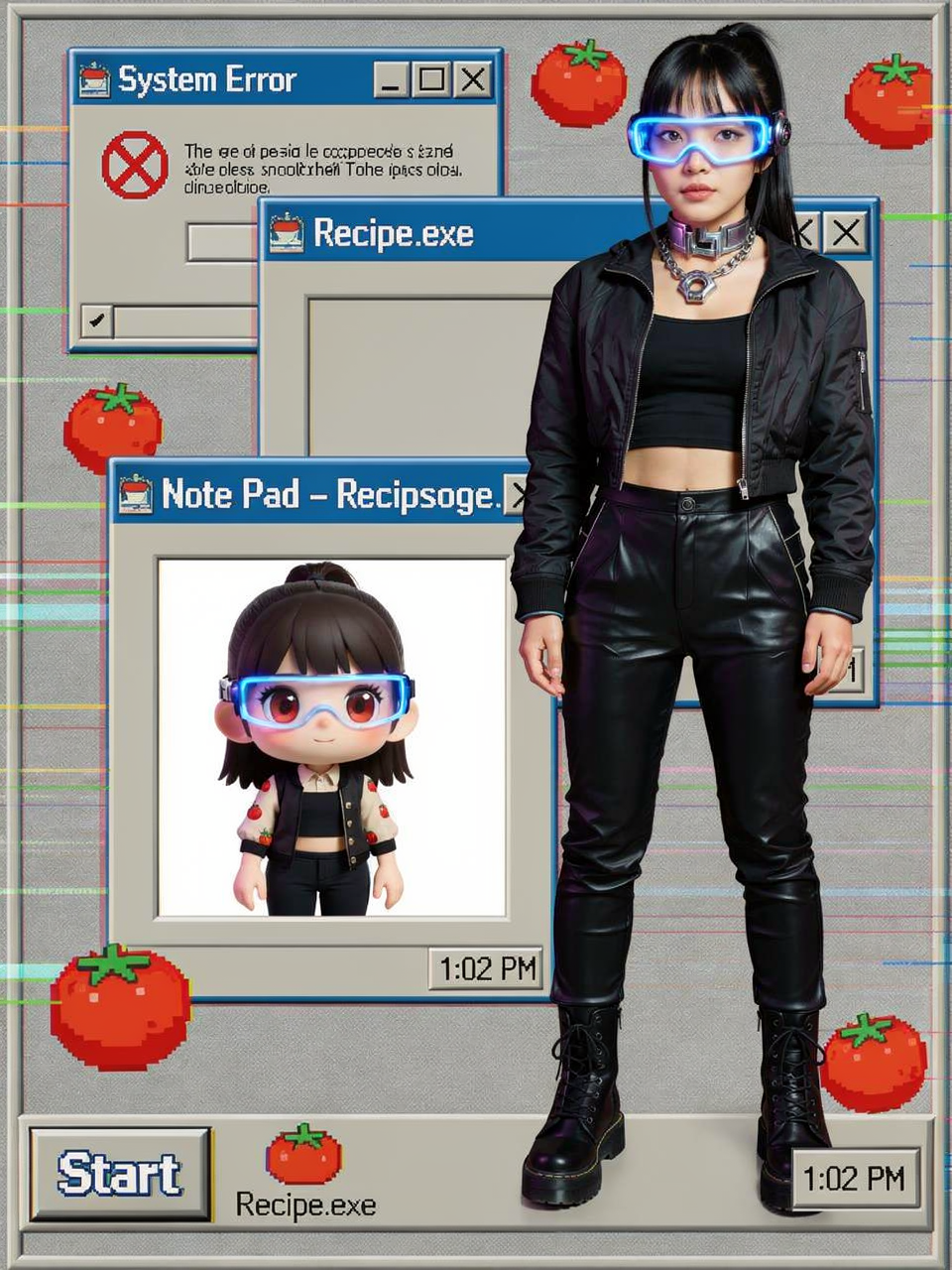

Advanced typography and graphic rendering with the FLUX.2 API

FLUX.2 supports complex typographic layouts and intricate UI simulations, ensuring that even microtext stays legible and sharp. By integrating sophisticated character-level encoding, users can accurately render infographics, memes, and branded content without character distortion. It is the definitive solution for professional graphic design, interface prototyping, and text-heavy creative compositions.

Structured prompt understanding and compositional control with the FLUX.2 API

The FLUX.2 engine provides superior logic for interpreting multi-paragraph prompts and complex spatial constraints with high fidelity. By decoding nuanced relational directives, users can precisely orchestrate multi-subject scenes and maintain strict adherence to compositional intent. It is the definitive solution for sophisticated storytelling, layered digital art, and precision-driven visual narratives.

Improved world logic and spatial awareness with the FLUX.2 API

FLUX.2 incorporates extensive world knowledge to deeply understand the physical relationships between light, space, and object behavior. By grounding each generation in realistic environmental logic, users can ensure that complex scenes behave as expected in the physical world. It is the definitive solution for architectural visualization, immersive world-building, and logically consistent scene synthesis.

What You Can Do with Flux.2 Image Models

Discover practical use cases and workflows you can build with this model family, from content creation and automation to production-grade applications.

High-fidelity photorealistic rendering with the FLUX.2 API

The FLUX.2 model lets creators and developers build ultra-realistic visual content that preserves believable textures, stable lighting, and physical accuracy. Ideal for professional product photography and architectural visualization, the 32B-parameter architecture ensures consistent surface reflections and material depth, supporting high-end marketing assets, luxury brand mockups, and studio-quality digital photography.

Precision typographic design and layout with the FLUX.2 API

For information-dense graphics, FLUX.2 renders complex typography, UI simulations, and intricate layouts with absolute clarity and zero character distortion. This use case fits graphic designers, branding experts, and social media creators requiring precise text integration in posters, infographics, and interface prototypes—ensuring even micro-fonts remain legible and perfectly aligned, powered by advanced Transformer-based semantic understanding.

Logical scene composition and 4 MP high-resolution editing

FLUX.2 offers unmatched interpretation of structured, multi-part prompts, making it possible to create sophisticated scenes with multiple subjects and complex spatial arrangements. With support for high-resolution editing up to 4 million pixels, the API enables smooth image-to-image transformations and precise local adjustments, providing an efficient end-to-end solution for professional digital artists and visionaries who demand logical coherence in large-scale creative projects.
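To make the 4-million-pixel budget concrete, the sketch below computes the largest width and height that fit within it for a given aspect ratio. The pixel budget comes from the text above; snapping both dimensions down to multiples of 16 is an assumption for illustration (a common constraint for latent-space models), not a documented FLUX.2 requirement, and `fit_dimensions` is a hypothetical helper name.

```python
import math

MAX_PIXELS = 4_000_000  # the 4 MP editing budget mentioned above
STEP = 16               # assumption: dimensions are snapped to multiples of 16


def fit_dimensions(ratio_w: int, ratio_h: int,
                   max_pixels: int = MAX_PIXELS, step: int = STEP) -> tuple:
    """Return the largest (width, height) close to ratio_w:ratio_h within max_pixels."""
    # Solve width * height = max_pixels with width / height = ratio_w / ratio_h.
    ideal_h = math.sqrt(max_pixels * ratio_h / ratio_w)
    ideal_w = ideal_h * ratio_w / ratio_h
    # Round each side down to the step so the pixel budget is never exceeded.
    # Rounding the sides independently can shift the ratio slightly.
    width = int(ideal_w // step) * step
    height = int(ideal_h // step) * step
    return width, height


for ratio in [(1, 1), (3, 2), (16, 9), (21, 9)]:
    w, h = fit_dimensions(*ratio)
    print(f"{ratio[0]}:{ratio[1]} -> {w}x{h} ({w * h:,} pixels)")
```

Rounding down rather than to the nearest multiple is a deliberate choice here: it guarantees the result stays under the budget at the cost of a few unused pixels.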

Model Comparison

See how models from different providers compare: weigh performance, pricing, and unique strengths to make an informed decision.

| Model | Reference image limit | Number of outputs | Resolution | Aspect ratios / dimensions |
|---|---|---|---|---|
| Flux.2 | 10 | 1 | 2K | 1:1 3:2 2:3 3:4 4:3 4:5 5:4 9:16 16:9 21:9 |
| Flux.1 | 1 | 1 | 256P~4K | Width[256, 4096]px; Height[256, 4096]px |
| Qwen-Image | 3 | 1~6 | 512P~2K | Width[512, 2048]px; Height[512, 2048]px |
| Nano Banana 2 | 14 | 1 | 4K, 2K, 1K | 1:1 3:2 2:3 3:4 4:3 4:5 5:4 9:16 16:9 21:9 |
| Seedream 5.0 Lite | 14 | 1~15 | 2K~4K+ | 1:1 3:2 2:3 3:4 4:3 4:5 5:4 9:16 16:9 21:9 |
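One practical use of the comparison is picking a model by how many reference images a job needs. The sketch below encodes the reference-image limits from the table as data and filters on them. The dictionary values are taken from the table; the function name `models_supporting` is hypothetical and not part of any SDK.

```python
# Reference-image limits copied from the comparison table.
REFERENCE_IMAGE_LIMITS = {
    "Flux.2": 10,
    "Flux.1": 1,
    "Qwen-Image": 3,
    "Nano Banana 2": 14,
    "Seedream 5.0 Lite": 14,
}


def models_supporting(num_reference_images: int) -> list[str]:
    """Return the models whose limit covers the requested number of reference images."""
    if num_reference_images < 0:
        raise ValueError("num_reference_images must be non-negative")
    return [
        name
        for name, limit in REFERENCE_IMAGE_LIMITS.items()
        if num_reference_images <= limit
    ]


print(models_supporting(4))   # a composition job with four reference images
print(models_supporting(12))  # a job that exceeds the Flux.2 limit
```

The same pattern extends to the other columns, such as output count or resolution, if a job has more than one constraint.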

How to Use Flux.2 Image Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud’s platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.

Why Use Flux.2 Image Models on Atlas Cloud

Combine advanced Flux.2 Image Models with Atlas Cloud's GPU-accelerated platform for unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low Latency:

GPU-optimized inference for real-time responses.

Unified API:

A single integration to access Flux.2 Image Models, GPT, Gemini, and DeepSeek.

Transparent Pricing:

Per-token billing, with support for Serverless mode.

Enterprise and Scale

Developer Experience:

SDKs, data analytics, fine-tuning tools, and templates, all in one place.

Reliability:

99.99% availability, RBAC permission control, compliance logs.

Security and Compliance:

SOC 2 Type II certification, HIPAA compliance, U.S. data sovereignty.

Frequently Asked Questions about Flux.2 Image Models

It unifies image generation, local editing, and multi-image composition. FLUX.2 is 30% to 50% faster than its predecessor and natively supports 4 MP high-resolution output, achieving photorealistic excellence in physical logic, lighting, and textures.

FLUX.2 renders sharp, accurate text even in complex scenes, supporting long paragraphs and micro-fonts. By integrating the Mistral-3 24B vision-language model, it excels at infographics, UI mockups, and text-dense brand assets.

FLUX.2 was developed by Black Forest Labs (BFL), founded by the original creators of Stable Diffusion (SDXL). The team pioneered Latent Diffusion technology and now redefines visual intelligence through a 32B-parameter Rectified Flow architecture.

Explore More Series

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation. Alibaba Group confirmed ownership of HappyHorse, developed under its Alibaba Token Hub (ATH) business unit, where it leads benchmarks, outperforming ByteDance's Seedance 2.0 and others. Developed under the lead of Zhang Di (the former VP of Kuaishou who architected Kling AI), the 15-billion-parameter model generates 1080p video with synchronized audio in a single pass using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu, built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone. Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality, but for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics, making it well-suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances lightning-fast rendering with exceptional visual quality. With an improved price-performance ratio, it achieves breakthrough micro-detail depiction, accurate native text rendering, and complex physical structure reconstruction. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models—Kling 3.0 (Upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, Upgraded from Kling O1)—both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

Open AI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.