DeepSeek-V4 Preview Launch: 1M Token Context, Agent Upgrades & Open-Source Weights

Today (April 24), DeepSeek officially launches and open-sources the preview release of DeepSeek-V4, its brand-new model series.

DeepSeek-V4 supports up to one million tokens of context and achieves leading performance among domestic (Chinese) and open-source models in agentic capabilities, world knowledge, and reasoning. The series comes in two sizes:

- DeepSeek-V4-Pro: the flagship, a massive Mixture-of-Experts (MoE) model with 1.6 trillion total parameters, of which only 49B are activated per token. That sparse activation is the key to its efficiency.

- DeepSeek-V4-Flash: the faster, more cost-efficient option. It follows the same MoE design at a much smaller scale (284B total / 13B active), which makes inference quicker and cheaper.

- Both models share the same 1M token context window and are fully open-source with API access.

| Model | Total Parameters | Activated Parameters | Pre-training Data (tokens) | Context Length (tokens) | Open Source | API Service | Web/App Access Mode |
|---|---|---|---|---|---|---|---|
| deepseek-v4-pro | 1.6T | 49B | 33T | 1M | ✓ | ✓ | Expert Mode |
| deepseek-v4-flash | 284B | 13B | 32T | 1M | ✓ | ✓ | Fast Mode |
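
The table also shows how sparse each model is. A quick back-of-the-envelope calculation, using only the total and activated parameter counts listed for each model, gives the fraction of the weights that each token actually passes through:

```python
# Figures taken from the model table (billions of parameters).
MODELS = {
    "deepseek-v4-pro": {"total_b": 1600, "active_b": 49},
    "deepseek-v4-flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of all MoE parameters that participate in computing one token."""
    return active_b / total_b


for name, p in MODELS.items():
    frac = active_fraction(p["total_b"], p["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
```

Per-token compute tracks the activated count rather than the total, which is why Flash (13B active) is cheaper to serve than Pro (49B active). Both still need their full weights resident in memory.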

Starting today, you can chat with DeepSeek-V4 at chat.deepseek.com or via the official app. The API is also live: set the model parameter to deepseek-v4-pro or deepseek-v4-flash to get started.

We covered the speculation and pre-release analysis earlier (see our DeepSeek V4 expectation guide and technical deep dive); now we have confirmed, official details straight from the source. The following covers exactly what shipped, what's new, and what it means if you're building with or evaluating AI models today.

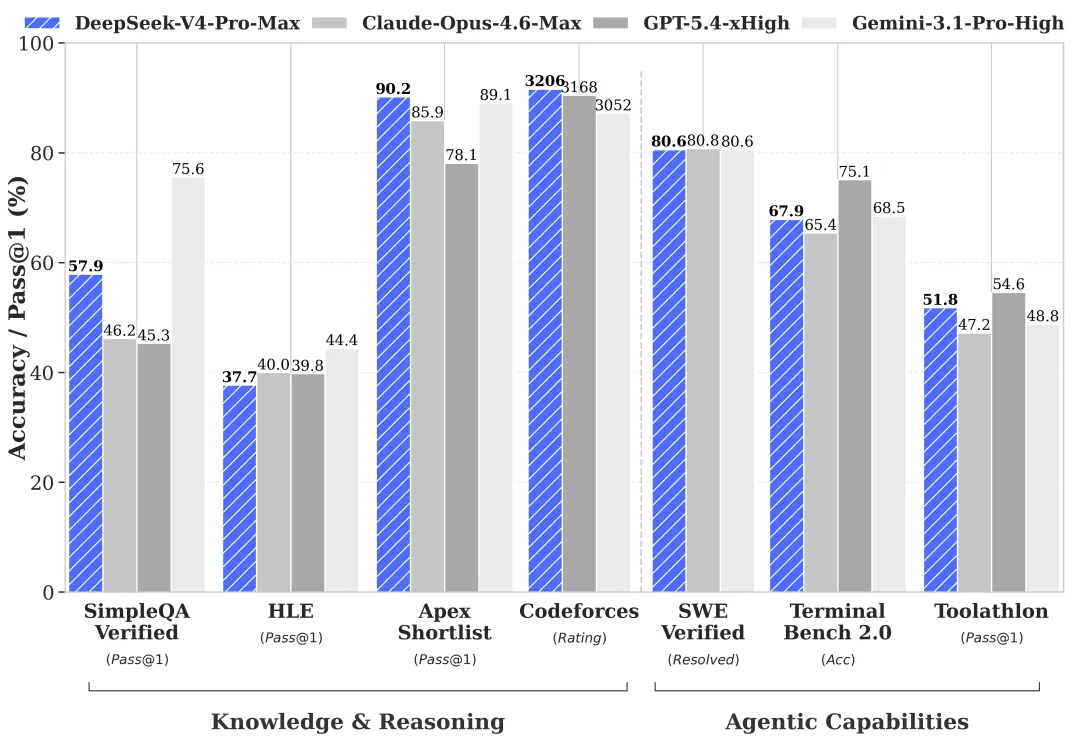

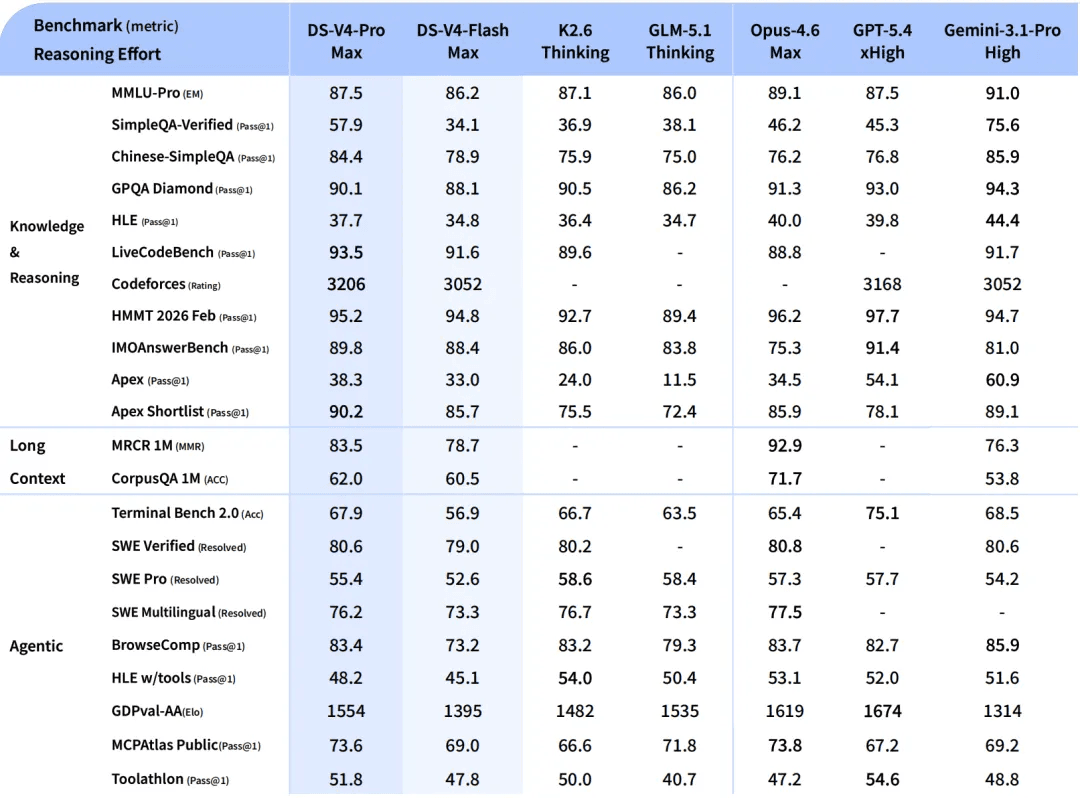

DeepSeek-V4-Pro: Rivaling Top Closed-Source Models

Significantly enhanced agentic capabilities. Compared to its predecessor, DeepSeek-V4-Pro shows a dramatic improvement on agent tasks. On Agentic Coding benchmarks, V4-Pro now leads all open-source models. DeepSeek has also deployed V4-Pro internally as the company's coding agent of choice. Employee feedback indicates the experience surpasses Claude Sonnet 4.5, with output quality approaching Claude Opus 4.6 in its non-thinking mode, though still trailing Opus 4.6 in thinking mode.

Rich world knowledge. DeepSeek-V4-Pro significantly outperforms other open-source models on world knowledge benchmarks, falling only slightly short of the top closed-source model, Gemini 3.1 Pro.

World-class reasoning. In math, STEM, and competitive programming evaluations, DeepSeek-V4-Pro surpasses every previously benchmarked open-source model and matches the performance of the world's leading closed-source models.

DeepSeek-V4-Flash: The Fast and Affordable Choice

Compared to V4-Pro, DeepSeek-V4-Flash falls slightly short on world knowledge, but delivers comparable reasoning performance. Thanks to its smaller parameter count and lower activation costs, V4-Flash provides faster response times and more economical API pricing.

On agent benchmarks, V4-Flash matches V4-Pro on simpler tasks, though a gap remains on more complex ones.
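
Taken together, these comparisons suggest a simple routing rule: send work to Pro only where Flash is reported to fall short. The sketch below encodes just that; the Task fields and pick_model function are hypothetical names for illustration, and a real router would weigh actual cost and latency measurements.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task descriptor, used only for this illustration."""
    needs_world_knowledge: bool = False  # e.g. open-domain factual questions
    complex_agentic: bool = False        # long, multi-step, tool-using workflows


def pick_model(task: Task) -> str:
    """Route to Pro where the announcement reports Flash falling short."""
    if task.needs_world_knowledge or task.complex_agentic:
        return "deepseek-v4-pro"
    # Reasoning and simpler agent tasks: Flash is described as comparable,
    # while being faster and cheaper to call.
    return "deepseek-v4-flash"


print(pick_model(Task()))                      # deepseek-v4-flash
print(pick_model(Task(complex_agentic=True)))  # deepseek-v4-pro
```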

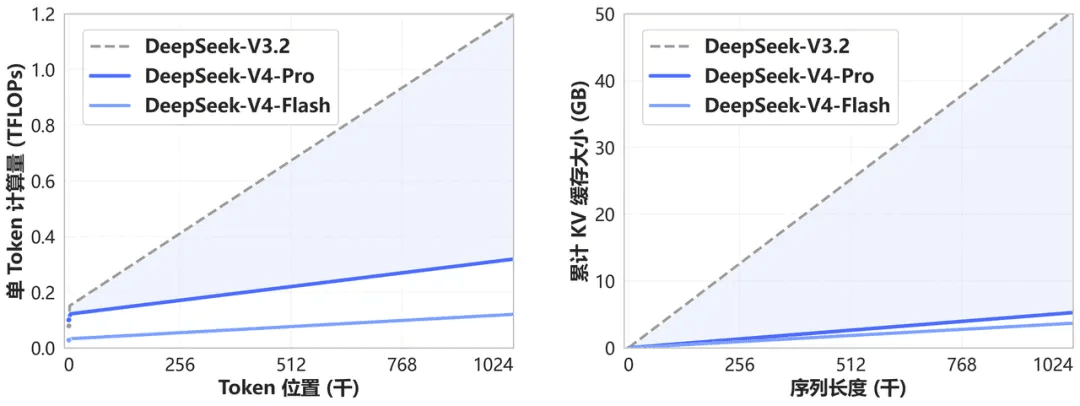

Architectural Innovation and Extreme Context Efficiency

DeepSeek-V4 introduces a novel attention mechanism that performs compression along the token dimension. Combined with DSA (DeepSeek Sparse Attention), this design achieves world-leading long-context performance while dramatically reducing computational and memory requirements compared to conventional approaches.
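
The announcement does not spell out how the token-dimension compression works. Purely as a generic illustration of the idea, the sketch below mean-pools blocks of consecutive key/value vectors, so a cache of n tokens shrinks to about n / block entries. The pooling choice and the compress_tokens name are assumptions, this is not DeepSeek-V4's mechanism, and sparse attention (DSA) is not modeled at all.

```python
from typing import List

Vector = List[float]


def compress_tokens(kv: List[Vector], block: int) -> List[Vector]:
    """Mean-pool every `block` consecutive token vectors into one vector.

    Generic stand-in for compression along the token axis, NOT the
    mechanism DeepSeek-V4 uses. A trailing partial block is averaged
    over however many tokens it actually contains.
    """
    if block < 1:
        raise ValueError("block must be >= 1")
    out: List[Vector] = []
    for start in range(0, len(kv), block):
        chunk = kv[start:start + block]
        dim = len(chunk[0])
        out.append([sum(v[d] for v in chunk) / len(chunk) for d in range(dim)])
    return out


keys = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
print(compress_tokens(keys, 2))  # [[2.0, 3.0], [6.0, 7.0], [9.0, 10.0]]
```

With block = 4, a 1M-token cache would hold roughly 250,000 entries, which is the kind of memory saving that makes very long contexts tractable; real designs trade this against the detail lost by pooling.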

Going forward, a 1M-token (one million) context window is the standard across all official DeepSeek services.

Specialized Optimization for Agentic Use Cases

DeepSeek-V4 has been fine-tuned and optimized for popular agent products including Claude Code, OpenClaw, OpenCode, and CodeBuddy. Performance improvements have been observed across code generation, document creation, and other agent-driven tasks.

This kind of framework-specific tuning matters more in practice than it might sound. A model that performs well in isolation but behaves inconsistently inside a structured agent loop is difficult to deploy reliably. The decision to treat major agent frameworks as first-class optimization targets reflects how production AI usage has evolved.

DeepSeek-V4 API Access

Both V4-Pro and V4-Flash are now available via the DeepSeek API, with support for both the OpenAI Chat Completions interface and the Anthropic interface, meaning existing integrations can be pointed at V4 models with minimal code changes. The base_url remains unchanged; simply update the model parameter to deepseek-v4-pro or deepseek-v4-flash.
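
Because only the model field changes, switching an OpenAI-style integration over is a one-line edit. The stdlib-only sketch below builds (but does not send) such a request; the https://api.deepseek.com base URL and the /chat/completions path are assumptions based on the existing OpenAI-compatible endpoint, and build_chat_request is a hypothetical helper name.

```python
import json
import urllib.request

# Assumption: the existing OpenAI-compatible DeepSeek endpoint, which the
# announcement says stays the same for V4.
BASE_URL = "https://api.deepseek.com"
V4_MODELS = {"deepseek-v4-pro", "deepseek-v4-flash"}


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style Chat Completions request."""
    if model not in V4_MODELS:
        raise ValueError(f"unknown V4 model: {model}")
    body = {
        "model": model,  # the only field that changes when moving to V4
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request("YOUR_API_KEY", "deepseek-v4-flash", "Hello")
# Sending it would be: urllib.request.urlopen(req)
```

Teams already using the official OpenAI SDK would make the same change by passing the new model name to their existing client call.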

Both models support a maximum context length of 1M tokens and offer both non-thinking and thinking modes. In thinking mode, the reasoning_effort parameter can be set to high or max; for complex agentic workflows, thinking mode at max effort is recommended. Thinking-mode API documentation (Chinese): https://api-docs.deepseek.com/zh-cn/guides/thinking_mode
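
Only two effort values are documented here, so it is worth validating them before a request goes out. The helper below is a hypothetical sketch: it attaches reasoning_effort only in thinking mode and defaults to max for agentic work, as recommended above. Falling back to high otherwise is this sketch's own choice, and the field that switches thinking mode on is deliberately left to the linked guide.

```python
from typing import Any, Dict, Optional

ALLOWED_EFFORT = {"high", "max"}  # the two values named in the announcement


def thinking_options(thinking: bool, effort: Optional[str] = None,
                     agentic: bool = False) -> Dict[str, Any]:
    """Return extra request fields related to thinking-mode effort.

    Assumption: only `reasoning_effort` comes from the announcement; how
    thinking mode itself is enabled is not shown here.
    """
    if not thinking:
        if effort is not None:
            raise ValueError("reasoning_effort only applies in thinking mode")
        return {}
    if effort is None:
        # "max" for complex agentic work follows the announcement's advice;
        # "high" as the other default is this sketch's own choice.
        effort = "max" if agentic else "high"
    if effort not in ALLOWED_EFFORT:
        raise ValueError(f"reasoning_effort must be one of {sorted(ALLOWED_EFFORT)}")
    return {"reasoning_effort": effort}


print(thinking_options(True, agentic=True))  # {'reasoning_effort': 'max'}
```

The returned dict is meant to be merged into a request body alongside model and messages.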

⚠️ Deprecation notice: The legacy model names deepseek-chat and deepseek-reasoner will be retired in three months (July 24, 2026). During the transition period, they map to the non-thinking and thinking modes of deepseek-v4-flash, respectively. If you're using either name in production, plan your migration now.
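
A small lookup table makes the transition mapping explicit and can help audit configs for the legacy names. The mapping and date below come from the notice; migrate_model_name and days_until_retirement are hypothetical helper names.

```python
from datetime import date
from typing import Optional, Tuple

RETIREMENT_DATE = date(2026, 7, 24)  # from the deprecation notice

# Transition-period mapping from the notice: (V4 model, thinking mode enabled?)
LEGACY_TO_V4 = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_model_name(name: str) -> Optional[Tuple[str, bool]]:
    """Return (replacement model, thinking enabled) for a legacy name, else None."""
    return LEGACY_TO_V4.get(name)


def days_until_retirement(today: date) -> int:
    """Days left before the legacy names stop working."""
    return (RETIREMENT_DATE - today).days


print(migrate_model_name("deepseek-reasoner"))     # ('deepseek-v4-flash', True)
print(days_until_retirement(date(2026, 4, 24)))    # 91
```

Pinning the explicit V4 name now, rather than relying on the aliases, avoids a silent break when the mapping ends.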

Open-Source Weights & Local Deployment

- Model weights: Hugging Face | ModelScope

- Technical report: DeepSeek-V4 PDF

For teams considering local or on-premise deployment, it's worth noting that models at this parameter scale, particularly V4-Pro at 1.6T total parameters, place substantial demands on hardware. The open-source availability is a meaningful advantage for enterprise compliance and customization use cases, but most teams will find cloud API access the more practical starting point.
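
To put "substantial demands" in numbers, the weights alone need roughly total parameters times bytes per parameter. The precisions below are common options, not the format of the released checkpoints, which this article does not state; KV cache, activations, and framework overhead come on top.

```python
# Common storage precisions (bytes per parameter); which one the released
# checkpoints use is an assumption left to the reader to verify.
PRECISIONS = {"BF16": 2.0, "FP8": 1.0, "4-bit": 0.5}
SIZES_B = {"V4-Pro": 1600, "V4-Flash": 284}  # total parameters, billions


def weight_memory_gb(total_params_b: float, bytes_per_param: float) -> float:
    """Decimal GB for the weights only: billions of params x bytes = GB."""
    return total_params_b * bytes_per_param


for model, size in SIZES_B.items():
    row = ", ".join(f"{p}: {weight_memory_gb(size, b):,.0f} GB"
                    for p, b in PRECISIONS.items())
    print(f"{model} -> {row}")
```

Even at one byte per parameter, V4-Pro's weights come to about 1.6 TB, far beyond a single 80 GB accelerator, so serving it means sharding across many devices.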

What This DeepSeek-V4 Launch Actually Means

Three things stand out about this release taken together.

First, the 1M context commitment is more meaningful than it might appear. DeepSeek isn't offering it as a premium tier; it's the floor for all official services. That's a signal about where the open-source frontier is heading, and it puts quiet pressure on every other provider to follow.

Second, the agent-first optimization work, specifically adapting V4 for Claude Code, OpenCode, and others, reflects a maturity in how DeepSeek thinks about deployment. Benchmark performance is table stakes; what matters in production is behavior inside the tools developers actually use.

Third, the honest competitive positioning relative to Claude Opus 4.6 is notable. Rather than claiming across-the-board superiority, DeepSeek gives a layered assessment: better than Sonnet 4.5, approaching Opus 4.6 non-thinking, behind Opus 4.6 thinking mode. That specificity makes the claims more credible, not less.

For developers evaluating models for agentic workflows, long-document processing, or complex reasoning tasks, DeepSeek-V4-Pro is now a serious open-source contender. For cost-optimized or latency-sensitive pipelines, V4-Flash offers a credible lighter-weight alternative.

Try DeepSeek-V4 on Atlas Cloud

Atlas Cloud is a production-grade AI platform designed for developers and teams who want reliable, cost-efficient access to the world's leading AI models without managing infrastructure. With a unified API, transparent pricing, and enterprise-level compliance (SOC 2-aligned, HIPAA-ready), Atlas Cloud lets you focus on building, not on ops.

DeepSeek on Atlas Cloud. We already support the DeepSeek model family, including DeepSeek V3.2, V3.2 Fast, V3.2 Speciale, and V3.2 Exp, all available today via a single API endpoint at competitive pricing. DeepSeek models on Atlas Cloud are optimized for long-context workloads and agent pipelines, with full context window support and no quantization loss. Beyond DeepSeek, Atlas Cloud gives you access to 300+ models across the LLM landscape.

DeepSeek-V4 is coming to Atlas Cloud. We're actively working on integrating DeepSeek-V4-Pro and V4-Flash. Stay tuned for the launch announcement, and in the meantime, explore everything already available on the platform.