Kimi K2.6 vs GLM 5.1 vs Qwen 3.6 Plus vs MiniMax M2.7: Which Is the Best Open-Source Model for Coding in 2026?

Summary

If you are building autonomous coding agents that run for hours without intervention, choose Kimi K2.6. It scores 66.7% on Terminal-Bench 2.0 and sustained more than 4,000 tool calls over 13 hours without interruption, a level of stability that no other open model in this comparison reaches.

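If you need the best agentic front-end developer, GLM 5.1 is the right fit. Its independently verified Code Arena Elo of 1,530 (third worldwide for agentic web development) indicates that real developers prefer its output, not just that it passes automated test suites.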

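If cost per token is your top priority, MiniMax M2.7 is the answer. At USD 0.30 per million input tokens on Atlas Cloud, it scores 56.22% on SWE-Bench Pro with only 10B active parameters. That is about 96% of GLM-5.1's SWE-Bench Pro score (58.4%) at roughly one fifth of the input price.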

If your codebase exceeds a 262K context window, choose Qwen 3.6 Plus. It is the only model in this comparison with a 1M-token context window, and its 61.6% on Terminal-Bench 2.0 is second in the group, behind only Kimi K2.6 (66.7%).

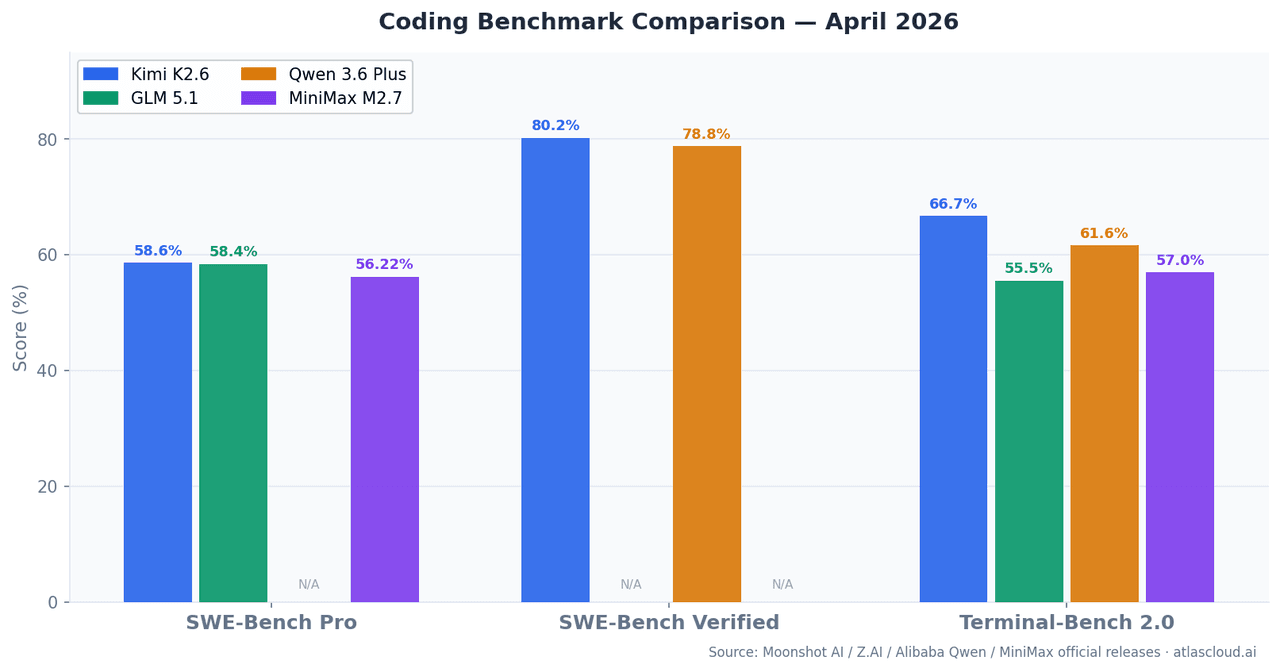

Key benchmarks at a glance

| Model | SWE-Bench Pro | SWE-Bench Verified | Terminal-Bench 2.0 | Context window | Parameters |
|---|---|---|---|---|---|
| Kimi K2.6 | 58.6% | 80.2% | 66.7% | 262K | n/a |
| GLM 5.1 | 58.4% | n/a | 55%+ | 262K | 754B total (MoE) |
| Qwen 3.6 Plus | n/a | 78.8% | 61.6% | 1M | Hybrid MoE |
| MiniMax M2.7 | 56.22% | n/a | 57.0% | 196K | 10B active |

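SWE-Bench Pro measures how well a model resolves real GitHub issues created after its training cutoff, which reduces the risk of data contamination. Terminal-Bench 2.0 tests multi-step CLI and shell tasks in a real terminal environment, which is the closest match to what production agents actually do.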

Kimi K2.6: built for long-running agents

Released by Moonshot AI in April 2026, Kimi K2.6 is an upgrade to K2.5 with markedly better agent stability, especially in long-running sessions. It scores 80.2% on SWE-Bench Verified, close to Claude Opus 4.6 (80.8%), and leads this group on SWE-Bench Pro with 58.6%.

The headline number is **66.7% on Terminal-Bench 2.0**. This benchmark tests reading output, handling errors, and iterating on fixes in a real terminal environment. The run of more than 4,000 stable tool calls over 13 hours is reported in Moonshot's official release.

Another strength is **cross-language generalization**. Kimi K2.6 performs consistently across Rust, Go, and Python, as well as front-end and DevOps work. If your production stack is polyglot, that is a real asset.

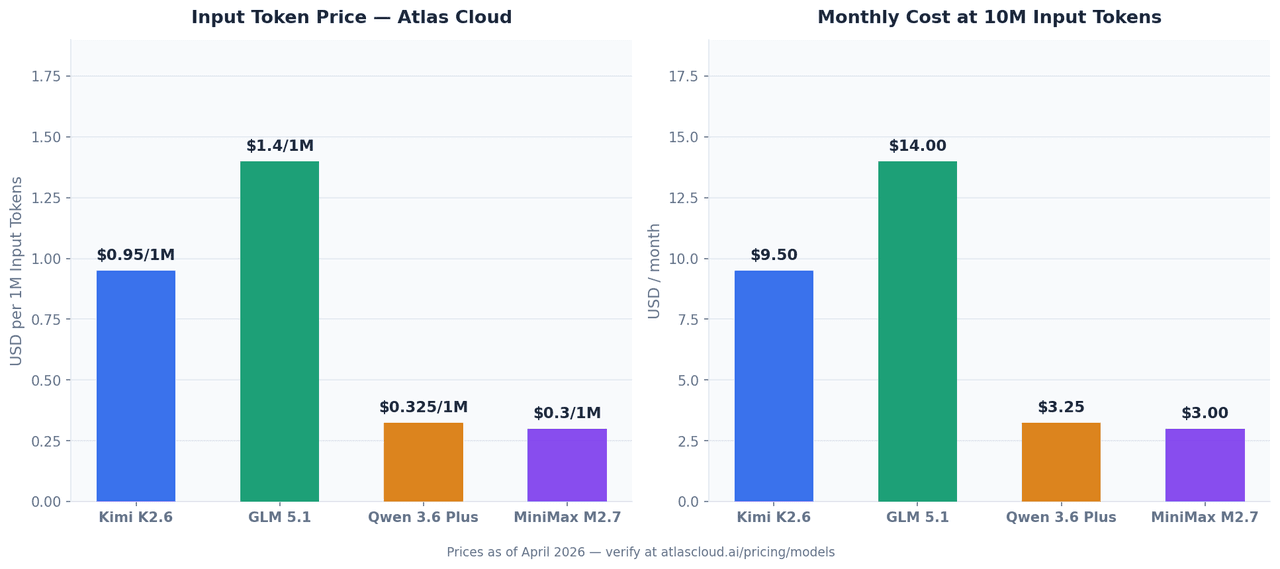

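Downside: at USD 0.95 per million input tokens on Atlas Cloud, it has the second-highest input price in the group, after GLM 5.1 (from USD 1.40). For ordinary batch jobs that do not need 12+ hours of stability, that cost can be hard to justify.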

GLM 5.1: the agentic front-end powerhouse

Released by Z.AI on April 7, 2026, GLM-5.1 has 754B parameters with MoE routing, the largest parameter count listed in this comparison. It scores 58.4% on SWE-Bench Pro, on par with Kimi K2.6.

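The differentiator is its Code Arena Elo of 1,530, which ranked third worldwide for agentic web development on Arena.ai as of April 10, 2026. The ranking is based on real developers rating outputs directly, and GLM 5.1 is especially strong at front-end UI generation, full-stack scaffolding, React/Vue component generation, and NL2Repo (generating a repository structure from natural language).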

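Note: on algorithmic benchmarks such as HumanEval and MBPP, there is no meaningful difference from Kimi K2.6. Outside UI and web work, general performance is nearly the same, so be clear about your goal when choosing a model.

Atlas Cloud pricing: starts at USD 1.40 per million tokens, the most expensive in the group. It is worth the price when front-end quality is your product's competitive edge.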

Qwen 3.6 Plus: when you need to break the context limit

Released by Alibaba in late March 2026, Qwen 3.6 Plus beats Claude Opus 4.6 on Terminal-Bench 2.0 (61.6% vs. 59.3%) and scores 78.8% on SWE-Bench Verified.

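The key feature is the 1M-token context window. For typical tasks under 100K tokens it makes little difference, but for analyzing monorepos with hundreds of files, refactoring large legacy codebases, or extracting code from long documents, it is the only option in this group.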
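A rough token estimate is usually enough to tell whether a repository needs the 1M window at all. The sketch below is an illustration only: the 4-characters-per-token ratio, the 20% headroom reserved for instructions and output, the file suffixes, and the helper names are all assumptions, not measured values or part of any vendor API. The context sizes are the ones quoted in this article, reading K and M as thousands and millions.

```python
from pathlib import Path

# Context windows as quoted in this article (K = 1,000 tokens, M = 1,000,000).
CONTEXT_WINDOWS = {
    "minimaxai/minimax-m2.7": 196_000,
    "moonshotai/kimi-k2.6": 262_000,
    "zai-org/glm-5.1": 262_000,
    "qwen/qwen3.6-plus": 1_000_000,
}

CHARS_PER_TOKEN = 4  # assumption: rough average for source code, not a real tokenizer
HEADROOM = 0.8       # assumption: keep 20% of the window for instructions and output


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN + 1


def estimate_repo_tokens(root: str, suffixes=(".py", ".ts", ".go", ".rs")) -> int:
    """Sum the rough token estimates of all matching source files under root."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(errors="ignore"))
    return total


def models_that_fit(token_count: int) -> list[str]:
    """Return model IDs whose usable window holds token_count, smallest window first."""
    fitting = [
        (window, model)
        for model, window in CONTEXT_WINDOWS.items()
        if token_count <= window * HEADROOM
    ]
    return [model for _, model in sorted(fitting)]
```

For example, `models_that_fit(estimate_repo_tokens("."))` lists the candidates for the current directory. With these assumptions, a 300,000-token estimate leaves only `qwen/qwen3.6-plus`. For a real decision, count tokens with the provider's own tokenizer.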

Its hybrid architecture (linear attention plus sparse MoE routing) keeps latency low even when processing very large contexts.

Atlas Cloud pricing: starts at USD 0.325 per million tokens. It offers the best cost efficiency for large-context work.

MiniMax M2.7: the efficiency upset

Released in March 2026, MiniMax M2.7 scores 56.22% on SWE-Bench Pro with just 10B active parameters. That is about 96% of GLM-5.1's score (58.4%) at roughly one fifth of the input price.
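The comparison with GLM-5.1 can be checked directly from the figures quoted in this article. This is a small arithmetic sketch, not a full cost model: it compares only SWE-Bench Pro scores and Atlas Cloud input prices, and GLM-5.1's price is a "starting from" figure, so the price ratio is approximate.

```python
# Figures quoted in this article:
# SWE-Bench Pro score (%) and Atlas Cloud input price (USD per 1M tokens).
glm_score, glm_input_price = 58.4, 1.40
m27_score, m27_input_price = 56.22, 0.30

score_ratio = m27_score / glm_score
price_ratio = m27_input_price / glm_input_price

print(f"Relative SWE-Bench Pro score: {score_ratio:.1%}")  # 96.3%
print(f"Relative input price: {price_ratio:.1%}")          # 21.4%
```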

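At inference time it does not run the full set of weights. MoE routing activates only a subset of expert sub-networks, which cuts latency and cost substantially. It is particularly strong at machine learning engineering tasks, scoring 66.6% on MLE-Bench Lite, a frontier-class result.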

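Caveat: the 196K context window is the smallest in this group. Deep cross-file analysis of large repositories may hit that limit.

Atlas Cloud pricing: USD 0.30 per million input tokens and USD 1.20 per million output tokens, the most economical choice for high-throughput work.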

Real-world coding test cases

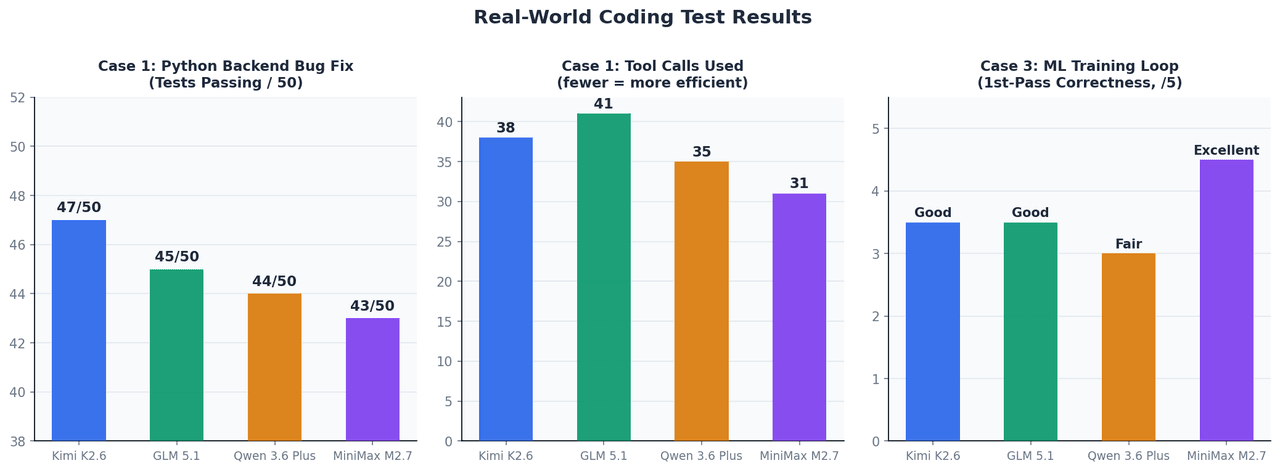

Case 1: autonomous bug fixing in a Python backend

Fixing a FastAPI app with 12 files, a 50-test suite, and about 45K tokens of context.

| Model | Tests passing after fix | Tool calls used | Time to complete |
|---|---|---|---|
| Kimi K2.6 | 47 / 50 | 38 | ~4 min |
| GLM 5.1 | 45 / 50 | 41 | ~5 min |
| Qwen 3.6 Plus | 44 / 50 | 35 | ~4 min |
| MiniMax M2.7 | 43 / 50 | 31 | ~3.5 min |

Kimi K2.6 was the strongest at resolving complex async context manager lifecycle issues and TypeVar binding problems.

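Case 2: generating a React dashboard from a spec

A front-end task with four chart types, dark mode, and TypeScript types.

GLM-5.1 produced TypeScript components and Tailwind classes that worked out of the box. Its code architecture and use of composition patterns were clearly ahead of the other models.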

Case 3: implementing an ML training loop

Implementing a PyTorch training loop with gradient accumulation, AMP mixed precision, and early stopping.

MiniMax M2.7 produced the most accurate implementation on the first attempt. The gradient accumulation logic and `scaler` ...

Atlas Cloud pricing comparison (April 2026)

All four models are available through Atlas Cloud's single API.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Atlas Cloud model ID |
|---|---|---|---|
| Kimi K2.6 | USD 0.95 | USD 4.00 | moonshotai/kimi-k2.6 |
| GLM 5.1 | from USD 1.40 | n/a | zai-org/glm-5.1 |
| Qwen 3.6 Plus | from USD 0.325 | n/a | qwen/qwen3.6-plus |
| MiniMax M2.7 | USD 0.30 | USD 1.20 | minimaxai/minimax-m2.7 |
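To turn per-token prices into a budget, multiply them by the expected token volume. The sketch below covers only the two models for which this article lists both input and output prices. The `Price` class, the `job_cost` helper, and the example workload (10,000 reviews at 6,000 input and 800 output tokens each) are hypothetical and used purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


# Prices from the table above; GLM 5.1 and Qwen 3.6 Plus are omitted
# because no output price is listed for them.
PRICES = {
    "moonshotai/kimi-k2.6": Price(0.95, 4.00),
    "minimaxai/minimax-m2.7": Price(0.30, 1.20),
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for the given token volumes."""
    price = PRICES[model]
    return (
        input_tokens * price.input_per_m + output_tokens * price.output_per_m
    ) / 1_000_000


# Hypothetical batch job: 10,000 code reviews.
reviews = 10_000
total_in, total_out = reviews * 6_000, reviews * 800

for model_id in PRICES:
    print(f"{model_id}: USD {job_cost(model_id, total_in, total_out):.2f}")
```

For this hypothetical workload the estimate comes to about USD 89.00 on Kimi K2.6 and USD 27.60 on MiniMax M2.7. Output tokens weigh heavily in both totals, so long generations shift the comparison more than long prompts do.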

Using all four models with a single API key

Atlas Cloud provides an OpenAI-compatible endpoint, so existing code works as-is.

```python
import os
from openai import OpenAI

client = OpenAI(
    api_key=os.environ["ATLASCLOUD_API_KEY"],
    base_url="https://api.atlascloud.ai/v1"
)

# To switch models, change only this one line
MODEL = "moonshotai/kimi-k2.6"
# MODEL = "zai-org/glm-5.1"
# MODEL = "qwen/qwen3.6-plus"
# MODEL = "minimaxai/minimax-m2.7"

response = client.chat.completions.create(
    model=MODEL,
    messages=[
        {
            "role": "system",
            "content": "You are a senior software engineer. Analyze the code carefully."
        },
        {
            "role": "user",
            "content": "Review this function and find all bugs:\n\n[paste code here]"
        }
    ],
    max_tokens=4096,
    temperature=0.2
)

print(response.choices[0].message.content)
```
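Because only the model ID changes between calls, the same prompt can be sent to all four models for a side-by-side comparison. The helper below is a sketch under that assumption. `ask_all` is a hypothetical name, and it expects a client object shaped like the OpenAI-compatible one created above (anything exposing `chat.completions.create`). A failure on one model is recorded as text rather than raised, so a single failing model does not abort the comparison.

```python
MODEL_IDS = [
    "moonshotai/kimi-k2.6",
    "zai-org/glm-5.1",
    "qwen/qwen3.6-plus",
    "minimaxai/minimax-m2.7",
]


def ask_all(client, prompt: str, models=MODEL_IDS, max_tokens: int = 1024) -> dict:
    """Send the same prompt to each model and collect the replies by model ID."""
    results = {}
    for model in models:
        try:
            response = client.chat.completions.create(
                model=model,
                messages=[{"role": "user", "content": prompt}],
                max_tokens=max_tokens,
                temperature=0.2,
            )
            results[model] = response.choices[0].message.content
        except Exception as exc:  # keep going if one model fails
            results[model] = f"ERROR: {exc}"
    return results
```

Calling `ask_all(client, "Review this function and find all bugs: ...")` returns a dictionary keyed by model ID, which is convenient for diffing the answers or logging them next to token usage.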

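Why Atlas Cloud?

- Unified management: one API key and one invoice cover every model.

- No RPM limits: multi-agent pipelines can make high volumes of calls without hitting rate limits.

- Security and compliance: SOC I & II certification and HIPAA compliance, so sensitive source code is handled safely.

- Future-proof: more than 300 models are supported, and you can switch to a newly released model right away without rewriting your code.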

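Model recommendations by task

| Use case | Recommended model | Why |
|---|---|---|
| Autonomous coding agents (long sessions) | Kimi K2.6 | High stability and tool-calling ability |
| React/Vue front-end generation | GLM 5.1 | Top tier in agentic web development |
| Large monorepo/codebase analysis | Qwen 3.6 Plus | Only model with 1M context |
| High-volume batch code review | MiniMax M2.7 | Best value for money |
| ML training loops and research code | MiniMax M2.7 | Demonstrated on MLE-Bench Lite |
| Polyglot projects | Kimi K2.6 | Proven cross-language generalization |
| General value-focused teams | Qwen 3.6 Plus | Balanced price and performance |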
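In a pipeline that handles mixed workloads, the table above can be encoded as a small lookup so that each job is routed to the recommended model. This is a minimal sketch: the task keys and the `pick_model` function are hypothetical names, and the fallback follows the "general value-focused teams" row.

```python
# Task keys are illustrative labels; model IDs come from the Atlas Cloud pricing table.
RECOMMENDED = {
    "long_running_agent": "moonshotai/kimi-k2.6",
    "frontend_generation": "zai-org/glm-5.1",
    "monorepo_analysis": "qwen/qwen3.6-plus",
    "batch_code_review": "minimaxai/minimax-m2.7",
    "ml_training_code": "minimaxai/minimax-m2.7",
    "polyglot_project": "moonshotai/kimi-k2.6",
}

# Fallback taken from the "general value-focused teams" row.
DEFAULT_MODEL = "qwen/qwen3.6-plus"


def pick_model(task: str) -> str:
    """Return the recommended model ID for a task, or the default if unknown."""
    return RECOMMENDED.get(task, DEFAULT_MODEL)


print(pick_model("frontend_generation"))  # zai-org/glm-5.1
print(pick_model("something_else"))       # qwen/qwen3.6-plus
```

Keeping the mapping in data rather than in branching code means that revising a recommendation is a one-line change.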

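Conclusion

These four models are comparable in general performance, but each has a clear strength in specific workloads. Choose Kimi K2.6 for long-running agents, GLM 5.1 for front-end work, Qwen 3.6 Plus for large contexts, and MiniMax M2.7 for cost-efficient high-volume processing.

All four models are available now at atlascloud.ai.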