Many image generators produce pictures that look convincing until you take a closer look. Zoom in, and skin often looks like plastic or smooth wax, foliage turns to blur, and fabric patterns look messy or smudged. The usual cause is that many models generate at a base resolution of 1024 × 1024 and rely on upscaling to reach larger sizes. Upscaling has to guess at the missing detail, and those guesses erase the finest textures.

The Reveal: Native 2K Pipeline

Qwen Image 2.0 drops the usual upscaling step. It generates natively at 2K (2048 × 2048), so fine detail is created at full resolution from the first step. Where a standard upscaler would guess at or blur those small elements, this model renders them sharp and realistic.

The Value of Microscopic Detail

The move to native 2K generation isn't just about larger file sizes; it is about how much texture information the model can represent. A 2048 × 2048 image holds four times as many pixels as a 1024 × 1024 image, and by raising the resolution of its latent space accordingly, Qwen Image 2.0 models that fine texture directly instead of leaving it for an upscaler to guess.
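To put the resolution jump in numbers, here is a small back-of-the-envelope sketch. It assumes a latent diffusion setup with an 8× spatially downsampling VAE and 2 × 2 latent patches per token; both figures are common in current diffusion transformers but are assumptions here, not published Qwen Image 2.0 specifications.

```python
# Back-of-the-envelope sizes for square images in a latent diffusion model.
# ASSUMPTIONS (not confirmed Qwen Image 2.0 specs): 8x VAE downsampling, 2x2 patches.
VAE_DOWNSAMPLE = 8
PATCH_SIZE = 2


def latent_stats(side_px: int) -> dict:
    """Return pixel count, latent grid side, and token count for a square image."""
    latent_side = side_px // VAE_DOWNSAMPLE
    tokens = (latent_side // PATCH_SIZE) ** 2
    return {"pixels": side_px * side_px, "latent_side": latent_side, "tokens": tokens}


one_k = latent_stats(1024)
two_k = latent_stats(2048)

for name, stats in (("1K", one_k), ("2K", two_k)):
    print(name, stats)

# Doubling the side length quadruples both the pixels and the latent tokens.
print("pixel ratio:", two_k["pixels"] // one_k["pixels"])
print("token ratio:", two_k["tokens"] // one_k["tokens"])
```

Under these assumptions, a decoder using full self-attention over those tokens would also compute roughly 16× as many pairwise attention scores at 2K (4× the tokens, squared), which is one reason native high-resolution generation is costly and why upscaling is the cheaper shortcut.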

| Feature | Standard AI Models | Qwen Image 2.0 |
| --- | --- | --- |
| Native Resolution | 1024 × 1024 | 2048 × 2048 |
| Texture Integrity | Smoothed, waxy | High-fidelity |
| Human Skin | Plastic-like | Photorealistic pores and fine hair |
| Fabric Detail | Blurred weaves | Distinct thread weaves |
| Architecture | Soft edges | Crisp, high-detail edges |

Because every pixel is generated from the original prompt rather than interpolated afterward, this native approach suits professional workflows where clarity is non-negotiable.

Native 2K vs. Post-Processing Upscaling: The "Secret Sauce"

In high-quality AI art, there is a big difference between generating new pixels and stretching existing ones. That difference is the core reason Qwen Image 2.0 stands out for sharp output in 2026, and it shows directly in the final image.

The Technical Edge: Native vs. Hallucinated Pixels

Traditional models typically generate at a base resolution of 1024 px and rely on post-processing upscalers for larger outputs. An upscaler estimates the missing pixels, either by interpolating between existing ones or, in AI upscalers, by predicting plausible detail. This is fast, but it often produces fake textures: fine details the upscaler cannot resolve get smeared or replaced with invented structure, and the result looks messy and less realistic.
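A toy example makes the information-loss argument concrete. The sketch below, in plain Python so it runs without image libraries, builds a one-pixel checkerboard (the finest texture a pixel grid can hold), shrinks it 2× by averaging each 2 × 2 block, then enlarges it back with nearest-neighbor interpolation. It is a simplified stand-in: real upscalers use bicubic filters or learned models, but none of them can recover a pattern from data that no longer contains it; a learned upscaler can only invent something plausible in its place.

```python
def checkerboard(size):
    """One-pixel black/white checkerboard: the highest-frequency texture possible."""
    return [[255 if (x + y) % 2 else 0 for x in range(size)] for y in range(size)]


def downsample_2x(img):
    """Halve each dimension by averaging every 2x2 block of pixels."""
    h, w = len(img), len(img[0])
    return [
        [(img[y][x] + img[y][x + 1] + img[y + 1][x] + img[y + 1][x + 1]) / 4
         for x in range(0, w, 2)]
        for y in range(0, h, 2)
    ]


def upscale_2x_nearest(img):
    """Double each dimension by repeating every pixel into a 2x2 block."""
    out = []
    for row in img:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


original = checkerboard(8)
round_trip = upscale_2x_nearest(downsample_2x(original))

# The original holds only pure black and white; after the round trip every
# pixel is the same mid-gray, so the texture is gone, not merely softened.
print(sorted({v for row in original for v in row}))
print(sorted({v for row in round_trip for v in row}))
```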

Qwen Image 2.0, by contrast, uses a native 2K generation pipeline built on a two-part design:

- 8B Qwen3-VL Encoder: A vision-language model that reads long prompts (up to 1,000 tokens), so detailed instructions are not cut off.

- 7B Diffusion Decoder: An image-specific decoder that produces a 2048 × 2048 image directly, with no separate upscaling stage.
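The difference between the two pipelines comes down to where the final resolution is decided. The sketch below is purely structural: `encode`, `decode`, `upscale`, and `Result` are hypothetical stand-ins (not Qwen Image 2.0's actual API) that generate no pixels and only record which stages ran and at what size. The 1,000-token limit is the figure quoted above.

```python
from dataclasses import dataclass

# Hypothetical stand-ins for illustration only; they generate no pixels.
MAX_PROMPT_TOKENS = 1000  # prompt budget quoted for the encoder


@dataclass(frozen=True)
class Result:
    width: int
    height: int
    stages: tuple


def encode(prompt_tokens: int) -> tuple:
    """Stand-in for the vision-language encoder: validate length, start the stage log."""
    if prompt_tokens > MAX_PROMPT_TOKENS:
        raise ValueError(f"prompt has {prompt_tokens} tokens; limit is {MAX_PROMPT_TOKENS}")
    return ("encode",)


def decode(stages: tuple, width: int, height: int) -> Result:
    """Stand-in for the diffusion decoder: output is produced at the requested size."""
    return Result(width, height, stages + ("decode",))


def upscale(img: Result, factor: int) -> Result:
    """Stand-in for a post-processing upscaler: size grows, new detail is estimated."""
    return Result(img.width * factor, img.height * factor, img.stages + ("upscale",))


def native_2k(prompt_tokens: int) -> Result:
    # One pass: the decoder works at the final resolution.
    return decode(encode(prompt_tokens), 2048, 2048)


def generate_then_upscale(prompt_tokens: int) -> Result:
    # Two stages: generate at 1K, then stretch to 2K afterward.
    return upscale(decode(encode(prompt_tokens), 1024, 1024), 2)


print(native_2k(600))              # same final size...
print(generate_then_upscale(600))  # ...but with an extra, detail-guessing stage
```

Both paths end at 2048 × 2048; only the second adds a stage whose job is to estimate pixels that were never generated.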

Generating in a single pass avoids the inconsistencies that a separate upscaling stage can introduce, so the image's composition and structure stay coherent from start to finish.

Texture Fidelity and Micro-Geometry

By generating natively at 2K, Qwen Image 2.0 preserves what is often called "micro-geometry": the tiny surface details that make an image read as real.

| Texture Category | Result in Qwen Image 2.0 | Competitive Advantage |
| --- | --- | --- |
| Human Skin | Photorealistic skin texture | Visible pores, fine hairs, and subsurface scattering without a "plastic" look. |
| Architecture | High-detail architectural rendering | Sharp edges on brushed steel and concrete grain without "shimmering" artifacts. |
| Fabrics | Realistic fabric texture | Individual thread weaves in linen or silk visible at high zoom. |
| Nature | Organic detail | Crisp dew drops on grass and translucent veins in leaves. |

This combination keeps your work sharp. Whether you need a professional ad or a detailed architectural visualization, textures look real, and because the system is not guessing like a basic upscaler, detail stays crisp instead of blurring.

Next, let's do a test together:

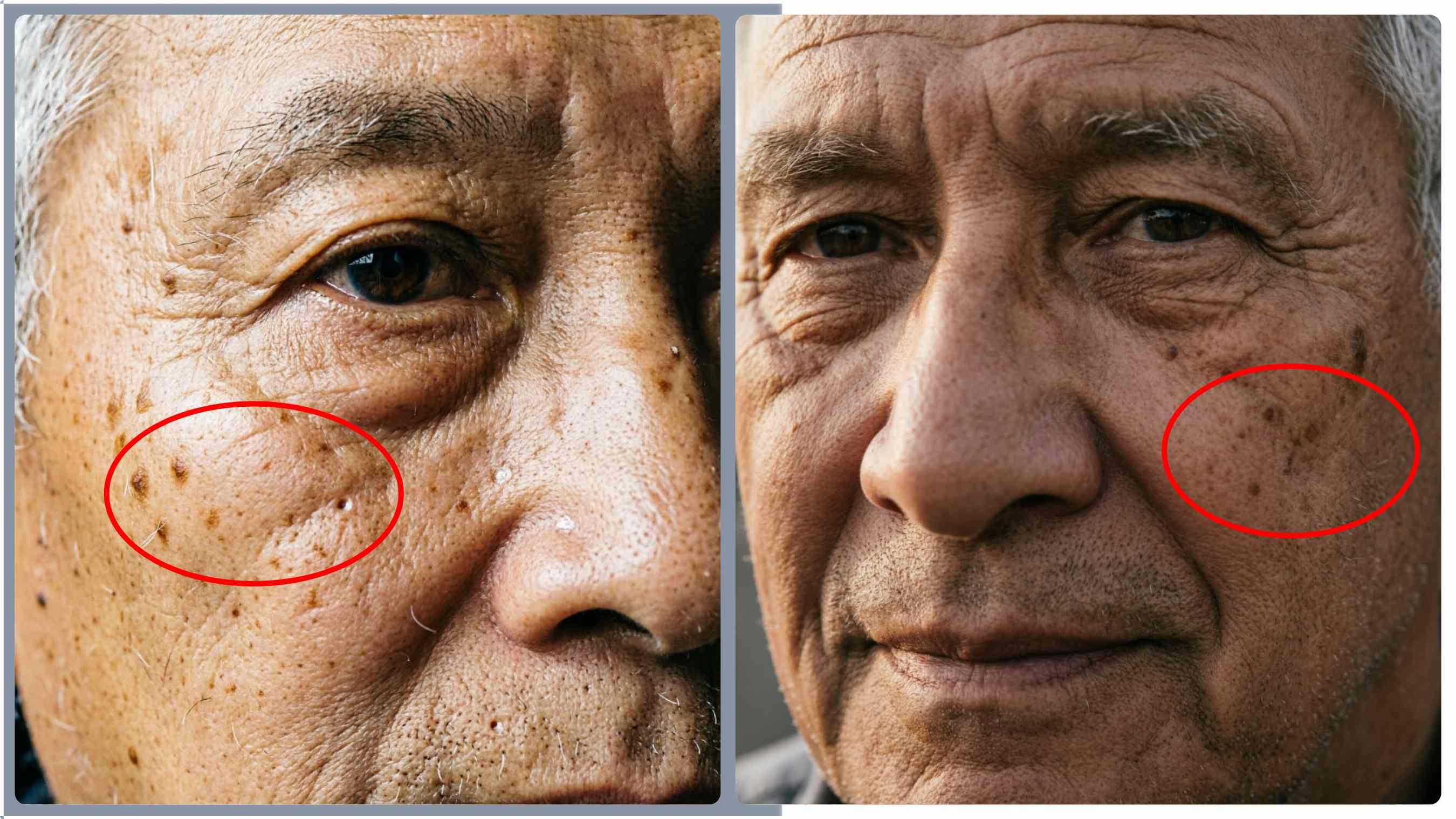

To keep the test fair, I used the same prompt for both versions. I set one to 1024 × 1024 to mimic a standard model, then compared it with the native 2K image (2048 × 2048) produced by Qwen Image 2.0.

My prompt:

An ultra-photorealistic extreme close-up portrait of an elderly man with textured skin. Focus solely on his eye, cheek, and nose bridge. The skin must feature sharp, visible pores, fine vellus hair, sun spots, and shallow wrinkles. The texture should look raw and unfiltered, capturing the 'subsurface scattering' effect of natural light. Side lighting to emphasize topography. Hyper-detailed, National Geographic style micro-photography.

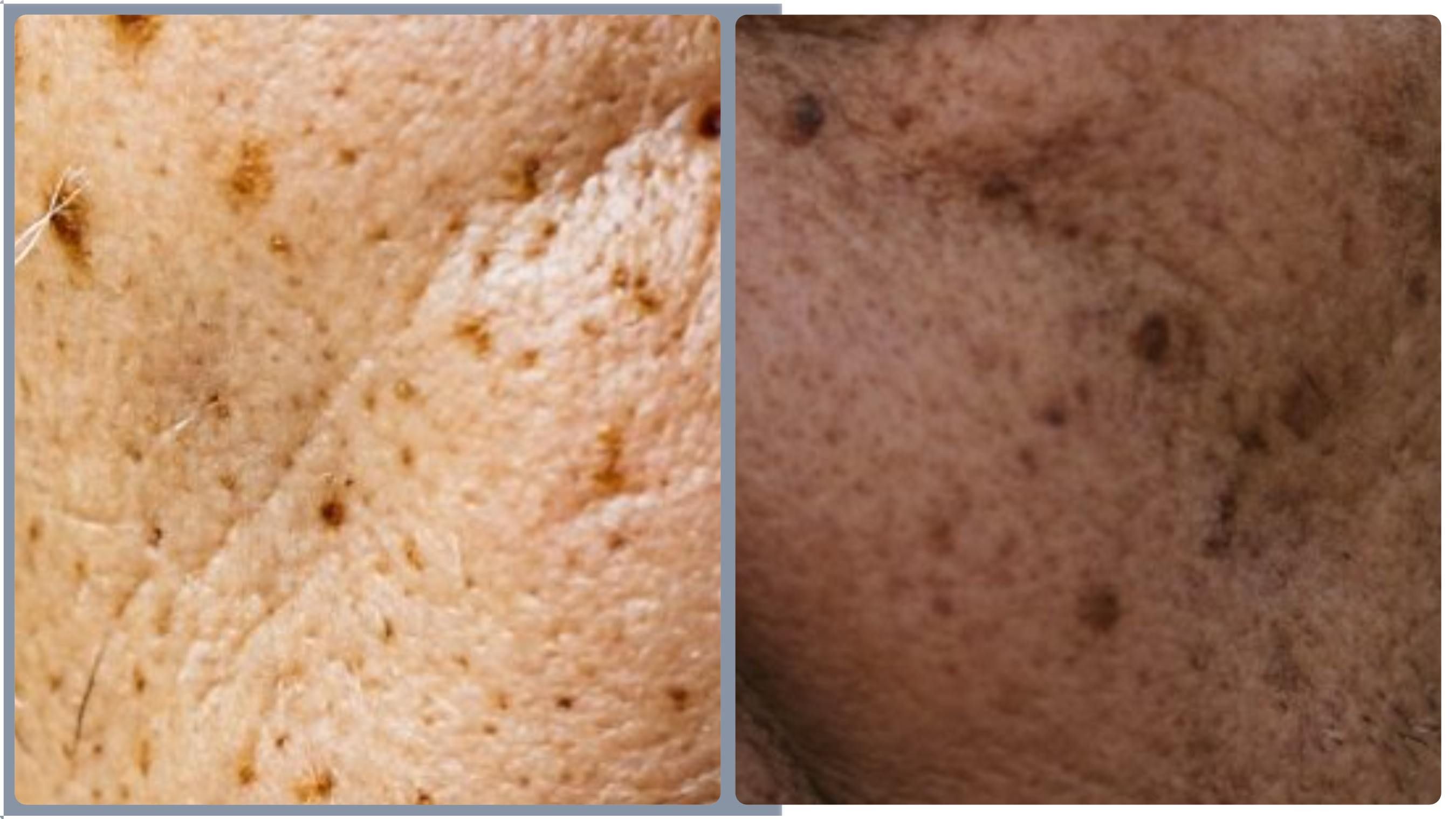

In the image, the left side is the 2K image generated by Qwen Image 2.0 and the right side is the simulated 1K image. Next, I'll zoom in by 400% on the area inside the red box.
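If you want to reproduce this kind of close-up comparison, the zoom method matters: enlarging the crop with nearest-neighbor sampling turns each source pixel into a 4 × 4 block, so the comparison shows the pixels as generated instead of adding blur of its own. The sketch below works on a plain list-of-lists grayscale image; the crop box is a hypothetical example, since the red box's exact coordinates are not given.

```python
def crop(img, left, top, width, height):
    """Return the width x height region whose top-left corner is (left, top)."""
    return [row[left:left + width] for row in img[top:top + height]]


def zoom_nearest(img, factor=4):
    """Enlarge by an integer factor; each pixel becomes a factor x factor block (400% = 4)."""
    out = []
    for row in img:
        wide = [value for value in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


# Tiny 4x4 test image. For a real comparison, bring both renders to the same
# pixel dimensions first so the same box covers the same content in each.
image = [[r * 4 + c for c in range(4)] for r in range(4)]

# Hypothetical "red box": a 2x2 region starting at column 1, row 1.
region = crop(image, left=1, top=1, width=2, height=2)
zoomed = zoom_nearest(region, factor=4)
print(region)
print(len(zoomed), len(zoomed[0]))
```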

Analysis: Qwen 2.0 (Native 2K) vs. Simulated Standard 1K

- Texture & Geometry: Qwen 2.0 (Left) exhibits superior micro-geometry, preserving distinct pores and sharp vellus hairs with organic depth. Standard 1K (Right) suffers from "smearing" artifacts, where fine details merge into a muddy, undefined pattern due to upscaling data loss.

- Contrast & Realism: Left maintains high micro-contrast and realistic light interaction, showing clear depth in skin ridges. Right appears "flat" and "waxy" as noise-reduction smoothing destroys the subtle shadows essential for skin realism.

- Artifact Control: Left is clean and grounded. Right shows heavy pixelation and "pixel-soup" artifacts, failing to reconstruct high-frequency details from a 1024p base.

More Than Just Pixels: The Unified 7B Architecture

The true innovation of Qwen Image 2.0 lies in its efficiency. While its predecessor utilized 20 billion parameters, the 2026 version has been optimized down to a 7B unified architecture.

Efficiency Meets Power

Alibaba didn't simply trim the model from 20B to 7B; it rebuilt the system from scratch. Although the new model is nearly three times smaller, it outperforms its predecessor on major benchmarks and has climbed to the top of the AI Arena leaderboard.
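The size claim is easy to check with quick arithmetic. The sketch assumes weights stored in bfloat16 (2 bytes per parameter) and counts only the 20B and 7B figures quoted here; it ignores activations, the separately listed text encoder, and any quantization, so treat the gigabyte numbers as rough weight-only estimates.

```python
BYTES_PER_PARAM = 2  # ASSUMPTION: bfloat16 weights


def weight_gb(params_billion, bytes_per_param=BYTES_PER_PARAM):
    """Approximate weight storage in GB, using 1 GB = 1e9 bytes."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


old_b, new_b = 20, 7
print(f"size ratio: {old_b / new_b:.2f}x")
print(f"weights: {weight_gb(old_b)} GB -> {weight_gb(new_b)} GB")
```

The exact ratio is about 2.86×, which rounds to "three times smaller".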

The "One-Model" Advantage

Unlike competitors that chain separate models for generation and editing, Qwen Image 2.0 uses a single pipeline for both. This one-model approach keeps skin and clothing textures consistent during edits: if you swap a character's outfit, the lighting and fine fabric detail stay matched to the original scene. That avoids the "fake" or "pasted-on" look that often appears when several different tools are used for one job.

Surgical Semantic Adherence

The model’s expanded 1,000-token prompt capacity allows users to act as "material scientists" rather than just prompt engineers. You can describe textures with surgical precision to achieve specific results:

- Complex Materials: "Worn out full-grain leather showing clear salt marks and tiny cracks right along the stitching."

- Environmental Detail: "Old limestone blocks filled with fossil shapes and bits of wet green moss."

This level of detail helps architectural renderings look intentional and structurally sound. Whether you are creating a professional marketing asset or a cinematic frame, Qwen Image 2.0 provides the precision required for production-ready visuals.
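To get a feel for how much room a 1,000-token budget gives, the sketch below estimates prompt length with the common rule of thumb that one token is roughly 0.75 English words. That ratio is an assumption; real counts depend on the tokenizer, so use the model's own tokenizer for exact numbers. The 77-token figure is the context length of the CLIP text encoder used by models such as Stable Diffusion 1.5 and SDXL.

```python
CLIP_TOKEN_LIMIT = 77      # CLIP text encoder context (e.g. Stable Diffusion 1.5, SDXL)
QWEN_PROMPT_BUDGET = 1000  # prompt capacity quoted in this article


def estimate_tokens(prompt: str) -> int:
    """Heuristic estimate: ceil(words / 0.75), i.e. about 4 tokens per 3 words."""
    words = len(prompt.split())
    return -(-words * 4 // 3)  # integer ceiling division


def fits(prompt: str, budget: int) -> bool:
    return estimate_tokens(prompt) <= budget


leather = ("Worn out full-grain leather showing clear salt marks "
           "and tiny cracks right along the stitching.")
long_brief = "word " * 300  # stand-in for a detailed 300-word material brief

for name, text in (("leather", leather), ("300-word brief", long_brief)):
    print(name, estimate_tokens(text),
          "fits CLIP:", fits(text, CLIP_TOKEN_LIMIT),
          "fits 1,000:", fits(text, QWEN_PROMPT_BUDGET))
```

A single material description fits either budget; a full multi-material brief of a few hundred words is where a 77-token encoder would start silently dropping instructions.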

Case Study: Three Areas Where Qwen 2.0 Wins

The transition to native 2K image generation isn't just a technical milestone; it's a practical revolution for specialized industries. By capturing "micro-geometry" at the source, Qwen Image 2.0 excels in scenarios where traditional upscaled models typically fail.

Macro Photography: Beyond the Visible

In macro photography, the smallest artifact can break the illusion of reality. Qwen Image 2.0 demonstrates exceptional precision when rendering intricate subjects like a bee’s iridescent wing or the internal gears of a mechanical watch. Because the 7B diffusion decoder operates natively at 2048px, it preserves the sharp edges of tiny components and the semi-transparent textures of biological specimens without the "hallucinated" blurring often seen in post-processed images.

Prompt:

A macro photograph of a luxury skeletonized tourbillon watch, presented in a partial 'exploded view' layout. The sapphire glass and titanium ring float just above the base plate. This setup lets you see the whole gear system inside.

The escapement wheel and hairspring are visible in the foreground with microscopic chamfered edges and faint linear brushing. Visible micro-screws with blued-steel finishes secure the bridges. Background elements include the mainspring barrel and a blurred ruby jewel bearing with realistic light refraction. Lighting is high-contrast cinematic 'rim lighting' to define the edges of every gear tooth. No shimmering on the hair-thin balance spring. Hyper-realistic textures, visible metallic grain, zero noise.

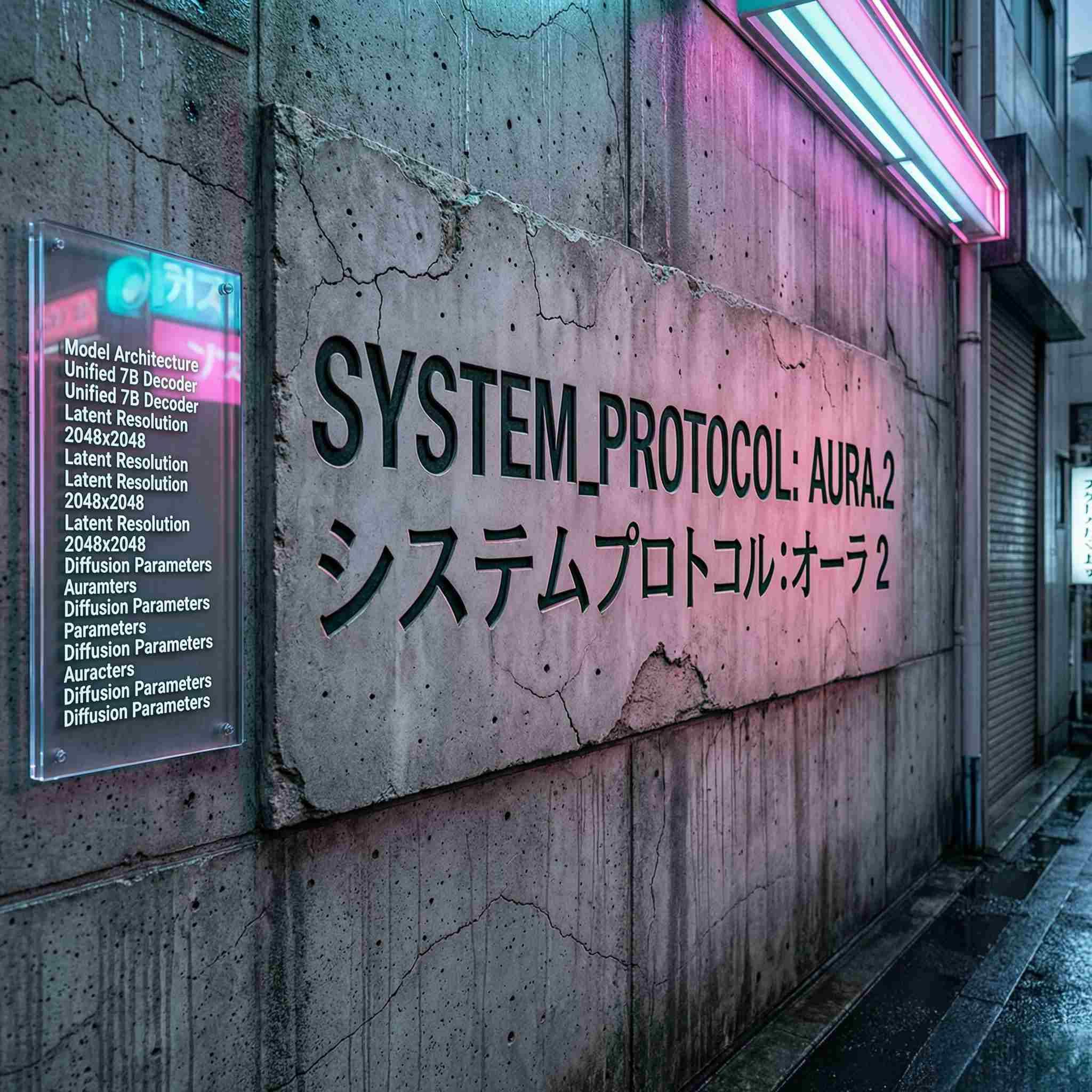

Professional Typography and Bilingual Layouts

Qwen Image 2.0 accepts prompts of up to 1,000 tokens, which lets you write detailed instructions that specify exactly where text goes and which fonts to use.

- True Perspective: Text on surfaces, such as an old sign on a brick wall, follows the angle and shape of the background.

- Natural Lighting: Lettering picks up realistic reflections from its surroundings, which makes it look genuinely part of the scene.
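Geometrically, lettering that "fits the angle" of a flat wall is a planar perspective transform, or homography: a 3 × 3 matrix maps each point on the flat sign to its position in the photo, and a division by the third coordinate makes far edges shrink. The sketch below applies an illustrative, hypothetical matrix to a sign's corners. It shows the geometry the output has to respect, not how Qwen Image 2.0 renders text internally.

```python
def apply_homography(H, x, y):
    """Map (x, y) through a 3x3 homography using homogeneous coordinates."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w  # dividing by w produces the foreshortening


# Hypothetical matrix: a wall receding to the right (values chosen for illustration).
H = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0],
     [0.002, 0.0, 1.0]]

# Corners of a flat 200 x 100 sign, clockwise from top-left.
sign = [(0, 0), (200, 0), (200, 100), (0, 100)]
for x, y in sign:
    px, py = apply_homography(H, x, y)
    print(f"({x}, {y}) -> ({px:.1f}, {py:.1f})")
```

The sign's right edge comes out about 71.4 px tall instead of 100 px, the foreshortening a viewer expects from a wall turning away. Lettering whose strokes do not follow the same mapping is what makes text look pasted on.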

Prompt:

A super-clear shot shows a worn concrete wall in a wet Tokyo alley. The surface looks rough and soaked from the rain. Right in the middle of this porous concrete, there is a large area with deep carvings cut into the stone.

In bold, clean sans-serif English letters, the main title reads: 'SYSTEM_PROTOCOL: AURA.2'.

Directly below this, engraved in precise, traditional Japanese Mincho calligraphy, is the corresponding title: 'システムプロトコル:オーラ 2'.

The carved letters have real stone chips inside the lines. Pink neon light from a sign nearby hits the rough edges of each character. This light creates tiny shadows deep inside the stone carvings.

A multi-layered acrylic sign sits on the concrete to the left of the main text. On its surface, ten lines of clear technical info are printed in a 12pt sans-serif font. The text mentions things like 'Model Architecture', 'Unified 7B Decoder', and '2048x2048 Resolution'. You can read every word easily, even with the cyan and magenta lights glowing nearby. There are also clear light reflections bouncing off the plastic surface.

The surrounding concrete texture must reveal individual sand grains, hairline cracks, and water streaking, providing high-detail context. 2K resolution is mandatory to maintain perfect typography alignment across both the engraved and printed text.

Architectural Visualization: Texture Without "Shimmer"

For designers, high-detail architectural renderings are often plagued by "shimmering" or moiré patterns in fine textures. Qwen Image 2.0 addresses this by rendering raw concrete, brushed steel, and wood grain natively at 2K.
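Moiré and shimmer are forms of aliasing: a pattern finer than half the sampling rate (the Nyquist limit of 0.5 cycles per pixel) shows up as a false, coarser pattern. The sketch below computes that apparent frequency and checks it against sampled values. The stripe density is a hypothetical example; the point is that the same stripes, framed the same way, have half the frequency per pixel on a 2048-pixel grid as on a 1024-pixel grid, which can move them back under the limit. This is a simplified point-sampling model of the effect, not an account of Qwen Image 2.0's internals.

```python
import math

NYQUIST = 0.5  # cycles per pixel when sampling once per pixel


def alias_frequency(f: float) -> float:
    """Apparent frequency (0 to 0.5 cycles/pixel) of a pattern with true frequency f."""
    return abs(f - round(f))


def sample(f: float, n: int) -> list:
    """Sample a cosine stripe pattern of frequency f at n pixel positions."""
    return [math.cos(2 * math.pi * f * i) for i in range(n)]


f_1k = 0.8       # hypothetical stripe density on a 1024-pixel-wide render
f_2k = f_1k / 2  # same stripes and framing on a 2048-pixel-wide render

for label, f in (("1K", f_1k), ("2K", f_2k)):
    status = "aliased" if f > NYQUIST else "faithful"
    print(f"{label}: true {f} cycles/px, apparent {alias_frequency(f):.2f} ({status})")

# Sampled once per pixel, 0.8-cycle stripes are indistinguishable from 0.2-cycle ones.
print(all(math.isclose(a, b, abs_tol=1e-9)
          for a, b in zip(sample(f_1k, 32), sample(alias_frequency(f_1k), 32))))
```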

Prompt:

A high-end, simple kitchen where a massive island takes center stage. It is made of dark walnut with a rich, wavy wood grain. The top features a matte, brushed steel finish that shows off thin, horizontal lines. These catch the morning sun beautifully as it streams in through the giant windows. Look closely at the back wall: it's raw concrete and still has those round marks left over from the building molds. No shimmering on the metal surfaces. Extremely sharp edges, realistic light bounce between the wood and steel, photorealistic architectural photography.

| Material | Fidelity Level | Technical Edge |
| --- | --- | --- |
| Raw Concrete | High | Visible aggregate and porous surface detail. |
| Brushed Steel | Extreme | Linear grain patterns without digital noise. |
| Wood Grain | Organic | Natural knots and fibers rendered with the same fine-texture fidelity as fabrics. |

This stability is key for professional work, where buildings and materials have to look real. With the all-in-one system, architects can swap surfaces easily, for example changing a marble floor to polished concrete in seconds. Even after changes like these, any people in the scene still look like real humans, and the whole image stays sharp and consistent.

The New Standard for High-Fidelity AI

The landscape of AI image generation has reached a critical turning point. The days of "good enough" 1024p generations followed by destructive upscaling are fading. Native 2K resolution is no longer a luxury reserved for high-end experimental rigs; it has become a fundamental requirement for professional AI workflows. Whether for commercial photography, architectural visualization, or high-end digital art, the demand for "pixel-perfect" integrity is non-negotiable.

Moving to native high-resolution generation is a major win. Models like Qwen Image 2.0 remove the biggest headache of older models: the extra upscaling step. Creators can trust that their prompts will produce output that stays crisp and realistic, even when viewed at 1:1.

| Industry | Impact of Native 2K Workflow |
| --- | --- |
| Marketing & Ads | Print-ready assets with zero "waxy" skin artifacts. |
| Architecture | Stable textures on high-frequency patterns like steel and wood. |
| Product Design | Legible, perspective-accurate bilingual typography on 3D surfaces. |
| Entertainment | Cinematic frames that capture micro-expressions and fabric weaves. |

Final Thought: Capturing Reality at a Cellular Level

Qwen Image 2.0 isn't just catching up to the physical world; it is capturing it at a cellular level. By using a unified 7B architecture that maintains semantic adherence across 2048 × 2048 pixels, the model preserves the "micro-geometry" of life: the pores in human skin, the grit in concrete, and the individual threads in a textile. This level of fidelity shows that smaller, more efficient models can deliver superior results when the underlying architecture is optimized for resolution.

The standard for realism has been raised. In a world where AI-generated content is becoming indistinguishable from photography, the secret lies in the pixels you don't have to guess.

Ready to see the pores? Try Qwen Image 2.0 on Atlas Cloud.

FAQ

What is the difference between Native 2K generation and AI upscaling?

Native 2K generation creates microscopic details (like skin pores and fabric weaves) directly from the prompt within the latent space. Traditional AI upscaling "stretches" 1024 × 1024 images and uses predictive algorithms to "guess" the missing pixels. Native 2K avoids the "waxy" skin and "hallucinated" artifacts common in upscaled images, preserving structural integrity for professional print and marketing assets.

How does the 1,000-token prompt capacity improve AI image accuracy?

Many earlier models, including those built on the CLIP text encoder such as Stable Diffusion 1.5 and SDXL, truncate prompts at 77 tokens and lose complex instructions. Qwen Image 2.0's expanded 1,000-token capacity allows for "Surgical Semantic Adherence": creators can provide exhaustive descriptions of micro-geometry, lighting, and bilingual text placement, and the model keeps intricate material and environmental details in context.

Why is Qwen Image 2.0’s 7B architecture better than larger 20B models?

Size doesn't always equal quality. While older 20B models relied on sheer parameter count, Qwen Image 2.0 uses a unified 7B architecture optimized specifically for native high-resolution output. By pairing the 8B vision encoder with a specialized 7B diffusion decoder, it achieves higher benchmark scores and cleaner 2K textures with lower latency and higher computational efficiency.