Not long ago, making a decent social video required a team: scripting, shooting, editing, sound design. By 2026, for short clips, much of that process can be compressed into a text prompt and an API call. The infrastructure that turns a product description, a line of script, or a content brief into a near‑publishable video clip now exists.

This article explains what that infrastructure looks like, how to build on it, and what it takes to make it work reliably at scale.

Why Social Video Automation Matters Now

Short‑form video is no longer just entertainment. TikTok, Instagram Reels, and YouTube Shorts have become core distribution engines for culture, marketing, and e‑commerce. But there is a simple constraint: content production does not scale with demand.

Even for experienced creators, producing a high‑quality video takes serious time – scripting, storyboarding, shooting or finding footage, editing, color grading, sound mixing, captions. The bottleneck is rarely ideas. It is execution speed. When a trend lasts only a few hours, whoever publishes first wins. AI video generation changes the game by turning batch production from a capital project into a routine operational cost.

Why APIs Matter More Than User Interfaces

Many AI video tools offer nice web interfaces: type a prompt, click a button, see the result. That is convenient for individual creators. But if you are building an automated content system, a UI will not help you. What enables real scale is the API.

APIs bring programmability. You can submit jobs in batches. You can automatically adjust aspect ratios for different platforms. You can embed video generation into your SaaS platform as a native feature. You can run programmatic A/B tests – generate ten stylistic variations of the same product description, publish them to different audience segments, then use engagement data to refine the next batch of prompts.
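As a rough sketch of what "programmability" means in practice, the snippet below expands one description into a batch of job payloads, one per platform and style. The field names follow the request fields described in this article; the platform keys and the `build_variants` helper are illustrative assumptions, and the aspect ratios should be checked against each platform's current specifications.

```python
from itertools import product

# Assumed target formats per placement; verify against each platform's specs.
ASPECT_RATIOS = {
    "tiktok": "9:16",
    "instagram_reels": "9:16",
    "youtube_shorts": "9:16",
    "instagram_feed": "1:1",
}


def build_variants(prompt, styles, platforms, duration=6):
    """Return one job description per (platform, style) pair."""
    return [
        {
            "platform": platform,
            "payload": {
                "prompt": prompt,
                "duration": duration,
                "style": style,
                "aspect_ratio": ASPECT_RATIOS[platform],
            },
        }
        for platform, style in product(platforms, styles)
    ]
```

Each returned payload can be submitted as a separate job, and the `platform` key tells the downstream scheduler where the finished clip belongs.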

Consider an e‑commerce platform that lists two hundred new products every day. Making a showcase video for each manually would require dozens of video professionals. With an API, you write a script that reads the product database, automatically assembles a prompt template, calls the API, and pushes the results to a social media scheduler. No human ever opens editing software. UIs are for people. APIs are for systems. The real breakthrough comes from the latter.
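The catalogue workflow described above might be wired together roughly as follows. This is a minimal sketch: `generate_video` and `schedule_post` are hypothetical callables standing in for your video API client and your social scheduler, and the product fields (`id`, `name`, `description`) are assumed.

```python
def run_catalog_pipeline(products, generate_video, schedule_post):
    """Generate and schedule one showcase video per product.

    generate_video: callable(payload) -> video URL (hypothetical API wrapper)
    schedule_post:  callable(product_id, video_url) -> None (hypothetical)
    """
    published, failed = [], []
    for item in products:
        payload = {
            "prompt": f"Product showcase of {item['name']}: {item['description']}",
            "duration": 6,
            "aspect_ratio": "9:16",
        }
        try:
            video_url = generate_video(payload)
            schedule_post(item["id"], video_url)
        except Exception as error:
            # One bad product should not stop the rest of the batch.
            failed.append((item["id"], str(error)))
            continue
        published.append((item["id"], video_url))
    return published, failed
```

Passing the two integrations in as functions keeps the loop testable with stubs and lets you swap providers without touching the pipeline logic.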

The Life of One API Call

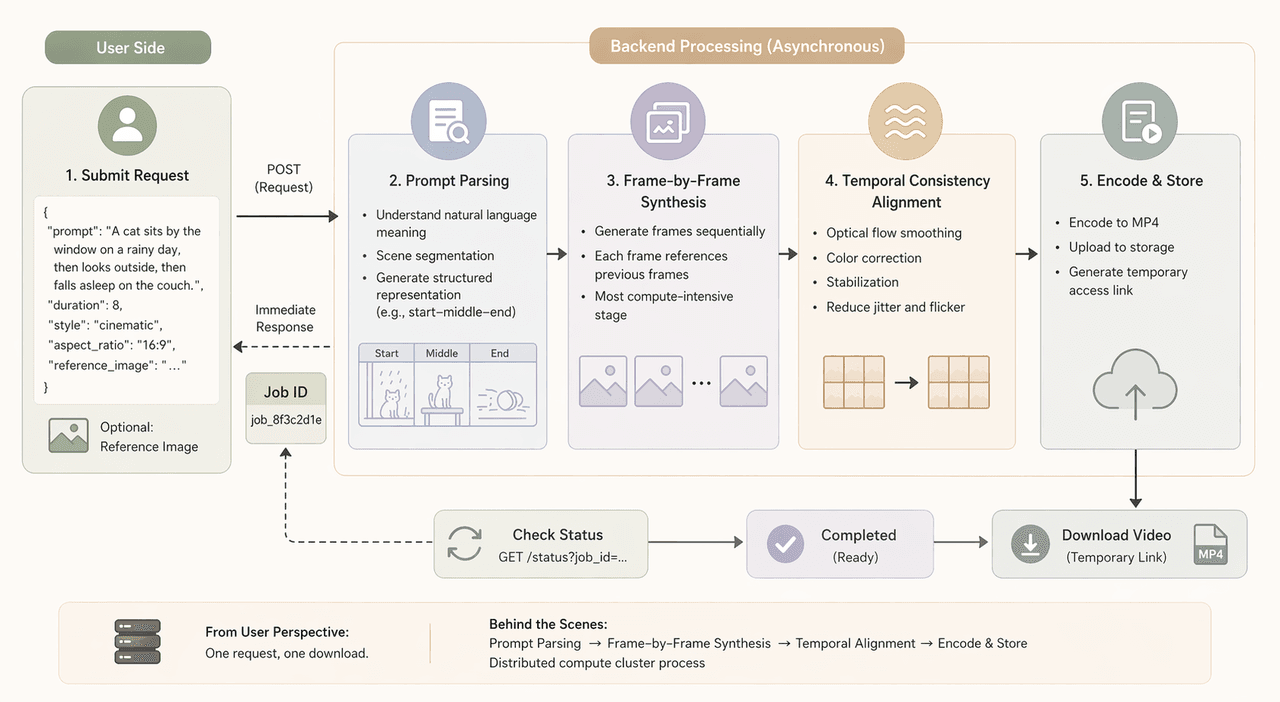

Let us trace a real API call from submission to download.

First, you package your prompt and parameters into JSON. The request typically includes prompt, duration (say, eight seconds), a style preset, aspect_ratio, and sometimes a reference image to lock in a character or scene. You send this to the endpoint. Because generation takes seconds to minutes, the process is asynchronous: the system immediately returns a unique job ID, which you use later to check the status and retrieve the finished video.
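On the client side, the asynchronous flow usually comes down to a polling loop. The sketch below assumes a hypothetical `fetch_status` callable that wraps your provider's status endpoint and returns a dict with a `status` field; the status values (`completed`, `failed`) and the `video_url` key are assumptions, so map them to whatever your provider actually returns.

```python
import time


def wait_for_job(job_id, fetch_status, interval=5.0, timeout=600.0,
                 sleep=time.sleep, clock=time.monotonic):
    """Poll until the job finishes, fails, or the timeout expires.

    fetch_status: callable(job_id) -> dict such as
                  {"status": "completed", "video_url": "..."} (assumed shape)
    """
    deadline = clock() + timeout
    while True:
        job = fetch_status(job_id)
        if job["status"] == "completed":
            return job["video_url"]
        if job["status"] == "failed":
            reason = job.get("error", "unknown error")
            raise RuntimeError(f"job {job_id} failed: {reason}")
        if clock() >= deadline:
            raise TimeoutError(f"job {job_id} still running after {timeout}s")
        sleep(interval)
```

If your provider supports webhooks, a callback is usually preferable to polling for large batches; the loop above is the simplest option that works everywhere.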

After submission, the backend starts working. Internals vary by provider, but a typical pipeline has three stages.

Step one is prompt parsing – turning your natural language into a structured representation. This includes scene segmentation: if your description implies three sequential actions, the model figures out the beginning, middle, and end.

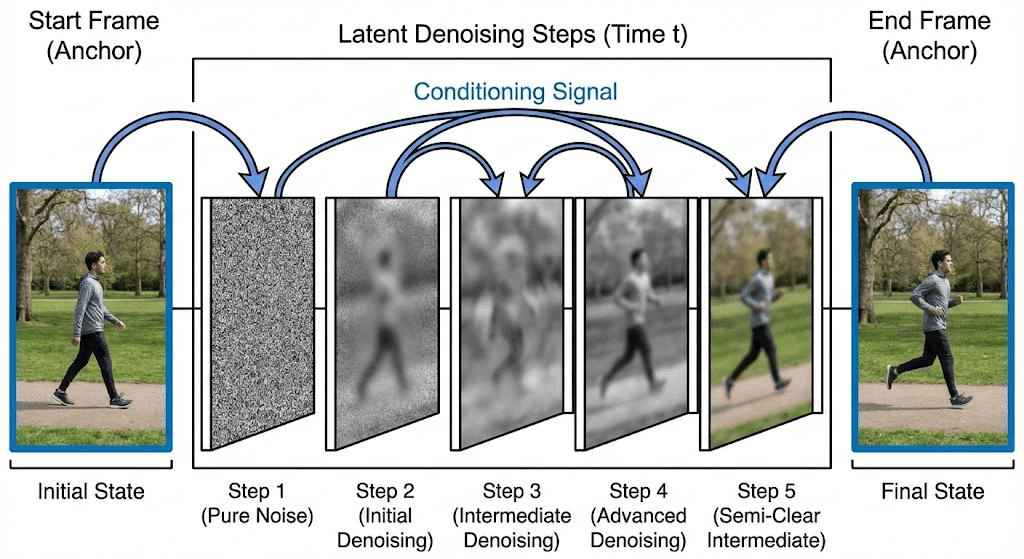

Step two is frame synthesis. Some models generate frames sequentially, each referencing previous frames to maintain coherence; others generate a block of frames jointly. Either way, this is the most compute‑intensive stage.

Step three is temporal consistency alignment. Even with the model's best efforts, raw frames may have small jitter. A post‑processing stage applies optical flow smoothing, color correction, and stabilization.

Finally, the system encodes the video into MP4, uploads it to storage, and generates a temporary access link. From the user's perspective, it is one request and one download. Behind the scenes, a distributed compute cluster has done a lot of work.
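Because the access link is temporary, it is worth copying the file into your own storage as soon as the job completes. A minimal sketch using only the standard library follows; the `save_video` name and the directory layout are assumptions, not part of any provider's SDK.

```python
import shutil
import urllib.request
from pathlib import Path


def save_video(temp_url, dest_dir, job_id):
    """Download the clip behind a temporary link into local storage."""
    dest = Path(dest_dir) / f"{job_id}.mp4"
    dest.parent.mkdir(parents=True, exist_ok=True)

    # Write to a partial file first so a dropped connection never leaves
    # a truncated .mp4 that looks like a finished download.
    partial = dest.with_suffix(".part")
    with urllib.request.urlopen(temp_url, timeout=60) as response, \
            open(partial, "wb") as out:
        shutil.copyfileobj(response, out)
    partial.replace(dest)
    return dest
```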

How Different People Use This

Independent creators use the API to multiply their output. You can take one core idea and generate a dozen variations – change the color tone, swap the voiceover style, adjust camera motion. Instead of three videos a week, you can make ten a day. The bottleneck shifts from production speed to your judgment about what to keep.

Quick demo: One idea, many variations

```python
import requests

API_KEY = "YOUR_API_KEY"
# Check your provider's documentation for the exact video generation endpoint.
url = "https://api.atlascloud.ai/api/v1"

styles = ["cinematic", "anime", "documentary", "vlog"]

# Submit the same prompt once per style; each request returns its own job ID.
for style in styles:
    payload = {
        "prompt": "A cat sitting by the window, wind blowing curtain",
        "duration": 6,
        "style": style,
        "aspect_ratio": "1:1",
    }

    res = requests.post(
        url,
        json=payload,
        headers={"Authorization": f"Bearer {API_KEY}"},
        timeout=30,
    )
    res.raise_for_status()  # fail loudly on HTTP errors instead of a KeyError below

    print(f"{style} → job_id:", res.json()["job_id"])
```

Marketing teams use more systematic approaches. A common use case is multi‑region localization. A global brand launching in twenty countries can generate a master video, then run a script that automatically replaces on‑screen text, voiceover, and visual details for each language. A month of work becomes a few days.

E‑commerce is another fast‑growing area. A static product image plus a short description becomes a dynamic showcase. For a smartwatch, you input a close‑up description with lighting and camera movement, and the system generates a six‑second loop. Placed on a product page, such clips often outperform static images. And you can batch the whole catalogue.

Developers and SaaS platforms package video generation as a service. A social media scheduling tool might integrate an API so users can input a tweet and have the tool automatically expand it into a short video script, generate the video, and schedule it. These platforms are turning video generation into a fundamental capability.

Making AI Output Production‑Ready

A hard truth: raw API output is rarely ready to publish. Successful production systems wrap the API with several layers.

First, prompt engineering. Mature teams maintain prompt template libraries for different categories, styles, and platforms. An Instagram Reels prompt emphasizes high saturation and fast cuts. A YouTube Shorts prompt focuses on narrative flow. Templates have variables that scripts fill in dynamically.
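A template library can start out as a simple dictionary keyed by category and platform. The sketch below uses Python's built-in `string.Template`; the template wording, the keys, and the `render_prompt` helper are illustrative assumptions rather than recommended prompts.

```python
from string import Template

# Illustrative templates only; real libraries are tuned per model and brand.
PROMPT_TEMPLATES = {
    ("product", "instagram_reels"): Template(
        "$product_name, $feature. High saturation, fast cuts, $style style."
    ),
    ("product", "youtube_shorts"): Template(
        "A short story about $product_name: $feature. "
        "Clear narrative flow, $style style."
    ),
}


def render_prompt(category, platform, **variables):
    """Fill a template; raises KeyError if a variable or template is missing."""
    template = PROMPT_TEMPLATES[(category, platform)]
    return template.substitute(**variables)
```

Using `substitute` rather than `safe_substitute` is deliberate: a missing variable fails immediately instead of sending a prompt with a literal `$feature` in it.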

Second, generation quality management. The same prompt run five times might produce three usable clips and two with glitches – a deformed finger, an illogical background object. You write automated checks that detect common failure modes and flag clips for regeneration.
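Detecting a deformed finger generally requires a vision model or human review, but cheap structural checks catch a useful share of failures before that step. The sketch below assumes you have already extracted clip metadata into a dict (for example with a media probing tool); the field names and thresholds are hypothetical.

```python
def check_not_empty(clip):
    return "empty file" if clip["size_bytes"] == 0 else None


def check_duration(clip, tolerance=0.5):
    # Flag clips that came back noticeably shorter or longer than requested.
    if abs(clip["duration"] - clip["requested_duration"]) > tolerance:
        return "duration mismatch"
    return None


def check_resolution(clip, min_height=720):
    return "resolution too low" if clip["height"] < min_height else None


CHECKS = [check_not_empty, check_duration, check_resolution]


def find_failures(clip, checks=CHECKS):
    """Return the list of failure reasons; an empty list means the clip passed."""
    return [reason for check in checks if (reason := check(clip)) is not None]
```

A clip with a non-empty failure list goes back into the generation queue; a clip that passes moves on to visual review or publishing.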

Third, post‑processing pipelines. After generation, you might need to add a logo, intro/outro, or burned‑in subtitles. Do this with scripts, not by re‑importing into editing software.
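One common way to script this step is to drive `ffmpeg` from Python. The sketch below builds a command that overlays a logo in the top-right corner using ffmpeg's `overlay` filter; it assumes `ffmpeg` is installed and on the PATH, and the function names and margin are illustrative.

```python
import subprocess


def logo_overlay_command(video_path, logo_path, output_path, margin=20):
    """Build an ffmpeg command that places the logo in the top-right corner."""
    # W is the video width and w is the logo width, so W-w-margin is the
    # x offset that leaves `margin` pixels between the logo and the right edge.
    overlay = f"[0:v][1:v]overlay=W-w-{margin}:{margin}"
    return [
        "ffmpeg", "-y",
        "-i", str(video_path),
        "-i", str(logo_path),
        "-filter_complex", overlay,
        "-c:a", "copy",  # leave any audio track untouched
        str(output_path),
    ]


def add_logo(video_path, logo_path, output_path):
    """Run the overlay; raises CalledProcessError if ffmpeg fails."""
    subprocess.run(logo_overlay_command(video_path, logo_path, output_path), check=True)
```

Separating command construction from execution makes the pipeline easy to test and log without actually invoking ffmpeg.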

Fourth, caching and reuse. If your library repeatedly uses the same product or character, cache the results. This cuts costs and maintains visual consistency.
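A minimal cache can key each result on a hash of the full request payload, so that any change to the prompt or parameters produces a new entry. The sketch below keeps results in memory; the class name is an assumption, and a production system would more likely back it with a database or object store.

```python
import hashlib
import json


def cache_key(payload):
    """Stable hash of a request payload, independent of key order."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class GenerationCache:
    """In-memory cache mapping request payloads to generated results."""

    def __init__(self):
        self._store = {}

    def get_or_generate(self, payload, generate):
        key = cache_key(payload)
        if key not in self._store:
            # Only pay for generation the first time this payload is seen.
            self._store[key] = generate(payload)
        return self._store[key]
```

Because generation is not deterministic, the cache also pins one specific rendering of a product or character, which is where the consistency benefit comes from.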

All these layers together make a real content engine. The API is just a component. The value is in how you assemble the system.

What Still Does Not Work

AI video generation is far from perfect. If you try to generate anything longer than about fifteen seconds, you will likely run into problems – objects deform, scene logic breaks, characters become inconsistent. The effective narrative window of current models is short.

Computational cost is another constraint. Generating one second of high‑quality video takes far more GPU time than generating an image. Prices are falling, but for teams that need hundreds of videos per day, the math still matters. A pragmatic approach reserves high‑cost generation for critical content and uses cheaper options for testing.
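The arithmetic is simple enough to put in a script. The prices below are invented purely for illustration (they are not any provider's rates), and costs are kept in integer cents to avoid floating-point rounding.

```python
# Hypothetical prices in cents per generated second; substitute real quotes.
PREMIUM_CENTS_PER_SECOND = 50
DRAFT_CENTS_PER_SECOND = 5


def daily_cost_cents(videos, seconds_per_video, cents_per_second):
    """Total daily spend for one pricing tier."""
    return videos * seconds_per_video * cents_per_second


def mixed_daily_cost_cents(premium_videos, draft_videos, seconds_per_video):
    """Daily spend when only some videos use the expensive tier."""
    return (
        daily_cost_cents(premium_videos, seconds_per_video, PREMIUM_CENTS_PER_SECOND)
        + daily_cost_cents(draft_videos, seconds_per_video, DRAFT_CENTS_PER_SECOND)
    )
```

With these made-up numbers, 200 six-second videos a day cost 60,000 cents (600 dollars) if everything runs on the premium tier, and 16,800 cents (168 dollars) if only 40 of them do.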

Prompt unpredictability is a persistent nuisance. The same prompt may give different results today than yesterday. Results across providers vary wildly. Automated systems need extra robustness – assume that not every generation will meet expectations, and build in retries.
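A bounded retry loop is the simplest form of that robustness. In the sketch below, `generate` and `is_acceptable` are hypothetical callables supplied by your own system: the first produces a candidate clip, the second decides whether it is good enough.

```python
def generate_with_retries(generate, is_acceptable, max_attempts=3):
    """Return (result, attempts_used) for the first acceptable generation.

    generate:      callable(attempt_number) -> result (hypothetical)
    is_acceptable: callable(result) -> bool (hypothetical)
    """
    for attempt in range(1, max_attempts + 1):
        result = generate(attempt)
        if is_acceptable(result):
            return result, attempt
    # Cap the spend: give up and let a human or a fallback path take over.
    raise RuntimeError(f"no acceptable result after {max_attempts} attempts")
```

Capping the number of attempts matters as much as retrying: without a limit, a prompt the model cannot handle turns into an unbounded bill.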

Multi‑scene narrative coherence is still very weak. You can generate "a person drinking coffee in a café" and also generate "the same person walking out onto the street," but the model will not automatically understand the transition. To get a multi‑scene video, you currently have to describe every cut in detail.
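One workaround is to generate each scene as its own clip and repeat the shared details in every prompt. The sketch below builds per-scene prompts from a shot list; the prompt wording and the `build_scene_prompts` helper are assumptions, and how well this preserves continuity depends heavily on the model.

```python
def build_scene_prompts(character, scenes):
    """Build one prompt per scene, repeating the character description each time.

    scenes: list of dicts with "action" and "setting" keys (assumed structure)
    """
    prompts = []
    for index, scene in enumerate(scenes):
        parts = [f"{character}.", f"{scene['action']}.", f"Setting: {scene['setting']}."]
        if index > 0:
            # Spell out the cut explicitly; the model will not infer it.
            previous = scenes[index - 1]["action"]
            parts.append(f"Continues directly after: {previous}.")
        prompts.append(" ".join(parts))
    return prompts
```

Where the API accepts a reference image, passing the same image with every scene is likely to help more than text repetition alone.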

Where Things Are Headed

Despite these limitations, the direction is clear. Video generation will not remain a standalone tool. Over the next few years, expect fully automated content pipelines: a system scans trends each morning, automatically generates video concepts, runs small tests, picks the best performers, and amplifies them. Humans no longer make the routine creative decisions; they only perform the final brand‑safety review.

Also expect agentic creative systems. You give an AI agent a goal – "increase new product awareness this week" – and it proposes script directions, generates candidates, publishes them to test audiences, analyses feedback, adjusts strategy, and generates the next batch.

Real‑time personalised video streams will appear. A fitness app could produce a personalised weekly summary with the user's own data, progress visuals, and encouraging voiceover.

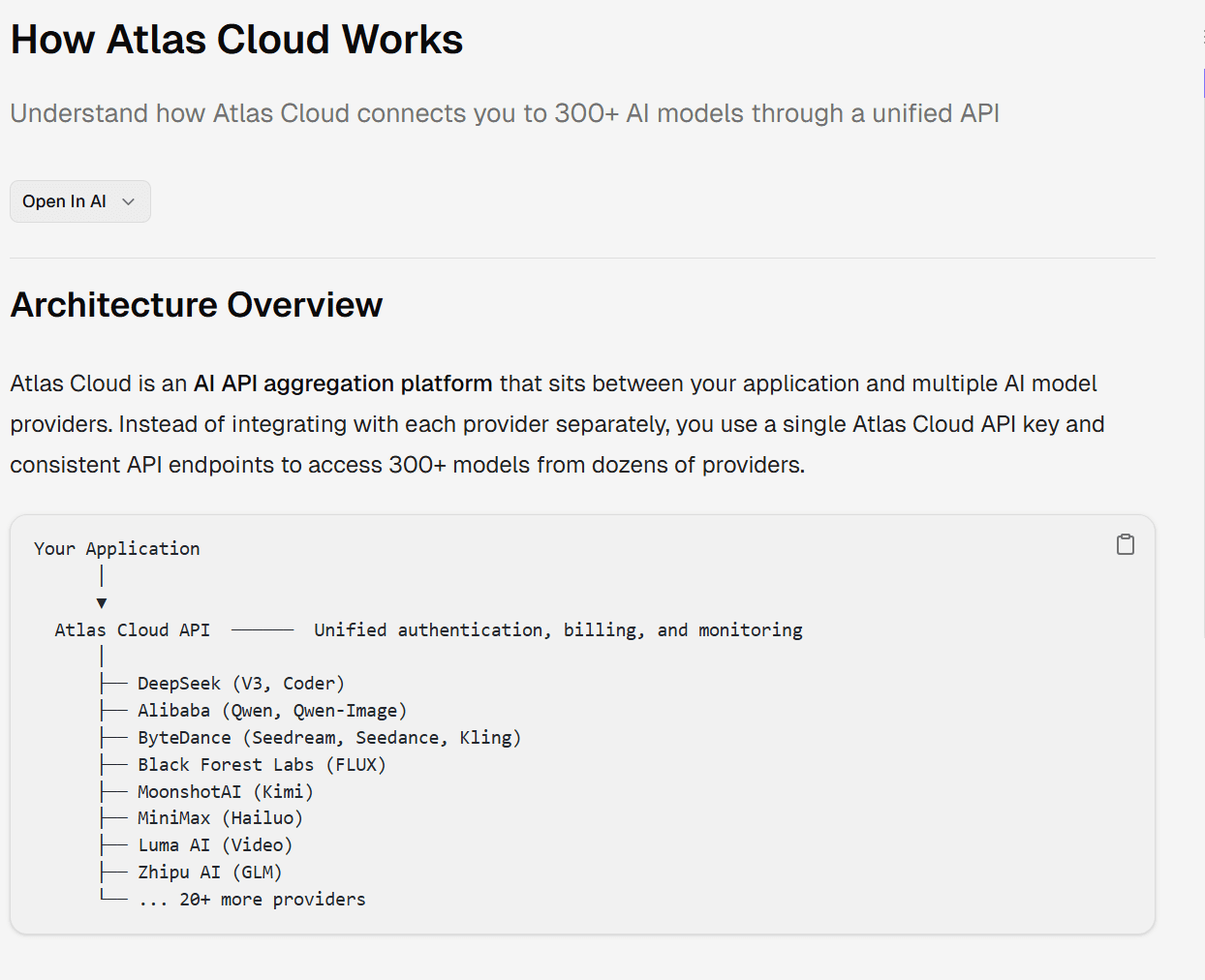

Finally, expect deep integration with marketing automation stacks. Platforms like AtlasCloud already aggregate multiple image and video generation models, making it easier for users to integrate them into their own creative or commercial projects.

Final Thought

The shift from manual editing to API‑based generation is not a tool upgrade. It is a structural change in how content is made and consumed. Video generation APIs are becoming the infrastructure layer of modern digital storytelling. For creators, this means scale. For developers, opportunity. For platforms, automation. And for the internet, a transition from static batch production to continuous generative media systems. That transition is already happening. Anyone with an API key and an idea can start building their own video pipeline – no million‑dollar budget required.