Budget matters. For every team with an unlimited production budget, there are a hundred teams that need to stretch every dollar. Alibaba's Wan 2.6 exists for the latter. At $0.07 per second of generated video on Atlas Cloud, it is the cheapest AI video generation model available through any major API -- and the quality-to-cost ratio is genuinely impressive. You will not confuse Wan 2.6 output with Sora 2 physics simulation or Veo 3.1 cinematic polish, but for the price of a single Sora 2 clip, you can generate over 20 seconds of Wan 2.6 video.

This Wan 2.6 tutorial covers everything developers need to integrate Alibaba's budget-friendly video model into their pipelines through Atlas Cloud -- pricing breakdowns, Python code examples, prompt tips, and a direct comparison against the leading alternatives.

*Last Updated: February 28, 2026*

See AI video models in action:

The Wan 2.6 API is accessible via Atlas Cloud for $0.07 per second of generated video. Atlas also provides $1 in free credit upon sign-up -- enough for over 14 seconds of Wan 2.6 video. Atlas customers can access Wan 2.6 alongside Seedance 2.0, Kling 3.0, Veo 3.1, Sora 2, and 300+ other models with a single API key.

Wan 2.6 at a Glance

| Spec | Detail |

| Developer | Alibaba |

| Model ID | `alibaba/wan-2.6/text-to-video` |

| Max Resolution | 1080p |

| Max Duration | 10 seconds |

| Frame Rate | 30fps |

| Native Audio | No |

| Reference Input | 1 image (image-to-video) |

| Core Strength | Cost efficiency, reliable quality at lowest price point |

| Atlas Cloud Price | $0.07/sec |

Why Wan 2.6 Matters

The Cost Advantage

The AI video generation market has a pricing problem. Premium models like Sora 2 ($0.15/sec) and Kling 3.0 ($0.126/sec) produce stunning output, but the per-clip costs add up fast for teams producing content at volume. A 10-second Sora 2 clip costs $1.50. The same duration from Wan 2.6 costs $0.70 -- less than half.

For a team generating 100 clips per week, the annual cost difference between Sora 2 and Wan 2.6 is over $40,000. That is not a trivial difference, and for many use cases -- social media content, draft previews, batch processing, concept testing -- the quality premium of more expensive models is not necessary.

Quality That Exceeds Expectations

Wan 2.6 is not a toy model marketed at a toy price. Alibaba invested heavily in the underlying architecture, and the results show. At 1080p resolution with 30fps frame rate, the output is clean, coherent, and usable for production contexts where absolute top-tier quality is not the requirement. Motion rendering is smooth, colors are accurate, and temporal consistency holds across the full 10-second duration.

Is it as good as Seedance 2.0 or Veo 3.1? No. But it is 44% cheaper than Kling 3.0 ($0.126/sec) and 53% cheaper than Sora 2 ($0.15/sec), which are the premium models most teams compare against. The value proposition is clear: Wan 2.6 delivers solid quality at a fraction of the premium models' cost.

Alibaba's AI Research Pedigree

Alibaba's AI research division is one of the largest in the world. The Wan series of models benefits from the same infrastructure and research investment that powers Alibaba's cloud computing, e-commerce recommendation engines, and natural language processing systems. Wan 2.6 represents the team's latest iteration on efficient video generation -- a model specifically optimized to deliver maximum visual quality per dollar.

Key Features of Wan 2.6

Text-to-Video Generation

The core text-to-video pipeline accepts natural language prompts and generates 1080p video clips up to 10 seconds long. The model handles a wide range of subjects -- people, animals, landscapes, abstract scenes, product demonstrations -- with reasonable quality across all categories. It is not specialized for any single style, which makes it a good general-purpose option for teams with varied content needs.

Image-to-Video Generation

Wan 2.6 accepts a single reference image as the starting frame for video generation. This is useful for animating still photographs, creating video from product images, or maintaining visual consistency with existing brand assets. The model preserves the visual style and composition of the input image while adding natural motion and temporal progression.

1080p Output at 30fps

At 1080p resolution and 30 frames per second, Wan 2.6 output meets the minimum standard for professional use on social media, web content, and internal presentations. The resolution is not the highest in the market -- Kling 3.0 offers Ultra HD -- but 1080p is sufficient for the vast majority of digital video use cases, particularly when the content will be viewed on mobile devices or embedded in web pages.

Fast Generation Times

Wan 2.6 clips typically generate in 20-60 seconds, depending on duration and complexity. This is comparable to or faster than more expensive models, making it viable for interactive applications and workflows that require quick turnaround.

Consistent Quality

One of Wan 2.6's practical strengths is consistency. The quality variance between generations is relatively low compared to some competing models. You will get fewer "bad" generations that need to be discarded and regenerated, which effectively reduces the true cost-per-usable-clip even further.

Wan 2.6 Pricing

Alibaba Direct Access

Wan 2.6 is available through Alibaba Cloud's Model Studio platform, which requires an Alibaba Cloud account. The pricing model and documentation are primarily oriented toward the Chinese market, with interfaces and documentation that may present friction for international developers. API access requires navigating Alibaba Cloud's console, which has a steeper onboarding curve than alternatives.

Atlas Cloud API Pricing (Recommended)

The most straightforward way for developers to access the Wan 2.6 API is through Atlas Cloud:

| Detail | Value |

| Model | `alibaba/wan-2.6/text-to-video` |

| Price | $0.07/sec |

| 5-second clip | $0.35 |

| 10-second clip (max) | $0.70 |

| Free signup credit | $1.00 |

| Queue | No wait times |

The $1 free credit at sign-up equals over 14 seconds of Wan 2.6 video -- enough for at least one full-length clip and several shorter tests. This is more free video than any other model on the platform provides relative to its pricing.

Cost at Scale

For teams producing video at volume, Wan 2.6's cost advantage compounds:

- 50 clips/week (10s each): $35/week, ~$1,820/year

- 100 clips/week (10s each): $70/week, ~$3,640/year

- 500 clips/week (10s each): $350/week, ~$18,200/year

For comparison, the same 500 clips/week at Sora 2 pricing ($0.15/sec) would cost $39,000/year -- more than double.

Cost Comparison Across Models

| Model | Price/sec | 10s Clip | 100 Clips/Week (Annual) |

| Wan 2.6 | $0.07 | $0.70 | $3,640 |

| Seedance 2.0 | $0.022 | $0.22 | $1,144 |

| Veo 3.1 | $0.03 | $0.30 | $1,560 |

| Kling 3.0 | $0.126 | $1.26 | $6,552 |

| Sora 2 | $0.15 | $1.50 | $7,800 |

Note: Seedance 2.0 and Veo 3.1 are cheaper per second, making them better options for teams optimizing purely on cost. However, Wan 2.6 offers advantages in different areas -- it supports up to 10 seconds of video (compared to Veo 3.1's 8-second max), provides a distinct visual style from Alibaba's research, and delivers significant savings over premium models like Kling 3.0 and Sora 2. For teams that need affordable video generation without paying premium prices, Wan 2.6 is a solid option.

How to Access Wan 2.6 API

Option 1: Alibaba Cloud Direct

Wan 2.6 is accessible through Alibaba Cloud's Model Studio. This requires creating an Alibaba Cloud account, navigating the console (which is primarily designed for the Chinese market), and configuring API access. Documentation is available but may require translation for English-speaking teams.

Option 2: Atlas Cloud (Recommended)

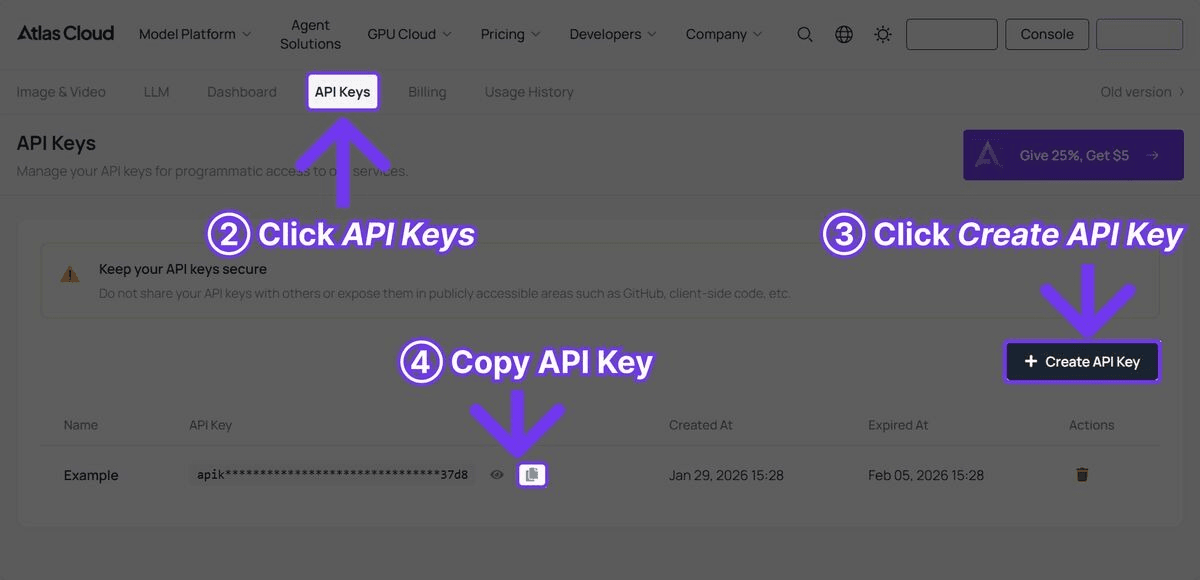

For most developers, Atlas Cloud offers the most accessible path to production with Wan 2.6. One API key provides access to Wan 2.6 and over 300 other models, including Seedance 2.0, Kling 3.0, Veo 3.1, and Sora 2. No separate accounts. Single billing. English-language documentation and support.

Step 1: Sign up on atlascloud.ai and get your API key from the dashboard. $1 free credit is added automatically to your account.

Step 2: Generate video with Wan 2.6 in Python:

plaintext1 2```python 3import requests 4import time 5 6 7API_KEY = "your-atlas-cloud-api-key" 8BASE_URL = "https://api.atlascloud.ai/api/v1" 9 10 11# Generate video with Wan 2.6 12response = requests.post( 13 f"{BASE_URL}/model/generateVideo", 14 headers={ 15 "Authorization": f"Bearer {API_KEY}", 16 "Content-Type": "application/json" 17 }, 18 json={ 19 "model": "alibaba/wan-2.6/text-to-video", 20 "prompt": "A golden retriever running through a sunlit meadow with wildflowers, slow motion, warm natural lighting, shallow depth of field, cinematic quality", 21 "duration": 10, 22 "resolution": "1080p" 23 } 24) 25 26 27result = response.json() 28 29 30# Poll for results 31while True: 32 status = requests.get( 33 f"{BASE_URL}/model/prediction/{result['request_id']}/get", 34 headers={"Authorization": f"Bearer {API_KEY}"} 35 ).json() 36 if status["status"] == "completed": 37 print(f"Video: {status['output']['video_url']}") 38 break 39 time.sleep(5) 40```

Step 3: The API immediately returns a `request_id`. Poll the prediction endpoint until the status is `completed`, then retrieve the video URL from the response. Generation time for Wan 2.6 is typically 20-60 seconds depending on duration and prompt complexity.

Image-to-Video Example

Wan 2.6 also supports image-to-video generation, where a reference image serves as the starting frame:

plaintext1 2```python 3import requests 4import time 5 6 7API_KEY = "your-atlas-cloud-api-key" 8BASE_URL = "https://api.atlascloud.ai/api/v1" 9 10 11# Image-to-video with Wan 2.6 12response = requests.post( 13 f"{BASE_URL}/model/generateVideo", 14 headers={ 15 "Authorization": f"Bearer {API_KEY}", 16 "Content-Type": "application/json" 17 }, 18 json={ 19 "model": "alibaba/wan-2.6/text-to-video", 20 "prompt": "The camera slowly zooms in as the subject turns to face the viewer, soft natural movement, cinematic lighting", 21 "image_url": "https://example.com/your-reference-image.jpg", 22 "duration": 8, 23 "resolution": "1080p" 24 } 25) 26 27 28result = response.json() 29 30 31# Poll for results 32while True: 33 status = requests.get( 34 f"{BASE_URL}/model/prediction/{result['request_id']}/get", 35 headers={"Authorization": f"Bearer {API_KEY}"} 36 ).json() 37 if status["status"] == "completed": 38 print(f"Video: {status['output']['video_url']}") 39 break 40 time.sleep(5) 41```

Wan 2.6 Prompt Tips

After extensive testing with the Wan 2.6 API, the following prompt strategies produce the best results at this price point.

1. Keep Prompts Focused on One Action

Wan 2.6 performs best when the prompt describes a single, clear action rather than a sequence of events. "A cat stretching and yawning on a windowsill" will produce better results than "A cat jumps onto a windowsill, stretches, yawns, then looks out the window." Keep it simple and let the model execute one thing well.

2. Specify Lighting and Atmosphere

Even a budget model responds strongly to lighting descriptors. "Golden hour backlighting," "overcast soft light," "neon-lit urban night" -- these terms consistently improve output quality with minimal effort. Omitting lighting descriptions leads to flat, generic illumination.

3. Use Cinematic References Sparingly

Wan 2.6 responds to basic cinematic terms -- "slow motion," "tracking shot," "close-up" -- but does not handle highly specific camera work as well as premium models. Use simple camera directions and avoid complex multi-movement descriptions.

4. Describe Materials for Product Content

For product-related prompts, material descriptions improve quality significantly: "brushed aluminum laptop," "frosted glass bottle," "matte black packaging." The model differentiates materials reasonably well, and explicit descriptions help it avoid defaulting to generic textures.

5. Leverage Natural Scenes

Wan 2.6 performs particularly well with nature and outdoor scenes -- landscapes, animals, water, vegetation. These subjects tend to produce the highest quality output relative to the model's price point. Indoor and urban scenes are handled competently but with less consistency.

Example prompts that performed well in testing:

Nature scene:

plaintext1``` 2A serene mountain lake at dawn, mist rising from the water surface, 3pine trees reflected in perfectly still water, first light of sunrise 4painting the peaks gold, slow camera pan from left to right, 5documentary quality, peaceful atmosphere 6```

Product showcase:

plaintext1``` 2A sleek wireless speaker on a wooden desk, camera slowly orbiting 3around it, warm ambient lighting from a nearby window, clean 4minimalist background, product commercial style, soft shadows 5```

Abstract art:

plaintext1``` 2Flowing liquid paint in slow motion, vibrant cobalt blue mixing 3with molten gold, abstract patterns forming and dissolving, 4extreme macro close-up, studio lighting with deep black background, 5satisfying visual texture 6```

Wan 2.6 vs Competitors

| Feature | Wan 2.6 | Seedance 2.0 | Kling 3.0 | Veo 3.1 | Sora 2 |

| Max Resolution | 1080p | High Definition | Ultra HD | Cinematic | High Definition |

| Max Duration | 10s | 15s | 10s | 8s | 12s |

| Reference Input | 1 image | 12 files | 1-2 images | 1-2 images | 1 image |

| Native Audio | No | Yes | Yes (5 languages) | Yes | Yes |

| API Cost (Atlas Cloud) | $0.07/sec | $0.022/sec | $0.126/sec | $0.03/sec | $0.15/sec |

| Best Strength | Cost efficiency | Multimodal control | Resolution + value | Cinematic polish | Physics simulation |

| Content Filter | Moderate | Strict | Very strict | Moderate | Strict |

Where Wan 2.6 Wins

Wan 2.6's defining advantage is cost efficiency at scale. At $0.07/sec, it sits in a sweet spot between the cheapest options and the premium models -- affordable enough for high-volume production while delivering quality that is genuinely usable for commercial purposes. For teams that need to generate hundreds of clips per week, the savings compared to Kling 3.0 or Sora 2 are substantial. The model's consistency is also a practical advantage -- fewer wasted generations mean lower effective costs.

Where Wan 2.6 Falls Short

Wan 2.6 lacks native audio generation, which means any video that needs sound requires a separate audio pipeline. Resolution caps at 1080p, below Kling 3.0's Ultra HD. Reference input is limited to a single image, versus Seedance 2.0's 12 files. The maximum 10-second duration is shorter than Seedance 2.0 (15s) and Sora 2 (12s). And in terms of raw visual quality -- physics accuracy, cinematic polish, material rendering -- the premium models produce noticeably better output. These are the tradeoffs that come with the lowest price point.

The Practical Approach

Most production teams will use Wan 2.6 as part of a multi-model strategy. Use Wan 2.6 for draft versions, concept testing, social media content, and any high-volume use case where cost matters more than maximum quality. Use Seedance 2.0, Veo 3.1, Kling 3.0, or Sora 2 for hero content, final renders, and use cases where quality cannot be compromised. Atlas Cloud makes this multi-model approach seamless with a single API key and consolidated billing.

Who Should Use Wan 2.6?

Choose Wan 2.6 if:

- Budget efficiency is the primary concern. At $0.07/sec, it is among the most affordable video generation options available through any major API.

- The project involves high-volume content production -- dozens or hundreds of clips per week -- where per-clip cost is a critical constraint.

- 1080p resolution is sufficient for the intended distribution channels (social media, web, internal use).

- The content does not require native audio generation (audio will be added separately).

- Quick concept testing and draft generation are needed before committing to more expensive models for final output.

Choose Seedance 2.0 instead if:

- Multi-reference input is needed. Seedance 2.0 accepts up to 12 files (images, videos, audio), giving much more control over the output.

- Native audio is required. Seedance 2.0 generates synchronized audio; Wan 2.6 does not.

- Even lower per-second pricing is needed. At $0.022/sec, Seedance 2.0 is cheaper per second.

- Longer clips (up to 15 seconds) are necessary.

Choose Kling 3.0 instead if:

- Ultra-high-definition output is required. Kling 3.0 outputs at higher resolution than Wan 2.6's 1080p.

- Free-tier access matters. Kling 3.0 offers 66 daily credits; Wan 2.6 requires paid API access.

- Text rendering in video is important for the use case.

Choose Veo 3.1 instead if:

- Cinematic visual quality and color grading are top priorities.

- Native audio generation is needed.

- Google's safety and content moderation standards are preferred.

Choose Sora 2 instead if:

- Physics accuracy is the primary requirement -- realistic object interactions, material behavior, cause-and-effect chains.

- Budget is less of a concern than output quality for physics-driven content.

- Longer clips (up to 12 seconds with higher quality) are needed.

Frequently Asked Questions

How much does Wan 2.6 cost per video?

Wan 2.6 is priced at $0.07 per second of generated video on Atlas Cloud. A 5-second clip costs $0.35, and a maximum-length 10-second clip costs $0.70. The $1 free credit at sign-up provides over 14 seconds of generated video.

Does Wan 2.6 support audio?

No. Wan 2.6 generates video only, without native audio. If your workflow requires audio, you will need to add it separately using an audio generation model or manually in post-production. Alternatively, models like Seedance 2.0, Kling 3.0, Veo 3.1, and Sora 2 all offer native audio generation.

What is the maximum video length for Wan 2.6?

Wan 2.6 generates clips up to 10 seconds at 1080p resolution and 30fps. This matches Kling 3.0's maximum but is shorter than Seedance 2.0 (15s) and Sora 2 (12s). For longer content, generate multiple clips and combine them in editing.

How do I access the Wan 2.6 API?

The easiest way is through Atlas Cloud. Sign up, get an API key, and use the model ID `alibaba/wan-2.6/text-to-video` in your requests. $1 free credit is applied automatically. Wan 2.6 is also available through Alibaba Cloud's Model Studio, but the onboarding process is more complex for international developers.

Is Wan 2.6 good enough for commercial use?

For many commercial applications, yes. Social media content, web videos, internal presentations, concept previews, and draft production are all viable use cases. The 1080p resolution and 30fps frame rate meet industry standards for digital distribution. For hero content, broadcast work, or applications demanding the highest possible quality, consider using a premium model for final renders while using Wan 2.6 for drafts and iteration.

Verdict

Wan 2.6 fills a specific and important gap in the AI video generation landscape. It is not trying to compete with Sora 2 on physics or Veo 3.1 on cinematic quality. It is trying to be the most useful video model you can afford to use at scale -- and it succeeds at that goal.

For solo creators, startups, and teams operating on tight budgets, Wan 2.6 makes AI video generation economically viable in a way that premium models do not. For larger teams with mixed requirements, it serves as the volume workhorse alongside premium models reserved for hero content. In both cases, the model earns its place in the toolkit.

Access Wan 2.6 alongside Seedance 2.0, Kling 3.0, Veo 3.1, Sora 2, and 300+ other models on Atlas Cloud. One API key. One bill. $1 free credit to start -- enough for over 14 seconds of Wan 2.6 video.

Get $1 Free Credit on Atlas Cloud -- Try Wan 2.6 and 300+ Models

────────────────────────────────────────────────────────────