DeepSeek LLM Models

DeepSeek, developed by the deepseek-ai team, is a cutting-edge series of open-source generative AI models engineered to democratize access to high-performance computing through a cost-effective and efficiency-first strategy. Its flagship reasoning model, DeepSeek-R1, made waves by rivaling top-tier proprietary models in mathematics, programming, and complex logical deduction, while DeepSeek-V3.2 is designed for seamless daily interaction and autonomous Agent workflows. By significantly lowering the barrier to entry for advanced AI, DeepSeek has become a cornerstone for the "vibe coding" movement and a transformative tool in specialized fields like academic research and high-level technical problem-solving.

Explore Leading Models

Atlas Cloud brings you the industry's latest cutting-edge creative models.

What Sets DeepSeek LLM Models Apart

Open Power

Leading models that are fully open source, ensuring transparency and control.

Architectural Efficiency

Uses advanced Mixture-of-Experts (MoE) technology to deliver leading performance at a fraction of the cost.

Purpose-Built Versatility

From the general-purpose V3.1 to the specialized reasoning of R1, DeepSeek offers a model for every task.

Developer-First Freedom

Permissively licensed for unrestricted commercial use, fostering innovation without barriers.

Proven Performance

Consistently achieves state-of-the-art results on industry benchmarks for coding and reasoning.

The Practical Alternative

Delivers the power of leading proprietary models with the affordability and flexibility of open source.


| Model | Description |
|---|---|
| DeepSeek V3.2 | DeepSeek V3.2 is a flagship general-purpose LLM that combines sparse attention mechanisms with robust 163.8K context handling. With highly competitive base pricing, it serves as the cornerstone of everyday workflows, including complex general reasoning and building multi-step task-planning Agents. |
| DeepSeek V3.2 Speciale | DeepSeek V3.2 Speciale is positioned as a high-performance custom LLM with a massive 163.8K context window and a premium tiered pricing structure ($0.4 input / $1.2 output). It is designed for latency-sensitive core business nodes that demand the highest output quality, such as intelligent customer service for high-net-worth clients or millisecond-level quantitative analysis. |
| DeepSeek V3.2 Exp | DeepSeek V3.2 Exp is a cutting-edge experimental release based on the V3.2 architecture. It integrates the latest algorithmic features while keeping a 163.8K context and comparable costs, making it well suited to R&D teams conducting technical pre-research and canary testing to validate next-generation AI capabilities ahead of future products. |
| DeepSeek-V3.1 | DeepSeek-V3.1 is the latest generation of high-performance open-source ecosystem models, striking a new balance between performance and cost with a 131.1K context. As a first choice for commercial deployment projects, it serves as the backbone for scenarios that require both high-quality generation and controllable costs. |
| DeepSeek V3.1 Terminus | DeepSeek V3.1 Terminus is the final, long-term stable form of the V3.1 series. It keeps the same parameters and pricing as the standard version and aims to provide a consistently stable output style and logic for smooth endpoint services in consumer-facing production environments. |
| DeepSeek-V3-0324 | DeepSeek-V3-0324 is a specific historical snapshot release with a 131.1K context and the lowest text input cost available. It is mainly used to maintain legacy systems that require absolute behavioral consistency, or for batch-processing tasks with massive input throughput but moderate demands on output logic. |
| DeepSeek-R1-0528 | DeepSeek-R1-0528 is positioned as a top-tier deep reasoning model, with a 131.1K context and the highest compute cost ($0.55/$2.15). It represents the peak of logical reasoning capability and is reserved for critical "brainstorming" tasks such as complex mathematical modeling and generating advanced code architectures. |
| DeepSeek OCR | DeepSeek OCR is a dedicated visual multimodal LLM that supports dual-channel image-text input with a short 8.2K context and ultra-low usage costs. It is well suited to automated data-entry pipelines such as digitizing large volumes of scanned documents and structured extraction from financial receipts. |
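The two price pairs quoted in the table can be turned into a rough per-request estimate. The sketch below is illustrative only: it assumes the quoted figures are USD per one million tokens (the page does not state the unit), and the `estimate_cost` helper and the token counts are hypothetical.

```python
from dataclasses import dataclass

# ASSUMPTION: the table's prices are USD per 1,000,000 tokens.
# The page quotes the numbers but not the unit.
TOKENS_PER_PRICE_UNIT = 1_000_000


@dataclass(frozen=True)
class Pricing:
    input_usd: float   # price for input (prompt) tokens
    output_usd: float  # price for output (completion) tokens


# Only the two models whose prices appear in the table above.
PRICES = {
    "DeepSeek V3.2 Speciale": Pricing(input_usd=0.4, output_usd=1.2),
    "DeepSeek-R1-0528": Pricing(input_usd=0.55, output_usd=2.15),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return an estimated request cost in USD under the unit assumption above."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    price = PRICES[model]
    total = input_tokens * price.input_usd + output_tokens * price.output_usd
    return total / TOKENS_PER_PRICE_UNIT


if __name__ == "__main__":
    # Hypothetical workload: a 20,000-token prompt and a 2,000-token answer.
    for name in PRICES:
        print(f"{name}: ${estimate_cost(name, 20_000, 2_000):.4f}")
```

Because output tokens cost more than input tokens for both models, long reasoning traces tend to dominate the bill more than long prompts do.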

New Features of DeepSeek LLM Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.

World-Class Reasoning and Verification via the DeepSeek-V3.2-Speciale API

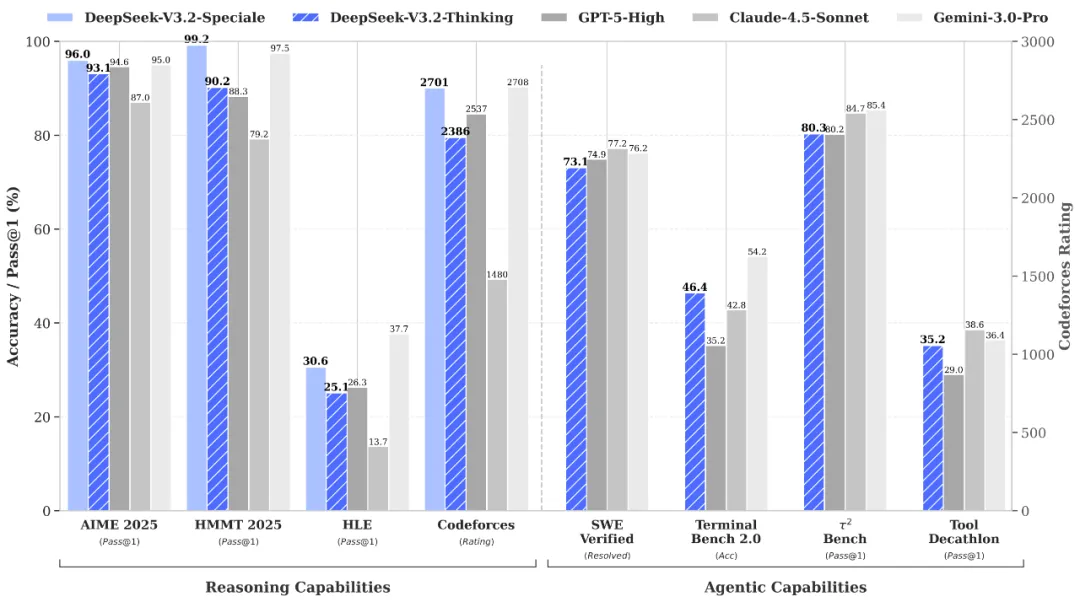

DeepSeek-V3.2-Speciale is the "long-thought" enhanced variant of the V3.2 architecture, integrating advanced theorem-proving capabilities from DeepSeek-Math-V2. Engineered for extreme precision, this model excels in rigorous mathematical proofs, complex logical verification, and superior instruction following, rivaling the performance of Gemini-3.0-Pro in mainstream reasoning benchmarks. It is the premier choice for academic research, automated formal verification, and high-stakes technical problem-solving where logical integrity is non-negotiable.

Unmatched Cognitive Depth via the DeepSeek-R1 API

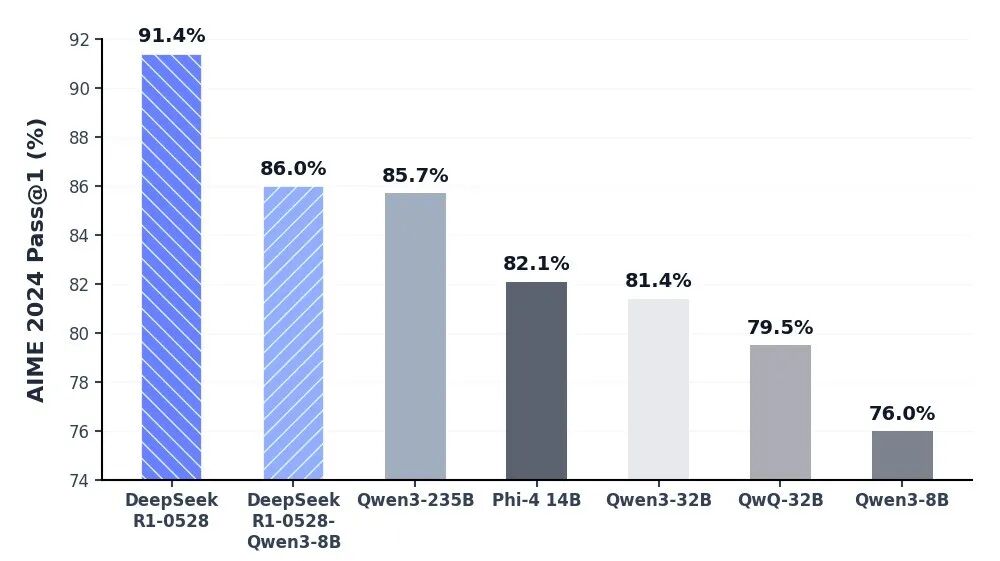

DeepSeek-R1 sits at the forefront of reasoning AI, delivering state-of-the-art performance in mathematics, programming, and general logic. By reaching parity with elite global models such as OpenAI's o3 and Gemini-2.5-Pro, R1 has redefined what open-source intelligence can do. It is specifically optimized for deep-thinking tasks, including complex algorithm development, sophisticated data synthesis, and advanced cognitive workflows that require multi-step deductive reasoning.

Smooth Everyday Interaction and Autonomous Agent Workflows via the DeepSeek V3.2 API

DeepSeek-V3.2 balances reasoning depth with execution speed and is designed to power smooth everyday interactions and autonomous agent ecosystems. With considerably reduced latency and optimized output control, it serves as a robust engine for multi-step task orchestration and general-purpose AI assistants. Whether you are deploying enterprise-scale automation or high-frequency interactive tools, V3.2 delivers a smooth, efficient, and cost-effective user experience.

Rigorous Scientific Discovery and Formal Verification with the DeepSeek-V3.2-Speciale API

The DeepSeek-V3.2-Speciale API is engineered for tasks that demand absolute logical precision and multi-step reasoning. By integrating advanced theorem-proving capabilities, it enables researchers and engineers to execute complex mathematical inductions, verify formal logic, and solve high-tier competitive programming challenges. Perfect for academic R&D, automated code auditing, and cryptographic analysis, this API transforms abstract complexity into verifiable results with the performance of top-tier global models.

Advanced Algorithmic Synthesis & Strategic Reasoning using the DeepSeek-R1 API

DeepSeek-R1 empowers developers to build applications centered on deep cognitive workflows and strategic decision-making. Ranking at the forefront of global reasoning benchmarks, the R1 API excels in synthesizing sophisticated code architectures, processing dense technical documentation, and generating innovative solutions for open-ended logical puzzles. It is the ideal engine for AI-driven software engineering, long-form data synthesis, and any scenario where "thinking fast and slow" requires a powerful, reasoning-first foundation.

Smooth Orchestration of Autonomous Agents with the DeepSeek-V3.2 API

For high-velocity, sensory-driven AI applications, the DeepSeek-V3.2 API provides the perfect equilibrium between reasoning depth and ultra-low latency. It is optimized for building autonomous Agents that can navigate multi-step workflows, manage real-time user interactions, and execute general-purpose tasks with GPT-5 level intelligence. This use case is tailor-made for enterprise-scale automation, intelligent customer ecosystems, and developers looking to deploy responsive, cost-effective AI assistants at scale.

Model Comparison

See how models from different providers compare on performance, pricing, and unique strengths so you can make an informed decision.

| Model | Context | Max Output | Input | Positioning |
|---|---|---|---|---|
| DeepSeek V3.2 | 163.84K | 163.84K | Text | General-purpose flagship |
| DeepSeek V3.2 Speciale | 163.84K | 163.84K | Text | High-performance custom |
| DeepSeek V3.2 Exp | 163.84K | 163.84K | Text | Experimental build |
| DeepSeek-V3.1 | 131.07K | 65.54K | Text | Open-source backbone |
| DeepSeek V3.1 Terminus | 131.07K | 65.54K | Text | Long-term stable (LTS) |
| DeepSeek-V3-0324 | 131.07K | 32.77K | Text | Historical snapshot |
| DeepSeek-R1-0528 | 131.07K | 131.07K | Text | Top-tier reasoning |
| DeepSeek OCR | 8.19K | 8.19K | Text | Dedicated multimodal |
| GLM-5 | 200K | 128K | Text | Flagship foundation model |
| MiniMax-M2.5 | 204.8K | 196.6K | Text | SOTA agentic coding |
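The context and output limits in the comparison table can be used to shortlist models for a given workload. The following is a minimal sketch rather than a platform feature: it reads "K" as 1,000 tokens using the figures as printed, and it assumes the context window must hold the prompt plus the generated output. The names `ModelLimits`, `fits`, and `candidates` are hypothetical.

```python
from typing import List, NamedTuple


class ModelLimits(NamedTuple):
    name: str
    context_tokens: int      # "Context" column
    max_output_tokens: int   # "Max Output" column


# DeepSeek rows from the table, in table order.
# ASSUMPTION: "K" means 1,000 tokens; values are the rounded figures as printed.
MODELS = [
    ModelLimits("DeepSeek V3.2", 163_840, 163_840),
    ModelLimits("DeepSeek V3.2 Speciale", 163_840, 163_840),
    ModelLimits("DeepSeek V3.2 Exp", 163_840, 163_840),
    ModelLimits("DeepSeek-V3.1", 131_070, 65_540),
    ModelLimits("DeepSeek V3.1 Terminus", 131_070, 65_540),
    ModelLimits("DeepSeek-V3-0324", 131_070, 32_770),
    ModelLimits("DeepSeek-R1-0528", 131_070, 131_070),
    ModelLimits("DeepSeek OCR", 8_190, 8_190),
]


def fits(model: ModelLimits, prompt_tokens: int, output_tokens: int) -> bool:
    """True if the request respects both the output cap and the context window.

    ASSUMPTION: prompt and output share the context window.
    """
    within_output = output_tokens <= model.max_output_tokens
    within_context = prompt_tokens + output_tokens <= model.context_tokens
    return within_output and within_context


def candidates(prompt_tokens: int, output_tokens: int) -> List[str]:
    """Names of all listed models that can serve the request, in table order."""
    return [m.name for m in MODELS if fits(m, prompt_tokens, output_tokens)]


if __name__ == "__main__":
    # Hypothetical long-document job: 100,000 prompt tokens, 40,000 output tokens.
    print(candidates(100_000, 40_000))
```

A request totalling 140,000 tokens exceeds the 131.07K windows, so under these assumptions only the V3.2 family remains on the shortlist.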

How to Use DeepSeek LLM Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud’s platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.
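Once an account and API key exist, a first request might look like the sketch below. It is not taken from Atlas Cloud documentation: it assumes an OpenAI-style chat-completions endpoint, and the base URL, model identifier, and `ATLASCLOUD_API_KEY` environment variable are placeholders to be replaced with the values shown in your account. The script only builds and prints the request; the network call is left commented out.

```python
import json
import os
import urllib.request

# PLACEHOLDERS (assumptions, not real Atlas Cloud values):
BASE_URL = "https://api.example.com/v1"  # replace with the endpoint from your dashboard
MODEL_ID = "deepseek-v3.2"               # replace with the model ID listed for your account


def build_chat_request(prompt: str, api_key: str,
                       model: str = MODEL_ID,
                       max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a chat-completions style POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("ATLASCLOUD_API_KEY", "sk-placeholder")  # hypothetical variable name
    request = build_chat_request("Explain Mixture-of-Experts in one sentence.", key)
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
    # To send the request once the placeholders are filled in:
    # with urllib.request.urlopen(request) as response:
    #     print(json.load(response))
```

Keeping request construction separate from sending makes it easy to inspect the payload before spending any credits.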

Why Use DeepSeek LLM Models on Atlas Cloud

Combining advanced DeepSeek LLM Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low Latency:

GPU-optimized inference for real-time reasoning.

Unified API:

Run DeepSeek LLM Models, GPT, and Gemini with a single integration.

Transparent Pricing:

Predictable per-token billing with serverless options.

Enterprise and Scale

Developer Experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliant logging.

Security and Compliance:

SOC 2 Type II, HIPAA alignment, and data sovereignty in the United States.

Frequently Asked Questions about DeepSeek LLM Models

DeepSeek offers open-source transparency and superior cost-effectiveness. With reasoning capabilities (R1 and V3.2) that rival GPT-5, it is a high-performing, lower-cost alternative that also offers the flexibility of private deployment.

The total parameter count reflects the model's overall "brain capacity." DeepSeek's MoE design pairs a massive total parameter count (for example, 671B) for deep intelligence with a streamlined "active" count for maximum operational efficiency.
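To make the total-versus-active distinction concrete, the short calculation below computes what share of an MoE model's parameters is used per token. The 671B total comes from the answer above; the 37B active figure is not stated on this page and is included as an assumption based on the publicly reported DeepSeek-V3 configuration.

```python
def active_fraction(total_params_b: float, active_params_b: float) -> float:
    """Share of parameters activated per token, given counts in billions."""
    if total_params_b <= 0:
        raise ValueError("total parameter count must be positive")
    if not 0 < active_params_b <= total_params_b:
        raise ValueError("active count must be positive and at most the total")
    return active_params_b / total_params_b


TOTAL_PARAMS_B = 671.0   # from the FAQ answer above
ACTIVE_PARAMS_B = 37.0   # ASSUMPTION: publicly reported DeepSeek-V3 figure, not from this page

fraction = active_fraction(TOTAL_PARAMS_B, ACTIVE_PARAMS_B)

if __name__ == "__main__":
    print(f"Active per token: {fraction:.1%} of all parameters")
```

Under that assumption, only a few percent of the weights take part in any single forward pass, which is where the lower inference cost comes from.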

Explore More Families

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation (CNBC). Alibaba Group confirmed ownership of HappyHorse, developed under its Alibaba Token Hub (ATH) business unit, where it leads benchmarks, outperforming ByteDance's Seedance 2.0 and others (Caixin Global). Led by Zhang Di, the former VP of Kuaishou who architected Kling AI, the 15-billion parameter model generates 1080p video with synchronized audio in a single pass using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu, built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone (NYU Shanghai RITS). Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality, but for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics, making it well-suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances lightning-fast rendering with exceptional visual quality. With an improved price-performance ratio, it achieves breakthrough micro-detail depiction, accurate native text rendering, and complex physical structure reconstruction. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models—Kling 3.0 (Upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, Upgraded from Kling O1)—both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.