GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Explore the Main Models

Atlas Cloud provides the latest industry-leading creative models.

Fastest speed

Lowest cost

| Modality | Description |
|---|---|
| GPT Image-1 T2I API (Text to Image) | The GPT Image-1 Text to Image API lets developers turn text prompts into strikingly realistic visuals with exceptional detail. By combining GPT-4 Turbo's reasoning capabilities with DALL·E-class visual synthesis, it delivers industry-leading prompt fidelity and the ability to build complex compositions for professional image production. |
| GPT Image-1 Edit API (Image to Image) | The GPT Image-1 Edit API lets developers transform existing images into refined or reimagined masterpieces with seamless consistency. By leveraging multimodal understanding, it produces accurate style transfer, context-aware composition, and targeted modifications for iterating on professional-grade assets. |
| GPT Image-1.5 T2I API (Text to Image) | The GPT Image-1.5 Text to Image API empowers developers to transform text prompts into high-quality visuals at optimized cost. By leveraging GPT-powered architecture, it delivers strong prompt understanding and visual fidelity for balanced production workflows. |
| GPT Image-1.5 Edit API (Image to Image) | The GPT Image-1.5 Edit API empowers developers to refine existing assets with precise modifications. By supporting input_fidelity control, it enables fine-tuned adjustments while preserving essential elements like faces and logos. |
| GPT Image-1 Mini T2I API (Text to Image) | The GPT Image-1 Mini Text to Image API empowers developers with the most cost-efficient image generation in the family. By leveraging GPT-5 architecture, it delivers professional-grade results at the lowest cost-per-image for high-volume content production. |
| GPT Image-1 Mini Edit API (Image to Image) | The GPT Image-1 Mini Edit API empowers developers to transform existing images with streamlined editing capabilities. By providing essential editing functions at minimal cost, it enables rapid iteration and content production workflows. |

What's New in GPT Image Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.

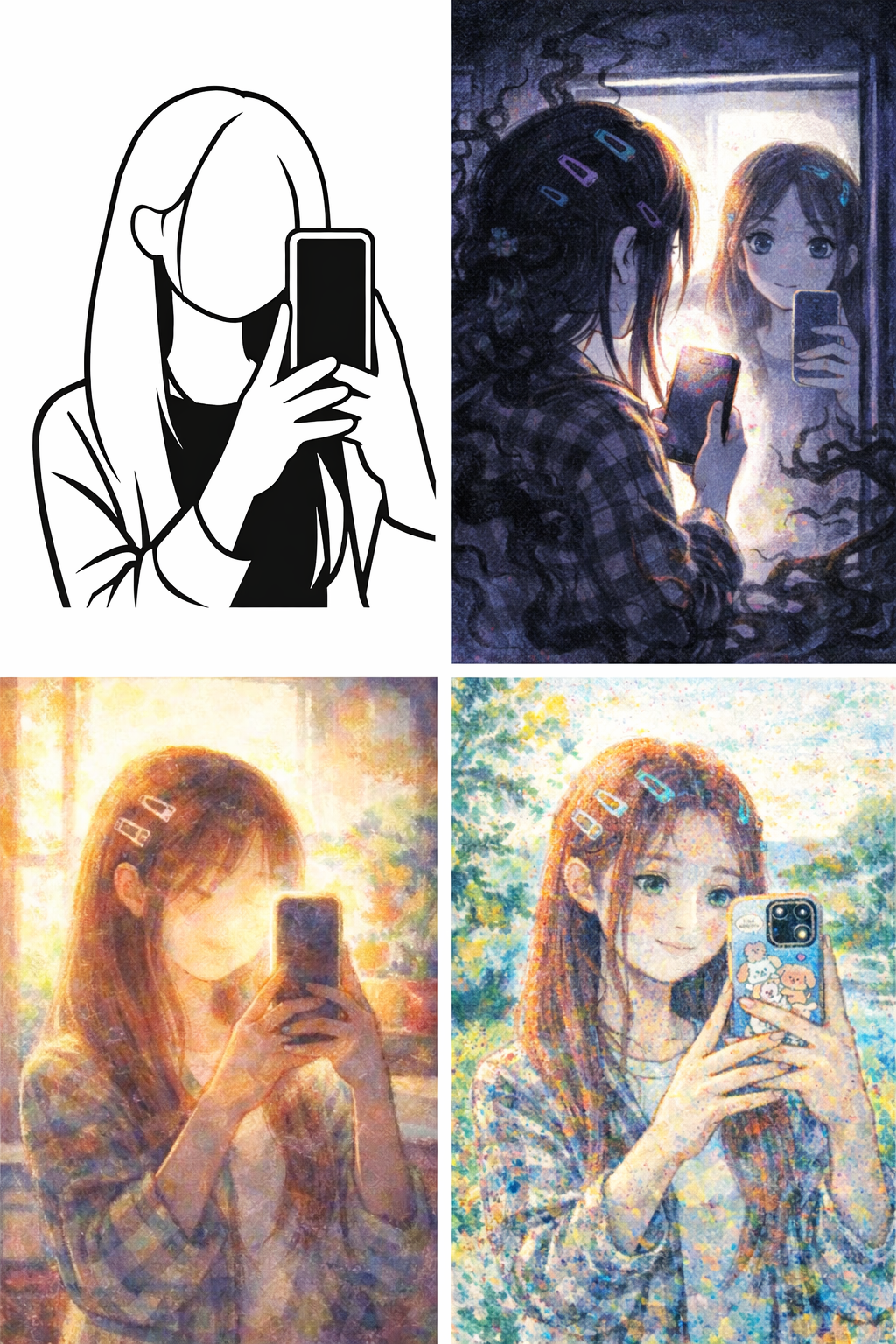

Flexible Style Generation Using GPT Image API

Produces diverse visual outputs spanning photorealistic photography, stylized artwork, concept art, infographics, 3D-style illustrations, and more. From cinematic landscapes to UI mockups, the models adapt to your creative direction with precision.

High Visual Fidelity Using GPT Image API

Maintains object relationships, lighting consistency, and color balance with industry-leading prompt adherence. Generated images exhibit natural textures, accurate proportions, and physically plausible compositions.

Accurate Text Rendering Using GPT Image API

Capable of generating clean, legible typography within images — ideal for posters, memes, comics, branding visuals, and any project requiring integrated textual elements.

Knowledge-Grounded Creativity Using GPT Image API

Leverages GPT-4/GPT-5's world knowledge to generate factually accurate and contextually appropriate visuals. The model understands cultural references, historical contexts, and domain-specific concepts.

What You Can Do with GPT Image Models

Discover practical use cases and workflows you can build with this model family, from content creation and automation to production-grade applications.

Professional Photography & Visual Art

Generate photorealistic images with cinematic lighting, precise composition, and natural textures. From product photography to editorial visuals, GPT Image models produce outputs indistinguishable from professional camera work.

UI/UX Design & Mockups

Create clean, modern design concepts including app interfaces, dashboards, websites, and product layouts. The models excel at generating structured compositions with professional aesthetics.

Marketing & Advertising Campaigns

Rapidly produce campaign-ready visuals for social media, digital ads, and brand marketing. Support for multiple quality tiers enables both rapid A/B testing and high-end final deliverables.

Creative Concept Art & Illustration

Explore styles, moodboards, and concept art at speed. Generate illustrations in diverse artistic styles — from watercolor paintings to anime, comic books to oil paintings.

Style Transfer & Artistic Transformation

Transform existing images into different artistic styles while preserving core subject matter. Convert photos to cartoons, paintings, sketches, or any aesthetic direction with natural language instructions.

Content Localization & Adaptation

Quickly adapt visual content for different markets, audiences, or platforms. Modify backgrounds, adjust colors, update styling, or re-contextualize imagery through simple text descriptions.

Model Comparison

Compare models from different providers: review performance, pricing, and unique strengths to make the best choice.

| Model | Reference Image Limit | Number of Outputs | Resolution | Aspect Ratio |
|---|---|---|---|---|
| GPT Image-1 | 4 | 1-10 | 1024×1024, 1024×1536, 1536×1024 | 1:1, 2:3, 3:2 |
| GPT Image-1.5 | 10 | 1 | 1024×1024, 1024×1536, 1536×1024 | 1:1, 2:3, 3:2 |
| GPT Image-1 Mini | 4 | 1-10 | 1024×1024, 1024×1536, 1536×1024 | 1:1, 2:3, 3:2 |
| Nano Banana 2 | 14 | 1 | 1K, 2K, 4K | 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9 |
| Seedream 5.0 | 14 | 1-15 | 2K to 4K+ | 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9 |
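
The GPT Image rows of the comparison above can be encoded as data and checked before a request is sent. The following is a minimal sketch under stated assumptions: `ModelLimits`, `LIMITS`, and `check_request` are hypothetical names introduced for illustration, the dictionary keys are display names rather than confirmed API model IDs, and the ASCII `x` in the size strings is an assumed format.

```python
from dataclasses import dataclass

# Sizes listed in the comparison table, written with an ASCII "x"
# (an assumed string format, not a confirmed API convention).
GPT_IMAGE_SIZES = ("1024x1024", "1024x1536", "1536x1024")


@dataclass(frozen=True)
class ModelLimits:
    max_reference_images: int
    max_outputs: int
    sizes: tuple


# Limits copied from the table; keys are display names, not confirmed model IDs.
LIMITS = {
    "GPT Image-1": ModelLimits(4, 10, GPT_IMAGE_SIZES),
    "GPT Image-1.5": ModelLimits(10, 1, GPT_IMAGE_SIZES),
    "GPT Image-1 Mini": ModelLimits(4, 10, GPT_IMAGE_SIZES),
}


def check_request(model, size, n_outputs=1, n_reference_images=0):
    """Return a list of problems; an empty list means the request fits the table."""
    limits = LIMITS.get(model)
    if limits is None:
        return [f"unknown model: {model}"]
    problems = []
    if size not in limits.sizes:
        problems.append(f"unsupported size: {size}")
    if not 1 <= n_outputs <= limits.max_outputs:
        problems.append(f"outputs must be between 1 and {limits.max_outputs}")
    if not 0 <= n_reference_images <= limits.max_reference_images:
        problems.append(f"at most {limits.max_reference_images} reference images")
    return problems
```

Calling `check_request("GPT Image-1.5", "1024x1024", n_outputs=2)` would report the output-count problem, since the table lists a single output for that model.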

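How to Use GPT Image Models on Atlas Cloud

Get started in minutes. Follow the simple steps below to integrate and deploy models on the Atlas Cloud platform.

Create an Atlas Cloud account

Sign up at atlascloud.ai and complete verification. New users receive free credits that can be used to explore the platform and test models.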

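Why Use GPT Image Models on Atlas Cloud

Combining the advanced GPT Image Models with Atlas Cloud's GPU-accelerated platform delivers unmatched performance, scalability, and developer experience.

Performance and Flexibility

Low latency:

GPU-optimized inference for real-time use.

Unified API:

Run GPT Image Models, GPT, Gemini, and DeepSeek through a single integration.

Transparent pricing:

Predictable per-token billing with serverless options.

Enterprise and Scale

Developer experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance-ready logging.

Security and compliance:

SOC 2 Type II, HIPAA compliance, and US data sovereignty.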

Frequently Asked Questions About GPT Image Models

GPT Image-1 is the flagship model, combining GPT-4 Turbo's reasoning capabilities with DALL·E-class visual synthesis for the highest quality outputs and most complex prompt handling. GPT Image-1.5 offers optimized performance with strong quality at lower cost, making it ideal for balanced production workflows. GPT Image-1 Mini delivers the most cost-efficient image generation, powered by GPT-5 architecture, perfect for high-volume and rapid iteration scenarios.

Each model supports Low, Medium, and High quality settings. Higher quality produces more detailed and photorealistic results but at higher cost. For initial testing and previews, use Low quality for speed and savings. Switch to High quality for final deliverables requiring maximum fidelity.
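
One way to apply this guidance is to tie the quality setting to the workflow stage. The sketch below is illustrative only: `QUALITY_BY_STAGE` and `pick_quality` are hypothetical names, the lowercase strings are an assumed spelling of the Low, Medium, and High settings, and mapping a "review" stage to Medium is an assumption, since the text above only recommends Low for previews and High for final deliverables.

```python
# Stage names are hypothetical; "low" and "high" follow the guidance above,
# while "review" -> "medium" is an assumed middle ground.
QUALITY_BY_STAGE = {
    "preview": "low",
    "review": "medium",
    "final": "high",
}


def pick_quality(stage: str) -> str:
    """Return the quality setting to use for a given workflow stage."""
    try:
        return QUALITY_BY_STAGE[stage]
    except KeyError:
        known = ", ".join(sorted(QUALITY_BY_STAGE))
        raise ValueError(f"unknown stage {stage!r}; expected one of: {known}") from None
```

Keeping the mapping in one place makes it easy to run cheap previews by default and switch to High only when a deliverable is approved.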

Text-to-Image models support three output sizes: 1024×1024 (square), 1024×1536 (portrait), and 1536×1024 (landscape). Choose based on your use case — portrait for characters and vertical art, landscape for cinematic scenes and wide compositions, square for general purpose content.
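
The size choice can be captured in a small helper. This is a sketch under assumptions: `SIZES`, `size_for`, and `aspect_ratio` are hypothetical names, and the `WIDTHxHEIGHT` string with an ASCII `x` is an assumed format rather than a documented one. The pixel dimensions are the three listed above.

```python
from fractions import Fraction

# The three output sizes named above, as (width, height) in pixels.
SIZES = {
    "square": (1024, 1024),
    "portrait": (1024, 1536),
    "landscape": (1536, 1024),
}


def size_for(orientation: str) -> str:
    """Return the size as 'WIDTHxHEIGHT' for square, portrait, or landscape."""
    width, height = SIZES[orientation]
    return f"{width}x{height}"


def aspect_ratio(orientation: str) -> str:
    """Return the reduced width:height ratio, e.g. '2:3' for portrait."""
    width, height = SIZES[orientation]
    ratio = Fraction(width, height)
    return f"{ratio.numerator}:{ratio.denominator}"
```

For example, `size_for("portrait")` gives `"1024x1536"`, and `aspect_ratio("portrait")` reduces that to `"2:3"`.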

Region-specific edits are supported. The Edit models accept an optional mask input, allowing you to precisely control which regions of the image are modified. This enables targeted edits while preserving the rest of the image exactly as is.

Explore More Families

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation (CNBC). Alibaba Group confirmed ownership of HappyHorse, developed under its Alibaba Token Hub (ATH) business unit, where it leads benchmarks, outperforming ByteDance's Seedance 2.0 and others (Caixin Global). The project is led by Zhang Di, the former VP of Kuaishou who architected Kling AI. The 15-billion-parameter model generates 1080p video with synchronized audio in a single pass using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Grok-Imagine Models

Grok Imagine Image Quality is xAI's latest AI image generation model, delivering studio-grade visuals with up to 2K resolution and razor-sharp detail. It offers best-in-class text rendering across multiple languages, photorealistic outputs with natural lighting, rich textures, and believable physics, plus tighter prompt following and image editing with reference inputs for precise creative control. Ideal for hero images, ad creatives, product renders, and brand-grade visuals.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu, built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone (NYU Shanghai RITS). Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality, but for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics, making it well-suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances lightning-fast rendering with exceptional visual quality. With an improved price-performance ratio, it achieves breakthrough micro-detail depiction, accurate native text rendering, and complex physical structure reconstruction. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models: Kling 3.0 (upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, upgraded from Kling O1). Both offer high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.