The World's Leading LLMs.

Ready for Any Conversation.

Power reasoning, coding, and natural language with enterprise-grade large language models.

Explore Our Expansive LLM Library

DeepSeek V4 Pro is a state-of-the-art large language model combining efficient sparse attention, strong reasoning, and integrated agent capabilities for robust long-context understanding and versatile AI applications.

DeepSeek V4 Pro

DeepSeek V4 Flash is a state-of-the-art large language model combining efficient sparse attention, strong reasoning, and integrated agent capabilities for robust long-context understanding and versatile AI applications.

DeepSeek V4 Flash


OWL

Kimi K2.6 is an advanced large language model with strong reasoning and upgraded native multimodality. It natively understands and processes text and images, delivering more accurate analysis, better instruction following, and stable performance across complex tasks. Designed for production use, Kimi K2.6 is ideal for AI assistants, enterprise applications, and multimodal workflows that require reliable and high-quality outputs.

Kimi K2.6

The latest Qwen reasoning model.

Qwen3.6 35B A3B

The latest Qwen reasoning model.

Qwen3.6 Plus

GLM-5.1 is Z.AI’s latest flagship model, featuring upgrades in two key areas: enhanced programming capabilities and more stable multi-step reasoning/execution. It demonstrates significant improvements in executing complex agent tasks while delivering more natural conversational experiences and superior front-end aesthetics.

GLM 5.1

MiniMax-M2.7 is a lightweight, state-of-the-art large language model optimized for coding, agentic workflows, and modern application development. With only 10 billion activated parameters, it delivers a major jump in real-world capability while maintaining exceptional latency, scalability, and cost efficiency.

MiniMax M2.7

The Atlas Cloud Advantage:

Speed, Scale, and Savings

Our Model Library isn't just the largest. It's the most cost-effective, reliable, and production-ready. Proven performance, backed by data.

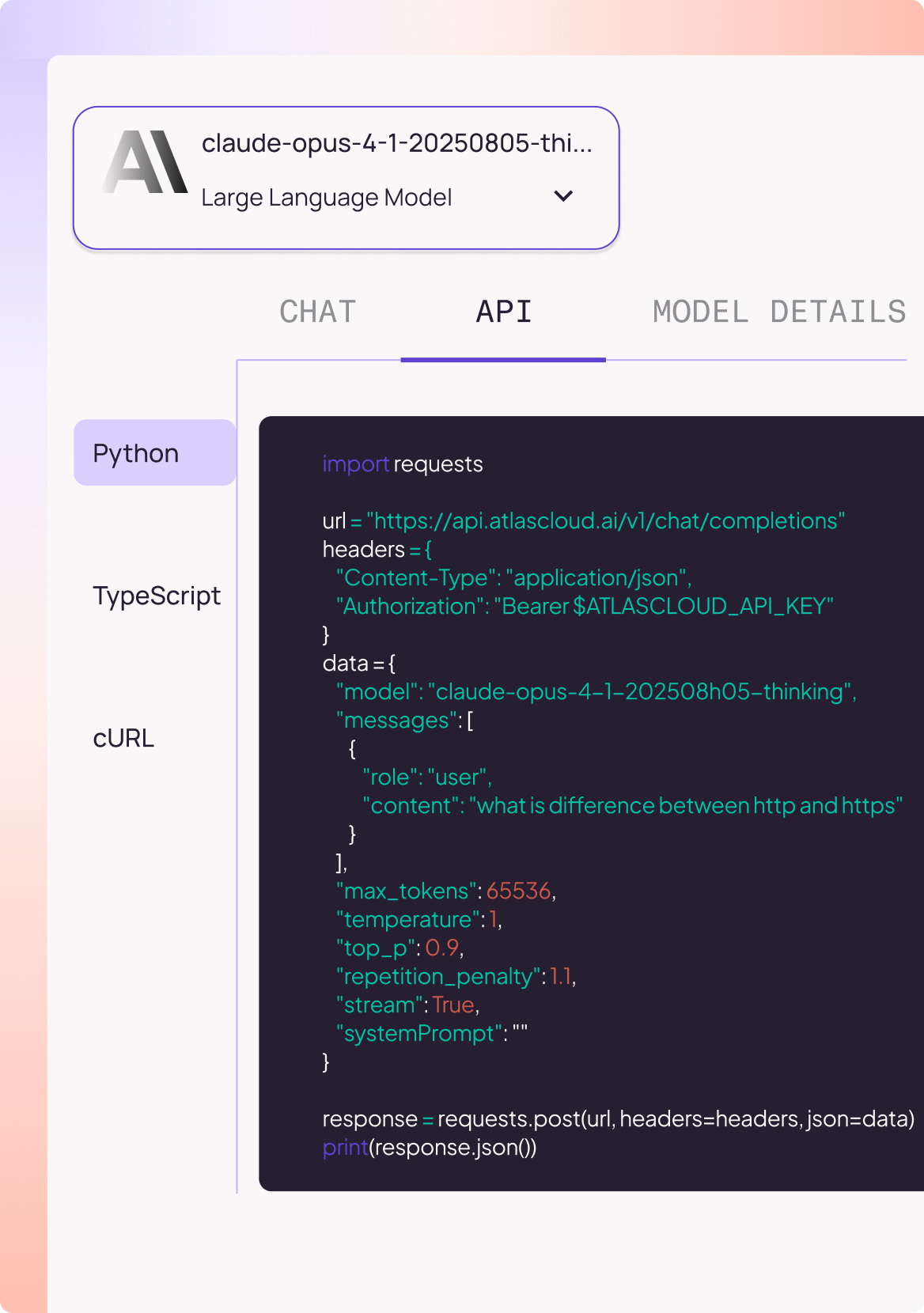

300+ Models, One Unified API

Multimodal, open-source, proprietary: all through one consistent endpoint.

Serverless API Access

Start instantly with Python, TypeScript, or cURL. No infrastructure setup needed.
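As a rough illustration of what "one consistent endpoint" could look like from Python, the sketch below builds a chat request using only the standard library. It assumes an OpenAI-style chat completions payload, which this page does not confirm. The base URL, path, API key, and model identifiers are placeholders rather than documented Atlas Cloud values, so check the official API reference for the real ones.

```python
import json
import urllib.request

# Hypothetical values: the base URL, path, and model identifiers below are
# placeholders for illustration, not documented Atlas Cloud values.
BASE_URL = "https://api.example.com/v1"
API_KEY = "YOUR_API_KEY"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request with an OpenAI-style chat payload."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


# Under this assumed design, switching models only changes the "model" field;
# the endpoint and request shape stay the same.
requests_by_model = {
    name: build_chat_request(name, "Summarize sparse attention in one sentence.")
    for name in ("hypothetical/model-a", "hypothetical/model-b")
}
```

Building the request separately from sending it lets you inspect the payload before any network call. With a real endpoint and key, `send(requests_by_model["hypothetical/model-a"])` would perform the call.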

Proven Performance at Scale

10M+ API calls per month, stable throughput of 70+ TPS, and deployment across 12 global regions.

Transparent & Flexible Pricing

Pay-as-you-go. Enterprise discounts up to 50%.