Early AI tools rewarded patience, not strategy: prompt, hope, repeat. In 2026, that model is obsolete. Automated content workflows in 2026 demand something more reliable: a system, not a gamble.

The goal has shifted. Forward-thinking teams aren't "making images" anymore; they're engineering a visual engine with brand identity built in. With character consistency enforced at the image API level, every asset reflects the same style, palette, and tone, with no per-output human intervention.

The Competitive Edge: Why Headless APIs Win

| Approach | Visual Consistency | Overhead |
| --- | --- | --- |
| Manual AI tools | Variable | High |
| Headless Image API | Near-total | Significantly reduced |

Market leaders have abandoned the creative bottleneck of manual generation. By integrating cost-effective AI image generation at the API layer, brands gain:

- Predictable output at scale

- Faster campaign cycles

- Measurable AI image API ROI

Infrastructure beats inspiration. The brands winning on visual content aren't more creative — they're more systematic.

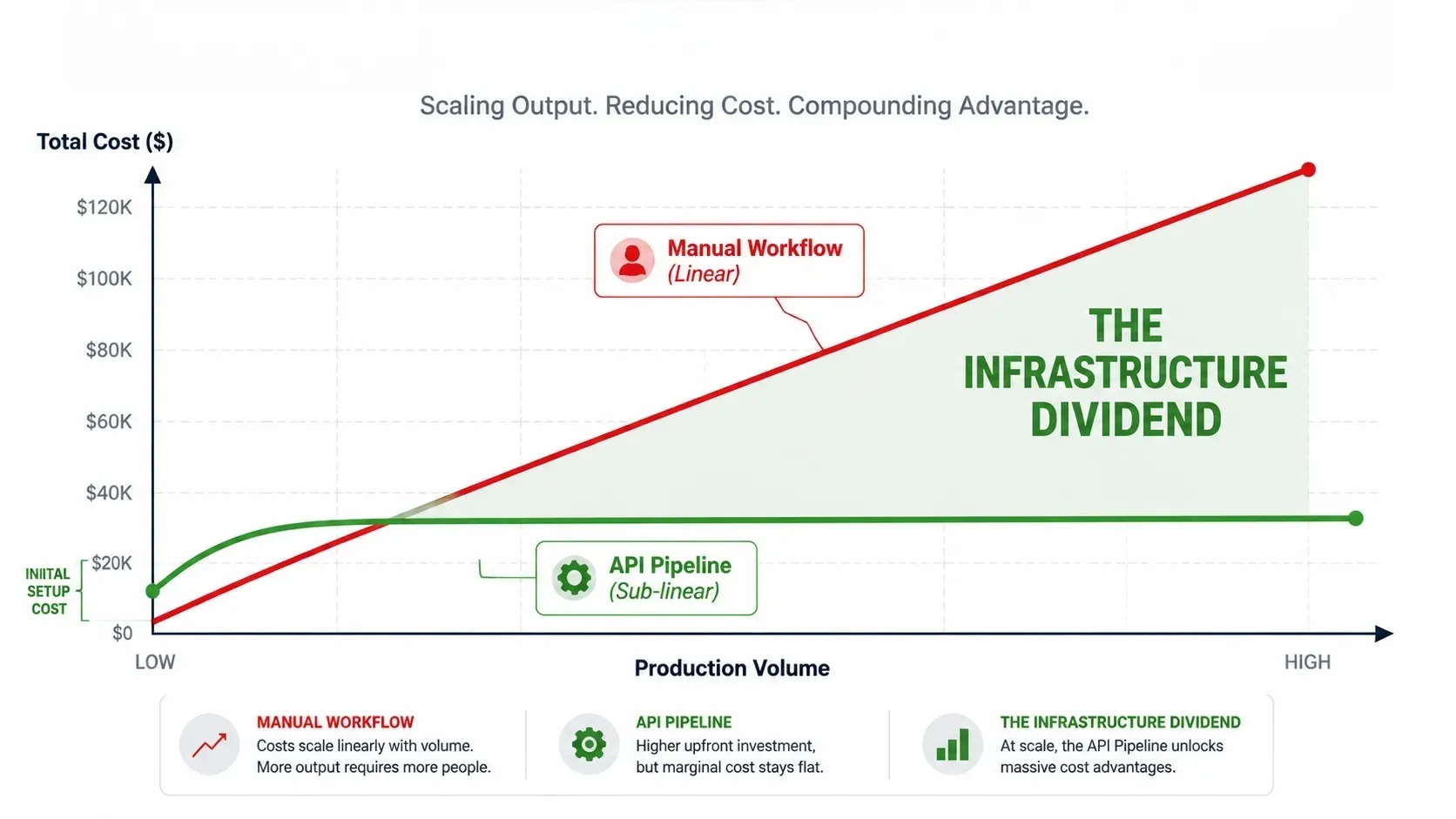

The Infrastructure Dividend: The True ROI of Image APIs

Traditional AI content production treats labor as the primary expense — someone sits at a browser, crafts prompts, reviews outputs, and reruns failures. AI image API ROI becomes real when that model flips: instead of paying for hours, you pay for inference. Compute scales; headcount doesn't have to.

This is the unit economics shift — from Labor-as-a-Cost to Inference-as-a-Utility.

Comparative Production Efficiency

The performance gap between manual workflows and API-integrated pipelines isn't marginal. It's structural.

| Operational Metric | Manual "Artisan" Method | API-Integrated Pipeline |
| --- | --- | --- |
| Operational Link | Browser-based / Discord | Direct CMS / Server-side |
| Consistency Control | Human memory & intuition | Seed-level & LoRA parameter locks |
| Marginal Cost | Linear: more images, more hours | Sub-linear: scale reduces per-unit cost |
| Error Rate | ~15–20% (requires re-generation) | < 2% (standardized via API parameters) |

Character consistency at the image API level is the direct result of removing per-image human judgment from the loop: not a loss of creativity, but a gain in reliability.

Zero-Touch Scalability: Asynchronous Workflows in Practice

The ceiling for manual production is a single operator's bandwidth. API pipelines have no such ceiling.

With asynchronous workflows, a single API call can trigger thousands of parallel image jobs, each with unique localization parameters, regional copy overlays, or audience-specific variables. In an automated content workflow in 2026, this means:

- No dedicated "AI operator" managing generations one by one

- Cost-effective AI image generation at volume, without proportional headcount growth

- Campaign-ready assets delivered directly into the CMS on completion

The infrastructure dividend isn't a future promise — it's available now, at the API layer.
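The fan-out pattern described above can be sketched with Python's `asyncio`. This is a minimal sketch rather than a provider integration: `submit_job` is a hypothetical stand-in that only simulates a network call, and the locale list and concurrency cap are illustrative assumptions.

```python
import asyncio


async def submit_job(prompt: str, locale: str) -> dict:
    """Hypothetical stand-in for one image API call (a real one would POST to a provider)."""
    await asyncio.sleep(0.01)  # simulate network and inference latency
    return {"locale": locale, "prompt": prompt, "status": "done"}


async def fan_out(template: str, locales: list, max_concurrent: int = 50) -> list:
    """Run one job per locale in parallel, capped so the provider is not flooded."""
    limiter = asyncio.Semaphore(max_concurrent)

    async def run_one(locale: str) -> dict:
        async with limiter:
            return await submit_job(template.format(locale=locale), locale)

    # gather() preserves input order, so results line up with the locales list
    return await asyncio.gather(*(run_one(loc) for loc in locales))


if __name__ == "__main__":
    jobs = asyncio.run(fan_out("Product hero shot, {locale} market", ["en-US", "de-DE", "ja-JP"]))
    print(len(jobs), "jobs completed")
```

The semaphore is the design choice worth noting: it lets one trigger enqueue any number of jobs while keeping the number of in-flight requests under whatever rate limit the chosen provider enforces.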

Solving the "Quality" Problem: Not Cutting Corners

Automation skeptics often raise the same concern: won't consistency come at the cost of quality? In practice, the opposite is true — the API layer is precisely where quality gets engineered, not compromised.

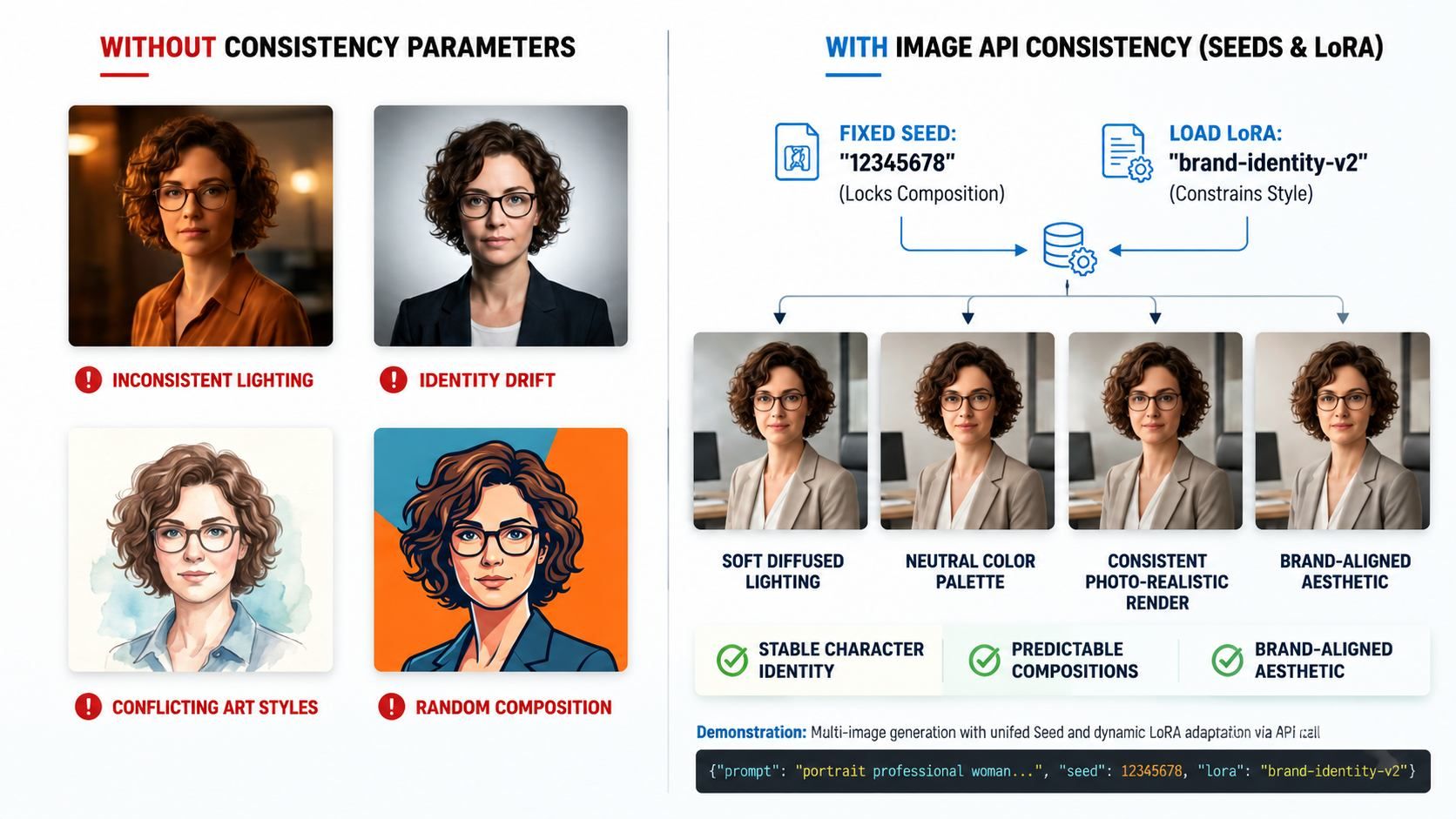

Character & Style Consistency at Scale

The biggest technical challenge in any long-running content program is drift — the gradual erosion of a recognizable visual identity. Image API character consistency solves this through two complementary mechanisms:

- Seeds: A fixed seed value passed via API parameters locks the generative randomness of a model, producing near-identical compositional outputs from the same prompt, model version, and settings. Held constant alongside a stable prompt template, it helps a "brand face" stay the same across 100 blog posts without a single manual re-roll.

- LoRA (Low-Rank Adaptation): LoRA files are lightweight fine-tuned model adapters trained on a curated set of brand visuals. When loaded through the API, they constrain output style — lighting, color temperature, subject rendering — to match a predefined aesthetic standard.

Together, seeds and LoRA form the backbone of any serious cost-effective AI image generation pipeline that prioritizes brand fidelity.
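One way to make the seed and LoRA lock hard to bypass is to merge the brand settings into every request last. The sketch below assumes a generic parameter dictionary; the key names (`seed`, `lora`, `lora_scale`, `guidance_scale`), the adapter identifier, and the values are illustrative, not any provider's schema.

```python
from typing import Optional

# Hypothetical brand profile: keys and values are placeholders.
BRAND_LOCK = {
    "seed": 421337,            # fixed seed for reproducible composition
    "lora": "brand-style-v3",  # hypothetical LoRA adapter identifier
    "lora_scale": 0.8,         # how strongly the adapter steers style
}


def build_params(prompt: str, overrides: Optional[dict] = None) -> dict:
    """Merge per-asset settings with the brand lock; locked keys always win."""
    params = {"prompt": prompt, "guidance_scale": 3.5}
    params.update(overrides or {})
    params.update(BRAND_LOCK)  # applied last so no caller can change seed or LoRA
    return params
```

A caller can still adjust non-brand settings such as dimensions, but an attempt to pass a different seed is silently replaced by the locked value.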

The 2026 Authenticity Shift

The hyper-polished, CGI-smooth output that defined early AI imagery is now a liability. Audiences are increasingly fluent in detecting synthetic perfection. In automated content workflows in 2026, quality means intentional imperfection:

| Aesthetic Signal | What It Communicates |
| --- | --- |
| Film grain overlay | Warmth, analogue heritage |
| Soft, natural lighting | Approachability, realism |
| Diverse skin textures | Authenticity, inclusivity |
| Slight lens distortion | Handcrafted, non-corporate feel |

These parameters are fully injectable via API — no manual post-processing required.

Interactive Demo: See the Infrastructure Dividend in action.

Left: Raw API output — functional but unrefined.

Right: Production-ready asset after Chained Inference (Advanced Refraction, Macro Detail Enhancement, and Dynamic Branding).

Note: The images above were generated for free using Atlas Cloud's ERNIE Image Turbo Text-to-Image API.

How much can I save by switching to automated image generation?

Savings vary significantly based on current production costs, asset volume, and the complexity of the pipeline built. Rather than citing figures that wouldn't apply universally, the honest framework is this:

- Fixed costs replaced: Art direction, prompt iteration, and file management labor

- Variable costs reduced: Per-image inference spend is sub-linear at scale — the more you generate, the lower the unit cost

- Hidden savings: Faster turnaround removes dependency on contractor availability

Cost-effective AI image generation delivers measurable AI image API ROI when volume is high enough that per-unit inference costs fall well below equivalent human production rates. For most content teams, that threshold is lower than expected.
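The threshold mentioned above can be estimated with simple break-even arithmetic. The sketch below assumes a one-time pipeline setup cost and flat per-image costs, which is a simplification of the sub-linear pricing described earlier; every number passed in is a placeholder to be replaced with your own figures.

```python
import math


def break_even_volume(setup_cost: float, manual_cost_per_image: float,
                      api_cost_per_image: float) -> int:
    """Smallest image count at which the automated pipeline is cheaper overall."""
    saving_per_image = manual_cost_per_image - api_cost_per_image
    if saving_per_image <= 0:
        raise ValueError("API cost per image must be below the manual cost")
    # Need n * saving > setup_cost, so take the first integer strictly above the ratio
    return math.floor(setup_cost / saving_per_image) + 1
```

For example, with a placeholder setup cost of 100 and a per-image saving of 2, `break_even_volume(100, 3, 1)` returns 51: at 50 images the two approaches cost the same, and the pipeline pulls ahead from the 51st image onward.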

Commercial Safety: Choosing the Right Data Foundation

Visual quality means nothing if it carries legal exposure. A growing number of providers now train exclusively on licensed or proprietary datasets:

- Adobe Firefly is trained on Adobe Stock imagery, openly licensed content, and public domain material, making it one of the safer choices for commercial deployment.

- Getty Images' Generative AI offers indemnified output for enterprise users, backed by its fully licensed library.

These "clean room" APIs trade some stylistic breadth for legal clarity — a worthwhile exchange for any brand with commercial publishing needs. AI image API ROI is only realized when the output is actually usable, without a legal review process eating into the time saved.

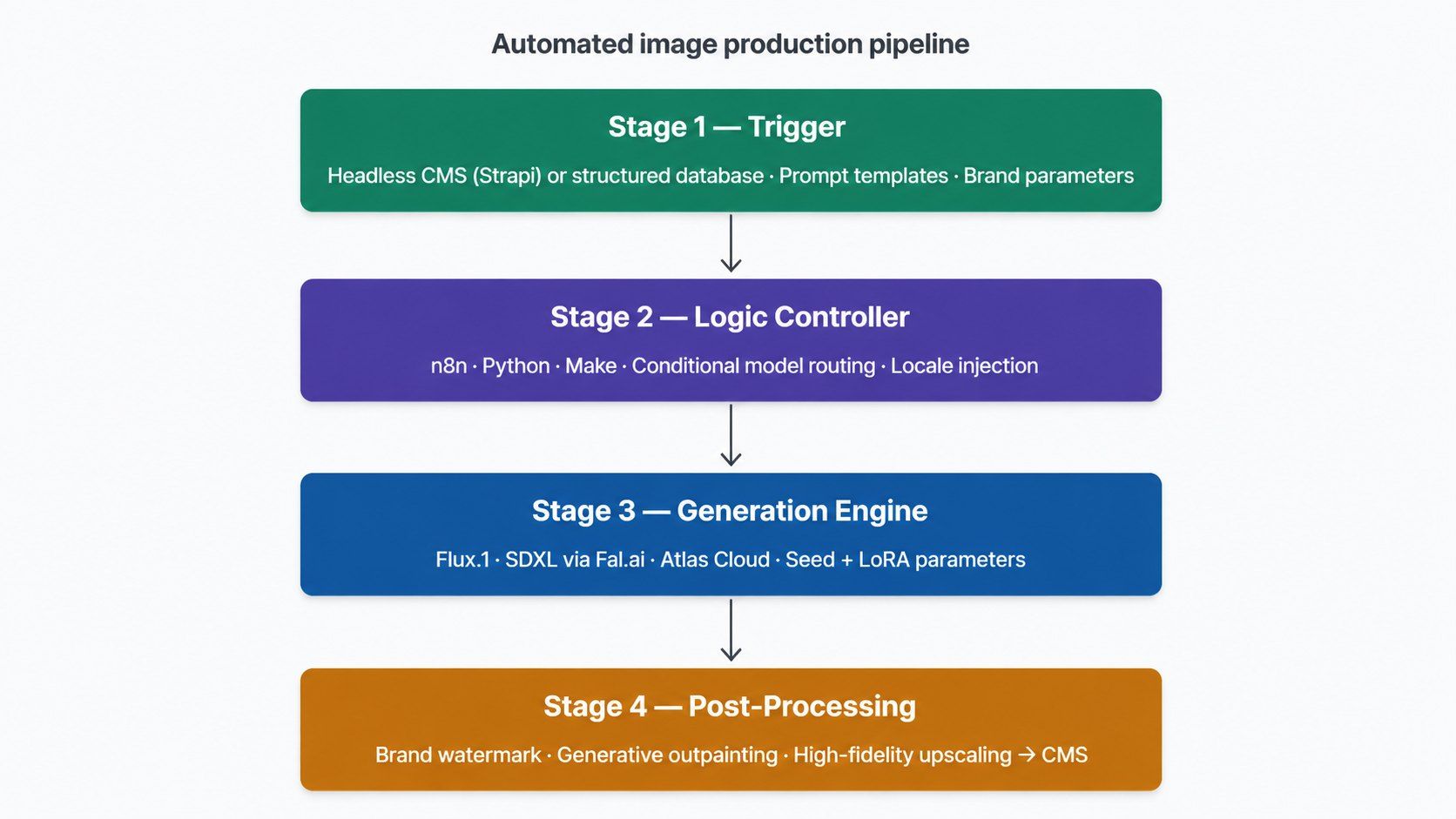

Technical Architecture: A High-Level Workflow

Deploying an automated content workflow in 2026 doesn't require a large engineering team, but it does require thinking in systems. The pipeline below represents a production-ready image automation stack, broken into four distinct stages that each do one job cleanly.

Stage 1 — The Trigger: Source of Truth

Every image generated by the system traces back to a single, structured input. This is typically a Headless CMS such as Strapi or a relational database. Each record in the CMS carries:

- The prompt template (with dynamic variable slots for localization)

- Brand constraint parameters (LoRA identifiers, seed values, aspect ratio)

- Destination metadata (CMS asset ID, campaign tag, target format)

This structured approach is what makes image API character consistency enforceable at scale — the brand rules live in the data, not inside someone's head.
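A record of this shape might be modelled as follows. This is a sketch under assumptions: the field names are illustrative and do not correspond to a Strapi content type, and `$slot` placeholders are just one possible way to mark the dynamic variables.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class ImageJobRecord:
    """One CMS record; field names are hypothetical, not a real CMS schema."""
    prompt_template: str  # uses $slot placeholders for localization
    lora_id: str
    seed: int
    aspect_ratio: str
    cms_asset_id: str
    campaign_tag: str
    target_format: str

    def render_prompt(self, **slots: str) -> str:
        # substitute() raises KeyError when a slot is missing, so gaps fail loudly
        return Template(self.prompt_template).substitute(**slots)


record = ImageJobRecord(
    prompt_template="A $product on a street in $city",
    lora_id="brand-style-v3", seed=421337, aspect_ratio="16:9",
    cms_asset_id="asset-001", campaign_tag="spring", target_format="webp",
)
print(record.render_prompt(product="sneaker", city="Lisbon"))
```

Freezing the dataclass keeps brand constraints immutable once a record is loaded, which matches the idea that the rules live in the data rather than in downstream code.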

Stage 2 — The Logic Controller: Orchestration Layer

Raw prompts don't go directly to the image API. An orchestration tool — such as n8n, Make, or a custom Python service — sits between the CMS and the generation engine. Its job is conditional routing:

| Condition | Action |
| --- | --- |
| Style = photorealistic | Route to Flux.1 [dev] model |
| Style = illustration | Route to SDXL with custom LoRA |
| Resolution = print-ready | Trigger upscaling post-step |
| Locale = non-English market | Inject localized prompt variant |

This layer is where cost-effective AI image generation is actually enforced — by routing lower-priority assets to faster, cheaper models and reserving premium inference for hero imagery.
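For teams taking the custom Python route, the routing rules in the table could be expressed as a small pure function. The job keys and the model identifier strings (`flux-1-dev`, `sdxl`) are placeholders chosen for this sketch; real identifiers depend on the provider.

```python
def route(job: dict) -> dict:
    """Translate job attributes into a generation plan, mirroring the routing table."""
    plan = {"model": None, "lora": None, "post_steps": [], "prompt": job["prompt"]}

    style = job.get("style")
    if style == "photorealistic":
        plan["model"] = "flux-1-dev"       # placeholder model identifier
    elif style == "illustration":
        plan["model"] = "sdxl"             # placeholder model identifier
        plan["lora"] = job.get("lora_id")  # custom LoRA only on the illustration path
    else:
        raise ValueError(f"no route for style: {style!r}")

    if job.get("resolution") == "print-ready":
        plan["post_steps"].append("upscale")

    locale = job.get("locale", "en")
    if not locale.startswith("en"):
        # Fall back to the base prompt when no localized variant exists
        plan["prompt"] = job.get("localized_prompts", {}).get(locale, job["prompt"])

    return plan
```

Keeping the router free of network calls makes each rule easy to unit test before any inference budget is spent.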

Stage 3 — The Generation Engine: API Inference

The orchestrator fires API calls to high-performance inference platforms. Production deployments typically use:

- Fal.ai — for low-latency Flux.1 and SDXL inference with queue management

- Replicate — for flexible model hosting across a broad model library

- Atlas Cloud — for enterprise-grade throughput and SLA-backed uptime

Each call passes the full parameter set: model ID, seed, LoRA weights, guidance scale, and output format. The API returns a raw asset URL, which the orchestrator passes forward.
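The shape of such a call can be sketched with the standard library alone. Nothing here matches a specific provider: the endpoint is a placeholder, and the request fields and the `url` key in the response are assumptions that must be replaced with whatever the chosen platform documents.

```python
import json
import urllib.request

API_URL = "https://api.example.com/v1/images"  # placeholder endpoint, not a real provider URL


def build_request(api_key: str, params: dict) -> urllib.request.Request:
    """Package the full parameter set as a JSON POST request (not yet sent)."""
    body = json.dumps(params).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
    )


def extract_asset_url(response_body: bytes) -> str:
    """Read the asset URL from a JSON response; the 'url' key is an assumption."""
    return json.loads(response_body)["url"]


request = build_request("demo-key", {
    "model": "flux-1-dev", "seed": 421337, "lora_scale": 0.8,
    "guidance_scale": 3.5, "output_format": "png", "prompt": "brand mascot waving",
})
# urllib.request.urlopen(request) would send it; omitted because the endpoint is fictional
```

Separating request construction from sending keeps the parameter set inspectable and loggable by the orchestrator before any cost is incurred.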

Stage 4 — The Post-Processing Layer: The Refinement Chain

Raw API output rarely ships as-is. A chained set of specialized calls transforms the base image into a production-ready asset:

- Brand watermarking: overlay logo assets at defined anchor positions via a compositing API.

- Generative outpainting: expand the frame to fit different formats, turning 16:9 into 9:16 for Stories or 1:1 for social feeds, without regenerating the image from scratch.

- High-quality upscaling: run the file through an upscaler such as Real-ESRGAN on Replicate to reach the resolution needed for print or large displays.

The finished image goes straight into the CMS with no manual handoff. This full automation is where the value of an image API shows: one pipeline run replaces a production process that used to take several days and multiple people.
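For the outpainting step, the orchestrator first has to work out how large the expanded canvas must be. The helper below is an illustrative sketch of that arithmetic only; it does not call any outpainting model, and some models may additionally require dimensions rounded to a particular multiple, which is not handled here.

```python
def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding float rounding."""
    return -(-a // b)


def outpaint_canvas(width: int, height: int, ratio_w: int, ratio_h: int) -> dict:
    """Smallest canvas with the target ratio that fully contains the source image."""
    # Cross-multiplication compares aspect ratios without floats
    if width * ratio_h >= height * ratio_w:
        # Source is at least as wide as the target: keep width, grow height
        new_w, new_h = width, ceil_div(width * ratio_h, ratio_w)
    else:
        # Source is taller than the target: keep height, grow width
        new_w, new_h = ceil_div(height * ratio_w, ratio_h), height
    pad_x, pad_y = new_w - width, new_h - height
    return {
        "width": new_w, "height": new_h,
        "left": pad_x // 2, "right": pad_x - pad_x // 2,
        "top": pad_y // 2, "bottom": pad_y - pad_y // 2,
    }
```

Converting a 1920×1080 frame to 1:1 this way yields a 1920×1920 canvas with 420 pixels to fill above and below the original, which is the region the outpainting model would be asked to generate.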

Do image APIs require coding knowledge?

Not necessarily, though the level of technical skill required scales with pipeline complexity.

| Approach | Coding Required | Best For |
| --- | --- | --- |
| No-code orchestrators (n8n, Make) | None | Teams new to automation |
| Low-code Python scripts | Basic | Mid-level workflows |
| Custom server-side integration | Intermediate–Advanced | Production-grade pipelines |

Teams can connect a CMS to an image API without writing a single line of code, using no-code tools like n8n or Make. A developer is not required to get started, but full API chaining, as explained under Advanced Strategies below, benefits from one.

Advanced Strategies: Beyond One-Click Generation

A single API call producing a single image is the floor, not the ceiling. The brands achieving the highest AI image API ROI aren't running simple prompt-to-output pipelines — they're chaining models, feeding in live data, and building quality gates that make the output self-correcting.

Multi-Model Orchestration: API Chaining

The move from "one-shot" prompting to chained inference is the single biggest unlock in automated content workflows in 2026. Rather than expecting a single model to perform flawlessly, each model handles the task it suits best:

| Pipeline Stage | Model Role | Example Tool |
| --- | --- | --- |
| Base generation | Composition, layout, scene | Flux.1 [dev] / SDXL |
| Face correction | Facial realism, detail recovery | GFPGAN / CodeFormer via Replicate |
| Super-resolution | Upscaling to 4K print quality | Real-ESRGAN via Fal.ai |

Each stage receives the output of the previous one as its input. The result is a finished asset that no single model could produce alone — at a per-image cost far lower than commissioning a human photographer.
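The hand-off between stages can be sketched as plain function composition. The three stage functions below are stand-ins that only record what a real call would do; they do not invoke Flux.1, GFPGAN, or Real-ESRGAN, and the asset dictionary layout and the 1024 and 4x figures are assumptions for illustration.

```python
from typing import Callable, List

Asset = dict
Stage = Callable[[Asset], Asset]


def base_generation(asset: Asset) -> Asset:
    # Stand-in for a base model call (composition, layout, scene)
    return {**asset, "width": 1024, "height": 1024, "steps": asset["steps"] + ["base"]}


def face_correction(asset: Asset) -> Asset:
    # Stand-in for a face restoration call
    return {**asset, "steps": asset["steps"] + ["face"]}


def super_resolution(asset: Asset) -> Asset:
    # Stand-in for a 4x upscaler call
    return {**asset, "width": asset["width"] * 4, "height": asset["height"] * 4,
            "steps": asset["steps"] + ["upscale"]}


def run_chain(asset: Asset, stages: List[Stage]) -> Asset:
    """Feed each stage the output of the previous one."""
    for stage in stages:
        asset = stage(asset)
    return asset


final = run_chain({"prompt": "founder portrait", "steps": []},
                  [base_generation, face_correction, super_resolution])
print(final["steps"], final["width"], final["height"])
```

Because every stage shares the same signature, a stage can be swapped or skipped per job without touching the others, for example dropping face correction for product shots.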

Context-Aware Hyper-Personalization

Real-time context can be injected directly into prompt variables before an API call fires. A product image pipeline, for example, might query a viewer's local weather or time of day and dynamically adjust:

- Lighting style → "golden hour" warm tones at sunset, cool overcast fill at noon

- Background season → matching outdoor backgrounds to the viewer's current climate

- Ambient color temperature → cooler blues for morning, warmer ambers for evening

This isn't hypothetical — it's a straightforward extension of any templated prompt system that accepts dynamic variables at runtime. The key is structuring prompt templates with named slots that the orchestration layer populates from a live data source before the API call is made.
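A templated prompt with named slots might look like the following. The hour thresholds, the lighting phrases, and the template wording are illustrative assumptions; in practice the hour and season would come from whatever live data source the orchestration layer queries.

```python
from string import Template

# Named slots that the orchestration layer fills in before the API call
PROMPT = Template("$product on a terrace, $lighting, $season background")


def lighting_for_hour(hour: int) -> str:
    """Map the viewer's local hour (0-23) to a lighting phrase; thresholds are illustrative."""
    if 5 <= hour < 11:
        return "cool blue morning light"
    if 11 <= hour < 16:
        return "cool overcast fill light"
    if 16 <= hour < 20:
        return "golden hour warm tones"
    return "warm amber evening light"


def build_prompt(product: str, hour: int, season: str) -> str:
    return PROMPT.substitute(product=product,
                             lighting=lighting_for_hour(hour),
                             season=season)


print(build_prompt("Ceramic mug", 18, "autumn"))
```

Keeping the context-to-phrase mapping in one small function means the brand team can review and adjust the vocabulary without reading the rest of the pipeline.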

Persistent Brand Identity: LoRA + ControlNet

Image API character consistency across thousands of assets requires more than a fixed seed. For recurring characters or precise brand geometries, two tools work in tandem:

- LoRA constrains overall aesthetic, skin tone, style, and lighting to a trained brand standard.

- ControlNet — a structural guidance layer developed for Stable Diffusion — accepts a reference pose, edge map, or depth image and forces the composition to conform to it, regardless of prompt variation. This keeps a brand mascot's proportions identical across wildly different scene contexts.

Both are available as API options on platforms like Replicate, which makes high-quality image generation with consistent characters affordable. For many projects it is now a practical alternative to drawing every asset by hand.

Dynamic Human-in-the-Loop Quality Gates

Fully automated pipelines still need a quality floor. Before any asset reaches the CMS, a scoring step filters out outputs that fail minimum standards. Common approaches include:

- LAION Aesthetic Predictor — a CLIP-based model that scores images on perceived aesthetic quality

- Artifact detection classifiers — custom or pre-trained models that flag distorted anatomy, garbled text rendering, or broken symmetry

- Aspect ratio and resolution validators — lightweight checks that reject technically malformed outputs before they propagate downstream

Only assets that clear every gate proceed to the CMS. The cost of an additional inference call for scoring is negligible compared to the cost of a brand publishing a disfigured image at scale.
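The gating logic itself can be very small once scores are available. In this sketch the aesthetic score and artifact flags are assumed to come from separate scoring models that are not shown, and the thresholds (minimum side, target ratio, minimum score) are placeholders to tune per brand.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Candidate:
    width: int
    height: int
    aesthetic_score: float  # assumed output of a separate aesthetic scoring model
    artifact_flags: List[str] = field(default_factory=list)  # assumed classifier output


def failed_gates(c: Candidate, min_side: int = 1024, ratio: Tuple[int, int] = (16, 9),
                 min_score: float = 5.5, tolerance: float = 0.01) -> List[str]:
    """Return the names of every gate the candidate fails; an empty list means it passes."""
    failures = []
    if min(c.width, c.height) < min_side:
        failures.append("resolution")
    if c.height <= 0 or abs(c.width / c.height - ratio[0] / ratio[1]) > tolerance:
        failures.append("aspect_ratio")
    if c.aesthetic_score < min_score:
        failures.append("aesthetic")
    if c.artifact_flags:
        failures.append("artifacts")
    return failures


def passes(c: Candidate) -> bool:
    return not failed_gates(c)
```

Returning the list of failed gates, rather than a bare boolean, gives the orchestrator enough information to decide between regenerating with a new seed and escalating to a human reviewer.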

Which AI image API has the best character consistency in 2026?

There is no universal answer — image API character consistency depends on the method, not just the provider. The most reliable approach combines:

- A LoRA-compatible platform (Fal.ai, Atlas Cloud, Replicate, or Stability AI's API) for style locking

- ControlNet for structural pose or geometry constraints

- Fixed seed values for output reproducibility across runs

Platforms that support all three simultaneously offer the strongest consistency guarantees for recurring brand characters or product visuals.

Conclusion: Future-Proofing Your Creative Output

Automation doesn't eliminate the need for creative judgment — it relocates it.

The New Role: Creative Editor, Not Operator

In a fully automated visual pipeline, the human role shifts from prompt-writer to systems architect and editorial gatekeeper. The "Creative Editor" of 2026 makes decisions that no API parameter can encode:

- Which brand narratives are worth telling visually

- When to override the pipeline's output in favor of something unexpected

- How to evolve the LoRA training data as the brand identity matures

- Where image API character consistency ends and creative stagnation begins

This isn't a diminished role. It's a more leveraged one — where one person's creative vision propagates across thousands of assets instead of dozens.

Final ROI Check: From Experimental to Operational

The inflection point between "we're testing AI" and "AI runs our content operation" comes down to four measurable shifts:

| Signal | Experimental AI | Operational AI |
| --- | --- | --- |
| Trigger | Manual, ad hoc | Automated, event-driven |
| Output volume | Hundreds per month | Thousands per week |
| Cost structure | Project budget | Predictable utility spend |
| Quality control | Human review of every asset | Automated scoring gates |

When all four rows flip, AI image API ROI stops being a hypothesis and becomes a line item. Cost-effective AI image generation at this stage isn't a competitive advantage — it's the baseline expectation.

Automated content workflows in 2026 won't favor the teams with the biggest budgets. They'll favor the teams that built the most reliable systems. The infrastructure is available now. The only remaining variable is whether to build it.