ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu. It is built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone. Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery, with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality but also for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics. That makes it well suited to commercial posters, comics, multi-panel layouts, and other content creation tasks that require precise control.

Explore the Leading ERNIE Image Models

Atlas Cloud provides you with the latest industry-leading creative models.

Peak speed

Lowest cost

| Model | Description |
|---|---|
| ERNIE-Image API (Text To Image) | The flagship quality-focused model. The SFT variant runs at guidance scale 4.0 with 50 inference steps for maximum quality, and is optimized for final production assets including posters, editorial graphics, and commercial layouts. |
| ERNIE-Image Turbo API (Text To Image) | The Turbo variant, optimized through DMD (Distribution Matching Distillation) and reinforcement learning, cuts inference from 50 steps to 8, a 6x+ speedup, while maintaining high-quality output. Ideal for rapid iteration and high-volume workflows. |
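
To make the step counts in the table above concrete, here is a minimal Python sketch that records the two variants' sampling settings and computes the step reduction. The `VariantPreset` class, the `PRESETS` dictionary, and its keys are hypothetical names chosen for illustration, not part of any Atlas Cloud or ERNIE-Image SDK. The table gives no guidance scale for Turbo, so that value is left as `None` rather than guessed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VariantPreset:
    """Sampling settings for one model variant (hypothetical container)."""
    name: str
    inference_steps: int
    guidance_scale: Optional[float]  # None where the page states no value


# Step counts and guidance scale come from the table; the keys are illustrative.
PRESETS = {
    "standard": VariantPreset("ERNIE-Image", inference_steps=50, guidance_scale=4.0),
    "turbo": VariantPreset("ERNIE-Image Turbo", inference_steps=8, guidance_scale=None),
}


def step_reduction(baseline: VariantPreset, distilled: VariantPreset) -> float:
    """Return how many times fewer denoising steps the distilled variant runs."""
    return baseline.inference_steps / distilled.inference_steps


if __name__ == "__main__":
    ratio = step_reduction(PRESETS["standard"], PRESETS["turbo"])
    print(f"Step reduction: {ratio:.2f}x")  # 50 / 8 = 6.25
```

The ratio of 6.25 matches the "6x+" figure quoted for Turbo. It counts denoising steps only, so measured end-to-end latency may differ once text encoding and image decoding are included.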

New features of ERNIE Image Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers unmatched speed, scalability, and creative control for image and video generation.

Text-Heavy Visual Content with ERNIE-Image API

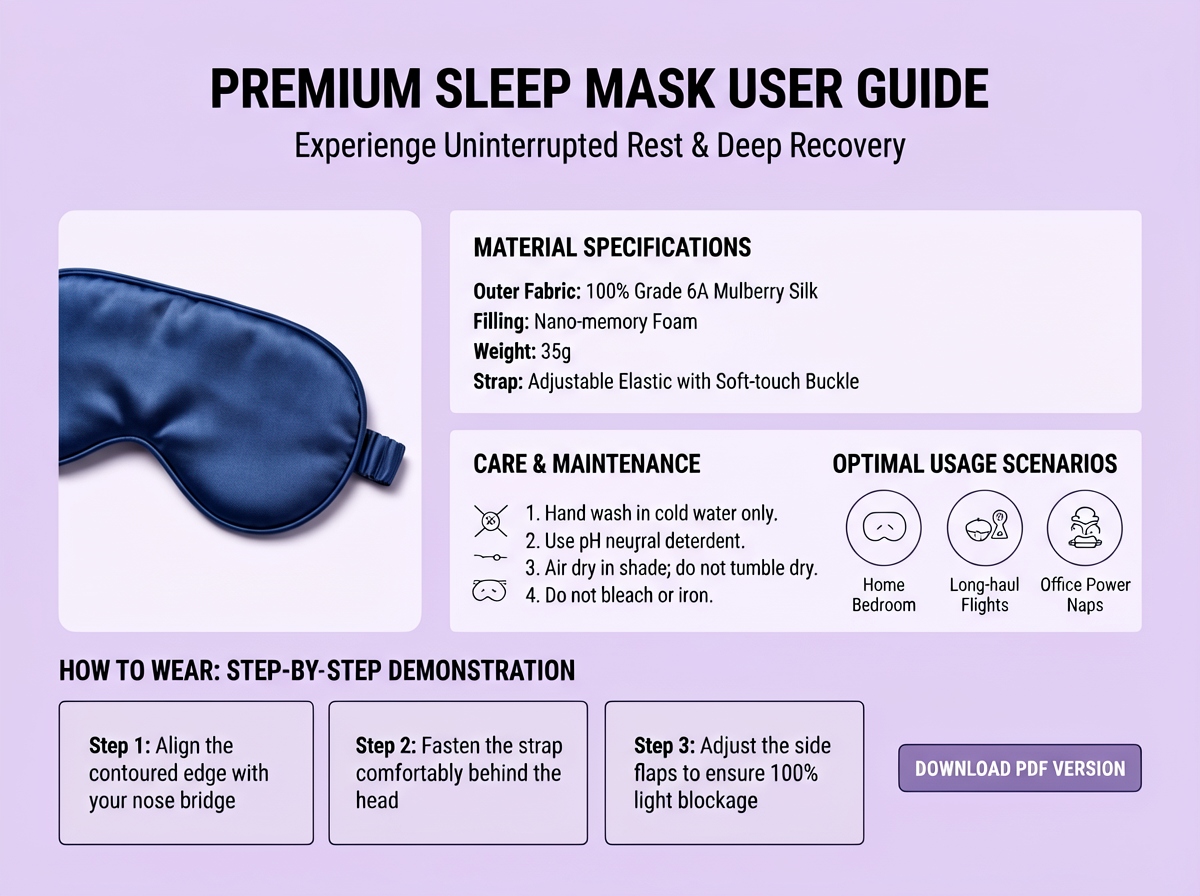

ERNIE-Image leads the open-source field with a LongTextBench score of 0.9733 — rendering accurate text inside images including comic speech bubbles, poster headlines, infographic labels, and UI mockup copy. If your use case requires legible, correctly-spelled text baked into the image, ERNIE-Image is the clear leader.

Structured Layout Generation with ERNIE-Image API

The codebase exposes generation, edit, composite, and upscale primitives so designers can centralize an asset pipeline. By understanding spatial relationships and grid-based arrangements, ERNIE-Image generates coherent multi-panel sequential artwork and formatted designs.

Bilingual Creative Expression with ERNIE-Image API

Both English and Chinese prompts are natively supported through the same encoder pipeline, capturing cultural nuances and idiomatic expressions across languages for authentic visual storytelling.

Commercial Poster and Marketing Asset Creation with ERNIE-Image API

ERNIE-Image generates print-ready marketing materials with embedded typography, product placements, and professional layouts. For creatives and product teams, ERNIE-Image lowers the barrier to production-grade poster, comic, storyboard, and UI asset generation without license friction.

What You Can Do with ERNIE-Image

Discover practical use cases and workflows you can build with this model family — from content creation and automation to production-grade applications.

Marketing and Advertising Production

Generate campaign-ready posters, banners, and promotional materials with embedded text, product visuals, and professional layouts at high throughput — suitable for both quick drafts (Turbo) and final assets (Standard).

Publishing and Editorial Content

Create book covers, magazine illustrations, and editorial graphics with precise typography and artistic consistency. The industry-leading text rendering makes it ideal for text-heavy publication designs.

Comic and Manga Creation

ERNIE-Image lowers the barrier to production-grade comic, storyboard, and sequential art generation, with consistent character representation and integrated dialogue that streamline production for independent creators and studios.

UI/UX and Product Mockups

Generate realistic application screenshots, website mockups, and interface designs with readable text elements and coherent layout structures for presentation and prototyping.

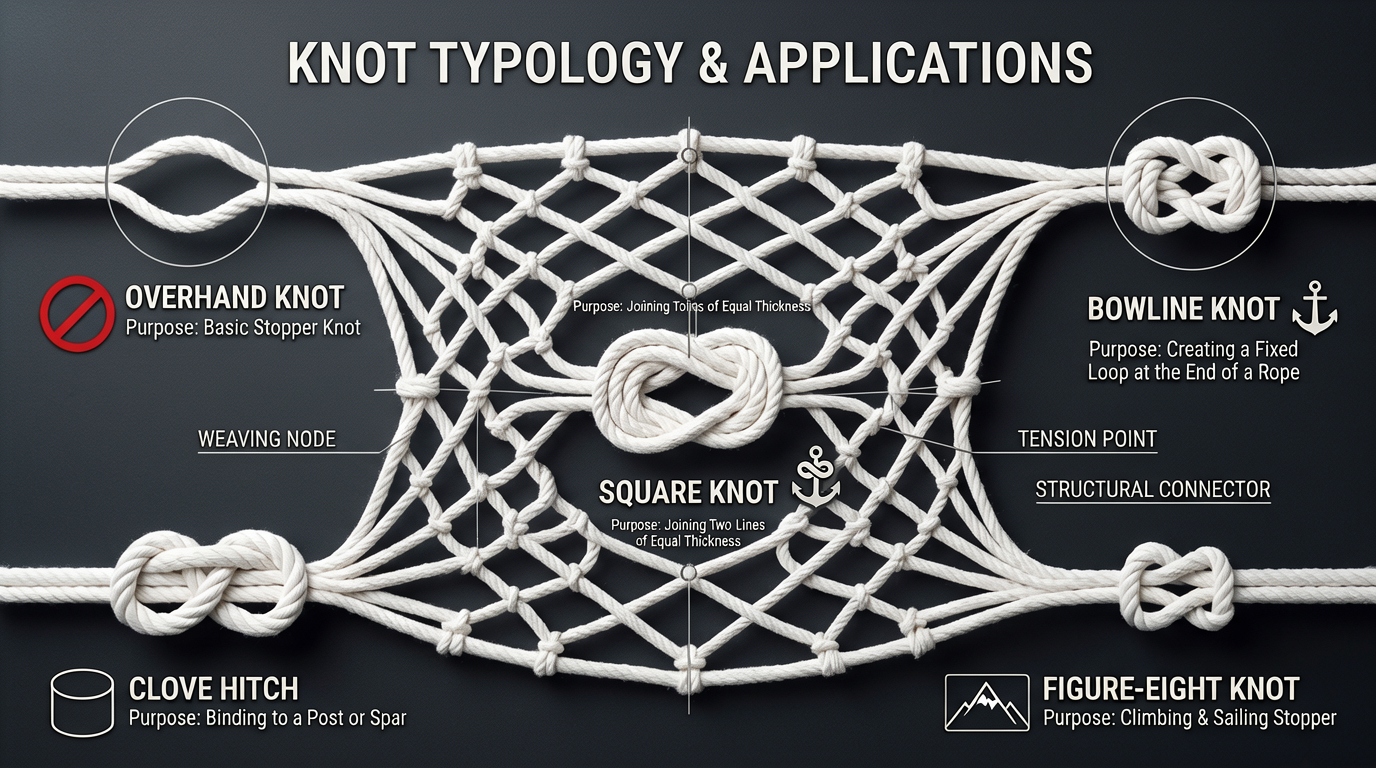

Educational and Infographic Content

ERNIE-Image performs strongly on complex instruction following and text rendering, making it well suited to visually engaging educational materials, data visualizations, and explainer graphics that combine imagery with clear, legible annotations.

Game Development and Concept Art

Develop character designs, environment concepts, and promotional artwork with cinematic quality and consistent style — supporting both indie and professional production pipelines.

Model Comparison

See how models from different providers stack up — compare performance, pricing, and unique strengths to make an informed decision.

| Model | Reference Image Limit | Outputs per Request | Resolution | Aspect Ratio / Dimensions |
|---|---|---|---|---|
| ERNIE-Image | 0 (T2I) | 1–8 | 1024×1024 | 1:1 |
| ERNIE-Image Turbo | 0 (T2I) | 1–8 | 1024×1024 | 1:1 |
| Qwen-Image | 3 | 1–6 | 512P–2K | Width [512, 2048] px; Height [512, 2048] px |
| Flux.1 | 1 | 1 | 256P–4K | Width [256, 4096] px; Height [256, 4096] px |
| Seedream 5.0 | 14 | 1–15 | 2K–4K+ | 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9 |
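
The limits in the comparison table can be checked on the client before a request is sent. The sketch below is one possible way to do that in Python. `ModelLimits`, `LIMITS`, and `check_request` are hypothetical helpers written for this page and do not belong to any provider SDK. The numbers are copied from the table, and Seedream 5.0 is omitted because its row lists aspect ratios rather than pixel ranges.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ModelLimits:
    """Per-request limits for one row of the comparison table."""
    max_reference_images: int
    outputs: Tuple[int, int]  # inclusive (min, max) images per request
    width: Tuple[int, int]    # inclusive (min, max) in pixels
    height: Tuple[int, int]   # inclusive (min, max) in pixels


# Values copied from the table; both ERNIE-Image variants are fixed at 1024x1024.
LIMITS = {
    "ERNIE-Image": ModelLimits(0, (1, 8), (1024, 1024), (1024, 1024)),
    "ERNIE-Image Turbo": ModelLimits(0, (1, 8), (1024, 1024), (1024, 1024)),
    "Qwen-Image": ModelLimits(3, (1, 6), (512, 2048), (512, 2048)),
    "Flux.1": ModelLimits(1, (1, 1), (256, 4096), (256, 4096)),
}


def check_request(
    model: str,
    width: int,
    height: int,
    num_outputs: int,
    num_reference_images: int = 0,
) -> List[str]:
    """Return a list of limit violations; an empty list means the request fits."""
    limits = LIMITS[model]
    problems = []
    for label, value, (low, high) in (
        ("width", width, limits.width),
        ("height", height, limits.height),
        ("output count", num_outputs, limits.outputs),
    ):
        if not low <= value <= high:
            problems.append(f"{label} {value} is outside [{low}, {high}]")
    if num_reference_images > limits.max_reference_images:
        problems.append(
            f"{num_reference_images} reference images exceeds the limit of "
            f"{limits.max_reference_images}"
        )
    return problems


if __name__ == "__main__":
    print(check_request("ERNIE-Image", 1024, 1024, 4))
    print(check_request("ERNIE-Image", 512, 512, 9, num_reference_images=1))
```

Returning a list of problems instead of raising on the first one lets a caller report every violation at once, which is convenient when validating batches of prompts.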

How to Use ERNIE Image Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud's platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.

Why Use ERNIE Image Models on Atlas Cloud

Combining the advanced ERNIE Image Models with Atlas Cloud's GPU-accelerated platform provides unmatched performance, scalability, and developer experience.

Performance & flexibility

Low Latency: GPU-optimized inference for real-time reasoning.

Unified API: Run ERNIE Image Models, GPT, Gemini, and DeepSeek with one integration.

Transparent Pricing: Predictable per-token billing with serverless options.

Enterprise & Scale

Developer Experience: SDKs, analytics, fine-tuning tools, and templates.

Reliability: 99.99% uptime, RBAC, and compliance-ready logging.

Security & Compliance: SOC 2 Type II, HIPAA alignment, and data sovereignty in the US.

FAQ

A: ERNIE-Image achieves top-tier image rendering on consumer-grade GPUs. It excels at following complex instructions and at multi-language text rendering, with overall capabilities comparable to top-tier closed-source models. Its particular strengths in text rendering (LongTextBench 0.9733) and in structured layout generation for comics, posters, and infographics set it apart from general-purpose open models.

A: Both English and Chinese text rendering score above 0.96 on LongTextBench. FLUX.2 collapses in Chinese scenarios (scoring 0.2183), while ERNIE-Image remains stable, handling Simplified Chinese, Traditional Chinese, and mixed bilingual content with high accuracy.

A: Yes, commercial use is allowed. ERNIE-Image is released under the Apache 2.0 license, which permits commercial use, modification, and distribution. Generated images can be used in advertising, merchandise, publications, and commercial applications.