Every developer knows the pain. You find a superior API, yet the migration feels impossible. You have to update countless integrations and redo auth logic, and one wrong move could crash the entire production environment. That's the migration tax, and it stops most teams before they even start. This video pipeline migration guide breaks down how to make the switch safely, using Atlas Cloud as the reference implementation.

Updating a legacy system is costly in its own right: crashes, new bugs, and retraining costs pile up quickly. That pressure keeps many teams on outdated tools they should have replaced long ago.

AI Video Generation API Integration with Atlas Cloud: Built to Plug In, Not Replace

Atlas Cloud's AI Video Workflow API is designed around one principle — fit into what you already have. Whether you're pulling from existing image and video generation APIs or connecting to on-premise pipelines, Atlas Cloud's AI video generation API integration layers on top of your current stack without demanding a full rewrite.

What Makes It Different

| Concern | Traditional Migration | Atlas Cloud Approach |
| --- | --- | --- |
| Codebase changes | Extensive refactoring | Minimal adapter layer |
| Downtime risk | High | Low; parallel deployment supported |
| Legacy compatibility | Often breaks | Preserves existing endpoints |

Start small, validate, and scale—without burning a sprint on plumbing.

The "Why Now?" of Video Pipeline Migration

If your video pipeline was built three years ago, it was designed for a world of transcoding and thumbnail generation — not generative AI. Today, that mismatch shows up as real operational pain, and AI inference cost reduction has become one of the most pressing engineering priorities for teams scaling generative features.

- High inference costs: Running heavy video models on demand makes cloud bills soar. Without smart batching or cost limits, your monthly spending becomes impossible to predict.

- GPU shortages: A lack of available chips and long wait times cause major lag. These delays usually hit at the worst times, like big product launches.

- Rigid rate limits: Most generation APIs have fixed limits that do not scale with your needs. This forces teams to pay for extra capacity or slow down their own apps.

AI inference costs represent one of the fastest-growing line items for product teams scaling generative features. Achieving meaningful AI inference cost reduction requires both architectural changes and choosing the right API layer — not just negotiating better pricing.

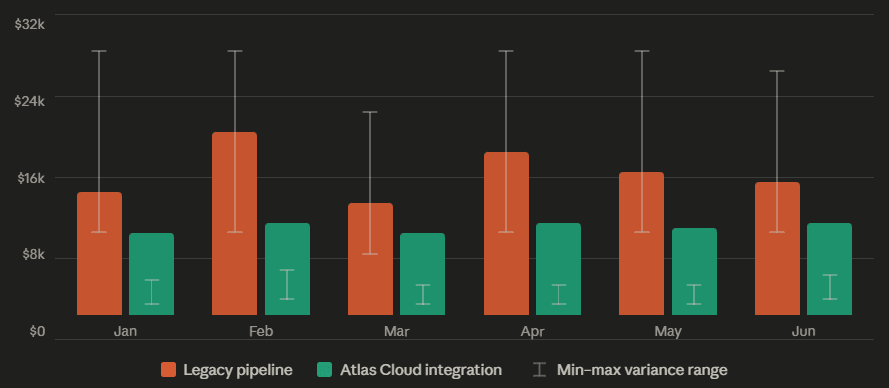

Figure: AI inference cost, legacy pipeline vs. Atlas Cloud integration, based on a typical mid-market video team at scale. Average saving: ~39%; variance reduction: ~85%.

The Shift to Multimodal—And Why Static Workflows Can't Keep Up

Traditional video pipelines were linear: ingest → transcode → deliver. Generative AI video workflow demands are fundamentally different. As you'll see in any practical video pipeline migration guide, the core challenge isn't just tooling — it's rethinking the architecture. Models now handle text-to-video prompts, image conditioning, and multi-step generation chains, often in a single request.

Legacy system integration wasn't built for this. Bolting a generative model onto a static pipeline usually means:

| Old Pipeline Assumption | Generative Reality |
| --- | --- |
| Fixed input/output formats | Dynamic, model-dependent outputs |
| Predictable compute time | Variable inference duration |
| One model per task | Multi-model chaining |

Atlas Cloud's AI video generation API integration addresses this by treating multimodal, multi-step workflows as a first-class design pattern — not an afterthought.

Mapping the Architecture: Where AI Video Generation API Integration Fits In Your Stack

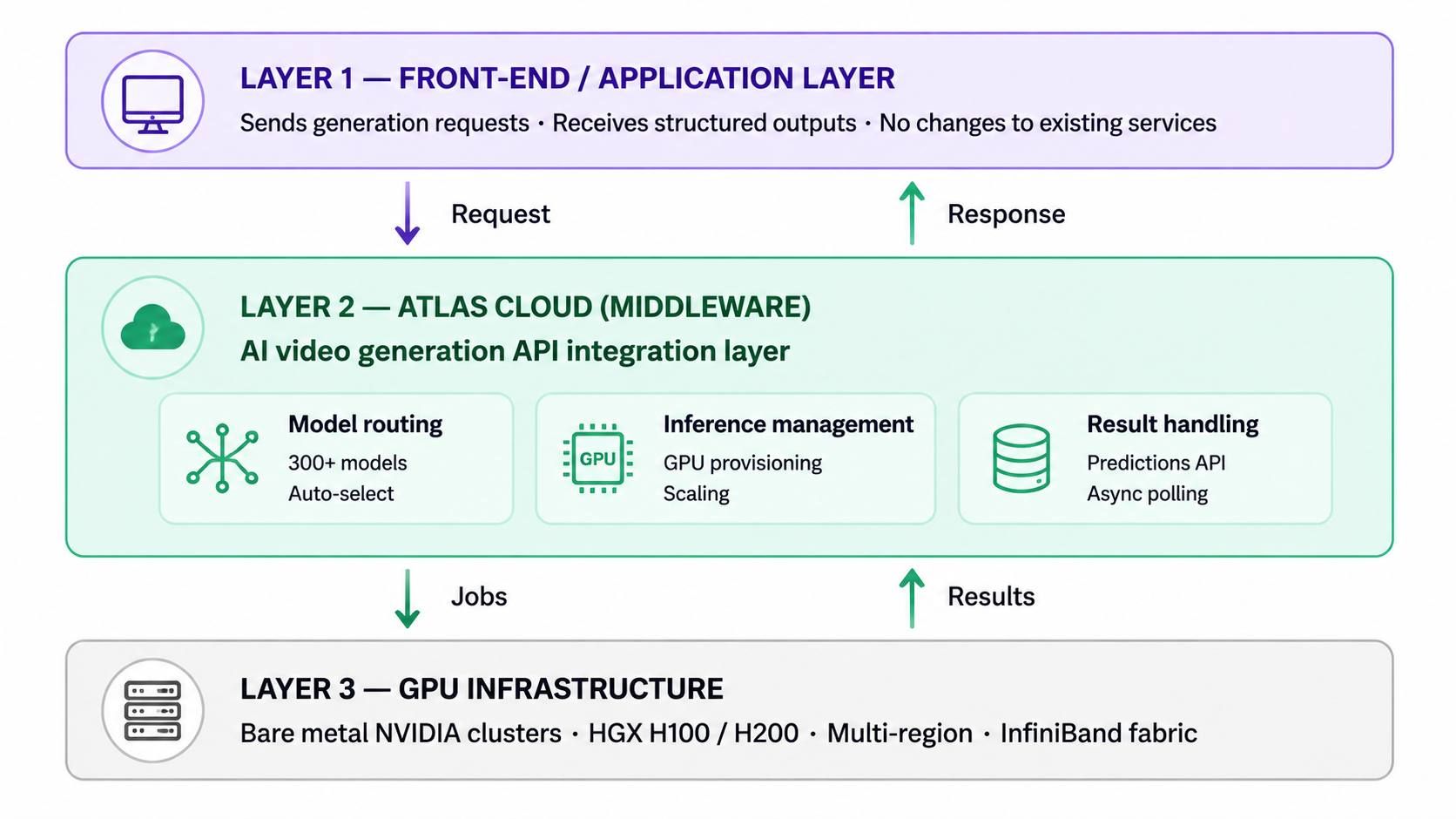

Think of Atlas Cloud as a smart bridge rather than a replacement for your infrastructure. It sits between your main app and the heavy lifting of AI processing. When your front end makes a request, Atlas Cloud handles the routing and model execution, then sends back a clean response while your internal services stay unaware of the complex work happening behind the scenes.

This middleware pattern is what makes AI video generation API integration practical for teams with established pipelines. Rather than dismantling a working architecture, you insert Atlas Cloud at the processing layer. It handles:

- Model routing — directing requests across 300+ AI models, including those powering your AI video workflow

- Inference management — abstracting GPU provisioning and scaling behind a single endpoint

- Result handling — returning generation outputs in consistent, predictable formats via its Predictions API

Compatibility Layer: Meeting Your Stack Where It Is

Legacy system integration often stalls because new tools demand new toolchains. Atlas Cloud sidesteps this by offering:

| Integration Surface | Details |
| --- | --- |
| API style | RESTful, OpenAI-compatible endpoint |
| SDK support | Python, Node.js, and any HTTP client |
| Auth | Standard API key-based authentication |
| Model scope | LLM, Image & Video Generation APIs under one key |

The OpenAI-compatible design is particularly useful—teams already using the OpenAI SDK can switch base URLs and get access to Atlas Cloud's full model catalog, including video generation and image generation models, with minimal code changes.
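As a rough illustration of what "switching base URLs" means in practice, the sketch below builds the same OpenAI-style request against two providers using only the standard library. It assumes the Atlas Cloud endpoint exposes the conventional `/chat/completions` route under its base URL, which should be checked against the provider docs; `build_chat_request` and the `example-model` ID are hypothetical names used for illustration only.

```python
import json
import urllib.request

# Assumption: both providers serve the OpenAI-style /chat/completions route
# under their base URL. Confirm the exact path in the provider documentation.
OPENAI_BASE_URL = "https://api.openai.com/v1"
ATLAS_BASE_URL = "https://api.atlascloud.ai/v1"  # base URL listed in this guide


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The request body is identical; only the base URL and key differ.
legacy_req = build_chat_request(OPENAI_BASE_URL, "legacy-key", "example-model", "hello")
atlas_req = build_chat_request(ATLAS_BASE_URL, "atlas-key", "example-model", "hello")
```

Teams using the official OpenAI Python client would typically get the same effect by passing a different `base_url` and `api_key` when constructing the client, leaving the call sites untouched.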

Legacy pipeline vs. multimodal AI video workflow:

| Dimension | Legacy pipeline | Multimodal AI workflow (Atlas Cloud) |
| --- | --- | --- |
| Processing model | Linear: ingest → transcode → deliver. Each stage waits for the previous to complete. | Parallel multi-step: text prompt, image conditioning, and generation chains handled in a single request lifecycle. |
| Latency profile | Predictable but slow. Transcoding is bounded; generative tasks are not supported natively. | Variable per model, but managed via async polling. P50/P95 variance is tighter with dedicated endpoints. |
| Schema flexibility | Proprietary internal schemas. New model integrations require full adapter rewrites. | OpenAI-compatible REST. Swap base URL; existing SDK calls and auth middleware carry over unchanged. |
| GPU dependency | Self-managed spot instances. Shortages cause queue spikes during traffic peaks or launches. | Abstracted behind a single endpoint. Scales 0 → 800 GPUs automatically; no manual provisioning. |
| Cost model | Always-on provisioning. Teams over-provision to avoid throttling, paying for idle capacity. | Per-request billing on serverless tier. Dedicated endpoints for high-volume workloads with predictable pricing. |
| Migration effort | n/a | 3-step: auth sync → payload mapping → async polling. No downtime required; runs parallel to existing stack. |

3-Step Video Pipeline Migration Guide: Zero-Downtime Connection

Switching APIs doesn't have to mean a service freeze. This video pipeline migration guide walks through a practical three-step approach to wiring Atlas Cloud into a live stack without pulling the plug on what's already running.
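One common way to run a new provider in parallel with a live stack is a percentage-based rollout. The sketch below is one possible approach, not something Atlas Cloud prescribes: it hashes a job ID into a stable bucket so the same job always takes the same path, and raising the percentage only ever adds jobs to the new path. The function name `route_to_atlas` and the job ID format are hypothetical.

```python
import hashlib


def route_to_atlas(job_id: str, rollout_percent: int) -> bool:
    """Deterministically send a fixed share of jobs to the new provider."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(job_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < rollout_percent


# Start with a small share of traffic and widen it as confidence grows.
jobs = [f"job-{n}" for n in range(1000)]
share_at_10 = sum(route_to_atlas(j, 10) for j in jobs)
share_at_50 = sum(route_to_atlas(j, 50) for j in jobs)
```

Because routing depends only on the job ID, a retry of the same job never flips between providers mid-flight, and rolling back is a matter of setting the percentage to 0.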

Step 1: Authentication & Environment Sync

Atlas Cloud authenticates every request via a Bearer token passed in the Authorization header—the same pattern used across most modern REST APIs, which means your existing auth middleware likely needs zero changes.

The secure setup checklist:

| Task | Recommendation |
| --- | --- |
| Store the API key | Use environment variables (`ATLAS_API_KEY`), never hardcode |
| Header format | `Authorization: Bearer <your_api_key>` |
| Base URL | `https://api.atlascloud.ai/v1` |
| Key rotation | Generate new keys from the Atlas Cloud dashboard without touching code |

Keep your key out of version control. A .env file with a .gitignore entry is the minimum bar; secrets managers are preferable in production.
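The checklist above can be sketched as a small helper that reads the key from the environment and fails fast when it is missing. This is a minimal sketch under the assumptions already stated in the table (`ATLAS_API_KEY` as the variable name, Bearer header format); the function name `atlas_auth_headers` is hypothetical.

```python
import os
from typing import Mapping, Optional

ATLAS_BASE_URL = "https://api.atlascloud.ai/v1"  # base URL from the checklist


def atlas_auth_headers(env: Optional[Mapping[str, str]] = None) -> dict:
    """Return request headers built from ATLAS_API_KEY, or fail fast if unset."""
    env = os.environ if env is None else env
    api_key = env.get("ATLAS_API_KEY", "").strip()
    if not api_key:
        # Failing at startup is safer than sending unauthenticated requests.
        raise RuntimeError("ATLAS_API_KEY is not set")
    return {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }


# Passing a mapping explicitly keeps the helper easy to test.
headers = atlas_auth_headers({"ATLAS_API_KEY": "example-key"})
```

Since the key is read at call time, rotating it only requires updating the environment or secrets manager and restarting the process.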

Step 2: Mapping Data Payloads

Each model in Atlas Cloud's catalog, whether you're targeting its Image & Video Generation APIs or an LLM, accepts a model field that identifies the target by its full model ID (e.g., kling-video/v1.6/standard/image-to-video). This is where legacy system integration teams spend the most time: translating proprietary internal JSON schemas into the format each model expects.

A practical mapping approach:

- Audit your existing payload — identify fields like input_url, resolution, duration, and prompt that need renaming or restructuring.

- Reference the model's parameter spec in the Model APIs docs before writing any transformation logic.

- Write a thin adapter function that takes your internal schema and outputs the Atlas Cloud-compatible body—keeping the transformation isolated makes it easy to update when model versions change.
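The adapter described above might look something like the following. The internal field names come from the audit list; the target field names (`image`, `prompt`, `duration`, `resolution`) are hypothetical placeholders, since each model's real parameter spec has to be taken from the Model APIs docs.

```python
from typing import Any

# Hypothetical rename table: internal field -> provider field.
# Replace the right-hand side with the names from the model's parameter spec.
FIELD_MAP = {
    "input_url": "image",
    "prompt": "prompt",
    "duration": "duration",
    "resolution": "resolution",
}


def to_atlas_body(internal: dict[str, Any], model_id: str) -> dict[str, Any]:
    """Translate an internal job payload into a provider request body."""
    unknown = set(internal) - set(FIELD_MAP)
    if unknown:
        # Surface unmapped fields early instead of silently dropping them.
        raise ValueError(f"unmapped fields: {sorted(unknown)}")
    body: dict[str, Any] = {"model": model_id}
    for src, dst in FIELD_MAP.items():
        if internal.get(src) is not None:
            body[dst] = internal[src]
    return body


body = to_atlas_body(
    {"input_url": "https://example.com/frame.png", "prompt": "slow pan", "duration": 5},
    model_id="kling-video/v1.6/standard/image-to-video",
)
```

Keeping the rename table as data rather than logic means a model version change is usually a one-line edit.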

Step 3: Asynchronous Result Polling

Video generation is not instant. Submitting a request returns a request_id; your app then polls GET /api/v1/model/result/{request_id} until the status field resolves to a completed state and the outputs array is populated.

To keep your application non-blocking during an AI video workflow render:

- Submit the generation request and store the returned request_id.

- Queue a background job (e.g., via a task queue like Celery or BullMQ) to poll the result endpoint at a sensible interval.

- Trigger downstream logic only when status confirms completion—then pass outputs to your delivery pipeline.

This decouples render time from your API response latency, keeping the user-facing layer responsive throughout.
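The polling loop itself can be sketched independently of any task queue. In the version below, `fetch_status` is an injected callable that would wrap the `GET` on the result endpoint; the status strings (`completed`, `failed`) and the backoff settings are assumptions for illustration, so check the actual status values in the API docs.

```python
import time
from typing import Callable

DONE_STATES = {"completed"}   # assumed name for the success state
FAILED_STATES = {"failed"}    # assumed name for the failure state


def poll_result(
    fetch_status: Callable[[str], dict],
    request_id: str,
    interval_s: float = 2.0,
    max_interval_s: float = 30.0,
    timeout_s: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> list:
    """Poll until outputs are ready, doubling the wait between attempts."""
    deadline = time.monotonic() + timeout_s
    delay = interval_s
    while True:
        result = fetch_status(request_id)
        status = result.get("status")
        if status in DONE_STATES and result.get("outputs"):
            return result["outputs"]
        if status in FAILED_STATES:
            raise RuntimeError(f"generation {request_id} failed")
        if time.monotonic() + delay > deadline:
            raise TimeoutError(f"generation {request_id} did not finish in time")
        sleep(delay)
        delay = min(delay * 2, max_interval_s)  # exponential backoff with a cap
```

Inside a Celery or BullMQ worker, the same logic is often expressed as a task that re-enqueues itself with a delay instead of sleeping, which frees the worker between attempts.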

Solving Cold Starts and Latency — the Hidden Driver of AI Inference Cost Reduction

Two things kill stakeholder confidence in a new AI video workflow faster than anything: slow first-response times and unpredictable render performance. Addressing them is also central to any serious AI inference cost reduction strategy — because latency variance forces over-provisioning, which drives up spend.

Edge Processing vs. Cloud Centralization

Latency in AI inference is often a geography problem as much as a hardware one. The further your request travels to reach a GPU, the slower your pipeline feels—regardless of how powerful the model is.

Atlas Cloud operates bare metal GPU clusters across multiple regions, giving teams the option to route workloads closer to their users or data sources:

| GPU Model | Location | QTY | Pricing ($/GPU/hour) | Network |
| --- | --- | --- | --- | --- |
| H100 | EU | 200 | $1.95 | IB |
| H100 | Singapore | 32 | $2.10 | IB |
| H100 | US | 16 | $2.10 | IB |
| H200 | US | 128 | $2.35 | RoCE |
| H200 | Japan | 8 | $2.40 | IB |
| H200 | EU | 16 | $2.40 | IB |
| H200 | Singapore | 8 | $2.40 | IB |
| H200 | US | 8 | $2.40 | IB |
| GB200 | Malaysia | 8 | $4.50 | IB |
| A100 | US | 64 | $1.35 | / |

Source: Atlas Cloud Bare Metal
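An hourly GPU rate only becomes a per-render cost once utilization is factored in, because idle hours are still billed on always-on hardware. The arithmetic can be sketched as follows, using the $1.95/hour H100 rate from the table; the 60 GPU-seconds per render and the 25% utilization figure are made-up inputs for illustration, not measurements.

```python
def cost_per_render(hourly_rate_usd: float, gpu_seconds_per_render: float, utilization: float) -> float:
    """Effective cost of one render on always-on hardware.

    utilization is the fraction of billed GPU time spent on real work (0 < u <= 1).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    busy_cost = hourly_rate_usd * gpu_seconds_per_render / 3600
    return busy_cost / utilization  # idle time is spread across the renders done


# Hypothetical workload: 60 GPU-seconds per render on an H100 at $1.95/hour.
fully_used = cost_per_render(1.95, 60, utilization=1.0)     # about $0.0325
quarter_used = cost_per_render(1.95, 60, utilization=0.25)  # about $0.13
```

At 25% utilization the same render costs four times as much, which is why idle capacity matters as much as the headline rate when comparing against per-request billing.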

Unlike virtualized cloud environments, bare metal instances give your AI video workflow direct access to NVIDIA hardware—no hypervisor overhead eating into inference throughput. Atlas's HGX H100 and H200 clusters use a rail-optimized InfiniBand design specifically to minimize inter-node latency during parallel generation tasks.

For teams on the serverless tier, Atlas Cloud's Dedicated Endpoint scales from 0 to 800 GPUs in seconds and claims a 90% reduction in cold start times compared to standard serverless deployments—addressing the most common latency complaint during traffic spikes.

Benchmarking Performance: What to Measure Before You Commit

No vendor benchmark replaces your own workload test. When stress-testing Atlas Cloud API integration against your current Image & Video Generation APIs, focus on six metrics:

| Metric | Why it matters | Target threshold | Signal to watch |
| --- | --- | --- | --- |
| P50 render time | Median experience for the majority of requests; your baseline user expectation. | ≤ 8 s for a 15 s clip | If P50 is already above target, the architecture won't recover at scale. |
| P95 render time | Variance is the real cost driver. Unpredictable tail latency forces over-provisioning. | ≤ 2x P50 | A P50 of 8 s with a P95 of 45 s is a worse pipeline than a P50 of 12 s with a P95 of 14 s. |
| Cold start latency | First-request delay after idle periods; the primary UX complaint during traffic spikes. | ≤ 3 s to first token | Compare dedicated endpoint vs. serverless tier. Atlas Cloud claims 90% reduction vs. standard serverless. |
| Error rate under load | Rate limits and GPU shortages surface as errors, not just slowness, at production volume. | < 0.5% at peak RPS | Stress-test at 2x expected peak. Any > 1% error rate indicates inadequate burst headroom. |
| Output consistency | Generative models can drift in resolution, format, or artefact rate across identical prompts. | 100% spec-compliant format | Log resolution, codec, and file-size variance across 50+ identical runs. Flag outliers > ±10%. |
| Cost per render | The unit economics that determine whether the integration pays for itself at scale. | Track vs. current provider | Compare total cost including idle GPU time, not just per-request price. Atlas Cloud: per-request billing on serverless tier. |

Run parallel tests: send the exact same prompts to your current setup and to Atlas Cloud at the same time, then compare render speed, output quality, and failure rate. Most teams find the biggest win is reliability rather than raw speed: a steady 8-second wait is preferable to never knowing whether a task will take 3 or 25 seconds.
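To turn side-by-side timings into the P50 and P95 figures from the table, a small summary helper is usually enough. The sketch below uses the nearest-rank percentile method and the "P95 ≤ 2x P50" target from the table; the sample timings are invented to show the contrast between a spiky and a steady pipeline.

```python
import math


def percentile(values: list, pct: int) -> float:
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(render_times_s: list, max_tail_ratio: float = 2.0) -> dict:
    """Report P50, P95, and whether the tail stays within the target ratio."""
    p50 = percentile(render_times_s, 50)
    p95 = percentile(render_times_s, 95)
    return {
        "p50": p50,
        "p95": p95,
        "tail_ratio": p95 / p50,
        "within_target": p95 <= max_tail_ratio * p50,
    }


# Invented timings in seconds: one spiky pipeline, one steady pipeline.
spiky = summarize([3, 4, 5, 6, 7, 8, 9, 10, 20, 25])
steady = summarize([8, 8, 8, 8, 8, 9, 9, 9, 9, 10])
```

Here the spiky pipeline has the lower median but fails the tail target, while the steady one passes it, which is the trade-off the table describes.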

Real-World Integration Scenarios

The architecture discussions above become concrete when you map them to the actual systems most teams are already running. The two scenarios below are representative integration patterns—not specific customer case studies—built on Atlas Cloud's verified capabilities.

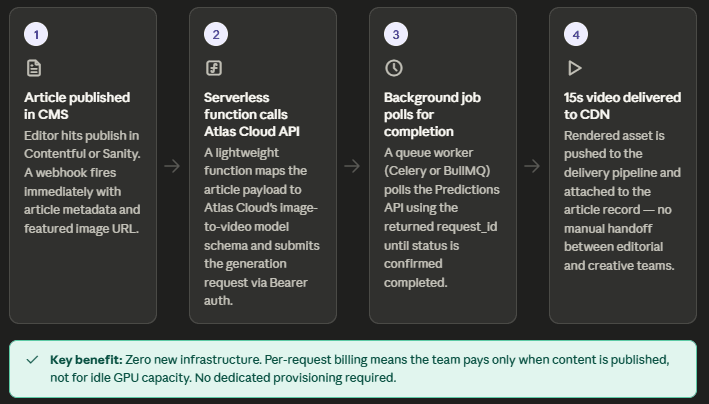

Scenario A — The Creative Suite: CMS-Triggered Social Video Previews

The setup: A digital media group uses a headless CMS like Contentful or Sanity to post their stories. Every new article needs a 15-second social media video to go with it. Making these videos by hand is way too slow. It creates a massive logjam between the writers and the social media team.

How Atlas Cloud API integration fits in:

| Pipeline Stage | Tool / System | Atlas Cloud Role |
| --- | --- | --- |
| Publish trigger | CMS webhook | Receives POST event with article metadata |
| Prompt construction | Internal middleware | Assembles text prompt from title + thumbnail URL |
| Video generation | Atlas Cloud Video API | Calls models like Kling or Hailuo via unified endpoint |
| Result delivery | CMS asset field | Polls `GET /api/v1/model/result/{request_id}` and writes output URL back |

Because Atlas Cloud's Image & Video Generation APIs accept standard REST calls with Bearer auth, the CMS integration requires only a lightweight serverless function—no new infrastructure, no dedicated GPU procurement. The per-request billing model also means the team pays only when content is published, not for idle capacity.

Key benefit for this use case: Automated AI video workflow from publish event to rendered asset, with no manual handoff between editorial and creative teams.
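The prompt construction stage in this scenario could be sketched as a pure function that the serverless handler calls when the CMS webhook fires. The webhook field names (`title`, `thumbnail_url`) and the request body fields (`prompt`, `image`, `duration`) are hypothetical; real CMS payloads and model parameter specs will differ.

```python
def build_preview_job(event: dict, model_id: str, duration_s: int = 15) -> dict:
    """Turn a CMS publish event into a video generation request body."""
    title = (event.get("title") or "").strip()
    if not title:
        raise ValueError("article title is required")
    body = {
        "model": model_id,
        "prompt": f"A {duration_s}-second social preview video for the article: {title}",
        "duration": duration_s,
    }
    thumbnail = event.get("thumbnail_url")
    if thumbnail:
        body["image"] = thumbnail  # optional image conditioning
    return body


job = build_preview_job(
    {"title": "City Council Approves New Bike Lanes", "thumbnail_url": "https://example.com/t.jpg"},
    model_id="kling-video/v1.6/standard/image-to-video",
)
```

Keeping this step free of network calls makes it straightforward to unit-test the prompt wording separately from the submit-and-poll logic.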

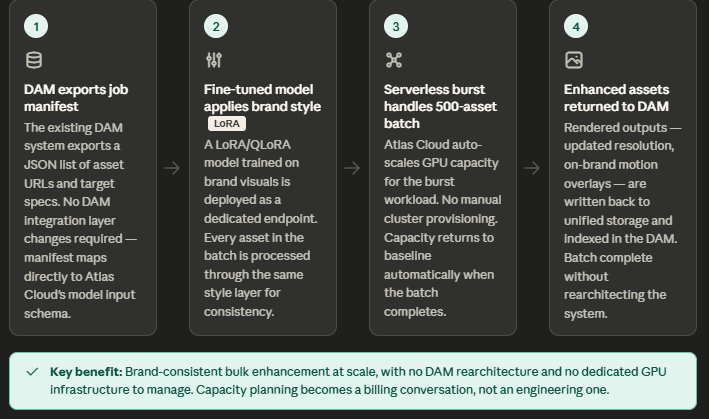

Scenario B — The Enterprise Sandbox: DAM Bulk Video Enhancement

The setup: A large brand's Digital Asset Management system holds thousands of existing product videos—many at outdated resolutions or missing on-brand motion overlays. The task is enhancing and re-rendering them at scale without rebuilding the DAM integration layer.

How Atlas Cloud fits in:

- Legacy system integration is preserved: the DAM exports a job manifest (JSON list of asset URLs and target specs) that maps directly to Atlas Cloud's model input schema.

- Fine-tuned models via LoRA/QLoRA can be trained on brand-specific visual styles and deployed as dedicated inference endpoints—keeping outputs consistent across thousands of assets (Atlas Cloud Fine-Tuning).

- Serverless scaling handles burst workloads: a 500-asset batch job can scale to the required GPU capacity automatically without manual cluster provisioning.

- Unified storage keeps fine-tuned model weights, input assets, and rendered outputs accessible across the entire pipeline from a single location.

Key benefit for this use case: Brand-consistent bulk video enhancement at scale, without rearchitecting the DAM system or managing dedicated GPU infrastructure.
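For the bulk case, the DAM manifest can be turned into request bodies and submitted in bounded batches. The sketch below assumes a manifest shaped as a JSON list with `asset_url` and `target_resolution` keys, and a placeholder model ID; all of these names are hypothetical and would need to match the real DAM export and model spec.

```python
import json
from itertools import islice
from typing import Iterable, Iterator


def jobs_from_manifest(manifest_json: str, model_id: str) -> Iterator[dict]:
    """Yield one request body per asset listed in the DAM manifest."""
    for entry in json.loads(manifest_json):
        yield {
            "model": model_id,
            "video": entry["asset_url"],               # hypothetical field name
            "resolution": entry["target_resolution"],  # hypothetical field name
        }


def batched(jobs: Iterable[dict], size: int) -> Iterator[list]:
    """Group jobs into lists of at most `size` to cap in-flight requests."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(jobs)
    while batch := list(islice(iterator, size)):
        yield batch


manifest = json.dumps(
    [{"asset_url": f"https://example.com/a{n}.mp4", "target_resolution": "1080p"} for n in range(5)]
)
batches = list(batched(jobs_from_manifest(manifest, "example/video-enhance"), size=2))
```

Each batch can then be submitted and polled before the next one starts, which keeps a 500-asset job from exceeding whatever rate limit applies to the account.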

Future-Proofing: Privacy and Scalability

Privacy by Design

For teams handling sensitive assets in their AI video workflow, Atlas Cloud is built with compliance at the infrastructure level—not bolted on afterward. The platform holds SOC I & II certification and HIPAA compliance across all tiers, with fine-tuning pipelines described as "secure, fully managed."

For legacy system integration in regulated industries, this removes a common blocker: proving to security teams that a new API vendor meets existing data governance standards without requiring custom audits.

Scaling Up Without Manual Intervention

Volume growth is where many Image & Video Generation APIs quietly break down. Atlas Cloud's Dedicated Endpoint addresses this directly:

| Scale Trigger | Atlas Cloud Response |
| --- | --- |
| Traffic spike | Scales 0 → 800 GPUs in seconds |
| Cold start | 90% reduction vs. standard serverless |
| Billing model | Per-request only, no idle GPU costs |

There are no manual infrastructure adjustments required between 10 requests and 10,000. The same Atlas Cloud API integration handles both, making capacity planning a billing conversation rather than an engineering one.