OpenAI LLM Models

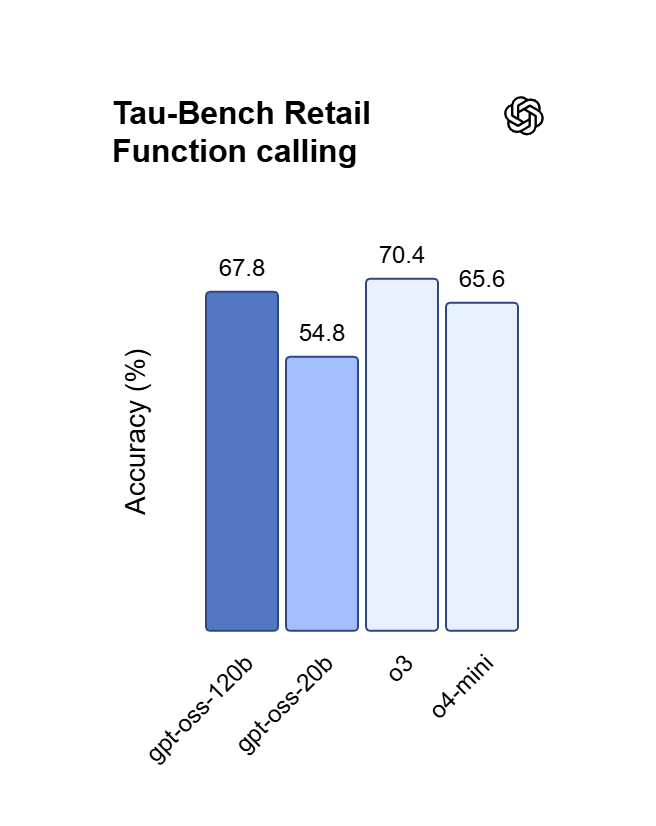

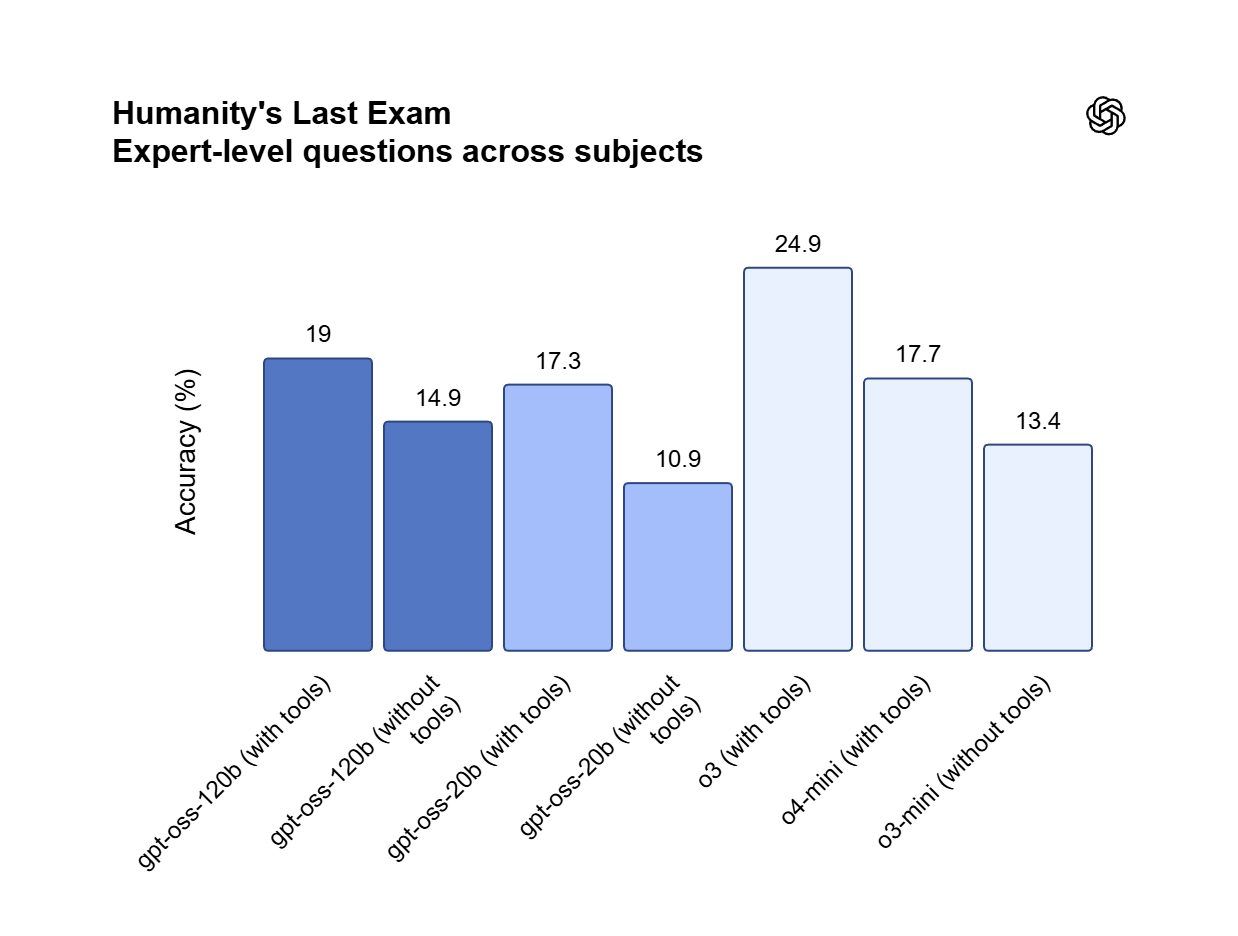

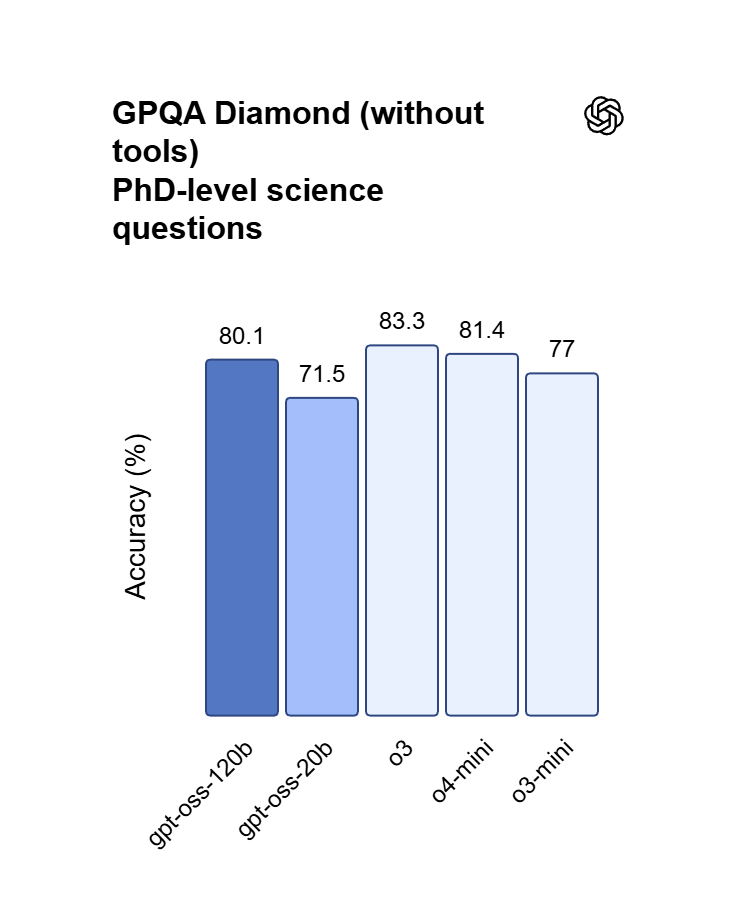

OpenAI’s premier GPT model family leads the industry, highlighted by GPT OSS 120B, which achieves near-parity with OpenAI o4-mini on core reasoning benchmarks while running efficiently on a single 80 GB GPU. Optimized for vibecoding and complex logic operations, this model balances top-tier intelligence with hardware accessibility for modern developers and AI-driven web development.

Explore the Leading OpenAI LLM Models

Atlas Cloud provides you with the latest industry-leading creative models.

What Makes OpenAI LLM Models Stand Out


Frontier Research

Cutting-edge models that set global benchmarks in reasoning, multimodality, and AI safety.

Cost-Efficient Performance

Optimized families like GPT-4.1 mini and GPT-5 nano balance accuracy, speed, and cost.

Developer Ecosystem

APIs powering millions of daily requests across diverse platforms and industries.

Flexible Model Sizes

Choice of flagship, mini, and nano models for every workload and budget.

Enterprise Reliability

SLAs, monitoring, and compliance-ready logging trusted by Fortune 500 companies.

Open Model Options

Access to open-source models (gpt-oss-20b, gpt-oss-120b) for transparency and customization.


| Model | Description |
|---|---|
| GPT OSS 120B | GPT OSS 120B is a high-performance reasoning-centric LLM, integrating optimized architecture with robust 131.07K context processing capabilities; attaining near-parity with OpenAI o4-mini on a single 80 GB GPU, it serves as the engine for rapid iterative development, including vibecoding and executing complex logic-driven workflows. |

New features of OpenAI LLM Models + Showcase

Combining advanced models with Atlas Cloud's GPU-accelerated platform delivers speed, scalability, and fine-grained control for reasoning, coding, and text generation workloads.

Precise Instruction Compliance via GPT OSS 120B

GPT OSS 120B exhibits exceptional steerability, strictly adhering to complex system prompts to ensure absolute output reliability. By leveraging its fine-tuned alignment architecture, users can enforce specific formats, constraints, and stylistic nuances with zero character drift. It is the definitive choice for autonomous agents, structured data extraction, and mission-critical production environments.

Commercial Sovereignty under Apache 2.0 License

GPT OSS 120B is distributed under the Apache 2.0 license, permitting unrestricted commercial usage and private fine-tuning without per-token fees. Unlike closed-source APIs, it allows for local hosting on a single 80 GB GPU to keep sensitive proprietary data fully on-premises. This framework provides the legal and technical freedom to build, modify, and scale AI-driven software stacks.

High-Efficiency Logic and Vibecoding using GPT OSS 120B

Achieving near-parity with OpenAI o4-mini, this 120B parameter model excels at handling complex code synthesis and mathematical proofs. Developers can leverage its reasoning engine for "vibe coding"—translating natural language ideas directly into functional web applications through iterative prompting. It is a high-speed solution for debugging nested logic and orchestrating sophisticated task-scheduling workflows.

What You Can Do with OpenAI LLM Models

Discover practical use cases and workflows you can build with this model family — from content creation and automation to production-grade applications.

Deep Logic Debugging and Prototyping with the GPT OSS 120B

The GPT OSS 120B enables engineers to solve "vibecoding" challenges by translating high-level architectural ideas into production-ready Python or React components. Its reasoning engine handles the nested dependencies and edge cases that often trip up mini-models, ensuring multi-step code synthesis remains functional. Supporting algorithmic proofs and complex task scheduling, it is the perfect tool for building technical MVPs, automated QA scripts, and data-intensive web applications.

Offline Proprietary Tooling Using the GPT OSS 120B

Under the Apache 2.0 license, teams can host GPT OSS 120B on a single 80 GB GPU to process sensitive internal data without cloud-leakage risks. This setup allows for permanent local fine-tuning on niche internal codebases or medical logs without recurring per-token API costs. Ideal for high-security internal tools and offline AI assistance, the model provides full weight sovereignty—supporting private RAG systems and customized proprietary software stacks.
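As a rough back-of-the-envelope check on the single-GPU claim, the sketch below estimates how much memory the weights alone would need at different precisions. The 120e9 parameter count is read off the model name, and the precisions listed are illustrative assumptions; this page does not state which precision the released weights use, and the estimate ignores the KV cache and activations.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for model weights only, in gigabytes (10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 120e9  # nominal count taken from the model name (assumption)
GPU_GB = 80     # single-GPU memory figure quoted on this page

# Illustrative precisions only; not a statement about the shipped checkpoint.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    gb = weight_memory_gb(PARAMS, bits)
    verdict = "fits" if gb < GPU_GB else "does not fit"
    print(f"{label}: ~{gb:.0f} GB of weights, {verdict} in {GPU_GB} GB")
```

Under these assumptions, only weights stored at roughly 4 bits per parameter leave headroom on an 80 GB card, which is why single-GPU hosting of a model this size typically relies on low-precision weights.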

Schema-Perfect Data Extraction with the GPT OSS 120B

The GPT OSS 120B enables developers to convert messy, unstructured documents into strictly formatted JSON or Markdown without "instruction drift." By anchoring the 131.07K context window with rigid system rules, the model ensures fields are never hallucinated or skipped during long-form processing. Ideal for CRM automation and automated content tagging, it maintains logical guardrails across massive datasets—supporting reliable API integrations and database population.
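A minimal sketch of how an application might check extracted JSON before writing it to a CRM or database. The field names and the `validate_extraction` helper are hypothetical, chosen only for illustration; the check uses the Python standard library and is independent of any particular model or provider.

```python
import json

# Hypothetical schema for illustration: field name -> expected Python type.
REQUIRED_FIELDS = {"name": str, "email": str, "tags": list}


def validate_extraction(raw: str) -> dict:
    """Parse model output as JSON and check it against REQUIRED_FIELDS.

    Raises ValueError on invalid JSON, missing or unexpected fields,
    or a field whose value has the wrong type.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS.keys() - data.keys()
    extra = data.keys() - REQUIRED_FIELDS.keys()
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for field, expected in REQUIRED_FIELDS.items():
        if not isinstance(data[field], expected):
            raise ValueError(f"{field!r} should be {expected.__name__}")
    return data
```

A caller would pass the model's raw text output to `validate_extraction` and retry or flag the record when it raises, so malformed records never reach the downstream system.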

Model Comparison

See how models from different providers stack up — compare performance, pricing, and unique strengths to make an informed decision.

| Model | Context | Max Output | Input | Positioning |
|---|---|---|---|---|
| GPT OSS 120B | 131.07K | 131.07K | Text | High-Efficiency Reasoning LLM |
| GLM-5 | 202.75K | 202.75K | Text | Flagship Foundation Model |
| DeepSeek V3.2 | 163.84K | 163.84K | Text | Flagship General |
| MiniMax-M2.5 | 204.8K | 196.6K | Text | SOTA Agentic Coding |

How to Use OpenAI LLM Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud's platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.
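Once an account and API key exist, a request might be assembled along the following lines. This is a sketch under stated assumptions, not Atlas Cloud's documented API: the endpoint URL is a placeholder, the chat-completions-style JSON body is assumed rather than confirmed by this page, and the model identifier is the name used on this page, which may differ from the platform's actual ID. The code only builds the request and does not send it.

```python
import json
import urllib.request

# Placeholders: replace with the values from your account and the platform docs.
API_URL = "https://api.example.com/v1/chat/completions"  # hypothetical endpoint
MODEL_ID = "gpt-oss-120b"  # name from this page; the platform's ID may differ


def build_chat_request(api_key: str, system: str, user: str) -> urllib.request.Request:
    """Build (but do not send) a POST request with a system and a user message."""
    payload = {
        "model": MODEL_ID,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
```

Sending the request would be a call to `urllib.request.urlopen(request)` once the placeholder URL and model ID are replaced with real values.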

Why Use OpenAI LLM Models on Atlas Cloud

Combining the advanced OpenAI LLM Models with Atlas Cloud's GPU-accelerated platform provides unmatched performance, scalability, and developer experience.

Performance & flexibility

Low Latency:

GPU-optimized inference for real-time reasoning.

Unified API:

Run OpenAI LLM Models, GPT, Gemini, and DeepSeek with one integration.

Transparent Pricing:

Predictable per-token billing with serverless options.

Enterprise & Scale

Developer Experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance-ready logging.

Security & Compliance:

SOC 2 Type II, HIPAA alignment, data sovereignty in the US.

Frequently Asked Questions about OpenAI LLM Models

It achieves near-parity with OpenAI o4-mini on core reasoning and math benchmarks. While o4-mini is a closed API, OSS 120B offers comparable logic depth with the added benefit of full model weight access.

The model is optimized for a single 80 GB GPU, avoiding multi-node complexity. However, for instant scalability and zero maintenance, we recommend accessing it via API on Atlas Cloud.

Yes. It is released under the Apache 2.0 license, which permits unrestricted commercial usage, modification, and distribution without per-token licensing fees or vendor lock-in.

The 131.07K context window is designed for "needle-in-a-haystack" retrieval accuracy. It can ingest entire project directories or 100+ page technical manuals while maintaining logical consistency across the entire input.
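To make the context figure concrete, the sketch below checks whether a document is likely to fit. It reads 131.07K as 131,072 tokens (an assumption based on the rounded figure), and it uses a crude four-characters-per-token heuristic rather than a real tokenizer, so treat the result as an estimate only.

```python
CONTEXT_WINDOW = 131_072  # 131.07K read as 2**17 tokens (assumption)
CHARS_PER_TOKEN = 4       # rough heuristic for English text (assumption)


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, reserved_for_output: int = 4_096) -> bool:
    """Return True if the estimated input plus an output budget fits the window."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW
```

For accurate budgeting, swap `estimate_tokens` for a count from the model's actual tokenizer; the reserved output budget matters because input and output share the same window.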

Extremely. Its reasoning engine is fine-tuned for iterative code synthesis. It handles nested React components and complex Python backends more reliably than standard 70B-class models, making it ideal for natural-language-to-app workflows.

Explore More Families

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 solves critical issues with character consistency and physical coherence, empowering creators to act as true "directors" with deep control over their output.

GPT Image 2 Models

GPT Image 2 is a state-of-the-art multimodal foundation model engineered for exceptional text-to-image generation with unprecedented photorealism and creative versatility. Developed by OpenAI as the evolution of the DALL-E lineage, it transforms detailed natural language descriptions into hyper-realistic imagery at up to 4K resolution. With proprietary "Neural Rendering Engine" technology for precise visual control, GPT Image 2 delivers studio-quality results with accurate anatomy, lighting, and composition—making it the premier AI tool for professional creators, enterprises, and developers demanding production-ready visual assets.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

ERNIE Image Models

ERNIE-Image is an open-weight text-to-image model developed by the ERNIE-Image Team at Baidu, built on a single-stream Diffusion Transformer (DiT) with 8B parameters and paired with a lightweight Prompt Enhancer that rewrites short prompts into richer, more structured descriptions before passing them to the diffusion backbone. Released on April 15, 2026 under the Apache 2.0 license, it transforms natural language descriptions into detailed imagery with particular strength in text rendering and structured layout generation. ERNIE-Image is designed not only for strong visual quality, but for controllability in practical generation scenarios where accurate content realization matters as much as aesthetics, making it well-suited for commercial posters, comics, multi-panel layouts, and other content creation tasks that require both visual quality and precise control.

Happy Horse 1.0

HappyHorse-1.0 is a unified multimodal AI video generation model that climbed to the top of the Artificial Analysis Video Arena blind-test leaderboard for both text-to-video and image-to-video generation. Alibaba Group confirmed ownership of HappyHorse, developed under its Alibaba Token Hub (ATH) business unit, where it leads benchmarks, outperforming ByteDance's Seedance 2.0 and others. Led by Zhang Di, the former VP of Kuaishou who architected Kling AI, the 15-billion parameter model generates 1080p video with synchronized audio in a single pass using a unified transformer architecture that bypasses the multi-stage pipelines used by every major competitor.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances lightning-fast rendering with exceptional visual quality. With an improved price-performance ratio, it achieves breakthrough micro-detail depiction, accurate native text rendering, and complex physical structure reconstruction. It serves as a highly efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models—Kling 3.0 (Upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, Upgraded from Kling O1)—both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.