Nano Banana2 Models

Nano Banana 2 (by Google) is a generative image model that balances fast rendering with high visual quality. With an improved price-performance ratio, it delivers fine micro-detail, accurate native text rendering, and reconstruction of complex physical structures. It serves as an efficient, commercial-grade visual production tool for developers, marketing teams, and content creators.

Explore Leading Models

Atlas Cloud provides the latest industry-leading creative models.

Peak speed

Lowest cost

| Model | Description |
|---|---|
| Nano Banana 2 T2I API (Text to Image) | The Nano Banana 2 Text to Image API lets developers turn text prompts into cinematic-grade visuals at native 4K. Using advanced scene-control logic, it generates fine detail and complex multi-character compositions for high-concurrency creative workflows. |
| Nano Banana 2 Edit API (Image to Image) | The Nano Banana 2 Edit API lets developers refine or reimagine existing images while preserving consistency. Using guided diffusion, it produces precise style transfers and structural modifications for professional asset iteration and marketing design. |
| Nano Banana 2 T2I Developer API (Text to Image Developer) | The Nano Banana 2 Text to Image Developer API offers the same cinematic-grade 4K image generation. It keeps the full creative logic for complex compositions at a lower cost, but it is less stable. |
| Nano Banana 2 Edit Developer API (Image to Image Developer) | The Nano Banana 2 Edit Developer API offers high-fidelity style transfers and structural modifications at a reduced cost. It provides the same professional asset iteration as the standard version, although users may see response stability fluctuate under peak load. |

New Features of Nano Banana2 Models + Showcase

Combining advanced models with Atlas Cloud’s GPU-accelerated platform delivers speed, scalability, and creative control in image and video generation.

Native 4K Resolution with Extreme Detail Using the Nano Banana 2 API

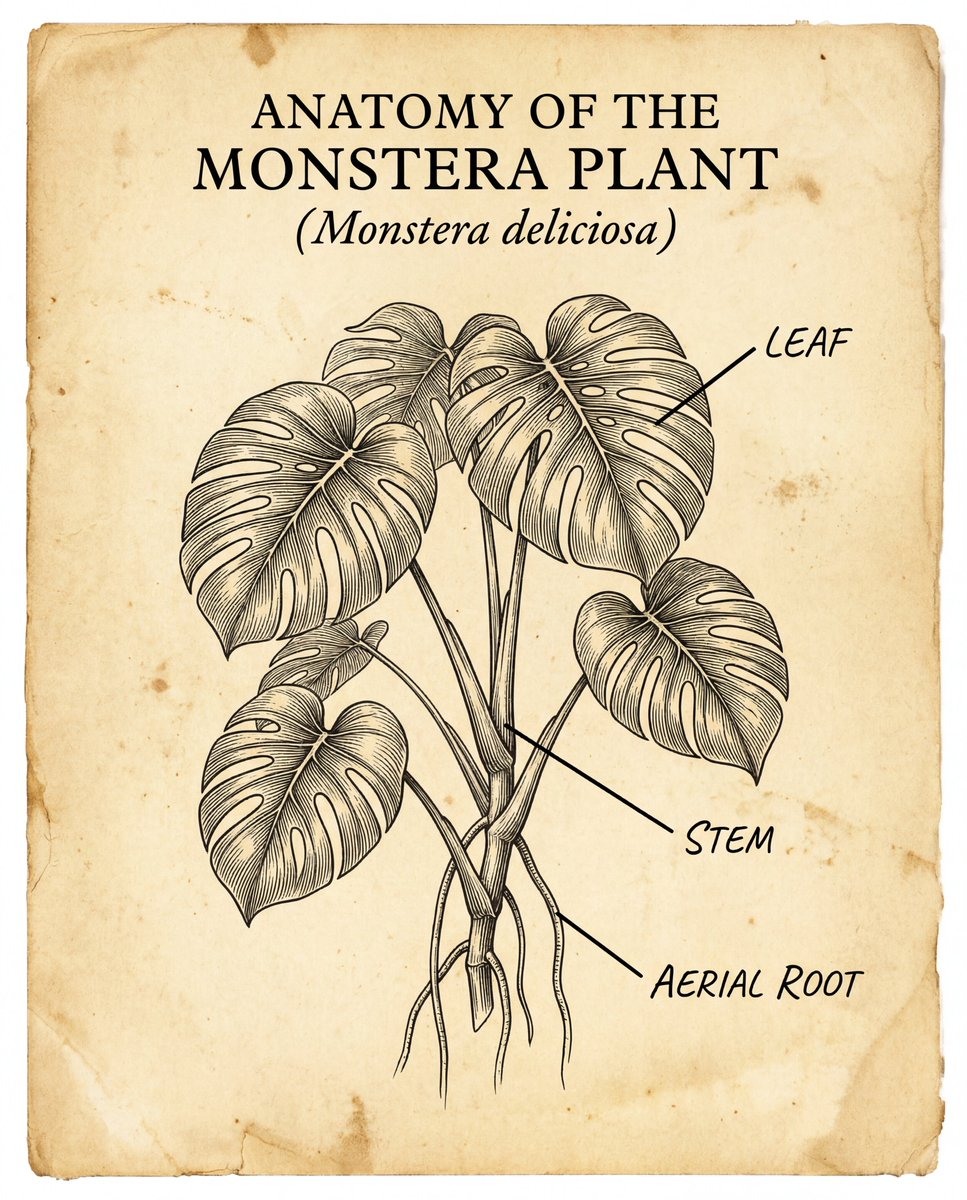

Nano Banana 2 generates native 4K images with a focus on structural accuracy. By capturing subtle details such as realistic light reflections and complex human anatomy, it keeps the whole frame visually consistent. Even difficult elements, such as precise text rendering inside images, come out clear and sharp.

Lightning-Fast Generation Speed Using the Nano Banana 2 API

Designed for efficiency, Nano Banana 2 combines high-quality output with significantly shorter rendering times. This makes for a smoother creative process and suits high-volume work such as e-commerce advertising and social media marketing, where turnaround cycles are tight and fast iteration is required.

Superior Control over Multiple Characters and Complex Scenes Using the Nano Banana 2 API

Nano Banana 2 provides stable control over multi-subject interactions and intricate backgrounds. It preserves logical spatial relationships and character consistency within a single prompt, so users can build sophisticated, multi-layered compositions without losing the image’s main narrative.

What You Can Do with Nano Banana2 Models

Discover practical use cases and workflows you can build with this model family, from content creation and automation to production-grade applications.

Cinematic-Quality 4K Creative Visuals with the Nano Banana 2 API

The Nano Banana 2 API lets creators generate native 4K images with precise light and shadow. Suited to high-end brand advertising and concept art, the API delivers structural accuracy in complex anatomical renders and crisp text integration. By maintaining high-fidelity textures across the entire frame, it provides a solid foundation for professional creative workflows and large-format digital assets.

Fast E-commerce Asset Generation Using the Nano Banana 2 API

For fast marketing cycles, the Nano Banana 2 API delivers industry-leading generation speed without compromising output quality. It is well suited to e-commerce campaigns and social media work, letting brands iterate on product-focused visuals quickly. This optimized performance shortens project turnaround, making it a valuable tool for high-volume digital storefronts that need both speed and visual quality.

Advanced Multi-Character Composition Using the Nano Banana 2 API

Nano Banana 2 excels at managing intricate spatial relationships and multi-threaded narratives within a single prompt. Using its scene-control logic, the API maintains visual coherence and character consistency in complex environments. This use case suits narrative illustration, world-building, and sophisticated marketing projects that require precise coordination of many elements within a single high-resolution scene.

Model Comparison

See how models from different providers compare on performance, pricing, and unique strengths so you can make an informed decision.

| Model | Reference image limit | Number of outputs | Resolution | Aspect ratio |
|---|---|---|---|---|
| Nano Banana 2 | 14 | 1 | 4K, 2K, 1K | 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9 |
| Nano Banana Pro | 10 | 1 | 4K, 2K, 1K | 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9 |
| Seedream 5.0 Lite | 14 | 1 to 15 | 2K to 4K+ | 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9 |
| Qwen-image | 3 | 1 to 6 | 512P to 2K | Width 512 to 2048 px; height 512 to 2048 px |
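
The limits in the comparison table can be encoded as data and checked before a request is sent. The following is a minimal sketch in Python covering only the two Nano Banana rows. The names `ModelLimits`, `LIMITS`, and `check_request` are hypothetical helpers written for this illustration and are not part of any Atlas Cloud SDK; the values are copied from the table.

```python
from dataclasses import dataclass

# Aspect ratios listed for both Nano Banana rows in the comparison table.
ASPECT_RATIOS = ("1:1", "3:2", "2:3", "3:4", "4:3", "4:5", "5:4", "9:16", "16:9", "21:9")


@dataclass(frozen=True)
class ModelLimits:
    """Hypothetical container for one row of the comparison table."""
    max_reference_images: int
    max_outputs: int
    resolutions: tuple
    aspect_ratios: tuple


# Values copied from the table; the dictionary keys are illustrative labels,
# not official model identifiers.
LIMITS = {
    "Nano Banana 2": ModelLimits(14, 1, ("1K", "2K", "4K"), ASPECT_RATIOS),
    "Nano Banana Pro": ModelLimits(10, 1, ("1K", "2K", "4K"), ASPECT_RATIOS),
}


def check_request(model, reference_images, outputs, resolution, aspect_ratio):
    """Return a list of human-readable problems; an empty list means the request fits."""
    limits = LIMITS[model]
    problems = []
    if reference_images > limits.max_reference_images:
        problems.append(f"at most {limits.max_reference_images} reference images allowed")
    if outputs > limits.max_outputs:
        problems.append(f"at most {limits.max_outputs} output(s) per request")
    if resolution not in limits.resolutions:
        problems.append(f"resolution must be one of {limits.resolutions}")
    if aspect_ratio not in limits.aspect_ratios:
        problems.append(f"aspect ratio must be one of {limits.aspect_ratios}")
    return problems


if __name__ == "__main__":
    # 12 reference images exceed the limit of 10 listed for Nano Banana Pro.
    print(check_request("Nano Banana Pro", 12, 1, "4K", "16:9"))
```

Checking on the client side like this only mirrors the published table; the endpoint remains the authority on what it accepts.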

How to Use Nano Banana2 Models on Atlas Cloud

Get started in minutes — follow these simple steps to integrate and deploy models through Atlas Cloud’s platform.

Create an Atlas Cloud Account

Sign up at atlascloud.ai and complete verification. New users receive free credits to explore the platform and test models.

Why Use Nano Banana2 Models on Atlas Cloud

Combining the advanced Nano Banana2 Models with Atlas Cloud’s GPU-accelerated platform delivers performance, scalability, and a strong developer experience.

Performance and Flexibility

Low Latency:

GPU-optimized inference for real-time reasoning.

Unified API:

Run Nano Banana2 Models, GPT, Gemini, and DeepSeek through a single integration.

Transparent Pricing:

Predictable per-token billing with serverless options.

Enterprise and Scale

Developer Experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance-ready logging.

Security and Compliance:

SOC 2 Type II, HIPAA compliance, and US data sovereignty.

Frequently Asked Questions about Nano Banana2 Models

Native 4K refers to images generated directly at high resolution rather than upscaled, supporting up to 4096×2304. We also offer a 2K tier optimized for quick previews and social media use.

Atlas Cloud provides configurable output size and aspect ratio through the console and the API, so you can match common formats such as 1:1, 16:9, 9:16, and others. (The exact options depend on the selected endpoint and model settings.)
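
To get a feel for how an aspect ratio maps to pixel dimensions, here is a rough Python sketch. It assumes the long edge is capped per tier (4096 px for 4K, which matches the 4096×2304 figure quoted above for 16:9; the 2048 px and 1024 px values for 2K and 1K are guesses). The function `target_size` and the `LONG_EDGE` table are hypothetical, and the actual output sizes are determined by the endpoint, so treat the results as estimates only.

```python
from fractions import Fraction

# Assumed long-edge caps per tier. Only the 4K value is backed by the
# 4096x2304 figure in the FAQ; the 2K and 1K values are assumptions.
LONG_EDGE = {"1K": 1024, "2K": 2048, "4K": 4096}


def target_size(aspect_ratio: str, tier: str = "4K") -> tuple:
    """Estimate (width, height) in pixels for a ratio such as '16:9'."""
    w, h = (int(part) for part in aspect_ratio.split(":"))
    long_edge = LONG_EDGE[tier]
    if w >= h:
        # Landscape or square: width takes the long edge.
        return long_edge, round(long_edge * Fraction(h, w))
    # Portrait: height takes the long edge.
    return round(long_edge * Fraction(w, h)), long_edge


if __name__ == "__main__":
    print(target_size("16:9"))        # landscape at the 4K tier
    print(target_size("9:16"))        # portrait at the 4K tier
    print(target_size("1:1", "2K"))   # square at the assumed 2K tier
```

Using `Fraction` keeps the ratio arithmetic exact, so rounding happens only once at the end.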

The Edit API uses guided diffusion for precise style transfer and structural modification. It lets developers iterate on, transform, or refine existing assets while preserving consistency, which makes it well suited to professional asset iteration and marketing design.

Because deployment matters: a unified API for text-to-image and image-to-image workflows, transparent pricing with usage tracking, and easier model swaps without rebuilding your pipeline.

Explore More Families

Seedance 2.0 Models

Seedance 2.0 (by ByteDance) is a multimodal video generation model that redefines "controllable creation," moving beyond the limitations of text or start/end frames. It supports quad-modal inputs (text, image, video, and audio) and introduces an industry-leading "Universal Reference" system. By precisely replicating the composition, camera movement, and character actions from reference assets, Seedance 2.0 addresses critical issues with character consistency and physical coherence, letting creators act as true "directors" with deep control over their output.

Happy Horse 1.0

HappyHorse-1.0 is a mysterious AI video generation model that recently claimed the #1 spot on the Artificial Analysis Video Arena leaderboard. Submitted pseudonymously without a verifiable team identity, this 15B parameter unified Transformer features a 40-layer architecture that jointly denoises text tokens, image latents, video tokens, and audio tokens in a single sequence. The model supports both text-to-video (T2V) and image-to-video (I2V) generation with native multilingual audio synthesis for Chinese, English, Japanese, Korean, German, and French—all produced in one unified forward pass without cross-attention mechanisms.

Wan2.7 Models

Launching this March, Wan2.7 is the latest powerhouse in the Qwen ecosystem, delivering a massive upgrade in visual fidelity, audio synchronization, and motion consistency over version 2.6. This all-in-one AI video generator supports advanced features like first-and-last frame control, 3x3 grid synthesis, and instruction-based video editing. Outperforming competitors like Jimeng, Wan2.7 offers superior flexibility with support for real-person image inputs, up to five video references, and 1080P high-definition outputs spanning 2 to 15 seconds, making it the premier choice for professional digital storytelling and high-end content marketing.

Veo3.1 Models

Google DeepMind’s Veo 3.1 represents a paradigm shift in AI video generation, empowering creators with director-level narrative control and cinematic-grade audio quality that seamlessly integrates with its enhanced visual realism. By bridging the gap between imaginative concepts and photorealistic execution, this advanced model offers a transformative solution for a wide range of application scenarios, from professional filmmaking and high-end advertising to immersive digital content creation.

GPT Image Models

The GPT Image Family is OpenAI's latest suite of multimodal image generation and editing models, built on the powerful GPT architecture. This family includes three tiers — GPT Image-1, GPT Image-1.5, and GPT Image-1 Mini — each available in both Text-to-Image and Image-to-Image variants. Combining GPT's world-class language understanding with DALL·E-class visual synthesis, these models deliver exceptional prompt adherence, photorealistic rendering, and creative versatility across illustration, photography, design, and visualization tasks. The series offers flexible pricing and quality tiers to match any workflow — from rapid prototyping and high-volume content production to professional-grade final deliverables. Whether you need ultra-fast iterations at minimal cost or maximum quality for brand campaigns, the GPT Image Family has a solution tailored to your needs.

Seedream5.0 Models

Seedream 5.0, developed by ByteDance’s Jimeng AI, is a high-performance AI image generation model that integrates real-time search with intelligent reasoning. Purpose-built for time-sensitive content and complex visual logic, it excels at professional infographics, architectural design, and UI assistance. By blending live web insights with creative precision, Seedream 5.0 empowers commercial branding and marketing with a seamless, logic-driven workflow that turns sophisticated data into stunning, high-fidelity visuals.

Kling3.0 Models

Kuaishou’s flagship video generation suite, Kling 3.0, features two powerhouse models—Kling 3.0 (Upgraded from Kling 2.6) and Kling 3.0 Omni (Kling O3, Upgraded from Kling O1)—both offering high-fidelity native audio integration. While Kling 3.0 excels in intelligent cinematic storytelling, multilingual lip-syncing, and precision text rendering, Kling O3 sets a new standard for professional-grade subject consistency by supporting custom subjects and voice clones derived from video or image inputs. Together, these models provide a comprehensive solution tailored for cinematic narratives, global marketing campaigns, social media content, and digital skit production.

GLM LLM Models

GLM is a cutting-edge LLM series by Z.ai (Zhipu AI) featuring GLM-5, GLM-4.7, and GLM-4.6. Engineered for complex systems and long-horizon agentic tasks, GLM-5 outperforms top-tier closed-source models in elite benchmarks like Humanity’s Last Exam and BrowseComp. While GLM-4.7 specializes in reasoning, coding, and real-world intelligent agents, the entire GLM suite is fast, smart, and reliable, making it the ultimate tool for building websites, analyzing data, and delivering instant, high-quality answers for any professional workflow.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.

Seedream4.5 Models

Seedream 4.5, developed by ByteDance’s Jimeng AI, is a versatile, high-fidelity model that unifies creative generation with precise image editing. Engineered for professional consistency and intricate text rendering, it excels at multi-subject fusion, brand identity, and high-resolution marketing assets. By bridging spatial logic with artistic control, Seedream 4.5 empowers designers with a seamless, instruction-driven workflow that transforms complex concepts into polished, commercial-grade visuals.

Vidu Models

Vidu, a joint innovation by Shengshu AI and Tsinghua University, is a high-performance video model powered by the original U-ViT architecture that blends Diffusion and Transformer technologies. It delivers long-form, highly consistent, and dynamic video content tailored for professional filmmaking, animation design, and creative advertising. By streamlining high-end visual production, Vidu empowers creators to transform complex ideas into cinematic reality with unprecedented efficiency.